Centauri Dreams

Imagining and Planning Interstellar Exploration

A New Tool for Exoplanet Detection and Characterization

It’s been apparent for a long time that far more astronomical data exist than anyone has had time to examine thoroughly. That’s a reassuring thought, given the uses to which we can put these resources. Ponder such programs as Digital Access to a Sky Century at Harvard (DASCH), which draws on a trove of over half a million glass photographic plates dating back to 1885. The First and Second Palomar Sky Surveys (POSS-1 and POSS-2) go back to 1949 and are now part of the Digitized Sky Survey, which has digitized the original photographic plates. The Zwicky Transient Facility, incidentally, uses the same 48-inch Samuel Oschin Schmidt Telescope at Palomar that produced the original DSS data.

There is, in short, plenty of archival material to work with for whatever purposes astronomers want to pursue. You may remember our lengthy discussion of the unusual star KIC 8462852 (Boyajian’s Star), in which data from DASCH were used to explore the dimming of the star over time, the source of considerable controversy (see, for example, Bradley Schaefer: Further Thoughts on the Dimming of KIC 8462852 and the numerous posts surrounding the KIC 8462852 phenomenon in these pages). Archival data give us a window by which we can explore a celestial observation through time, or even look for evidence of technosignatures close to home (see ‘Lurker’ Probes & Disappearing Stars).

But now we have an entirely new class of archival data to mine and apply to the study of exoplanets. A just-published paper discusses how previously undetectable data about stars and exoplanets can be found within the archives of radio astronomy surveys. The analysis method has the name Radio Interferometric Multiplexed Spectroscopy (RIMS), and it’s intriguing to learn that it may be able to detect an exoplanet’s interactions with its star, and even to run its analyses on large numbers of stars within the radio telescope’s field of view.

We are in the early stages of this work, with the first detections now needing to be further analyzed and subsequent observations made to confirm the method, so I don’t want to minimize the need for continuing study. But if things pan out, we may have added a new method to our toolkit for exoplanet detection.

The signature finding here is that the huge volumes of data accumulated by radio telescopes worldwide, so vital in the study of cosmology through the analysis of galaxies and black holes, can also track variable activity of numerous stars that are within the field of view of each of these observations. What the authors are unveiling here is the ability to perform a simultaneous survey across hundreds or potentially thousands of stars. Cyril Tasse, lead author of the paper in Nature Astronomy, is an astronomer at the Paris Observatory. Tasse explains the range that RIMS can deploy:

“RIMS exploits every second of observation, in hundreds of directions across the sky. What we used to do source by source, we can now do simultaneously. Without this method, it would have taken nearly 180 years of targeted observations to reach the same detection level.”

The researchers have examined 1.4 years of data collected at the European LOFAR (Low Frequency Array) radio telescope at 150 MHz. Here low frequencies from 10 to 240 MHz are probed by a huge array of small, fixed antennas, with locations spread across Europe, their data digitized and combined using a supercomputer at the University of Groningen in the Netherlands. Out of this data windfall the RIMS team has been able to generate some 200,000 spectra from stars, some of them hosting exoplanets. While a purely stellar origin remains possible for the bursts attributed to star-planet interactions, this form of analysis, say the authors, “demonstrate[s] the potential of the method for studying stellar and star–planet interactions with the Square Kilometre Array.” LOFAR can be considered a precursor to the low-frequency component of the SKA.

Here we drill down to the planetary system level, for among the violent stellar events that RIMS can track (think coronal mass ejections, for example), the researchers have traced signals that produce what we would expect to find with magnetic interactions between planet and star. Closer to home, we’ve investigated the auroral activity on Jupiter, but now we may be tracing similar phenomena on planets we have yet to detect through any other means.

Image: Artistic illustration of the magnetic interaction between a red dwarf star such as GJ 687, and its exoplanet. Credit: Danielle Futselaar/Artsource.nl.

Let’s focus for a moment on the importance of magnetic fields when it comes to making sense of stellar systems other than our own. The interior composition of planets – their internal dynamo – can be explored with a proper understanding of their magnetosphere, which also unlocks information about the parent star. That sounds highly theoretical, but on the practical plane it points toward a signal we want to acquire from an exoplanetary system in order to understand the environments present on orbiting worlds. And don’t forget how critical a magnetic field is in terms of habitability, for fragile atmospheres must be shielded from stellar winds so as to be preserved.

At the core of the new detection method is cyclotron maser instability (CMI), which is the basic process that produces the intense radio emissions we see from planets like Jupiter. CMI is an instability in a plasma, where electrons moving in a magnetic field produce coherent electromagnetic radiation. Here is a link to Juno observations of these phenomena around Jupiter.

Detecting such emissions, RIMS can point to the presence of a planet in a stellar system. Working with radio observations, we can move beyond modeling to sample actual field strengths, which is why radio emissions (not SETI!) from exoplanets have been sought for decades now. Finding a way to produce interferometric data sufficient to paint a star-planet signature is thus a priority.
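To see why a radio detection translates into a field strength, here is a minimal sketch that assumes the emission emerges at the local electron cyclotron frequency, f = eB / (2π mₑ), or roughly 2.8 MHz per gauss. That assumption, and the function names, are mine rather than anything taken from the paper.

```python
import math

E_CHARGE = 1.602176634e-19   # elementary charge in coulombs
E_MASS = 9.1093837015e-31    # electron mass in kilograms


def cyclotron_frequency_mhz(b_gauss: float) -> float:
    """Electron cyclotron frequency (MHz) for a magnetic field in gauss."""
    b_tesla = b_gauss * 1e-4
    return E_CHARGE * b_tesla / (2 * math.pi * E_MASS) / 1e6


def field_for_frequency_gauss(freq_mhz: float) -> float:
    """Field strength (gauss) whose cyclotron frequency equals freq_mhz.

    Assumes emission at the fundamental cyclotron frequency.
    """
    return 2 * math.pi * E_MASS * freq_mhz * 1e6 / E_CHARGE * 1e4


# LOFAR's band edges and the 150 MHz survey frequency mentioned above
for freq in (10, 150, 240):
    print(f"{freq} MHz -> {field_for_frequency_gauss(freq):.1f} G")
```

Under that assumption, a burst seen at 150 MHz implies a field of a few tens of gauss at the emission site, which is the sense in which a low-frequency array acts as a magnetic field probe.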

Exoplanetary aurorae would indicate the existence of magnetospheres, and that’s no small result. And we may be making such a detection around a star some 14.8 light years away, says co-author Jake Turner (Cornell University):

“Our results indicate that some of the radio bursts, most notably from the exoplanetary system GJ 687, are consistent with a close-in planet disturbing the stellar magnetic field and driving intense radio emission. Specifically, our modeling shows that these radio bursts allow us to place limits on the magnetic field of the Neptune-sized planet GJ 687 b, offering a rare indirect way to study magnetic fields on worlds beyond our Solar System.”

There are also implications for the search for life elsewhere in the cosmos. Turner adds:

“Exoplanets with and without a magnetic field form, behave and evolve very differently. Therefore, there is great need to understand whether planets possess such fields. Most importantly, magnetic fields may also be important for sustaining the habitability of exoplanets, such as is the case for Earth.”

Using low-frequency radio astronomy, then, we turn a telescope array into a magnetosphere detector. Researchers have also applied the RIMS technique to the French low frequency array NenuFAR, located at the Nançay Radio Observatory south of Paris, detecting a burst from the exoplanetary system HD 189733 that was described recently in Astronomy & Astrophysics. As with another possible burst from Tau Boötis, the team is in the midst of making follow-up observations to confirm that both signals came from a star-planet interaction. If the method is proven successful, such interactions point to a new astronomical tool.

The paper is Tasse et al., “The detection of circularly polarized radio bursts from stellar and exoplanetary systems,” Nature Astronomy 27 January 2026 (abstract). The earlier paper is Zhang et al., “A circularly polarized low-frequency radio burst from the exoplanetary system HD 189733,” Astronomy & Astrophysics Vol. 700, A140 (August 2025). Full text.

Holography: Shaping a Diffractive Sail

One result of the Breakthrough Starshot effort has been an intense examination of sail stability under a laser beam. The issue is critical, for a small sail under a powerful beam for only a few minutes must not only survive the acceleration but follow a precise trajectory. As Greg Matloff explains in the essay below, holography used in conjunction with a diffractive sail (one that diffracts light waves through optical structures like microscopic gratings or metamaterials) can allow a flat sail to operate like a curved or even round one. I’ll soon have more on this in connection with the numerous sail papers that Starshot has spawned. For today, Greg explains how what had begun as an attempt to harness holography for messaging on a deep space probe can also become a key to flight operations. The Alpha CubeSat now in orbit is an early test of these concepts. The author of The Starflight Handbook among many other books (volumes whose pages have often been graced by the artwork of the gifted C Bangs), Greg has been inspiring this writer since 1989.

by Greg Matloff

The study of diffractive photon sails likely begins in 1999 during the first year of my tenure as a NASA Summer Faculty Fellow. I was attending an IAA symposium in Aosta, Italy where my wife C Bangs curated a concurrent art show. The title of the show, which included work by about thirty artists, was “Messages from Earth”. At the show’s opening, C was approached by visionary physicist Robert Forward who informed her that the best technology to affix a message plaque to an interstellar photon sail was holography. A few weeks later, back in Huntsville, AL, Bob suggested to NASA manager Les Johnson that he fund her to create a prototype holographic interstellar message plaque.

It is likely that Bob encouraged this art project as an engineering demonstration. He was aware that photon sails do not last long in Low Earth Orbit because the optimum sail aspect angle to increase orbital energy is also the worst angle to increase atmospheric drag. He had experimented with the concept of a two-sail photon sail and correctly assumed that from a dynamic point of view such a sail would fail. A thin-film hologram of an appropriate optical device could redirect solar radiation pressure accurately without increasing drag.

Our efforts resulted in the creation of a prototype holographic interstellar message plaque that is currently at NASA Marshall Space Flight Center. It was displayed to NASA staff during the summer of 2001 and has been described in a NASA report and elsewhere [1].

I thought little about holography until 2016, when I was asked by Harvard’s Avi Loeb to participate in Breakthrough Starshot as a member of the Scientific Advisory Committee. This technology development project examined the possibility of inserting nano-spacecraft into the beam of a high energy laser array located on a terrestrial mountain top. The highly reflective photon sail affixed to the tiny payload could in theory be accelerated to 20% of the speed of light.

One of the major issues was sail stability during the 5-6 minutes in a laser beam moving with Earth’s rotation. Work by Greg and Jim Benford, Avi Loeb and Zac Manchester (Carnegie Mellon University) indicated that a curved sail was necessary to compensate for beam motion. But a curved thin sail would collapse immediately during the enormous acceleration load.
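To put a rough number on that acceleration load, here is a back-of-the-envelope sketch using only the figures quoted above: a final speed of 20% of the speed of light reached in 5 to 6 minutes. It assumes constant acceleration and ignores relativistic corrections, which are only a few percent at this speed. The function name is illustrative.

```python
C_LIGHT = 299_792_458.0   # speed of light in m/s
G0 = 9.80665              # standard gravity in m/s^2


def mean_acceleration(final_fraction_of_c: float, duration_s: float) -> float:
    """Average acceleration (m/s^2), assuming constant non-relativistic thrust."""
    return final_fraction_of_c * C_LIGHT / duration_s


for minutes in (5, 6):
    a = mean_acceleration(0.20, minutes * 60)
    print(f"{minutes} min in beam: {a:.3g} m/s^2, about {a / G0:,.0f} g")
```

Either way the result is on the order of tens of thousands of g, which is why the shape of a thin-film sail cannot be taken for granted.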

Some researchers realized that a diffractive sail that could simulate a curved surface might be necessary. Grover Swartzlander of Rochester Institute of Technology published on the topic [2].

Martina Mrongovius, then Creative Director of the NYC HoloCenter, suggested to C that one approach to incorporating an image of an appropriate diffractive optical device in the physically flat sail was holography; this was later confirmed by Swartzlander. Avi Loeb arranged for C to attend the 2017 Breakthrough meeting and demonstrate our version of the prototype holographic message plaque.

A Breakthrough Advisor present at the demonstration was Cornell professor and former NASA chief technologist Mason Peck. Mason invited C to create, with Martina’s aid, five holograms to be affixed to Cornell’s Alpha CubeSat, a student-coordinated project to serve as a test bed for several Starshot technologies.

Image: Fish Hologram (Sculpture by C Bangs, exposure by Martina Mrongovius). A holographic plaque could carry an interstellar message. But could holography also be used to simulate the optimal sail surface on a flat sail?

During the next eight years, about 100 Cornell aerospace engineering students participated in the project. Doctoral student Joshua Umansky-Castro, who has now earned his Ph.D., was the major coordinator.

In 2023, there was an exhibition aboard the NYC museum ship Intrepid (a World War II era aircraft carrier) presenting the scientific and artistic work of the Alpha CubeSat team. Alpha was launched in September of 2025 as part of a ferry mission to the ISS. The cubesat was deployed in Dec. 2025.

All goals of the effort have been successfully achieved. The tiny chipsats continue to communicate with Earth. The demonstration sail deployed as planned from the CubeSat. A post-deployment glint photographed from the ISS indicates that the holograms perform in space as expected, increasing the Technological Readiness of in-space holograms and diffraction sailing.

In May 2026 a workshop on Lagrange Sunshades to alleviate global warming is scheduled to take place in Nottingham. The best sunshade concepts suggested to date are reflective sails. Two issues with reflective sail sunshades are apparent. One is the meta-stability of L1, which requires active control to maintain the sunshade on station. A related issue is that the solar radiation momentum flux moves the effective Lagrange point farther from the Earth, requiring a larger sunshade. At the Nottingham Workshop, C and I will collaborate with Grover Swartzlander to demonstrate how a holographic/diffractive sunshade surface alleviates these issues.

References

1. G. L. Matloff, G. Vulpetti, C. Bangs and R. Haggerty, “The Interstellar Probe (ISP): Pre-Perihelion Trajectories and Application of Holography”, NASA/CR-2002-211730, NASA Marshall Space Flight Center, Huntsville, AL (June, 2002). Also see G. L. Matloff, Deep-Space Probes: To the Outer Solar System and Beyond, 2nd ed., Springer/Praxis, Chichester, UK (2005).

2. G. A. Swartzlander, Jr., “Radiation Pressure on a Diffractive Sailcraft”, arXiv:1703.02940.

Shelter from the Storm

The approaching storm will almost certainly cause power outages that will make it impossible to post here. If this occurs, you can be sure that I’ll get any incoming messages posted as soon as I can get back online. Please continue to post comments as usual and let’s cross our fingers that the storm is less dangerous than it appears.

Cellular Cosmic Isolation: When the Universe Seeds Life but Civilizations Stay Silent

So many answers to the Fermi question have been offered that we have a veritable bestiary of solutions, each trying to explain why we have yet to encounter extraterrestrials. I like Leo Szilard’s answer the best: “They are among us, and we call them Hungarians.” That one has a pedigree that I’ll explore in a future post (and remember that Szilard was himself Hungarian). But given our paucity of data, what can we make of Fermi’s question in the light of the latest exoplanet findings? Eduardo Carmona today explores with admirable clarity a low-drama but plausible scenario. Eduardo teaches film and digital media at Loyola Marymount University and California State University Dominguez Hills. His work explores the intersection of scientific concepts and cinematic storytelling. This essay is adapted from a longer treatment that will form the conceptual basis for a science fiction film currently in development. Contact Information: Email: eduardo.carmona@lmu.edu

by Eduardo Carmona MFA

In September 2023, NASA’s OSIRIS-REx spacecraft delivered a precious cargo from asteroid Bennu: pristine samples containing ribose, glucose, nucleobases, and amino acids—the molecular Lego blocks of life itself. Just months later, in early 2024, the Breakthrough Listen initiative reported null results from their most comprehensive search yet: 97 nearby galaxies across 1-11 GHz, with no compelling technosignatures detected.

We live in a cosmos that generously distributes life’s ingredients while maintaining an eerie radio silence. This is the modern Fermi Paradox in stark relief: building blocks everywhere, conversations nowhere.

What if both observations are telling us the same story—just from different chapters?

The Seeding Paradox

The discovery of complex organic molecules on Bennu—a pristine carbonaceous asteroid that has barely changed in 4.5 billion years—confirms what astrobiologists have long suspected: the universe is in the business of making life’s components. Ribose, the sugar backbone of RNA. Nucleobases that encode genetic information. Amino acids that fold into proteins.

These aren’t laboratory curiosities. They’re delivered at scale across the cosmos, frozen in time capsules of rock and ice, raining down on every rocky world in every stellar system. The implications are profound: prebiotic chemistry isn’t a lottery. It’s standard operating procedure for the universe.

This abundance makes the silence more puzzling. If life’s ingredients are everywhere, why isn’t life—or at least communicative life—equally ubiquitous? The Drake Equation suggests we should be drowning in signals. Yet decade after decade of increasingly sophisticated SETI searches return the same answer: nothing.

The traditional responses—they’re too far away, they use technology we can’t detect, they’re deliberately hiding—feel increasingly like special pleading. What if the answer is simpler, more systemic, and reconcilable with both observations?

Cellular Cosmic Isolation: A Synthesis

I propose what I call Cellular Cosmic Isolation (CCI)—not a single explanation but a framework that synthesizes multiple constraints into a coherent picture. Think of it as a series of filters, each one narrowing the funnel from chemical abundance to electromagnetic chatter.

The framework rests on four interlocking observations:

1. Prebiotic abundance: Chemistry is generous. Small bodies deliver life’s building blocks widely and consistently. Biospheres may be common.

2. Geological bottlenecks: Complex, communicative life requires rare conditions—specifically, worlds with coexisting continents and oceans, sustained by long-duration plate tectonics (≥500 million years). Earth’s particular geological engine may be uncommon.

3. Fleeting windows: Technological civilizations may have extraordinarily brief outward-detectable phases—measured in decades, not millennia—before transitioning to post-biological forms, self-destruction, or simply turning their attention inward.

4. Communication constraints: Physical limits (finite speed of light, signal dispersion, beaming requirements) plus coordination problems suppress even the detection of civilizations that do exist.

The result? A universe where the chemistry of life is ubiquitous, simple biospheres may be common, but detectable technospheres remain vanishingly rare and non-overlapping in spacetime. We’re not alone because life is impossible. We’re alone because the path from ribose to radio telescopes has far more gates than we imagined.

The Geological Filter: Earth’s Unlikely Engine

This is perhaps CCI’s most counterintuitive claim, yet it’s grounded in recent research. In a 2024 paper in Scientific Reports, planetary scientists Robert Stern and Taras Gerya argue that Earth’s specific combination—plate tectonics that has operated for billions of years, creating and recycling continents alongside persistent oceans—may be geologically unusual.

Why does this matter for intelligence? Because continents enable:

• Terrestrial ecosystems with high energy density and environmental diversity

• Land-ocean boundaries that create evolutionary pressure for complex sensing and locomotion

• Fire (impossible underwater), which enables metallurgy and advanced tool use

• Seasonal and altitudinal variation that rewards cognitive flexibility

Venus has no plate tectonics. Mars lost its early tectonics. Europa and Enceladus have subsurface oceans but no continents. Earth’s geological engine—stable enough to persist for billions of years, dynamic enough to continuously create new land and recycle old—may be a rare configuration.

Mathematically, this adds two probability terms to the Drake Equation: foc (the fraction of habitable worlds with coexisting oceans and continents) and fpt (the fraction with sustained plate tectonics). If each is, say, 0.1-0.2, their joint probability becomes 0.01-0.04—already a significant filter.
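The arithmetic behind that range is simple enough to spell out. The sketch below multiplies the endpoints of the two example ranges, which quietly assumes the two fractions are independent of one another. The variable and function names are illustrative.

```python
def joint_range(a: tuple[float, float], b: tuple[float, float]) -> tuple[float, float]:
    """Product of two independent probability ranges given as (low, high)."""
    return (a[0] * b[0], a[1] * b[1])


f_oc = (0.1, 0.2)   # fraction with coexisting oceans and continents (example values from the text)
f_pt = (0.1, 0.2)   # fraction with sustained plate tectonics (example values from the text)

low, high = joint_range(f_oc, f_pt)
print(f"joint fraction: {low:.2f} to {high:.2f}")
```

If the two conditions are correlated, as they may well be given that plate tectonics helps build continents, the joint fraction would be larger than this simple product suggests.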

The Temporal Filter: Civilization’s Brief Bloom

But the most devastating filter may be temporal. Traditional SETI assumes civilizations remain detectably technological for thousands or millions of years. CCI suggests the opposite: the phase during which a civilization broadcasts electromagnetic signals into space may be extraordinarily brief—perhaps only decades to centuries.

Consider the human trajectory. We’ve been radio-loud for roughly a century. But already:

• We’re transitioning from broadcast to narrowcast (cable, fiber, satellites)

• Our strongest signals are becoming more controlled and directional

• We’re developing AI systems that may fundamentally transform human civilization within this century

What comes after? Post-biological intelligence operating at computational speeds? A civilization that turns inward, exploring virtual realities? Self-annihilation? Deliberate silence to avoid dangerous contact?

We don’t know. But if the detectable technological phase (call it Lext) averages 50-200 years rather than 10,000-1,000,000 years, the probability of temporal overlap collapses. In a galaxy 13 billion years old, two civilizations with century-long detection windows need to be synchronized to within a cosmic eyeblink.
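One way to make the collapse concrete is a toy calculation, under assumptions of my own: two civilizations each switch on at a uniformly random moment in the galaxy's 13-billion-year history, each stays detectable for the same span L, and light-travel time is ignored. Their windows then overlap with probability 1 − (1 − L/T)², which is about 2L/T when L is tiny compared with T.

```python
T_GAL_YEARS = 13e9   # age of the galaxy used in the text


def overlap_probability(window_years: float, span_years: float = T_GAL_YEARS) -> float:
    """Chance that two equal-length windows with uniform random start times overlap.

    Equals 1 - (1 - x)**2 with x = window / span, written as x * (2 - x)
    so that very small x does not lose floating-point precision.
    """
    x = window_years / span_years
    return x * (2.0 - x)


for l_ext in (50, 200, 10_000, 1_000_000):
    print(f"L_ext = {l_ext:>9,} yr -> overlap probability {overlap_probability(l_ext):.2e}")
```

In this toy model, moving from million-year to century-long windows cuts the pairwise overlap probability by about four orders of magnitude.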

This isn’t speculation—it’s extrapolation from our own accelerating technological trajectory. And acceleration may be a universal property of technological intelligence.

The Mathematics of Solitude

The traditional Drake Equation multiplies probabilities: star formation rate × fraction with planets × habitable planets per system × fraction developing life × fraction developing intelligence × fraction developing communication × longevity of civilization.

CCI expands this with additional constraints:

Ndetectable = R* × Tgal × [biological/technological terms] × [foc × fpt] × [Lext / Tgal] × C(I)

Where C(I) captures propagation physics—distance, dispersion, scattering, beaming geometry. Each term is a probability distribution, not a point estimate.
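As a sketch of what treating each term as a distribution looks like in practice, the following Monte Carlo draws every factor from a range and multiplies them in the form of the expression above. Only the ranges for foc, fpt and Lext come from this essay. The ranges for R*, the bracketed biological/technological terms and C(I) are placeholders I have invented purely to make the example run, so the printed numbers carry no scientific weight.

```python
import math
import random


def log_uniform(rng: random.Random, low: float, high: float) -> float:
    """Draw a value whose logarithm is uniform between log(low) and log(high)."""
    return 10 ** rng.uniform(math.log10(low), math.log10(high))


def sample_n_detectable(rng: random.Random, t_gal: float = 13e9) -> float:
    """One draw of N_detectable; ranges marked PLACEHOLDER are invented."""
    r_star = log_uniform(rng, 1.0, 10.0)       # PLACEHOLDER: stars formed per year
    bio_tech = log_uniform(rng, 1e-6, 1e-1)    # PLACEHOLDER: bracketed bio/tech terms
    f_oc = rng.uniform(0.1, 0.2)               # example range from the essay
    f_pt = rng.uniform(0.1, 0.2)               # example range from the essay
    l_ext = log_uniform(rng, 50.0, 200.0)      # detectable phase in years, from the essay
    c_i = log_uniform(rng, 1e-3, 1.0)          # PLACEHOLDER: propagation factor C(I)
    return r_star * t_gal * bio_tech * (f_oc * f_pt) * (l_ext / t_gal) * c_i


def summarize(n_samples: int = 20_000, seed: int = 0) -> tuple[float, float]:
    """Return the median draw and the fraction of draws below one civilization."""
    rng = random.Random(seed)
    draws = sorted(sample_n_detectable(rng) for _ in range(n_samples))
    median = draws[n_samples // 2]
    frac_below_one = sum(d < 1.0 for d in draws) / n_samples
    return median, frac_below_one


median, frac_below_one = summarize()
print(f"median N_detectable: {median:.2e}")
print(f"fraction of draws with N_detectable < 1: {frac_below_one:.2%}")
```

The point of the exercise is the shape of the output rather than its values: a spread of outcomes replaces a single number, and the share of draws below one is the quantity a Bayesian treatment would report.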

In 2018, Oxford researchers Anders Sandberg, Eric Drexler, and Toby Ord performed a rigorous Bayesian analysis of Drake’s Equation using probability distributions for each parameter. Their conclusion? When uncertainties are properly handled, the probability that we are alone in the observable universe is substantial, not because life is impossible, but because the error bars are enormous.

CCI takes this Bayesian framework and adds the geological and temporal constraints. The result: a posterior probability distribution that is entirely consistent with both abundant prebiotic chemistry and persistent SETI nulls. No paradox required.

What We Should See (And Why We Don’t)

CCI makes testable predictions. If the framework is correct:

1. Biosignatures before technosignatures

Upcoming missions like the Habitable Worlds Observatory should detect atmospheric biosignatures (oxygen-methane disequilibria, possible vegetation edges) before detecting technosignatures. Simple biospheres should be discoverable; technospheres should remain elusive.

2. Continued SETI nulls

Radio and optical SETI campaigns will continue to find nothing—not because we’re searching wrong, but because the detectable population is genuinely sparse and temporally fleeting.

3. Technosignature detection requires extreme investment

Detection of artificial spectral edges (like photovoltaic arrays reflecting at silicon’s UV-visible cutoff) or Dyson-sphere waste heat requires hundreds of hours of observation time even for nearby stars. Their absence at practical survey depths is predicted, not puzzling.

Importantly, CCI is falsifiable. A single unambiguous, repeatable interstellar signal would invalidate the short-Lext assumption. Multiple detections of artificial spectral features would refute the geological filter. The framework lives or dies by observation.

The Cosmos as Organism

There’s an almost biological elegance to this picture. The universe manufactures prebiotic molecules in stellar nurseries and delivers them via comets and asteroids—a kind of cosmic panspermia that doesn’t require directed intelligence, just chemistry and gravity. Call it the seeding phase.

Some of those seeds land on worlds with the right geological configuration—the awakening phase—where continents and oceans coexist long enough for complex cognition to emerge. This is rarer.

A tiny fraction of those awakenings reaches technological sophistication—the communicative phase—but this phase is fleeting, measured in decades to centuries before transformation or silence. This is rarest.

And even then, physical constraints—distance, timing, beaming, the sheer improbability of coordination—suppress detection. The isolation phase.

The cosmos isn’t hostile to intelligence. It’s just structured in a way that makes electromagnetic conversation between civilizations vanishingly unlikely—not impossible, just so improbable that null results after decades of searching are exactly what we’d expect.

Each civilization, then, is like a cell in a vast organism: seeded with the same chemical building blocks, developing according to local conditions, briefly active, then transforming or falling silent before contact with other cells occurs. Cellular Cosmic Isolation.

What This Means for Us

If CCI is correct, we should recalibrate our expectations without abandoning hope. SETI is not futile—it’s hunting for an extraordinarily rare phenomenon. Every null result tightens our probabilistic constraints and guides future searches. But we should also prepare for the possibility that we are, if not alone, then at least effectively alone during our detectable window.

This shifts the weight of responsibility. If technological civilizations are rare and fleeting, then ours carries unique value—not as a recipient of cosmic wisdom from older civilizations, but as a brief, precious experiment in consciousness. The burden falls on us to use our detectable phase wisely: to either extend it, transform it into something sustainable, or at least ensure we don’t waste it.

The universe seeds life generously. It’s indifferent to whether those seeds grow into forests or fade into silence. CCI suggests that the path from chemistry to conversation is longer, stranger, and more filtered than we imagined.

But the building blocks are everywhere. The recipe is universal. And somewhere, in the vast probabilistic landscape of possibility, other cells are awakening. We just may never hear them call out before they, like us, transform into something we wouldn’t recognize as a civilization at all.

That is not a paradox. That is simply the way the cosmos works.

Further Reading

Prebiotic Chemistry:

Furukawa, Y., et al. (2025). “Detection of sugars and nucleobases in asteroid Ryugu samples.” Nature Geoscience. NASA’s OSIRIS-REx mission (2023) also reported similar findings from Bennu.

Bayesian Drake Analysis:

Sandberg, A., Drexler, E., & Ord, T. (2018). “Dissolving the Fermi Paradox.” arXiv:1806.02404. Oxford Future of Humanity Institute.

Geological Filters:

Stern, R., & Gerya, T. (2024). “Plate tectonics and the evolution of continental crust: A rare Earth perspective.” Scientific Reports, 14.

SETI Null Results:

Choza, C., et al. (2024). “A 1-11 GHz Search for Radio Technosignatures from the Galactic Center.” Astronomical Journal. Breakthrough Listen campaign results.

Barrett, J., et al. (2025). “An Exoplanet Transit Search for Radio Technosignatures.” Publications of the Astronomical Society of Australia.

Technosignature Detection:

Lingam, M., & Loeb, A. (2017). “Natural and Artificial Spectral Edges in Exoplanets.” Monthly Notices of the Royal Astronomical Society Letters, 470(1), L82-L86.

Kopparapu, R., et al. (2024). “Detectability of Solar Panels as a Technosignature.” Astrophysical Journal.

Wright, J., et al. (2022). “The Case for Technosignatures: Why They May Be Abundant, Long-lived, and Unambiguous.” The Astrophysical Journal Letters 927(2), L30.

Technology Acceleration:

Garrett, M. (2025). “The longevity of radio-emitting civilizations and implications for SETI.” Journal of the British Interplanetary Society (forthcoming). See also earlier work on technological singularities and post-biological transitions.

Pandora: Exoplanets at Multiple Wavelengths

Sometimes we forget how overloaded our observatories are, both in space and on the ground. Why not, for example, use the James Webb Space Telescope to dig even further into TRAPPIST-1’s seven planets, or examine that most tantalizing Earth-mass planet around Proxima Centauri? Myriad targets suggest themselves for an instrument like this. The problem is that priceless assets like JWST not only have other observational goals, but more tellingly, any space telescope is overbooked by scientists with approved observing programs.

Add to this the problem of potentially misleading noise in our data. Thus the significance of Pandora, lofted into orbit via a SpaceX Falcon 9 on January 11, and now successful in returning robust signals to mission controllers. One way to take the heat off overburdened instruments is to create much smaller, highly specialized spacecraft that can serve as valuable adjuncts. With Pandora we have a platform that will monitor a host star in visible light while also collecting data in the near infrared from exoplanets in orbit around it.

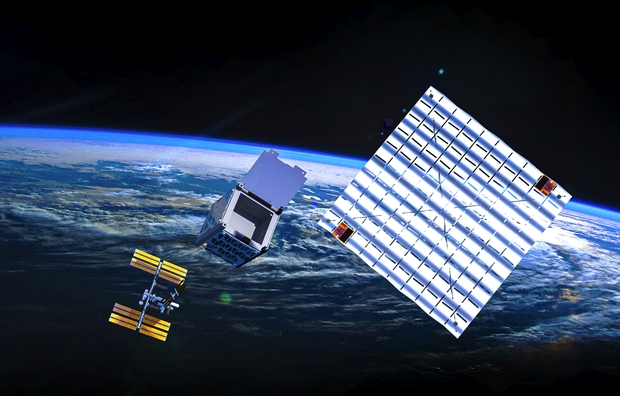

Image: A SpaceX Falcon 9 rocket carrying NASA’s Pandora small satellite, the Star-Planet Activity Research CubeSat (SPARCS), and Black Hole Coded Aperture Telescope (BlackCAT) CubeSat lifts off from Space Launch Complex 4 East at Vandenberg Space Force Base in California on Sunday, Jan. 11, 2026. Pandora will provide an in-depth study of at least 20 known planets orbiting distant stars to determine the composition of their atmospheres — especially the presence of hazes, clouds, and water vapor. Credit: SpaceX.

We can use transmission spectroscopy to study an exoplanet’s atmosphere, provided it transits the host star. In that case, the data taken when the planet transits the stellar disk can be compared to data when the planet is out of view, so that chemicals in the atmosphere become apparent. This method works and has been used to great effect with a number of transiting hot Jupiters. But contamination of the result caused by the star itself remains a problem as we widen our observations to ever smaller worlds.
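The in-transit versus out-of-transit comparison can be sketched in a few lines. The flux values below are invented for illustration (arbitrary units, keyed by wavelength in microns), with the 1.4 micron bin made slightly deeper to mimic absorption by water vapor. The function names are my own.

```python
def transit_depth(flux_in: float, flux_out: float) -> float:
    """Fractional dimming of the star while the planet is in transit."""
    return 1.0 - flux_in / flux_out


def transmission_spectrum(in_transit: dict[float, float],
                          out_of_transit: dict[float, float]) -> dict[float, float]:
    """Transit depth in each wavelength bin."""
    return {wl: transit_depth(in_transit[wl], out_of_transit[wl])
            for wl in out_of_transit}


# Made-up fluxes, not real measurements
out_of_transit = {1.0: 1000.0, 1.4: 800.0, 1.6: 700.0}
in_transit = {1.0: 990.0, 1.4: 791.2, 1.6: 693.0}

spectrum = transmission_spectrum(in_transit, out_of_transit)
for wavelength, depth in spectrum.items():
    print(f"{wavelength:.1f} micron: depth {depth * 1e6:.0f} ppm")
```

A bin where the planet looks larger, meaning the transit is deeper, is one where its atmosphere is more opaque. The differences involved are small, which is why anything the star adds to the signal matters so much.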

Dániel Apai (University of Arizona) and colleagues have been digging into this problem for a number of years now. Apai is co-investigator on the Pandora mission. He refers to “the transit light source effect,” one on which he has been working since 2018. Apai put it this way in an article in the Tucson Sentinel:

“We built Pandora to shatter a barrier – to understand and remove a source of noise in the data – that limits our ability to study small exoplanets in detail and search for life on them.”

The multiwavelength aspect of Pandora is crucial for its mission. The goal is to separate exoplanet signatures from stellar activity that can mimic or even suppress our readings on compounds within the planetary atmosphere. Pandora will examine a minimum of 20 already identified exoplanets and their host stars (some of these were TESS discoveries). Each target system will be observed 10 times for 24 hours at a time. Starspots and other stellar activity can then be subtracted from the near-infrared readings on clouds, hazes and other atmospheric components.
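To see why unocculted starspots can distort a planetary spectrum, here is a sketch under a deliberately simple assumption: the planet crosses only clean photosphere while a fraction of the rest of the disk is covered by cooler spots. In that case the measured depth is the true depth divided by the relative brightness of the spotted disk. The spot coverage, the flux ratios and the function name `contamination_factor` are all illustrative assumptions, not values or methods taken from the Pandora papers.

```python
def contamination_factor(spot_fraction, spot_to_phot_flux_ratio):
    """Factor by which unocculted spots inflate the observed transit depth.

    Assumes the transit chord is spot-free, so the planet blocks a
    fraction D of photospheric light while the disk-averaged flux is
    (1 - f) * F_phot + f * F_spot.
    """
    f = spot_fraction
    return 1.0 / (1.0 - f * (1.0 - spot_to_phot_flux_ratio))

true_depth = 0.010   # hypothetical 1% transit
spot_fraction = 0.05  # hypothetical 5% spot coverage

# Spots contrast more strongly with the photosphere in the visible
# than in the near-infrared (assumed ratios, for illustration only).
eps_visible = contamination_factor(spot_fraction, 0.5)
eps_nir = contamination_factor(spot_fraction, 0.8)

print(true_depth * eps_visible, true_depth * eps_nir)
```

Because the inflation is larger in the visible than in the near-infrared, the star alone imprints a slope on the spectrum, which is why observing both channels at once helps to pull the stellar and planetary contributions apart.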

Image: The Pandora observatory shown with the solar array deployed. Pandora is designed to be launched as a ride-share attached to an ESPA Grande ring. Very little customization was carried out on the major hardware components of the mission such as the telescope and spacecraft bus. This enabled the mission to minimize non-recurring engineering costs. Credit: Barclay et al.

Pandora’s telescope is a 45-centimeter aluminum Cassegrain instrument with two detector assemblies for the visible and near-infrared channels, the latter of which was originally developed for JWST. Its observations will serve as a valuable resource against which to examine JWST data, making it possible to distinguish a signal that may be from the upper layers of a star from the signature of gases in the planet’s atmosphere. The long stare will make it possible to accumulate over 200 hours of data on each of the mission’s targets. Let me quote a paper on the mission, one written as an overview developed for the 2025 IEEE Aerospace Conference:

Pandora is designed to address stellar contamination by collecting long-duration observations, with simultaneous visible and short-wave infrared wavelengths, of exoplanets and their host stars. These data will help us understand how contaminating stellar spectral signals affect our interpretation of the chemical compositions of planetary atmospheres. Over its one-year prime mission, Pandora will observe more than 200 transits from at least 20 exoplanets that range in size from Earth-size to Jupiter-size, and provide a legacy dataset of the first long-baseline visible photometry and near-infrared (NIR) spectroscopy catalog of exoplanets and their host stars.

A part of NASA’s Astrophysics Pioneers Program, Pandora comes in at under $20 million. It also has taken advantage of the rideshare concept, being launched beside two other spacecraft. The Star-Planet Activity Research CubeSat (SPARCS) is designed to study stellar flares and UV activity that can affect atmospheres and habitable conditions on target worlds. The topic is of high interest given our growing ability to analyze exoplanets around small M-dwarf stars, whose habitable zones expose them to high levels of UV. BlackCAT is an X-ray telescope designed to delve into gamma-ray bursts and other explosions of cosmic proportion from the earliest days of the cosmos.

Pandora will now go through systems checks by its primary builder, Blue Canyon Technologies, before control transitions to the University of Arizona’s Multi-Mission Operation Center in Tucson. The overview paper summarizes its place in the constellation of space observatories:

…a number of JWST observing programs aimed at detecting and characterizing atmospheres on Earthlike worlds are finding that stellar spectral contamination is plaguing their results. Typical transmission spectroscopic observations for exoplanets from large missions like JWST focus on collecting data for one or a small number of transits for a given target, with short observing durations before and after the transit event. In contrast to large flagship missions, SmallSat platform enable long-duration measurements for a given target. Pandora can thus collect an abundance of out-of-transit data that will help characterize the host star and directly address the problem of stellar contamination. The Pandora Science Team will select 20 primary science exoplanet host stars that span a range of stellar spectral types and planet sizes, and will collect a minimum of 10 transits per target, with each observation lasting about 24 hours. This results in 200 days of science observations required to meet mission requirements. With a one-year primary mission lifetime, this leaves a significant fraction of the year of science operations that can be used for spacecraft monitoring and additional science.
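The observing budget described in that passage is easy to check. The sketch below simply multiplies out the figures quoted above; treating the one-year prime mission as 365 days is my assumption.

```python
TARGETS = 20              # primary science host stars
VISITS_PER_TARGET = 10    # minimum transits observed per target
HOURS_PER_VISIT = 24      # approximate length of each observation
PRIME_MISSION_DAYS = 365  # assumption: "one-year" taken as 365 days

hours_per_target = VISITS_PER_TARGET * HOURS_PER_VISIT
total_hours = TARGETS * hours_per_target
science_days = total_hours / 24
remaining_days = PRIME_MISSION_DAYS - science_days

print(hours_per_target, science_days, remaining_days)
```

That works out to 240 hours per target and 200 days of required science observations, leaving roughly 165 days of the year for spacecraft monitoring and additional science.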

The paper is Barclay et al., “The Pandora SmallSat: A Low-Cost, High Impact Mission to Study Exoplanets and Their Host Stars,” prepared for the 2025 IEEE Aerospace Conference (preprint).

Explaining Cloud-9: A Celestial Object Like No Other

Some three years ago, the Five-Hundred Meter Aperture Spherical Telescope (FAST) in Guizhou, China discovered a gas agglomeration that was later dubbed Cloud-9. It’s a cute name, though unintentionally so, as this particular cloud is simply the ninth thus far identified near the spiral galaxy Messier 94 (M94). And while gas clouds don’t particularly call attention to themselves, this one is a bit of a stunner, as later research is now showing. It’s thought to be a gas-rich though starless cloud of dark matter, a holdover from early galaxy formation.

Scientists are referring to Cloud-9 as a new type of astronomical object. FAST’s detection at radio wavelengths has been confirmed by the Green Bank Telescope and the Very Large Array in the United States. The cloud has now been studied by the Hubble telescope’s Advanced Camera for Surveys, which revealed its complete lack of stars. That makes this an unusual object indeed.

Alejandro Benitez-Llambay (Milano-Bicocca University, Milan) is principal investigator of the Hubble work and a co-author of the paper just published in The Astrophysical Journal Letters. The results were presented at the ongoing meeting of the American Astronomical Society in Phoenix. Says Benitez-Llambay:

“This is a tale of a failed galaxy. In science, we usually learn more from the failures than from the successes. In this case, seeing no stars is what proves the theory right. It tells us that we have found in the local Universe a primordial building block of a galaxy that hasn’t formed.”

Here there’s a bit of a parallel with our recent discoveries of interstellar objects moving through our Solar System. In both cases, we are discovering a new type of object, and in both cases we are bringing equipment online that will, in relatively short order, almost certainly find more. We get Cloud-9 through the combination of radio detection via FAST and analysis by the Hubble space telescope, which was able to demonstrate that the object does lack stars.

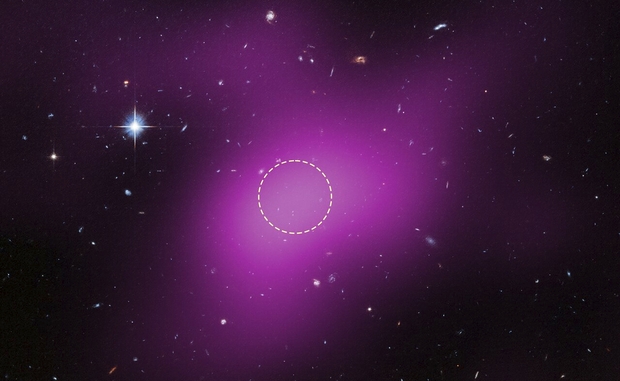

Image: This image shows the location of Cloud-9, which is 14 million light-years from Earth. The diffuse magenta is radio data from the ground-based Very Large Array (VLA) showing the presence of the cloud. The dashed circle marks the peak of radio emission, which is where researchers focused their search for stars. Follow-up observations by the Hubble Space Telescope’s Advanced Camera for Surveys found no stars within the cloud. The few objects that appear within its boundaries are background galaxies. Before the Hubble observations, scientists could argue that Cloud-9 is a faint dwarf galaxy whose stars could not be seen with ground-based telescopes due to the lack of sensitivity. Hubble’s Advanced Camera for Surveys shows that, in reality, the failed galaxy contains no stars. Credit: Science: NASA, ESA, VLA, Gagandeep Anand (STScI), Alejandro Benitez-Llambay (University of Milano-Bicocca); Image Processing: Joseph DePasquale (STScI).

We can refer to Cloud-9 as a Reionization-Limited H I Cloud, or RELHIC (that one ranks rather high on my acronym cleverness scale). H I is neutral atomic hydrogen, the most abundant form of matter in the universe. The paper formally defines RELHIC as “a starless dark matter halo filled with hydrostatic gas in thermal equilibrium with the cosmic ultraviolet background.” This would be primordial hydrogen from the earliest days of the universe, the kind of cloud we would normally expect to have become a ‘conventional’ spiral galaxy.

The lack of stars here leads co-author Rachael Beaton to refer to the object as an “abandoned house,” one which likely has others of its kind still awaiting discovery. In comparison with the kind of hydrogen clouds we’ve identified near our own galaxy, Cloud-9 is smaller, certainly more compact, and unusually spherical. Its core of neutral hydrogen is measured at roughly 4900 light years in diameter, with the hydrogen gas itself about one million times the mass of the Sun. The amount of dark matter needed to create the gravity to balance the pressure of the gas is about five billion solar masses. While the researchers do expect to find more such objects, they point out that ram pressure stripping can deplete gas as any cloud moves through the space between galaxies. In other words, the population of RELHICs is likely quite small.

The paper places the finding of Cloud-9 in context within the framework now referred to as Lambda Cold Dark Matter (ΛCDM), which incorporates dark energy via a cosmological constant into a schema that includes dark matter and ordinary matter. Quoting the paper’s conclusion:

In the ΛCDM framework, the existence of a critical halo mass scale for galaxy formation naturally predicts galaxies spanning orders of magnitude in stellar mass at roughly fixed halo mass. This threshold marks a sharp transition at which galaxy formation becomes increasingly inefficient (A. Benitez-Llambay & C. Frenk 2020), yielding outcomes that range from halos entirely devoid of stars to those able to form faint dwarfs, depending sensitively on their mass assembly histories. Even if Cloud-9 were to host an undetected, extremely faint stellar component, our HST observations, together with FAST and VLA data, remain fully consistent with these theoretical expectations. Cloud-9 thus appears to be the first known system that clearly signals this predicted transition, likely placing it among the rare RELHICs that inhabit the boundary between failed and successful galaxy formation. Regardless of its ultimate nature, Cloud-9 is unlike any dark, gas-rich source detected to date.

The paper is Gagandeep S. Anand et al., “The First RELHIC? Cloud-9 is a Starless Gas Cloud,” The Astrophysical Journal Letters, Volume 993, Issue 2 (November 2025), id.L55, 7 pp. Full text.