Keeping information viable is something that has to be on the mind of a culture that continually changes its data formats. After all, preserving information is a fundamental part of what we do as a species — it’s what gives us our history. We’ve managed to preserve the accounts of battles and migrations and changes in culture through a wide range of media, from clay tablets to compact disks, but the last century has seen swift changes in everyday products like the things we use to encode music and video. How can we keep all this readable by future generations?

The question is challenging enough when we consider the short term, needing to read, for example, data tapes for our Pioneer spacecraft when we’ve all but lost the equipment needed to manage the task. But think, as we like to do in these pages, of the long-term future. You’ll recall Nick Nielsen’s recent essay Who Will Read the Encyclopedia Galactica, which looks at a future so remote that we have left the ‘stelliferous’ era itself, going beyond the time of stars collected into galaxies, which is itself, Nick points out, only a small sliver of the universe’s history.

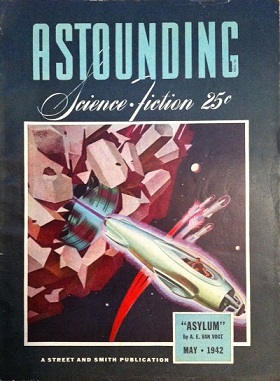

Can we create what Isaac Asimov called an Encyclopedia Galactica? If you go back and read Asimov’s Foundation books, you’ll find that the Encyclopedia Galactica appears frequently, often quoted as the author introduced new chapters. From its beginnings in a short story called “Foundation” (Astounding Science Fiction, May 1942), the Encyclopedia Galactica was seen as the entirety of knowledge throughout a widespread galactic empire. Carl Sagan introduced the idea to a new audience in his video series Cosmos as a cache of all information and knowledge.

Image: The May, 1942 issue of Astounding Science Fiction, containing the first of the short stories that would eventually be incorporated into the Foundation novels.

Now we have an interesting new paper from Stefano Mancini and Roberto Pierini (University of Camerino, Italy) and Mark Wilde (Louisiana State University) that looks at the question of information viability. An Encyclopedia Galactica is going to need to survive in whatever medium it is published in, which means preserving the information against noise or disruption. The paper, published in the New Journal of Physics, argues that even if we find a technology that allows for the perfect encoding of information, there are limitations that grow out of the evolution of the universe itself that cause information to become degraded.

Remember, we’re talking long-term here, and while an Encyclopedia Galactica might serve a galactic population robustly for millions, perhaps billions of years, what happens as we study the fate of information from the beginning to the end of time? Mancini and team looked at the question with relation to what is called a Robertson-Walker spacetime, which as Mark Wilde explains in this video abstract of the work, is a solution of Einstein’s field equations of general relativity that describes a homogeneous, isotropic, expanding or contracting universe.

In addition to Wilde’s video, let me point you to The Cosmological Limits of Information Storage, a recent entry on the Long Now Foundation’s blog. As for the team’s method using the Robertson-Walker spacetime as the background for its development of communication theory models, it is explained this way in the paper:

… we can consider any quantum state of the matter field before the expansion of the universe begins and define, without ambiguity, its particle content. We then let the universe expand and check how the state looks once the expansion is over. The overall picture can be thought of as a noisy channel into which some quantum state is fed. Once we have defined the quantum channel emerging from the physical model, we will be looking at the usual communication task as information transmission over the channel. Since we are interested in the preservation of any kind of information, we shall consider the trade-off between the different resources of classical and quantum information.

In other words, encoded information is modeled against an expanding universe to see what happens to it. The result challenges the idea that there is any such thing as perfectly stored information, for the paper finds that over time, as the universe expands and evolves, a transformation inevitably occurs in the quantum space in which it is encoded. Noise is the result, what the authors refer to as an ‘amplitude damping channel.’ Information that is encoded into a storage medium is inevitably corrupted by changes to the quantum state of the medium.
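For readers who want a feel for what an amplitude damping channel does, here is a minimal sketch of the textbook single-qubit version. It is not the model developed in the paper, which works with quantum fields in a Robertson-Walker spacetime. The function name `amplitude_damping` and the parameter `gamma` (the probability that the excited state decays) are assumptions made for illustration, and nothing here ties `gamma` to a real expansion rate.

```python
import math


def matmul(a, b):
    """Multiply two 2x2 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def dagger(a):
    """Conjugate transpose of a 2x2 matrix."""
    return [[complex(a[j][i]).conjugate() for j in range(2)] for i in range(2)]


def amplitude_damping(rho, gamma):
    """Apply a single-qubit amplitude damping channel to density matrix rho.

    gamma is a hypothetical damping probability between 0 and 1 (an
    assumption for this sketch, not a quantity taken from the paper).
    """
    if not 0.0 <= gamma <= 1.0:
        raise ValueError("gamma must lie between 0 and 1")
    # Standard Kraus operators for amplitude damping.
    k0 = [[1.0, 0.0], [0.0, math.sqrt(1.0 - gamma)]]
    k1 = [[0.0, math.sqrt(gamma)], [0.0, 0.0]]
    out = [[0j, 0j], [0j, 0j]]
    for k in (k0, k1):
        term = matmul(matmul(k, rho), dagger(k))
        for i in range(2):
            for j in range(2):
                out[i][j] += term[i][j]
    return out


if __name__ == "__main__":
    # A qubit prepared in the excited state |1><1|.
    excited = [[0j, 0j], [0j, 1 + 0j]]
    print(amplitude_damping(excited, 0.25))
```

With `gamma` set to 0.25, the excited-state population falls from 1 to roughly 0.75 and the remainder moves to the ground state, which is the sense in which stored amplitude leaks away. In this toy picture, a larger `gamma` stands in for more noise.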

Cosmology comes into play here because the paper argues that faster expansion of the cosmos creates more noise. We can encode our information in the form of bits or we can use information stored and encoded by the quantum state of particular particles, but in both cases noise continues to mount as the universe continues to expand. Collecting all the material for our Encyclopedia Galactica may, then, be a smaller challenge than preserving it in the face of cosmic evolution. On the time scales envisioned in Nick Nielsen’s essay (and studied at length in Fred Adams’ and Greg Laughlin’s book The Five Ages of the Universe: Inside the Physics of Eternity), the keepers of the encyclopedia have their work cut out for them.

Preserving the Encyclopedia Galactica will demand, it seems, continual fixes and long-term vigilance. But consider the researchers’ thoughts on the direction for future work:

…one could also cope with the degradation of the stored information by intervening from time to time and actively correcting the contents of the memory during the evolution of the universe. In this direction, channel capacities taking into account this possibility have been introduced… In another direction, and much more speculatively, one might attempt to find a meaningful notion for entanglement assisted communication in our physical scenario by considering Einstein-Rosen bridges… or entanglement between different universe’s eras, related to dark energy.

Now we’re getting speculative indeed! I’ve left out the references to papers on each of the possibilities within that quotation — see the paper, which is available on arXiv, for more. Mark Wilde notes in the video referenced above that another step forward would be to look at more general models of the universe in which information could be encoded in such exotic scenarios as the spacetime near a black hole. The latter is an interesting thought, and my guess is that we’ll have new work from these researchers delving into such models in the near future, a time close enough to our own that the data should still be viable.

The paper is Mancini et al., “Preserving Information from the beginning to the end of time in a Robertson-Walker spacetime,” New Journal of Physics 16 (2014), 123049 (abstract / full text).

What I am not seeing is how encoding can be maintained against noise through redundancy in the data.

Our immediate problem is to find storage and coding mechanisms that are sufficiently static to prevent loss of data due to bit rot, obsolete media and obsolete encoding formats. Our desire for secrecy, resulting in encryption algorithms that become obsolete over time, exacerbates the problem of useful storage.

Guardians of the Galaxy becomes Guardians of the Library?

I’ve never been crazy about the idea of Encyclopedia Galactica or the idea of any Encyclopedia like Wikipedia as the sum of all human knowledge. One issue I feel is that even if an article is written by experts, you’re only getting a small window into the subject and are very much at the mercy of the authors’ bias. When I say bias I don’t even mean that in a malicious way, but look at historiography: the way we view history changes over time depending on many factors. We would also focus on things we all collectively deem important. I could get a ton of information on WWII, but what if I wanted something on the 1955 invasion of Costa Rica? An Encyclopedia puts too much emphasis on what the editors deem important, not what you might want.

I don’t see an Encyclopedia as the best way to store all human knowledge, maybe as a window into our thinking at a moment in time but not as a record of all we know. Rather I would propose something like Vannevar Bush’s Memex or Ted Nelson’s Xanadu. Not just a summary of a topic, these systems would allow users to search for and link together relevant information on the subject. So I can’t find information on the 1955 Costa Rican war, but I can find out who was president at the time, what the issues facing society were, the culture, relations with neighbors, and soon we actually get a good picture of what was going on. In Xanadu, things linked together save a copy of what they link to, and everything anyone cares about has backups.

Bush’s Memex system in action; Xanadu would be similar but would run on a computer rather than use microfilm:

https://www.youtube.com/watch?v=c539cK58ees

I always feel, why have an Encyclopedia Galactica when you can have an Akashic Record?

—–

Sorry if this is a little off topic, but I have wanted to post this since the last article on the subject, and I do think it relates to the topic in an important way.

@Brian – Wikipedia has a Spanish entry :

http://es.wikipedia.org/wiki/Invasi%C3%B3n_de_Costa_Rica_de_1955

You could run it through Google translate for English (first para below).

“On January 7, 1955 takes place in Costa Rica an invasion attempt in order to overthrow the democratically elected government of José Figueres by forces close to his nemesis, Dr. Rafael Ángel Calderón Guardia . It came from Nicaragua and had the support of dictators Anastasio Somoza , Marcos Pérez Jiménez in Venezuela and Rafael Leonidas Trujillo in the Dominican Republic .”

Wikipedia does offer not just the distilled version, but many external and internal links to explore further.

One question I would have is this: could a theoretical information base need more bits than the physical universe could offer for storage?

Two sets of the encyclopedia, one near the black hole and one inside it, with quantum entanglement between the two. If the singularity is a wormhole, the information could end up anywhere, or anytime, or even before or after time exists. We could even add updates via the entangled set left behind.

No encyclopedia is or can be all inclusive or totally accurate. Just continue compiling, preserving, and making them available. It’s their use that matters.

People often just discard things they don’t think they need (including information), so a fully detailed record of the past may never be attainable. And a friend of mine doing genealogical research sometimes finds himself puzzling over contradictions in the documents that do survive. He thinks a couple of his ancestors outright lied about a few things, never of course imagining that someone a hundred years later would be trying to make sense of it all. Add that up over billions/trillions of years…

I echo what Brian related, and recently Vint Cerf discussed on BBC America the idea of making copies of the OS, applications, and data in the effort to prevent a digital dark age http://www.bbc.com/news/science-environment-31450389

To me, that idea is like pressing vinyl, because you don’t expend hours making an emulator. Another idea is to have an automated system look at an obsolete system and recode it in a contemporary machine-independent language, like Java. If humankind is indeed moving toward Kurzweil’s singularity, perhaps Turing’s universal machine is not far off for recognizing ancient processing and making it usable to future generations.

This is not speculative at all, and it is how the EG will easily survive as long as its keepers. It will exist in a trillion copies that can be used at any time to correct any errors that might crop up in any of them.
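The copy-and-compare idea in this comment can be sketched as a byte-wise majority vote across replicas. This is only a minimal illustration under stated assumptions: the function name `repair_by_majority` is hypothetical, all copies are assumed to have the same length, and errors are assumed to strike different copies independently, so that for any given byte most copies still agree on the original value.

```python
from collections import Counter


def repair_by_majority(copies):
    """Rebuild a record by taking the most common byte at each position.

    Assumes equal-length copies and independent errors; both are
    simplifications made for this sketch.
    """
    if not copies:
        raise ValueError("at least one copy is required")
    length = len(copies[0])
    if any(len(c) != length for c in copies):
        raise ValueError("all copies must have the same length")
    repaired = bytearray()
    # zip(*copies) walks the copies in lockstep, one byte position at a time.
    for column in zip(*copies):
        value, _count = Counter(column).most_common(1)[0]
        repaired.append(value)
    return bytes(repaired)


if __name__ == "__main__":
    original = b"Encyclopedia Galactica"
    # Three replicas, each damaged at a different position.
    damaged = []
    for position in (0, 5, 10):
        copy = bytearray(original)
        copy[position] ^= 0xFF
        damaged.append(bytes(copy))
    print(repair_by_majority(damaged) == original)
```

Simple voting fails once more than half the copies are wrong at the same position, so the scheme leans on the number of copies being large and on repairs happening before errors pile up, which fits the comment's emphasis on keepers who keep correcting.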

Alex:

Obsolescence of the algorithm is not so much the problem; loss of the key is. A good way to utterly, irretrievably destroy huge amounts of information is to encrypt them and then forget the key.

Quantum entanglement cannot be used to transmit information; that is a well-established fact.

These latter are not alternatives to an encyclopedia. They are alternatives to our current Internet. That one may or may not be inferior, but it does serve the same purpose. The task of an encyclopedia is to strain out of the raw information that which can be called knowledge, as opposed to information. Which is why the fraction of kitten pictures on Wikipedia is far lower than on the Internet as a whole.

I think it is a pretty good bet that the Encyclopedia Galactica we are talking about will bear the name “Wikipedia”, and it already exists.

To be more precise, Memex and Xanadu are alternative versions of the World-Wide Web, which itself is just one of the many protocols supported by the Internet.

@Eniac From a ‘Science News’ article,

“The achievement, reported in the Feb. 26 Nature, does not bring scientists much closer to teleporting pens, puppies or people à la Star Trek. But teleporting multiple properties over large distances would enhance proposed quantum communication networks that rely on encoding information in particles’ delicate quantum properties.”

https://www.sciencenews.org/article/physicists-double-their-teleportation-power?utm_source=Society+for+Science+Newsletters&utm_campaign=c04d1bd682-Editors_picks_week_of_Feb_23_2015_2_27_2015&utm_medium=email&utm_term=0_a4c415a67f-c04d1bd682-93291961

Many facts only remain facts until they are proven wrong.

I’d like to suppose that loss of data may be a trivial problem to either any sufficiently advanced civ or, more near term, an ASI (Artificial Super-Intelligence), say 90 mins or so after the singularity. Millions of years down the line is still ridiculously early to actually need to employ the ‘fix’, so there may well be plenty of time to perfect the science.

@Alex

As for data storage… the Universe (let alone the Bulk, multi-verse and other possible domains)… ok, I should’ve said Hubble Volume, is only getting bigger…faster. But can a civilization’s rate of data acquisition (and then reduction to knowledge) outpace the ever increasing volume to exploit for storage? That’s a valid question. However, going down the holographic principle route, if the 3d informational content of a volume can be held on the 2d surface describing that volume then what would that imply? What if an advanced civ knew of a way to access / create nested 2d membranes enclosing 3d spheres (arbitrarily closely spaced in a concentric fashion) as a means of data storage? I didn’t want to become too speculative, sorry, but I’m referring to an advanced civ after all, no matter how naive anything I can imagine really would be.

@NS

A way of retrieving the edited or even distorted facts (info) of the past may well be to use historical simulations to fill in the missing data. I would hazard a guess that a 1 Myr civ would’ve had that ability for maybe 99% of its duration, assuming Moore’s Law is universally average. Then the EG becomes fully replete in all those subtle and ‘minor’ facts that weren’t there earlier, no matter how judiciously they were omitted from history.