Massive elements can build up in celestial catastrophes like supernovae, where the rapid neutron-capture process, or r-process, has nuclei absorbing neutrons at a high rate as elements heavier than lead, up through uranium and beyond, emerge. But we’re learning that such events happen not just in supernovae but also in neutron star mergers, which are thought to occur only a few times per million years in the Milky Way. A new paper looks at meteorites from the early Solar System to study what the decay of their radioactive isotopes can tell us about the period in which they were created.

Such isotopes have half-lives shorter than 100 million years, but we can determine their abundances in the early Solar System through meteorite studies like these. What Szabolcs Márka (Columbia University) and Imre Bartos (University of Florida) have done is to study how two of the short-lived r-process isotopes were produced, using simulations of neutron star mergers in the Milky Way to calculate the abundances of specific radioactive elements. The simulations show that about 100 million years before the Earth formed, a neutron star merger occurred some 1,000 light years from the gas cloud that would become the Solar System.

This would have been a spectacular event, says Márka:

“If a comparable event happened today at a similar distance from the solar system, the ensuing radiation could outshine the entire night sky.”

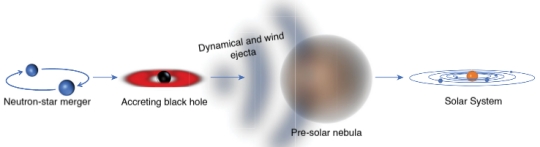

Image: This is Figure 1 from the paper, which appeared in Nature. Caption: The path of r-process elements. When neutron stars merge, they create an accreting black hole (the accretion disk is shown red). Tidal (dynamical) forces and winds from the accretion disk eject neutron-rich matter. This ejected matter (ejecta, shown grey) undergoes rapid neutron capture, producing heavy r-process elements, including actinides. The ejecta reach the pre-solar nebula and inject the heavy elements that will remain in the Solar System. Credit: Szabolcs Márka / Imre Bartos.

Because a supernova can likewise produce actinides (the elements from actinium to lawrencium, atomic numbers 89-103, all of them radioactive), the authors use the same methods to analyze these. The evidence points to a neutron star event rather than supernova explosions near the early Solar System, for the abundance ratio found via meteorite studies “…is well below the uniform production model’s prediction…” The ‘uniform production model’ follows from the fact that supernovae are orders of magnitude more frequent in the Milky Way than neutron star mergers, so that their production of these isotopes can be approximated as being uniform in time:

By comparing numerical simulations with the early Solar System abundance ratios of actinides produced exclusively through the r-process, we constrain the rate of occurrence of their Galactic production sites to within about 1–100 per million years. This is consistent with observational estimates of neutron-star merger rates, but rules out supernovae and stellar sources. We further find that there was probably a single nearby merger that produced much of the curium and a substantial fraction of the plutonium present in the early Solar System. Such an event may have occurred about 300 parsecs away from the pre-solar nebula, approximately 80 million years before the formation of the Solar System.
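The 300 parsecs quoted in the abstract and the “1,000 light years” used earlier in this post describe the same distance. A minimal sketch of the conversion, where the function name is just an illustrative choice and the factor of about 3.26 light years per parsec is the standard one:

```python
# Standard conversion factor: one parsec is about 3.26156 light years.
LIGHT_YEARS_PER_PARSEC = 3.26156


def parsecs_to_light_years(parsecs: float) -> float:
    """Convert a distance in parsecs to light years."""
    return parsecs * LIGHT_YEARS_PER_PARSEC


if __name__ == "__main__":
    # Distance to the proposed merger, as quoted in the abstract.
    print(f"{parsecs_to_light_years(300):.0f} light years")
```

The result comes out a little under 1,000 light years, which is why the rounder figure appears in popular accounts.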

The authors point out that working backward to reconstruct the abundance of another of the short-lived elements would help to produce a more complete picture of the neutron star merger.

Because I was having trouble with the distinction between actinides produced by supernovae and those from neutron star mergers, I asked Dr. Bartos for a clarification. He was kind enough to provide the following, explaining how supernovae can be ruled out (and many thanks to Dr. Bartos for his quick response!):

The difference between supernovae and neutron star collisions that we take advantage of is their relative rates. Supernovae occur a thousand times more frequently than neutron star collisions in the Milky Way. This means that the shortest lived isotopes would be regularly replenished if produced by supernovae, making them certain to be present at the time of the Solar System’s formation. For the less common neutron star collisions, the shortest lived isotopes are depleted soon after a merger, and stay depleted until the next. This means that it is probable that at the time of the Solar System’s formation, this isotope will be depleted. The observed abundances of the short lived Curium-247 and Iodine-129 isotopes in the early Solar System show this depletion, ruling out supernovae.

Dr. Bartos went on to explain the steps he and co-author Márka took to clarify the result:

An extra step is that we normalize the Curium and Iodine amounts found in the early Solar System with the amount of longer-lived r-process elements (Thorium-232 and Iodine-127, respectively). This is important because this way our results don’t depend on how much r-process a single event produces. The ratios stay the same.
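The rate argument Dr. Bartos describes can be illustrated with a toy Monte Carlo model. This is only a sketch under stated assumptions, not the simulation used in the paper: events are treated as a Poisson process, each event deposits one unit of a short-lived isotope, and the two rates below are made-up values chosen only to differ by a factor of 1,000. The half-life used is that of curium-247, about 15.6 million years. Dividing by the steady-replenishment expectation plays a role similar to the normalization described above, since the yield of a single event cancels out of the ratio.

```python
import math
import random
import statistics

# Half-life of curium-247 in millions of years (Myr).
CM247_HALF_LIFE_MYR = 15.6
MEAN_LIFE_MYR = CM247_HALF_LIFE_MYR / math.log(2)


def abundance_ratio(rate_per_myr: float, rng: random.Random,
                    window_myr: float = 20 * MEAN_LIFE_MYR) -> float:
    """Short-lived abundance at a random moment, relative to the
    uniform-production (steady replenishment) expectation.

    Events arrive as a Poisson process; each deposits one unit of the
    isotope, which then decays exponentially.
    """
    total = 0.0
    age = 0.0  # time before the observation moment, in Myr
    while True:
        age += rng.expovariate(rate_per_myr)  # gap to the next older event
        if age > window_myr:
            break
        total += math.exp(-age / MEAN_LIFE_MYR)
    # Uniform production would give rate * mean life units on average.
    return total / (rate_per_myr * MEAN_LIFE_MYR)


def median_ratio(rate_per_myr: float, trials: int = 400, seed: int = 1) -> float:
    """Median of the abundance ratio over many random observation moments."""
    rng = random.Random(seed)
    return statistics.median(
        abundance_ratio(rate_per_myr, rng) for _ in range(trials)
    )


if __name__ == "__main__":
    # ASSUMED local rates, chosen only to differ by a factor of 1,000.
    print("frequent sources:", round(median_ratio(5.0), 3))
    print("rare sources:    ", round(median_ratio(0.005), 3))
```

With frequent sources the typical ratio sits close to 1, meaning the isotope is kept topped up. With rare sources the typical ratio falls well below 1, which is the depletion signature the meteorite data show.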

We’re in the early era of using gravitational wave astronomy, through observations from the LIGO and Virgo collaborations, to make the call on the rate of spectacular events like the merger of two neutron stars, a rate that will be tightened further with continuing observation. Hence the Abadie et al. paper I reference below, which the authors draw on and which points to the rich observational fields gravitational waves help us explore. It was a scant five years after that paper that the first gravitational wave detection was made, and in the short time since, we have begun producing catalogs of black hole and neutron star mergers.

The paper is Bartos & Márka, “A nearby neutron-star merger explains the actinide abundances in the early Solar System,” Nature Vol. 569 (2 May 2019). pp. 85-87 (abstract). The Abadie paper referenced above is Abadie et al., “Topical review: predictions for the rates of compact binary coalescences observable by ground-based gravitational-wave detectors,” Classical and Quantum Gravity 27, 173001 (2010). Abstract.

Could this be a Fermi paradox answer? It seems weird that something so rare happened so near to us, and so close to when life formed (if we can call ~900m years close).

A quick google says that radon is required for life, and it’s produced by uranium decay, but that seems pretty tenuous.

It is likely that neutron star mergers do not form a black hole. Due to a mass constraint on a neutron star of ~1.4 solar masses and merger dynamics the resulting object is unlikely to mass over 2.5 solar masses, and this has not been demonstrated (yet) to be enough to form a black hole. There is little empirical or theoretical basis to say. LIGO may help with that.

The result may be one neutron star plus lots of gravitational wave radiation (carrying away some of the mass/energy of the system) and a debris cloud. But this does not mean that the metal production and ejection is necessarily out of line with what is being proposed here.

More massive neutron stars are smaller, allowing a deeper fall into the gravity well, so BH formation is much more likely. However, the rapid rotation of the merger may allow more material to leave than if it were not spinning.

What do you consider a massive neutron star? The mass range of neutron stars is very narrow.

They range from 1 to 3 solar masses, with 1.4 being about normal. We must remember that if, say, we go from a 1 solar mass NS to a 2 solar mass NS, the radius shrinks by about 25%, so the NSs can get closer, effectively quadrupling the gravity effect at double the mass. There must be a lower limit below which two NSs can’t form a BH due to mass loss and spin; I am not sure what that would be.
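Taking the figures in the comment above at face value, a rough Newtonian estimate gives a factor somewhat under four. This sketch assumes surface gravity scales as mass over radius squared and ignores relativistic corrections, which matter a great deal for real neutron stars. The function name is an illustrative choice.

```python
def surface_gravity_factor(mass_factor: float, radius_factor: float) -> float:
    """Newtonian scaling g ~ M / R**2, expressed as a ratio of two stars."""
    return mass_factor / radius_factor ** 2


if __name__ == "__main__":
    # Double the mass with a radius 25% smaller (figures from the comment).
    print(round(surface_gravity_factor(2.0, 0.75), 2))
```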

That’s a theoretical range, and the maximum mass NS discovered so far is 2 solar masses. If my references are correct the smallest black hole discovered so far is 5 solar masses. A BH can be any mass, but stellar dynamics dictate whether one can form from a BNS merger.

Looking back at the paper on GW170817 the system total mass was about 2.7 solar masses and the gravitational wave radiation was 0.025 solar masses (I was surprised it was only 1% of system mass considering its luminosity). Follow up observations are ambiguous as to whether there was a lot of debris plus one NS or some debris plus a small BH.
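The “only 1%” figure can be checked directly from the two numbers quoted in this comment. A minimal sketch, with a function name chosen purely for illustration:

```python
def radiated_fraction(total_mass_msun: float, radiated_msun: float) -> float:
    """Fraction of the system mass carried off as gravitational waves."""
    return radiated_msun / total_mass_msun


if __name__ == "__main__":
    # Figures quoted above: 2.7 solar masses total, 0.025 radiated.
    print(f"{radiated_fraction(2.7, 0.025):.2%}")
```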

Internal NS state and merger dynamics, plus unknowns about quark dynamics, make a theoretical prediction difficult. That is, how the collapse progresses and how much of the BNS is ejected.

My take is that the chance of getting a BH out of a BNS merger like this is low. I could be wrong. Better minds than mine are thinking about it.

Are supernovae and neutron star mergers the only way to produce these high atomic number elements? Are there other “rare” events that can achieve the same ratios of elements based on their 1/2-lives?

For the very heaviest elements (U, Pu etc.) I think the answers to your two questions are yes and no respectively, Alex. To back that up, see the excellent periodic table of the elements shown in Wikipedia’s article on Nucleosynthesis at https://en.m.wikipedia.org/wiki/Nucleosynthesis

Thank you. A perfect answer with necessary support information.

Further research that should be done is to determine which elements can be made by the much more common supernovae process and the much rarer neutron star collision and which elements can only be produced by neutron star collisions. Then you need to determine which, if any, of the elements in the latter group are used in biology (e.g. catalysts). The results of this further research will tell us if this is a Fermi’s paradox factor or not.

The research you call for was greatly helped along by last year’s multi-messenger NS merger event. Prior to that event it was just ASSUMED that such mergers were the source of the heaviest elements, but now the proof is in. The origin of the elements periodic table included in the wiki article I linked to above was produced after those recent findings came in, so it’s very up to date. A careful examination of that chart reveals that the latest thinking is that all elements from Polonium (atomic number 84) on up are produced exclusively from (or are radioactive decay daughters of atoms produced in) NS merger events.

It may no longer be completely accurate to say that the actinides are also produced by supernovae. (Of course, though, it takes TWO SN in a closely orbiting binary star system to produce the two NS that will then spiral together to produce the kilonova explosions that form the actinides.)

AW, could you give me that link? All I found is radon being hazardous, and being a noble gas would hardly be involved in biochemistry.

That comment is nonsense. As you say, it’s a noble gas, so can’t be part of biomolecules. Also, its most stable isotope has a half-life of only 3.8 days.

This seems highly unlikely to me. Possibly there is yet another source of radioactive species responsible? Something that occurs much more frequently and yet is neither supernova nor neutron star merger. Either that or the rate of neutron star collisions has been variable over time. The 1,000-fold difference in the frequency of occurrence of these two types of events puts us into a statistically extremely unlikely scenario.

The higher the atomic number, the more neutrons are needed to hold all those protons together in the heaviest elements. This is why colliding neutron stars are so productive in creating heavy elements; there is an abundant supply of neutrons with the required density to form large numbered nuclei.

It is logical to assume that the rate of NS mergers would have been higher in the distant past, when it is known that many galaxies were going through starburst periods.

If a supernova event is 1000 times more likely and it produces the same elements, then why don’t we have a regular supply of them due to supernovas after the supposed neutron merger? The logic seems a bit off to me.

The idea that SN events produce the same elements as NS mergers is out of date. NS mergers are now thought to be the sole source of the heaviest elements.

This was a bit hard to follow because the important quantities are the Ratios of the short-lived isotopes to the longer lived ones and these seem to often be replaced by just the Amounts of the short-lived isotopes.

Nice NS merger video,

https://www.esa.int/spaceinvideos/Videos/2017/10/Neutron_star_merger

If the solar system formed 1,000 light years from the merger, what do systems that formed 10 light years from it look like?

We don’t know what it looked like 4.5 billion years ago, but we have to assume that the heavy elements in our solar system were seeded by supernovae or kilonovae that were relatively nearby.

I don’t think we can use the depletion of short-lived elements alone to rule out a supernova on the basis of how often supernovae occur. It also depends on the distance from the gas cloud that gave birth to our solar system. The cloud did not take that long to form, but one hundred million years is enough time for heavy elements to travel 1,000 light years to reach it. The general astrophysical view is that the supernova had to be close by, or in the same star cluster as our Sun.

We could have had both a supernova explosion and a neutron star merger, since both create heavy elements. A kilonova makes more heavy elements per event, but kilonovae are rarer, so supernovae still might make most of the heavy elements, with kilonovae contributing a substantial percentage.

https://en.wikipedia.org/wiki/Extinct_radionuclide

https://iopscience.iop.org/article/10.1086/587613

https://en.wikipedia.org/wiki/Formation_and_evolution_of_the_Solar_System

We still have iron-60 and light metals, which could have come from a supernova explosion. A supernova might do more to collapse a gas cloud than a kilonova, which emits high-energy gamma rays for a short time and leaves a black hole behind. Since our galaxy has changed a lot in 4.5 billion years, over many galactic years (revolutions), things don’t look the same now, so it might be hard to find the supernova remnant today: no gas cloud is left, only a neutron star or black hole.

The idea I like is that a super-AGB star formed and exploded first, then seeded our solar system’s primordial cloud and initiated the contraction of the gas cloud that formed our solar system and Sun.

Those are good links in your previous post Geoffrey. Study of how the elements were created has been a lifelong interest of mine (well, from the time I first saw a periodic table as a child). Therefore this discussion has been most interesting.

In regard to the large contribution from Asymptotic Giant Branch stars look at the green sections labeled “Dying low-mass stars” in the origins of the elements table here: https://en.m.wikipedia.org/wiki/File:Nucleosynthesis_periodic_table.svg

One repeatedly sees statements that our elements came from Supernova, and that is indeed mostly true, but low-mass stars that never erupt as SN also had a big role to play. When these stars are in the AGB phase they experience “dredge ups” that pull heavier elements up from their cores clear to their surfaces where they can then be expelled into the interstellar medium by stellar winds. Especially is this the case at the end of the AGB period by which time the star has ejected up to 70% of its mass, producing a planetary nebula on its way to becoming a white dwarf. See https://en.m.wikipedia.org/wiki/Asymptotic_giant_branch

Also, “dying low-mass stars” are much more common than the very massive stars that form core collapse SN, and they don’t wreck the neighborhood either.

NS mergers, in addition to producing the Uranium, etc. needed to keep a rocky planet’s magnetic dynamo running over the long haul, have the advantage of being much less disruptive to their stellar neighborhoods.

Thank you, Bruce D. Mayfield, for catching the mistake in my post: asymptotic giant branch stars don’t explode as supernovae. I actually read about AGB stars last year in an astrophysics book, but I forgot about the dredge-ups and the stars’ small size. A star has to have enough mass to make iron in its core, which every core-collapse supernova requires, and that is why AGB stars can’t become supernovae. A lot more elements have to be fused to get to iron, and more mass is needed to do that, since gravity is what causes the particles to collide at high velocity, under a pressure that depends on mass. The more mass, the stronger the gravity, the higher the core temperature, and the heavier the elements the core can form.

The book is called Astrophysics is Easy by Mike Inglis. He writes about the core collapse of supernovae, which is interesting. Neutronization of the star causes electrons to combine with protons at a fast rate. Neutrons have no charge, so there is no electrostatic repulsion between positively charged protons in the core and no electron degeneracy pressure to counteract gravity and core contraction. The iron core quickly collapses with the emission of high-energy gamma rays and neutrinos.

I don’t have the page number handy; it was a library book. Of course, elements heavier than iron can also form through the s-process in AGB stars, while the r-process occurs in supernovae and kilonovae.

I don’t think you actually made a mistake Geoffrey, as some AGB at the high mass end of their range might very well explode as electron capture SN. From what I’ve read this is an area of considerable remaining uncertainty though. My point was just to highlight the role of common low mass stars in creating elements even without SN blasts in many cases.

Excuse me, the s-process doesn’t occur in supernovae, only in AGB stars. Yes, the electron degeneracy pressure that arises from the Pauli exclusion principle is overcome in the core collapse into a neutron star once the Chandrasekhar mass of 1.4 solar masses is passed.

Wikipedia says AGB stars rarely explode as a supernova.

https://en.wikipedia.org/wiki/Asymptotic_giant_branch

When they do, the star has to be massive enough for the iron core to pass the Chandrasekhar limit of 1.4 solar masses. The fusion of silicon to iron builds up the mass of the iron core. Once the core is more than 1.4 solar masses, there is a fast neutronization process, in which electrons combine with protons to form neutrons. Neutrons have no charge, so there is no electron degeneracy pressure to stop the collapse. The iron core collapses down to 5 km, then rebounds and blows apart the outer part of the star, and that is where a lot of heavy elements are formed. Ibid.

To be specific, the positive charges of the protons in the iron core repel each other, since like charges repel and opposite charges attract.