A French company called Exotrail has been working on electric propulsion systems for small spacecraft down to the CubeSat level. As presented last week at the 13th European Space Conference in Brussels, the ExoMG Hall-effect electric propulsion system was flown in a demonstration orbital mission in November, launched to low-Earth orbit by a PSLV (Polar Satellite Launch Vehicle) rocket. A brief nod to the PSLV: These launch vehicles were developed by the Indian Space Research Organisation (ISRO), and are being used for rideshare launch services for small satellites. Among their most notable payloads have been the Indian lunar probe Chandrayaan-1, and the Mars Orbiter Mission called Mangalyaan.

Hall-effect thrusters (HET) trap electrons emitted by a cathode in a magnetic field, ionizing a propellant to create a plasma that can be accelerated via an electric field. The technology has been in use in large satellites for many years because of its high thrust-to-power ratio.

What catches my eye about ExoMG is its size: about that of a 2-liter soda bottle, as opposed to conventional Hall-effect thrusters, which can be refrigerator-sized and demand kilowatts of power. The Exotrail thruster, which was successfully fired during the November mission, runs on about 50 watts and appears to be a propulsion system that can be adapted to satellites in the 10 to 250 kg range. With this technology, collision avoidance, deorbiting and flexible orbital operations become feasible for satellites in the CubeSat range.

Image: Satellite using Exotrail technology undergoing testing. Credit: Exotrail.

The company is calling ExoMG “the first ever Hall-effect thruster operating on a sub-100kg spacecraft,” and points to four missions slated to fly with the technology in 2021. I’m interested in how the diminutive thruster can be employed in constellations of satellites. We already rely on satellites working together as a system — GPS is a classic example. The needs of navigation and communication force the issue. Think Iridium and Globalstar in terms of telephony, or the Russian Molniya military and communications satellites. The list could be easily extended.

So what we have evolving is a set of constellation technologies with immediate application to satellites in low-Earth orbit. Exotrail talks about “high coverage telecommunication constellations or high revisit rate earth observation constellations at 600-2000km altitude,” enabled by orbit-raising via the new thrusters as well as necessary de-orbiting capabilities, but we should be looking as well at the controlling software and thinking in longer timeframes. Satellites working together is the theme.
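To put a rough number on the orbit-raising Exotrail describes, here is a minimal sketch of the delta-v needed to move between circular orbits at the two ends of the quoted 600-2000 km band. It assumes coplanar circular orbits around a spherical Earth and continuous low thrust, for which the spiral cost is approximately the difference between the two circular speeds (Edelbaum's coplanar result). The impulsive Hohmann figure is included only for comparison; neither number comes from Exotrail.

```python
import math

MU_EARTH = 398600.4418   # km^3/s^2, Earth's gravitational parameter
R_EARTH = 6378.137       # km, equatorial radius

def circular_speed(alt_km: float) -> float:
    """Circular orbital speed (km/s) at the given altitude."""
    return math.sqrt(MU_EARTH / (R_EARTH + alt_km))

def spiral_dv(alt1_km: float, alt2_km: float) -> float:
    """Low-thrust spiral between coplanar circular orbits: |v1 - v2|."""
    return abs(circular_speed(alt1_km) - circular_speed(alt2_km))

def hohmann_dv(alt1_km: float, alt2_km: float) -> float:
    """Two-impulse Hohmann transfer between coplanar circular orbits."""
    r1, r2 = R_EARTH + alt1_km, R_EARTH + alt2_km
    v1, v2 = circular_speed(alt1_km), circular_speed(alt2_km)
    dv1 = v1 * (math.sqrt(2 * r2 / (r1 + r2)) - 1)
    dv2 = v2 * (1 - math.sqrt(2 * r1 / (r1 + r2)))
    return abs(dv1) + abs(dv2)

if __name__ == "__main__":
    print(f"spiral  600 -> 2000 km: {spiral_dv(600, 2000):.3f} km/s")
    print(f"Hohmann 600 -> 2000 km: {hohmann_dv(600, 2000):.3f} km/s")
```

For this altitude band both figures come out near 0.66 km/s, so the low-thrust penalty is small here; it grows for larger orbit ratios.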

Increasing miniaturization coupled with artificial intelligence and next-generation electric thrusters can be enablers for planetary probes flying in swarm formation in the outer system, perhaps using sail technologies as the primary propulsion to get them there. The one-size-fits-all mission begins to give way to a fleet approach, with tiny probes networking their data as they operate at targets as interesting as Neptune and Pluto. It’s also worth noting that the entire Breakthrough Starshot concept calls for not one but an armada of small sails in interstellar space, offering a hedge against catastrophic failure of any one craft, and conceivably drawing on swarm technologies that could facilitate data gathering and return.

So I keep an eye on near-term technologies that explore this space of miniaturization, efficient orbital adjustment and constellation operations. It will be intriguing to see how Exotrail’s operational software (called ExoOPS) deals with the interactions of such constellations. Likewise interesting is last fall’s firing of the European Space Agency’s Helicon Plasma Thruster, which ESA presents as a “compact, electrodeless and low voltage design” optimized for propulsion in small satellites, including “maintaining the formation of large orbital constellations.”

The HPT achieved its first test ignition back in 2015 at ESA’s Propulsion Laboratory in the Netherlands, and has since been developed to improve its ionization and acceleration efficiency. Rather than applying an electrical current, it uses high-power radio-frequency waves to turn the propellant into a plasma for acceleration.

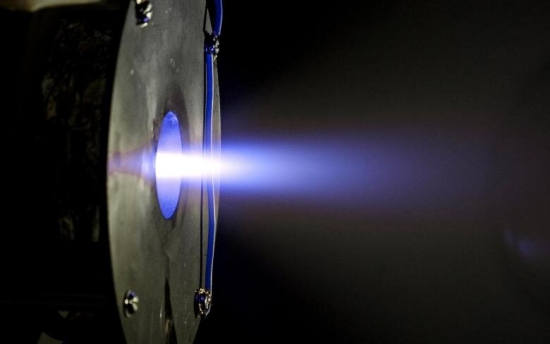

Image: A test firing of Europe’s Helicon Plasma Thruster, developed with ESA by SENER and the Universidad Carlos III’s Plasma & Space Propulsion Team (EP2-UC3M) in Spain. This compact, electrodeless and low voltage design is ideal for the propulsion of small satellites, including maintaining the formation of large orbital constellations. Credit: SENER.

Where will constellation and formation flying in Earth orbit take us as we adapt them for environments further out? Will we one day see deep-space swarms of CubeSat-sized (and smaller) craft exploring the outer planets?

“Increasing miniaturization coupled with artificial intelligence and next-generation electric thrusters can be enablers for planetary probes flying in swarm formation in the outer system”

— let’s not get too excited. It takes a long, long time to develop hardened chips for deep space exploration. The current generation of space probes is running on chips that are roughly equivalent to a 1995 Pentium! The Curiosity and Perseverance rovers are technological wonders, but in terms of processing power, they’re outclassed by a ten-year-old iPhone.

This isn’t a US thing, BTW — European, Japanese and Chinese probes are all pretty stupid too. It’s simpler, cheaper and safer.

This is a solvable problem — but because deep space probes haven’t really needed that much processing power, nobody’s been throwing money at it. To produce space-hardened chips comparable in performance to what you have in your laptop right now? That would probably take at least a decade, if someone were trying to do it, which nobody is.

So, any sort of AI in space is a long way off.

Doug M.

“deep space probes haven’t really needed that much processing power”

Indeed, and they still don’t. Adding “AI” in this context doesn’t require much processing speed or memory. We aren’t talking about HAL 9000 here. The decision tree for most in-flight purposes will be shallow and not terribly fuzzy.

Quite a lot of hardening requirement is being achieved by redundant critical processing and interface blocks, software that can identify and route around failure, including detection of software faults due to processor and memory damage. Encasing chips in unobtanium is increasingly unnecessary.
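As an illustration of redundancy doing the work that shielding used to do, here is a minimal sketch of triple modular redundancy (TMR) applied to a memory word: three copies are stored, each bit is decided by a 2-of-3 vote, disagreeing copies are reported, and all copies are rewritten ("scrubbed"). The `TmrWord` layout is hypothetical, not any flight system's design, and the scheme only corrects upsets that hit different bits or different copies; two copies flipped in the same bit before a scrub would out-vote the good one.

```python
from dataclasses import dataclass

def majority(a: int, b: int, c: int) -> int:
    """Bitwise 2-of-3 vote: each output bit takes the value held by at least two copies."""
    return (a & b) | (a & c) | (b & c)

@dataclass
class TmrWord:
    """One logical memory word stored as three physical copies (hypothetical layout)."""
    copies: list

    def read(self):
        """Return the voted value and the indices of copies that disagreed, then scrub."""
        voted = majority(*self.copies)
        faulty = [i for i, v in enumerate(self.copies) if v != voted]
        # Scrubbing: rewrite all copies with the voted value so upsets don't accumulate.
        self.copies = [voted, voted, voted]
        return voted, faulty

if __name__ == "__main__":
    word = TmrWord([0b1011_0010] * 3)
    word.copies[1] ^= 0b0000_1000   # simulate a single-event upset in copy 1
    value, faulty = word.read()
    print(f"voted value {value:#010b}, disagreeing copies: {faulty}")
```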

While I agree that the power demand for AI on silicon will be low, I disagree about the type of AI that can be applied. Object detection AI for probes (flyby, orbital, and surface) will require neural network technology, trained back on Earth, to identify and characterize what the cameras and other sensors are seeing in order to make decisions. There are many domains where pattern recognition AI would be useful to robotic probes, and it would certainly make communication from Earth far easier. Surface rovers could safely execute commands like “That rock shaped like a pyramid on the horizon: go to it,” with the rover determining its best path to the target and planning the route to avoid obstacles.
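The rover task described above splits into recognizing the target (the neural-network part) and planning a route to it. For the second half, here is a minimal sketch of A* search on an occupancy grid, assuming the terrain has already been reduced to passable (`.`) and blocked (`#`) cells with unit step cost. Real rover planners work on richer cost maps, so treat the grid and the function name as illustrative.

```python
import heapq

def astar(grid, start, goal):
    """A* on a 4-connected grid of strings; '#' marks blocked cells.
    Returns a shortest list of (row, col) cells from start to goal, or None."""
    rows, cols = len(grid), len(grid[0])

    def h(cell):
        # Manhattan distance: admissible and consistent for unit-cost 4-connected moves
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    g_cost = {start: 0}
    came_from = {start: None}
    frontier = [(h(start), 0, start)]
    while frontier:
        _, g, cell = heapq.heappop(frontier)
        if cell == goal:
            path = []
            while cell is not None:       # walk parent links back to the start
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if g > g_cost[cell]:
            continue  # stale heap entry; a cheaper route to this cell was already found
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] != "#":
                new_g = g + 1
                if new_g < g_cost.get(nxt, float("inf")):
                    g_cost[nxt] = new_g
                    came_from[nxt] = cell
                    heapq.heappush(frontier, (new_g + h(nxt), new_g, nxt))
    return None

if __name__ == "__main__":
    terrain = [
        "......",
        ".###..",
        "...#..",
        ".#....",
    ]
    print(astar(terrain, (0, 0), (3, 5)))
```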

AI isn’t just neural networks, which are general-purpose resource hogs. Algorithms targeted at a constrained problem space can be very economical with resources. It is also usually acceptable for image analysis (say, rock identification) to take 30 seconds rather than a few milliseconds, or to use a low pixel count (a low-resolution image) to reduce resource requirements without hurting mission operations.
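The low-resolution trade-off is easy to quantify: averaging each k×k block of pixels cuts the pixel count, and so roughly the per-pixel work of any later analysis, by a factor of k². A minimal sketch, assuming a grayscale image held as nested lists of numbers; edge rows and columns that don't fill a whole block are simply dropped.

```python
def downsample(image, k):
    """Block-average a 2-D grayscale image (list of rows) by an integer factor k.
    Leftover rows/columns that don't fill a complete k x k block are discarded."""
    rows, cols = len(image), len(image[0])
    out = []
    for r in range(0, rows - rows % k, k):
        row = []
        for c in range(0, cols - cols % k, k):
            block = [image[r + i][c + j] for i in range(k) for j in range(k)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out

if __name__ == "__main__":
    frame = [[(r * 7 + c * 3) % 256 for c in range(64)] for r in range(48)]  # synthetic frame
    small = downsample(frame, 4)
    print(f"{48 * 64} pixels -> {len(small) * len(small[0])} pixels")
```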

I think we will have to agree to disagree.

While I still like symbolic AI (GOFAI), almost all of the leading work today uses neural network models. For object recognition and almost any noisy pattern recognition task, symbolic AI performs poorly. Where it excels is in formal knowledge domains where algorithms and logic are paramount. But even there it can be brittle, as the demise of the expert systems of the 1980s and 1990s demonstrated.

As for NNs being resource hogs: training is definitely not something to do on a probe, but you can deploy the trained network. Indeed, we are doing so on our phones these days. In addition, specialized neuromorphic chips can run these models with very low power requirements. Yes, they generally require more memory and CPU cycles than symbolic AI, but their pattern recognition performance is far superior. Even our phones have gigabytes of memory and quite fast ARM (or similar low-power) CPUs. I don’t see computing resources as an issue, even with relatively older technology. If we choose chip technology today for probes to be launched a decade from now, there is plenty of performance to run advanced AI systems, even though today’s technology will look old and underpowered to users a decade hence. I believe robustness to radiation damage can be handled in a number of ways, especially with redundancy. (The work of those 386-class chips on 3 different computers on the shuttle could be handled by a modern multi-core CPU running a different version of the code on each core.) A Raspberry Pi 4 using a quad-core ARMv8 chip and 8 GB RAM runs on about 15 W.
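One common reason a trained network fits on modest hardware is post-training quantization. A minimal sketch of symmetric per-tensor int8 quantization, assuming the weights are available as a flat list of floats (a real deployment would use a framework's own tooling): each weight is stored in one byte instead of four, plus a single scale factor, and the reconstruction error per weight is at most half the scale.

```python
import array

def quantize_int8(weights):
    """Symmetric per-tensor quantization: w is approximated by q * scale, q in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0) or 1.0  # avoid divide-by-zero
    scale = max_abs / 127.0
    q = array.array("b", (max(-127, min(127, round(w / scale))) for w in weights))
    return q, scale

def dequantize(q, scale):
    """Map int8 codes back to approximate float weights."""
    return [v * scale for v in q]

if __name__ == "__main__":
    weights = [0.81, -1.27, 0.003, 0.5, -0.42]   # stand-in for trained weights
    q, scale = quantize_int8(weights)
    fp32 = array.array("f", weights)
    print("fp32 bytes:", fp32.itemsize * len(fp32))
    print("int8 bytes:", q.itemsize * len(q), "+ one scale factor")
    err = max(abs(a - b) for a, b in zip(weights, dequantize(q, scale)))
    print(f"max reconstruction error: {err:.5f} (bound: {scale / 2:.5f})")
```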

It’s easy to criticise, but if you’re forking out billions of dollars (or somewhat fewer euros), funded by taxpayers on a finite budget, to send probes with decade-plus lifespans into the hostile environs of deep space, or worse still into the harsh magnetic fields of the gas giants, give me older but bombproof technology any day. Cassini didn’t do too badly, nor did Juno. Or the Voyagers.

Astrophysics and its related technology have always been aspirational in nature, a byproduct of the vision that makes game-changing missions like New Horizons and Kepler possible. Without that there would be no future, even if the extrapolations are a bit optimistic on occasion. But who cares? Having a market-leading 2020 processor fail after a month’s flight would be disastrous. There are no loss leaders in space.

NASA have just released a document highlighting the technological deficiencies you allude to, along with related corrective research (and many more), so there is a firm footing in reality if you know where to look.

I don’t see Musk, Bezos and Branson forking out for space missions that won’t ultimately offer a financial return. Mars colonisation as a backup to Earth is a wowser, but just half a billion dollars would fund an early-warning NEO observatory that could protect our civilisation for centuries. Even with old processors.

Can you post a link to that report? I would be very interested to read what NASA is thinking with regard to computer technology for deep space missions.

While it is problematic if a processor fails, I recall the Space Shuttle used 3 computers programmed independently to provide redundancy. IIRC, the avionics hardware was based on 1972 technology (I think the chips were 386 technology). Over a decade later, astronauts were bringing their own laptops up with the shuttle with chips based on later technology iterations. The only advantage of earlier chip technology was that the transistor connections were thicker and less prone to glitches from particle radiation. But we have newer technologies that allow redundancy (e.g. multiple cores) and better software languages that are more tolerant of faults.
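The "programmed independently" idea is known as N-version programming: several separately written implementations compute the same result and a voter takes the majority, flagging any version that disagrees. A minimal sketch, using three hypothetical integer-square-root implementations as stand-ins for independently developed flight code; it illustrates the voting principle, not any particular flight computer's architecture.

```python
import math
from collections import Counter

def isqrt_builtin(n: int) -> int:
    return math.isqrt(n)                 # version A: standard library (Python 3.8+)

def isqrt_newton(n: int) -> int:
    """Version B: integer Newton iteration."""
    if n < 2:
        return n
    x, y = n, (n + 1) // 2
    while y < x:
        x, y = y, (y + n // y) // 2
    return x

def isqrt_bisect(n: int) -> int:
    """Version C: binary search keeping lo*lo <= n < hi*hi."""
    lo, hi = 0, n + 1
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if mid * mid <= n:
            lo = mid
        else:
            hi = mid
    return lo

def vote(versions, *args):
    """Run every version, return (majority result, indices of dissenting versions)."""
    results = [f(*args) for f in versions]
    value, count = Counter(results).most_common(1)[0]
    if count * 2 <= len(results):
        raise RuntimeError(f"no majority among results {results}")
    return value, [i for i, r in enumerate(results) if r != value]

if __name__ == "__main__":
    buggy = lambda n: isqrt_newton(n) + 1   # simulated fault in one version
    print(vote([isqrt_builtin, isqrt_newton, isqrt_bisect], 10**6 + 1))
    print(vote([isqrt_builtin, buggy, isqrt_bisect], 99))
```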

On that last tangent, does anyone have a good update on the status on the Near-Earth Object Surveillance Mission (NEOSM)? The last press release I can find in December 2019 talks about a launch as early as 2025 “IF work begins next year.” (My emphasis, on what’s a mighty big word when it comes to funding.) And then I get nothing but crickets in my searches.

https://news.arizona.edu/story/uarizona-looks-toward-work-nasa-s-potential-asteroidhunting-space-telescope

https://en.wikipedia.org/wiki/Near-Earth_Object_Surveillance_Mission

https://neocam.ipac.caltech.edu/

“It’s easy to criticise but if you’re forking out billions of dollars”

— not a criticism. Using legacy technology makes a lot of sense. I literally just said, it’s cheaper, simpler, and safer.

(Where I get critical is when people start talking about spinoff technologies as a justification for space. Yeah, no. Spinoffs do exist, but they’re modest, and have been for decades, so they are not a strong justification. Legacy technologies are great, but by definition they mean you’re not innovating. Tang was a long time ago.)

Anyway: last I checked, there was a new chip available, but NASA hadn’t committed to using it in any probes yet. That was a year or two back, though, so maybe things have changed.

Doug M.

With the coming revolution in full reusability and on-orbit refueling—enabling far larger interplanetary payloads and dramatically reduced costs—the loss of a single spacecraft within a swarm will not be “disastrous”. Rather, loss of some % of spacecraft will be a mission design feature, dramatically relaxing per-craft reliability requirements without risking the mission. How can we usefully discuss exploration satellite swarms without including this crucial factor?
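The "loss of some % of spacecraft as a design feature" argument can be made concrete with a binomial model: if a mission needs at least k of n craft to survive, and each survives independently with probability p, the mission success probability is the binomial tail. A minimal sketch; the reliabilities below are hypothetical, and the independence assumption breaks down for common-mode failures such as a shared launch or a shared design flaw.

```python
from math import comb

def p_at_least(n: int, k: int, p: float) -> float:
    """Probability that at least k of n independent craft survive, each with reliability p."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))

if __name__ == "__main__":
    print(f"1 craft at 0.90 reliability:  {p_at_least(1, 1, 0.90):.3f}")
    print(f"6 of 10 craft at 0.80 each:   {p_at_least(10, 6, 0.80):.3f}")
    print(f"6 of 10 craft at 0.70 each:   {p_at_least(10, 6, 0.70):.3f}")
```

In this toy case, ten craft that are each less reliable than a single flagship still give a higher chance of meeting a 6-of-10 requirement.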

In other innovative space propulsion news…

NASA CubeSat to Demonstrate Water-Fueled Moves in Space:

https://www.nasa.gov/feature/ames/ptd-1

ThrustMe pioneers with iodine propulsion in space:

https://spacewatch.global/2021/01/thrustme-pioneers-with-iodine-propulsion-in-space/?no_cache=1611215683

Tethers Unlimited makes the water propulsion system, with an info sheet at https://www.tethers.com/propulsion-system/ . It is certainly wise for CubeSats to make propellant in situ from safe components … you don’t want the bright kid tinkering with one on his desk getting a whiff of hydrazine. :) The device seems to hide considerable complexity, which I didn’t find the details of, and its 310 s specific impulse leaves a bit to be desired. Recalling the articles we’ve seen here about desorption from solar sails, I feel there ought to be even smaller, simpler, safe and efficient methods of solar-powered propulsion for microscopic craft yet to be invented.

Looking at Exotrail’s product page, it appears they are pitching their HET engine as an intermediate between chemical propulsion and typical electric engines in terms of thrust and Isp. Their Isp is 800-1000 s, not particularly high, but the thrust is higher, so a satellite can reach its needed orbital position faster and start generating revenue sooner. This may be why their power requirements are relatively low. This is quite an interesting idea and a valuable addition to the propulsion ecosystem.
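At fixed electrical power, thrust and exhaust velocity trade directly against each other: jet power is ηP = ½·T·v_e with v_e = g0·Isp, so T = 2ηP/(g0·Isp). A minimal sketch, assuming the roughly 50 W mentioned in the post and a hypothetical overall efficiency of 0.3 (an illustrative value, not an Exotrail figure); the 1500 s case stands in for a more typical higher-Isp electric thruster.

```python
G0 = 9.80665  # m/s^2, standard gravity

def thrust_newtons(power_w: float, isp_s: float, efficiency: float) -> float:
    """Thrust from the jet-power balance eta * P = 0.5 * T * v_e, with v_e = g0 * Isp."""
    v_e = G0 * isp_s
    return 2.0 * efficiency * power_w / v_e

if __name__ == "__main__":
    for isp in (800, 1000, 1500):
        t_mn = thrust_newtons(50.0, isp, 0.3) * 1e3   # hypothetical efficiency 0.3
        print(f"Isp {isp:>4} s -> {t_mn:.2f} mN at 50 W")
```

Halving Isp doubles thrust for the same power, at the cost of burning propellant faster.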

However, I suspect it is unsuited to deep-space applications, where low thrust but high Isp is more mass-efficient and where the long travel time matters little for a non-commercial probe or satellite.
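The mass-efficiency point follows from the rocket equation: the propellant fraction for a given delta-v is 1 − exp(−Δv/(g0·Isp)). A minimal sketch comparing the 900 s middle of Exotrail's quoted range with a hypothetical 3000 s gridded-ion-class thruster, for a hypothetical 5 km/s deep-space budget.

```python
import math

G0 = 9.80665  # m/s^2, standard gravity

def propellant_fraction(delta_v_m_s: float, isp_s: float) -> float:
    """Tsiolkovsky: fraction of initial mass that must be propellant for a given delta-v."""
    return 1.0 - math.exp(-delta_v_m_s / (G0 * isp_s))

if __name__ == "__main__":
    dv = 5000.0  # hypothetical deep-space delta-v budget, m/s
    for isp in (900, 3000):
        print(f"Isp {isp:>4} s: {propellant_fraction(dv, isp):.1%} of launch mass is propellant")
```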

Although Jim Benford responded to a question I raised about the mass of rectennas for power beaming, some Google searches indicated that rectennas could be quite lightweight for the power levels the Exotrail HET engines require. In addition, Earth-orbit distances are well suited to microwave beaming, which makes me wonder whether beaming power to this type of engine on Earth-orbit satellites might be a business niche, allowing lower-power PV panels sized only for satellite operations once the propulsion phase has ended.
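A standard first check on such a link is the Goubau parameter τ = √(A_t·A_r)/(λ·L), with the aperture-to-aperture efficiency of a tapered beam approximated by 1 − exp(−τ²): efficiency is only high once τ approaches or exceeds 1. A minimal sketch; the frequencies, dish sizes and ranges in the usage lines are hypothetical, chosen only to show how aperture, wavelength and distance trade off.

```python
import math

C = 299_792_458.0  # speed of light, m/s

def dish_area(diameter_m: float) -> float:
    """Area of a circular aperture."""
    return math.pi * (diameter_m / 2) ** 2

def goubau_tau(tx_area_m2: float, rx_area_m2: float, freq_hz: float, distance_m: float) -> float:
    """Goubau parameter tau = sqrt(A_t * A_r) / (lambda * L)."""
    wavelength = C / freq_hz
    return math.sqrt(tx_area_m2 * rx_area_m2) / (wavelength * distance_m)

def beam_efficiency(tau: float) -> float:
    """Approximate transmitter-to-receiver collection efficiency for a tapered beam."""
    return 1.0 - math.exp(-tau ** 2)

if __name__ == "__main__":
    # Hypothetical cases: ground dish diameter, rectenna area, frequency, range.
    for d_tx, a_rx, f, rng in ((30, 1.0, 5.8e9, 600e3), (30, 1.0, 94e9, 600e3), (300, 25.0, 94e9, 600e3)):
        tau = goubau_tau(dish_area(d_tx), a_rx, f, rng)
        print(f"{d_tx} m dish, {a_rx} m^2 rectenna, {f / 1e9:.1f} GHz, {rng / 1e3:.0f} km: "
              f"tau = {tau:.3g}, efficiency = {beam_efficiency(tau):.3g}")
```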

There should be a niche for miniaturized methods of control in space. Consider a toy project like KickSat ( https://www.bbc.com/future/article/20140128-the-smallest-spacecraft-in-orbit – see links). This was a contender for ‘smallest spacecraft’, but being based on 49 mm² CC430F5137 chips, it was still considered potentially hazardous space debris.

Imagine if we could make sub-millimeter spacecraft that would cause little concern if they went astray in orbit. A constellation of larger spacecraft could launch and land them electrically as propellant to maintain or alter formation, or use them as couriers to exchange information at higher bandwidth. They might be pressed into service as disposable tug boats for knocking space debris into storage orbits, or (alas) for military purposes, whether spying on spy satellites or converging to form a solid lump in an unexpected location.

There’s no doubt that a sub-millimeter section of chip from your laptop, or even an old Pentium, has the potential to do a lot of logic. I imagine that for some of the darker purposes the engineering problem has been considered. And there are so many odd ways to do computation, ranging from graphene to microfluidics, that I feel like there should be interesting basic research of relevance. I’d love to see more about it.

New concept for rocket thruster exploits the mechanism behind solar flares.

The new concept would accelerate the particles using magnetic reconnection, a process found throughout the universe, including the surface of the sun, in which magnetic field lines converge, suddenly separate, and then join together again, producing lots of energy. Reconnection also occurs inside doughnut-shaped fusion devices known as tokamaks.

“I’ve been cooking this concept for a while,” said PPPL Principal Research Physicist Fatima Ebrahimi, the concept’s inventor and author of a paper detailing the idea in the Journal of Plasma Physics.

https://phys.org/news/2021-01-concept-rocket-thruster-exploits-mechanism.html

An Alfvenic reconnecting plasmoid thruster.

A new concept for generation of thrust for space propulsion is introduced. Energetic thrust is generated in the form of plasmoids (confined plasma in closed magnetic loops) when magnetic helicity (linked magnetic field lines) is injected into an annular channel. Using a novel configuration of static electric and magnetic fields, the concept utilizes a current-sheet instability to spontaneously and continuously create plasmoids via magnetic reconnection. The generated low-temperature plasma is simulated in a global annular geometry using the extended magnetohydrodynamic model. Because the system-size plasmoid is an Alfvenic outflow from the reconnection site, its thrust is proportional to the square of the magnetic field strength and does not ideally depend on the mass of the ion species of the plasma. Exhaust velocities in the range of 20 to 500 km/s, controllable by the coil currents, are observed in the simulations.

https://arxiv.org/abs/2011.04192
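The abstract's claim that thrust scales with B² and does not ideally depend on ion mass can be checked with the idealization that the plasmoid leaves at the Alfvén speed v_A = B/√(μ0·ρ): the momentum flux ρ·v_A² then reduces to B²/μ0, with ion mass cancelling out. A minimal back-of-envelope sketch, assuming a hypothetical 0.1 T field and 10¹⁹ m⁻³ ion density; these are not values from the paper's simulations.

```python
import math

MU0 = 4e-7 * math.pi        # vacuum permeability, H/m (classical value)
AMU = 1.66053906660e-27     # atomic mass unit, kg

def mass_density(n_per_m3: float, ion_mass_amu: float) -> float:
    return n_per_m3 * ion_mass_amu * AMU

def alfven_speed(b_tesla: float, n_per_m3: float, ion_mass_amu: float) -> float:
    """v_A = B / sqrt(mu0 * rho)."""
    return b_tesla / math.sqrt(MU0 * mass_density(n_per_m3, ion_mass_amu))

def momentum_flux(b_tesla: float, n_per_m3: float, ion_mass_amu: float) -> float:
    """rho * v_A**2 (thrust per unit exhaust area), which reduces to B**2 / mu0."""
    v_a = alfven_speed(b_tesla, n_per_m3, ion_mass_amu)
    return mass_density(n_per_m3, ion_mass_amu) * v_a ** 2

if __name__ == "__main__":
    B, n = 0.1, 1e19  # hypothetical field strength (T) and ion density (m^-3)
    for name, m in (("argon", 39.948), ("xenon", 131.293)):
        print(f"{name}: v_A = {alfven_speed(B, n, m) / 1e3:.0f} km/s, "
              f"momentum flux = {momentum_flux(B, n, m):.0f} Pa")
```

Heavier ions leave more slowly, but carry the same momentum flux per unit area.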

An exhaust velocity of 500 km/s corresponds to a specific impulse of roughly 51,000 s (Isp = v_e/g0).
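The conversion is just Isp = v_e/g0 with standard gravity g0 = 9.80665 m/s². A minimal sketch applying it to both ends of the 20 to 500 km/s exhaust-velocity range quoted in the abstract:

```python
G0 = 9.80665  # m/s^2, standard gravity

def isp_from_exhaust_velocity(v_e_m_s: float) -> float:
    """Specific impulse in seconds from effective exhaust velocity in m/s."""
    return v_e_m_s / G0

if __name__ == "__main__":
    for v_kms in (20, 500):
        print(f"{v_kms} km/s -> Isp ~ {isp_from_exhaust_velocity(v_kms * 1000):,.0f} s")
```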