Centauri Dreams

Imagining and Planning Interstellar Exploration

Magnetic Collapse: A Spur to Evolution?

The sublime, almost fearful nature of deep time sometimes awes me even more than the kind of distances we routinely discuss in these pages. Yes, the prospect of a 13.8 billion year old cosmos strung with stars and galaxies astonishes. But so too do the constant reminders of our place in the vast chronology of our planet. Simple rocks can bring me up short, as when I consider just how they were made, how long the process took, and what they imply about life.

Consider the shifts that have occurred in continents, which we can deduce from careful study at sites with varying histories. Move into northern Quebec, for example, and you can uncover rock that was once part of the continent we now call Laurentia, considered a relatively stable region of the continental crust (the term for such a region is craton). Move to Ukraine and you can investigate the geology of the continent called Baltica. Gondwana can be studied in Brazil, an obvious reminder of how much the surface has changed.

Image: With Earth’s surface constantly changing over time, we can only do snapshots to suggest some of these changes. Here is a view of the Pannotia supercontinent, showing components as they existed about 545 million years ago. Based on: Dalziel, I. W. (1997). “Neoproterozoic-Paleozoic geography and tectonics: Review, hypothesis, environmental speculation”. Geological Society of America Bulletin 109 (1): 16–42. DOI: 10.1130/0016-7606(1997)109<0016:ONPGAT>2.3.CO;2. Fig. 12, p. 31. Credit: Wikimedia Commons.

Studying these matters has something of the effect of an earthquake on me. By which I mean that in the two minor earthquakes I have experienced, the sense of something taken for granted – the deep stability of the ground under my feet – suddenly became questionable. Anyone who has gone through such events knows that the initial human response is often mystification. What’s going on? And then suddenly the big picture emerges even as the quake passes.

So it can be with scientific realizations. Let’s talk about how scientists use various methods to study rock from different eras to infer changes to Earth’s magnetic field. Tiny crystal grains a mere 50 to a few hundred nanometers in size can hold a single magnetic domain, meaning a place where the magnetization exists in a uniform direction. Think of this as a locking in of magnetic field conditions that can be studied at various times in the planet’s history to see what the Earth’s magnetic field was doing then.

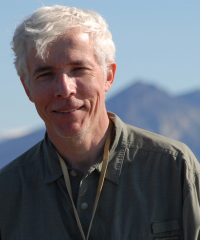

The subject is vast, and the work richly rewarding for anyone asking questions about how the planet has evolved. But now we’re learning that it also holds implications for the evolution of life. In a new feature in Physics Today, John Tarduno (University of Rochester) explains his team’s recent work on paleomagnetism, which is the study of the magnetic field throughout Earth’s history as captured in rock and sediment. For the magnetic poles move, and sometimes reverse, and within that history quite a story is emerging.

Image: The University of Rochester’s Tarduno, whose recent work explores the effects of a changing magnetic field on biological evolution. Credit: University of Rochester.

For Tarduno, the implications of some of these changes are striking. They grow out of the fact that the magnetic field, which is produced in our planet’s outer core (mostly liquid iron), continually varies, and the timescales involved can be short (mere years) or extensive (hundreds of millions of years). It’s been understood for a long time that the field reverses its polarity, but less well known is that a polarity change also decreases the field strength. Sometimes these transitions are short, but sometimes lengthy indeed.

Consider that new evidence, presented by Tarduno in this deeply researched backgrounder, shows that some 591 to 565 million years ago the Earth’s magnetic field all but collapsed, and remained collapsed for a period of tens of millions of years. If that time range piques your interest, it’s probably because this is coincident with a period known as the Avalon explosion, when macroscopic animal life, rich in complexity, begins to appear. And now we’re in the realm of evolution being spurred by magnetic field changes. The implications run to life’s own history and even as far out as SETI.

Named after the peninsula in which evidence for it was found, the Avalon explosion occurred tens of millions of years earlier than the Cambrian period which has heretofore been considered the time when complex lifeforms began to appear on Earth. It came during the Ediacaran period that preceded the Cambrian, and seems to have produced the first complex multicellular organisms. The sudden diversification of body scheme and distinct morphologies has been traced at sites in Canada as well as the UK.

Image: Archaeaspinus, a representative of phylum Proarticulata which also includes Dickinsonia, Karakhtia and numerous other organisms. They are members of the Ediacaran biota. Credit: Masahiro miyasaka / Wikimedia Commons.

So this is an ‘unexpected twist,’ as Tarduno puts it, that may relate significant evolutionary changes to the magnetic field as it reconfigured itself. Scientists studying the Ediacaran period (between 635 and 541 million years ago) have found that rocks formed then show odd magnetic directions. Some researchers concluded that the magnetic field in this period was reversing polarity, and we already knew that during a polarity reversal (the last was 800,000 years ago), the magnetic field could take on unusual shapes. Recent work shows that its strength in this period was a mere tenth of the values of today’s field.

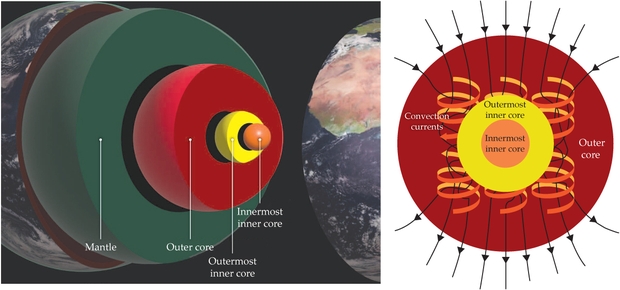

This work was done in northern Quebec (ancient Laurentia), but later work from Ukraine (Baltica) and Brazil showed an even lower field strength. We’re talking about a long period here of ultralow magnetic activity, perhaps 26 million years or more. Events deep inside the Earth’s inner core seem to have spurred this. I won’t go into the details about all the research on the core – it’s fascinating but would take us deep into the weeds. For now, just consider that a consensus has been building that relates core activity to the odd Ediacaran geomagnetic field, one that correlates with a profound evolutionary event.

Image: This is Figure 2 from the paper. Caption: Changes deep inside Earth have affected the behavior of the geodynamo over time. In the fluid outer core, shown at right, convection currents (orange and yellow arrows and ribbons) form into rolls because of the Coriolis effect from the planet’s rotation and generate Earth’s magnetic field (black arrows). Structures in the mantle—for example, slabs of subducted oceanic crust, mantle plumes, and regions that are anomalously hot or dense—can affect the heat flow at the core–mantle boundary and, in turn, influence the efficiency of the geodynamo. As iron freezes onto the growing solid inner core, both latent heat of crystallization and composition buoyancy from release of light elements provide power to the geodynamo. (Left: Earth layers image adapted from Rory Cottrell, Earth surface image adapted from EUMETSAT/ESA; right: image adapted from Andrew Z. Colvin/CC BY-SA 4.0.)

In the models Tarduno describes, the Cambrian explosion itself was driven by a greater incidence of energetic solar particles during periods of weak magnetic field strength. Thus we have the basis for a weak field increasing mutation rates and stimulating evolutionary processes. Tarduno cites his own work here:

Eric Blackman, David Sibeck, and I have considered whether the linkage might be found in changes to the paleomagnetosphere. Records of the strength of the time-averaged field can be derived from paleomagnetism, whereas solar-wind pressure can be estimated using data from solar analogues of different ages. My research group and collaborators have traced the history of solar–terrestrial interactions in the past by calculating the magnetopause standoff distance, where the solar-wind pressure is balanced by the magnetic field pressure… We know that the ultralow geomagnetic fields 590 million years ago would have been associated with extraordinarily small standoff distances, some 4.2 Earth radii (today it is 10–11 Earth radii) and perhaps as low as 1.6 Earth radii during coronal mass ejection events.
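To get a feel for how the standoff distance responds to a weaker field, here is a minimal sketch using the textbook pressure-balance scaling for a dipole, in which the standoff distance varies as (field strength squared divided by solar-wind pressure) to the one-sixth power. This is an assumption on my part about the general approach, not a reproduction of the calculation Tarduno and colleagues performed, and the function names and the 10.5 Earth radii baseline (the midpoint of the 10–11 range quoted above) are illustrative only.

```python
def standoff_distance(r_today, field_fraction, pressure_ratio=1.0):
    """Scale today's standoff distance (in Earth radii) to a different era.

    field_fraction: dipole strength relative to today (1.0 = modern field).
    pressure_ratio: solar-wind ram pressure relative to today (assumed 1.0).
    Uses the standard dipole pressure-balance scaling r ~ (B^2 / P)^(1/6).
    """
    return r_today * (field_fraction ** 2 / pressure_ratio) ** (1.0 / 6.0)


def field_fraction_for(r_target, r_today):
    """Invert the scaling at fixed solar-wind pressure: B ~ r^3."""
    return (r_target / r_today) ** 3


# A field one tenth of today's, with today's solar-wind pressure.
print(round(standoff_distance(10.5, 0.1), 2))

# Field fraction needed to reach 4.2 Earth radii at fixed pressure.
print(round(field_fraction_for(4.2, 10.5), 3))
```

Because of the one-sixth power, even a drastic drop in field strength shrinks the magnetosphere only gradually, and a stronger solar wind (a pressure ratio above 1) pushes the boundary in further still.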

As Tarduno explains, all this leads to increased oxygenation during a period of magnetic field strength diminished to an all-time low, along with an accompanying boom in the complexity of animal life from the Ediacaran leading into the Cambrian.

Notice the profound shift we are talking about here. Classically, scientists have assumed that it was the shielding effects of the magnetic field that offered life the chance to survive. Indeed, we talk about a lack of magnetic fields in exoplanets like Proxima Centauri b as being a strong danger to life because of incoming radiation. This new work is saying something profound:

If our hypothesis is correct, we will have flipped the classic idea that magnetic shielding of atmospheric loss was most important for life, at least during the Ediacaran Period: The prolonged interlude when the field almost vanished was a critical spark that accelerated evolution.

Maybe we have been too simplistic in our views of how a magnetic field influences the development and growth of lifeforms. In recent decades, work has been showing linkages between these magnetic changes, which can last for millions of years, and spurts in evolutionary activity. It may be precisely because of low magnetic field strength, rather than in spite of it, that life suddenly explodes into new forms during these periods of high activity.

Jim Benford has often commented to me that despite having mounted the most intensive SETI search with the most powerful tools ever available, we still have not a trace of a signal from another civilization. Is it possible – and Jim was the one who pointed out this paper to me – that the reason is that the magnetic field changes that so affected life on our planet are rare elsewhere? Because it now looks as though a magnetic field should be considered less a binary situation than as a variable, one whose mutability because of core activity can take the world it engulfs into periods of low to high magnetic strength, and some eras millions of years long in which there is hardly any field at all.

I mentioned Proxima Centauri b above. Whether or not it has a magnetic field has yet to be determined, which points out how little we know about such fields around exoplanets at large. Further investigation of Earth’s magnetic past will help us understand how such fields change over time, and whether Earth’s own history has been unusually kind to evolution.

The article is Tarduno, “Earth’s magnetic dipole collapses, and life explodes,” Physics Today 78 (4) (2025), 26-33 (abstract).

TOI-270 d: The Clearest Look Yet at a Sub-Neptune Atmosphere

Sub-Neptune planets are going to be occupying scientists for a long time. They’re the most common type of planet yet discovered, and they have no counterpart in our own Solar System. The media buzz about K2-18b that we looked at last time focused solely on the possibility of a biosignature detection. But this world, and another that I’ll discuss in just a moment, have a significance that can’t be confined to life. Because whether or not what is happening in the atmosphere of K2-18b is a true biosignature, the presence of a transiting sub-Neptune relatively close to the Sun offers immense advantages in studying the atmosphere and composition of this entire category.

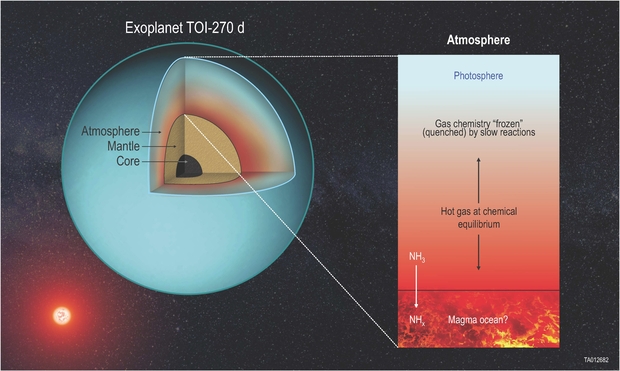

Are these ‘hycean’ worlds with global oceans beneath an atmosphere largely made up of hydrogen? It’s a possibility, but it appears that not all sub-Neptunes are the same. Helpfully, we have another nearby transiting sub-Neptune, a world known as TOI-270 d, which at 73 light years is even closer than K2-18b, and has in recent work from the Southwest Research Institute provided us with perhaps the clearest data yet on the atmosphere of such a world. This work may prompt re-thinking of both planets, for rather than oceans, we may be dealing with rocky surfaces under a hot atmosphere.

TOI-270 d exists within its star’s habitable zone. The primary here is a red dwarf in the constellation Pictor, about 40 percent as massive as the Sun. Three planets are known in the system, discovered by the TESS satellite by detection of their transits.

SwRI’s Christopher Glein is lead author of the paper on this work. He and his team are working with data from the James Webb Space Telescope, collected by Björn Benneke and reported in a 2024 paper that was startling in its detail (citation below). Seeing TOI-270 d as a possible “archetype of the overall population of sub-Neptunes,” the Benneke paper describes it as a planet in which the atmosphere is not largely hydrogen but enriched throughout, blending hydrogen and helium with heavier elements (the term is ‘miscible’) rather than formed with a stratified hydrogen layer at the top.

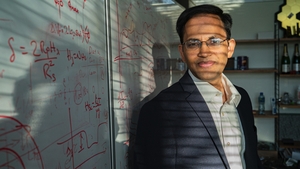

Image: SwRI’s Christopher Glein. Credit: Ian McKinney/SwRI.

Glein acknowledges the appeal of planets with the potential for life, the search for which drives much of the energy in exoplanet research. And the new data offer much to consider:

“The JWST data on TOI-270 d collected by Björn Benneke and his team are revolutionary. I was shocked by the level of detail they extracted from such a small exoplanet’s atmosphere, which provides an incredible opportunity to learn the story of a totally alien planet. With molecules like carbon dioxide, methane and water detected, we could start doing some geochemistry to learn how this unusual world formed.”

TOI-270 d, in other words, offers up plenty of detail, with carbon dioxide, methane and water readily detected, allowing as Glein notes the possibility of doing a geochemical analysis to delve into not just the atmosphere’s composition, but how this super-Earth formed in the first place. We have to begin with temperature, for the gases that showed up in the JWST data were at temperatures close to 550 degrees C. Hotter, in other words, than the surface of Venus, a fact that we need to reckon with if we’re holding out hope for global oceans. For at these temperatures gases do some interesting things.

Here the term is ‘equilibration process.’ At a certain level of the atmosphere, pressures and temperatures are high enough that gases reach chemical equilibrium – they become a stable mix. Going higher means both temperature and pressure drop, thus slowing reaction rates. But it is possible for gases to move upward faster than their chemical reactions can adjust to the change, which ‘freezes’ in the composition that was set at the equilibrium level. The mixture ‘quenches,’ in the terminology, and at that point the chemical ratios can no longer change. Finding out where this happens allows scientists to interpret what they see in data taken much higher in the atmosphere.
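The quenching idea can be illustrated with a toy comparison of two timescales: how long the chemistry needs to re-equilibrate at a given temperature, and how long the gas takes to be mixed upward. The sketch below is purely illustrative. The Arrhenius prefactor, activation energy, mixing time and temperature profile are all made-up numbers chosen to show the behavior, not values from the Glein paper, and the function names are hypothetical.

```python
import math

R_GAS = 8.314  # gas constant, J/(mol K)


def chemical_timescale(temp_k, prefactor_s=1e-9, activation_j_mol=250e3):
    """Toy Arrhenius timescale: reactions slow sharply as the gas cools.

    prefactor_s and activation_j_mol are illustrative assumptions.
    """
    return prefactor_s * math.exp(activation_j_mol / (R_GAS * temp_k))


def quench_temperature(temps_deep_to_shallow, mixing_time_s=1e5):
    """Walk upward from the deep atmosphere and return the first temperature
    at which chemistry is slower than mixing (the composition 'freezes')."""
    for temp_k in temps_deep_to_shallow:
        if chemical_timescale(temp_k) > mixing_time_s:
            return temp_k
    return None  # chemistry keeps up with mixing everywhere in this profile


# Hypothetical temperature profile in kelvin, hottest (deepest) level first.
profile = [1400, 1200, 1000, 800, 600]
print(quench_temperature(profile))
```

Below the returned level the gas stays in equilibrium; above it the ratios are locked in, which is why measurements made high in the atmosphere can carry information about conditions far deeper down.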

We are left with the chemical signature of the deep atmosphere where equilibrium occurs. We draw these inferences from the data taken by JWST from the upper atmosphere, offering a broader view of the atmosphere’s composition throughout.

The paper analyzes the balance between methane and carbon dioxide in terms of this quenching, as the relative amounts of the two gases become ‘frozen’ as they move upward. Working out where the balance would occur in a hydrogen-rich atmosphere allowed the team to work out that the freeze out occurred at temperatures between 885 K and 1112 K, with pressures ranging from one to 13 times Earth sea-level pressure. All this points to a thick, hot atmosphere, and one with a persistent conundrum.

For while models suggest that we should find ammonia in the atmosphere of temperate sub-Neptunes, it fails to appear. A nitrogen-poor atmosphere, the authors believe, is possibly the result of nitrogen being sequestered in a magma ocean. The speculation points to a world that is anything but hycean – no water oceans here! We may in fact be observing a planet with a thick atmosphere rich in hydrogen and helium that is well mixed with “metals” (elements heavier than helium), all of this over a rocky surface.

Image: An SwRI-led study modeled the chemistry of TOI-270 d, a nearby exoplanet between Earth and Neptune in size, finding evidence that it is a giant rocky world (super-Earth) surrounded by a deep, hot atmosphere. NASA’s JWST detected gases emanating from a region of the atmosphere over 530 degrees Celsius — hotter than the surface of Venus. The model illustrates a potential magma ocean removing ammonia (NH3) from the atmosphere. Hot gases then undergo an equilibration process and are lofted into the planet’s photosphere where JWST can detect them. Credit: SwRI / Christopher Glein.

The paper also notes the lack of carbon monoxide, explaining this by a model showing that CO would have frozen out even deeper in the atmosphere. Both modeling and data offer an explanation for TOI-270 d but also point to alternatives for K2-18 b. The modeling of the latter as an ocean world is but one explanation. Photochemical models show how difficult it is to produce and maintain enough methane under such conditions. Furthermore, K2-18 b likely receives too much stellar energy to maintain surface liquid water, due to greenhouse heating and limited atmospheric circulation. Thus the paper’s conclusion on K2-18b:

Because a revised deep-atmosphere scenario can accommodate depleted CO and NH3 abundances, the apparent absence of these species should no longer be taken as evidence against this type of scenario for TOI-270 d and similar planets, such as K2-18 b. Our results imply that the Hycean hypothesis is currently unnecessary to explain any data, although this does not preclude the existence of Hycean worlds.

This is a deep, rich analysis drawing plausible conclusions from clearer data than we had previously acquired from transiting sub-Neptunes. The question of water worlds under hydrogen atmospheres remains open, but the galvanizing nature of this paper is that it points to forms of analysis that until now we’ve been able to do only in our own Solar System. I think the authors are connecting dots in very useful ways here, pointing to the progress in exoplanetary science as we go ever deeper into atmospheres.

From the paper. The italics are mine:

Our overall philosophy was to develop modeling approaches that are rooted as much as possible in empirical experience. This experience includes fumaroles on Earth that constrain quench temperatures between redox species in hot gases, and making planets out of meteorites and cometary material to understand how different elements can reach different levels of enrichment in planetary atmospheres. Our approaches were simple, perhaps too simple in some cases if the goal is to accurately pinpoint the composition, present conditions, and history of the planet. If, instead, the goal is to suggest new ways of thinking about geochemistry on exoplanets that maintain focus on key variables and how they can be connected to observational data, as well as large-scale links between what we observe and how the atmosphere might have originated, then a different path to progress can be taken. The latter is the point of view we pursued.

The paper is Glein et al., “Deciphering Sub-Neptune Atmospheres: New Insights from Geochemical Models of TOI-270 d,” accepted at the Astrophysical Journal (preprint). The Benneke et al. paper is “JWST Reveals CH4, CO2, and H2O in a Metal-rich Miscible Atmosphere on a Two-Earth-Radius Exoplanet,” currently available as a preprint.

A Possible Biosignature at K2-18b?

As teams of researchers begin to detect molecules that could indicate the presence of life in the atmospheres of exoplanets, controversies will emerge. In the early stages, the method will be transmission spectroscopy, in which light from the star passes through the planet’s atmosphere as it transits the host. From the resulting spectra various deductions may be drawn. Thus oxygen (O₂), ozone (O₃), methane (CH₄), or nitrous oxide (N₂O) would be interesting, particularly in out of equilibrium situations where a particular gas would need to be replenished to continue to exist.

While we continue with the painstaking work of identifying potential biological markers — and there will be many — new findings will invariably become provocations to find abiotic explanations for them. Thus the recent flurry over K2-18b, a large (2.6 times Earth’s radius) sub-Neptune that, if not entirely gaseous, may be an example of what we are learning to call ‘hycean’ worlds. The term stands for ‘hydrogen-ocean.’ Think of endless ocean under an atmosphere predominantly composed of hydrogen. Now put it in the habitable zone.

Thus the interest in K2-18b, which appears to orbit within the habitable zone of its red dwarf host. Astronomers have known about water vapor here for some time, while JWST results in 2023 further indicated carbon dioxide and methane. On its 33-day transiting orbit, this is a planet made to order for spectral analysis of its atmosphere. Now we have new work that leans in the direction of a biological explanation for a possible biosignature, one that is tantalizing but clearly demands further investigation.

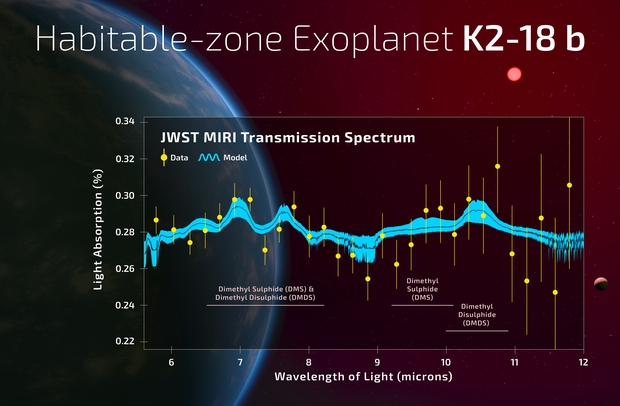

The biosignature, deduced by researchers at the University of Cambridge led by Nikku Madhusudhan, involves dimethyl sulfide (DMS) and/or dimethyl disulfide (DMDS). These are molecules that, at least on Earth, are produced only by life, most commonly by marine phytoplankton, photosynthetic organisms that play a large role in producing oxygen for our atmosphere. The detection, say the authors, is at the three-sigma level of statistical significance, which means a 0.3% probability that these results occurred by chance. Bear in mind that five-sigma is considered the threshold for scientific discovery (below a 0.00006% probability that the results are by chance). So as I say, we can call this intriguing but not definitive, a conclusion the authors support.
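For readers who want to check the sigma figures, here is a minimal sketch that converts a significance level into a chance probability. It assumes the usual convention of a two-sided Gaussian tail, which is my assumption about how the quoted percentages were derived; the function name is illustrative.

```python
import math


def two_sided_p_value(n_sigma):
    """Probability of a Gaussian fluctuation at least n_sigma from the mean,
    counting both tails."""
    return math.erfc(n_sigma / math.sqrt(2))


for n_sigma in (3, 5):
    p = two_sided_p_value(n_sigma)
    print(f"{n_sigma}-sigma: {100 * p:.7f}% chance probability")
```

Under that convention three-sigma comes out near 0.27%, which rounds to the 0.3% quoted above, and five-sigma falls just under 0.00006%.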

Image: Nikku Madhusudhan, who led the current work on K2-18b. Credit: University of Cambridge.

What excites the researchers about this work is that they first saw hints of DMS using the James Webb Space Telescope’s NIRISS (Near Infrared Imager and Slitless Spectrograph) and NIRSpec (Near-Infrared Spectrograph) instruments, but found further evidence using the observatory’s MIRI (Mid-Infrared Instrument) in the mid-infrared (6-12 micron) range. That’s significant because it produces an independent line of evidence using different instruments and different wavelengths. And in the words of Madhusudhan, “The signal came through strong and clear.”

But Madhusudhan said something else that has excited commentators. Because the concentrations of DMS and DMDS in K2-18b’s atmosphere are thousands of times stronger than what we see on Earth, the implication is that K2-18b may be a specific type of living planet:

“Earlier theoretical work had predicted that high levels of sulfur-based gases like DMS and DMDS are possible on Hycean worlds. And now we’ve observed it, in line with what was predicted. Given everything we know about this planet, a Hycean world with an ocean that is teeming with life is the scenario that best fits the data we have.”

Centauri Dreams readers will want to check Dave Moore’s Super-Earths/Hycean Worlds for more on this category. A hycean world is considered to be a water world with habitable surface conditions, and in earlier work, Madhusudhan and colleagues have noted that K2-18b could well maintain a habitable ocean beneath a hydrogen atmosphere. We have no analog to planets like this in our own system, but the category may be emerging as a place where conditions of temperature and atmospheric pressure may allow at least microbial life.

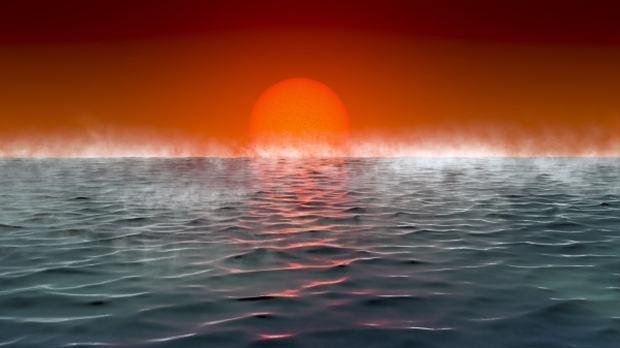

Image: Artist’s conception of the surface of a hycean planet. Credit: Amanda Smith, Nikku Madhusudhan.

So what is producing these chemical signatures? There may be reason for some optimism about a life detection but the possibility of unknown chemical processes remains alive, and thus will spawn further work both theoretical and experimental. And this is the problem for the entire landscape of remote biosignature detection. We’re going to be seeing a lot of interesting results as our instrumentation continues to improve, but at the level of uncertainty that will ensure debate and the need for further taking of data. This is going to be a long and I suspect frustrating process. Astrophysicists are going to be knocking heads at conferences for decades.

So this is an example of how the debate is going to be playing out at many levels. The evidence for biology will be sifted against possible abiotic processes. From the paper:

…both DMS and DMDS are highly reactive and have very short lifetimes in the above experiments (i.e., a few minutes) and in the Earth’s atmosphere (i.e., between a few hours to ∼1 day), due to various photochemical loss mechanisms (e.g. Seager et al. 2013b). Thus, the resulting DMS and DMDS mixing ratios in the current terrestrial atmosphere are quite small (typically ≲1 ppb), despite continual resupply by phytoplankton and other marine organisms…. sustaining DMS and/or DMDS at over 10-1000 ppm concentrations in steady state in the atmosphere of K2-18 b would be implausible without a significant biogenic flux. Moreover, the abiotic photochemical production of DMS in the above experiments requires an even greater abundance of H2S as the ultimate source of sulfur — a molecule that we do not detect — and requires relatively low levels of CO2 to curb DMS destruction (Reed et al. 2024), contrary to the high reported abundance of CO2 on K2-18 b (Madhusudhan et al. 2023b).

Image: The graph shows the observed transmission spectrum of the habitable zone exoplanet K2-18 b using the JWST MIRI spectrograph. The vertical shows the fraction of star light absorbed in the planet’s atmosphere due to molecules in the planet’s atmosphere. The data are shown in the yellow circles with the 1-sigma uncertainties. The curves show the model fits to the data, with the black curve showing the median fit and the cyan curves outlining the 1-sigma intervals of the model fits. The absorption features attributed to dimethyl sulphide and dimethyl disulphide are indicated by the horizontal lines and text. The image behind the graph is an illustration of a hycean planet orbiting a red dwarf star. Credit: A. Smith, N. Madhusudhan (University of Cambridge).

So there are reasons for optimism. We’ll keep taking such results apart, motivated by the unsparing self-criticism of the Cambridge team, which goes out of its way to scrutinize its findings for alternative explanations (a good lesson in scientific methodology here). Case in point: Madhusudhan and colleagues point out evidence for the presence of DMS on comet 67P/Churyumov-Gerasimenko, which could mean an abiotic source delivered by comets into the atmosphere. Because comets contain ices and gases that could be interpreted as biosignatures if found in a planet’s atmosphere, we’re again reminded of the need for caution. Even so, we can deflect this.

For at K2-18b, the atmosphere is massive compared to the trace gases that could be induced by cometary delivery, and the authors doubt that DMS and DMDS would survive in their present form during a hypervelocity comet impact. K2-18b just has too much DMS and DMDS, per these findings, to be accounted for by comets alone.

Detecting a biosignature will require accumulating more and more evidence, first to demonstrate the actual presence of the detected molecules and second to examine the possible abiotic photochemical ways of producing DMS and DMDS in an atmosphere like this. Madhusudhan cites this work as an opportunity for pursuing such investigations within a community of continuing research. No one is claiming we have detected life at K2-18b, but we’re getting a nudge in that direction that will be joined by quite a few other nudges as we probe alien atmospheres.

Not all these nudges point to the same things. For among papers discussing K2-18b, another is about to appear that questions whether it and another prominent sub-Neptune (TOI-270 d) are actually hycean worlds at all. This deep dive into sub-Neptune atmospherics, led by Christopher Glein at Southwest Research Institute, will be our subject next time. For before we can make the call on any hycean biosignature, we have to be sure that oceans are possible there in the first place.

The paper is Madhusudhan et al., “New Constraints on DMS and DMDS in the Atmosphere of K2-18 b from JWST MIRI,” accepted at Astrophysical Journal Letters (preprint).

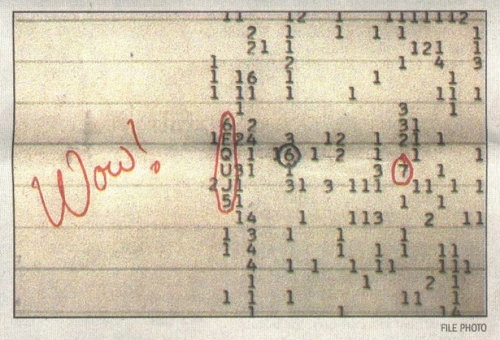

New Explanations for the Enigmatic Wow! Signal

The Wow! signal, a one-off detection at the Ohio State ‘Big Ear’ observatory in 1977, continues to perplex those scientists who refuse to stop investigating it. If the signal were terrestrial in origin, we have to explain how it appeared at 1.42 GHz, while the band from 1.4 to 1.427 GHz is protected internationally – no emissions allowed. Aircraft can be ruled out because they would not remain static in the sky; moreover, the Ohio State observatory had excellent RFI rejection. Jim Benford today discusses an idea he put forward several years ago, that the Wow signal could have originated in power beaming, which would necessarily sweep past us as it moved across the sky and never reappear. And a new candidate has emerged, as Jim explains, involving an entirely natural process. Are we ever going to be able to figure this signal out? Read on for the possibilities. A familiar figure in these pages, Jim is a highly regarded plasma physicist and CEO of Microwave Sciences, as well as being the author of High Power Microwaves, widely considered the gold standard in its field.

by James Benford

The 1977 Wow! signal had the potential of being the first signal from extraterrestrial intelligence. But searches for recurrence of the signal heard nothing. Interest continues, as two lines of thought continue to ponder it.

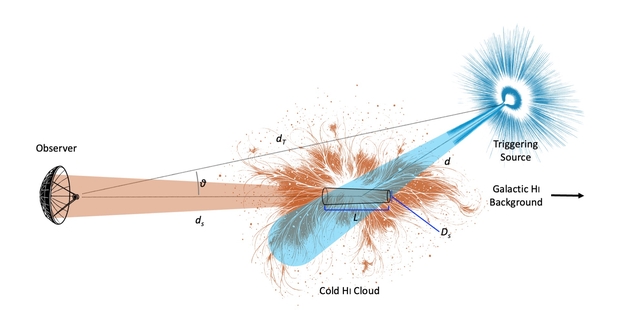

An Astronomical Maser

A recent paper proposes that the Wow! signal could be the first recorded event of an astronomical maser flare in the hydrogen line [1]. (A maser is a laser-like coherent emission at microwave frequencies. The maser was the precursor to the laser.) The Wow frequency was at the hyperfine transition line of neutral hydrogen, about 1.4 GHz. The suggestion is that the Wow was a sudden brightening from stimulated emission of the hydrogen line in interstellar gas driven by a transient radiation source behind a hydrogen cloud. The group is now going through archival data searching for other examples of abrupt brightening of the hydrogen line.

Maser Wow concept: A transient radiative source behind a cold neutral hydrogen (HI) cloud produced population inversion in the cloud near the hydrogen line, emitting a narrowband burst toward Earth [1].

Image courtesy of Abel Mendez (Planetary Habitability Laboratory, University of Puerto Rico at Arecibo).

Could aliens use the hydrogen clouds as beacons, triggered by their advanced technology? Abel Mendez has pointed out that this was suggested by Bob Dixon in a student’s thesis in 1976 [2]! From that thesis [3]:

“If it is a beacon built by astroengineering, such as an extraterrestrial civilization that is controlling the emission of a natural hydrogen cloud and using it as a beacon, then the only way that it could be ascertained as such, is by some time variation. And we are not set up to study time variation.”

How could such a beacon be built? It would require producing a population inversion in a substantial volume of neutral hydrogen. That might perhaps be done by an array of thermonuclear explosives optimized to produce a narrowband emission into such a volume [4]. Exploded simultaneously, they could produce that inversion, creating the pulse seen on Earth as the Wow.

Why does the Wow! Signal have narrow bandwidth?

In 2021, I published a suggestion that the enigmatic Wow Signal, detected in 1977, might credibly have been leakage from an interstellar power beam, perhaps from launch of an interstellar probe [5]. I used this leakage to explain the observed features of the Wow Signal: the power density received, the Signal’s duration and frequency. The power beaming explanation for the Wow accounted for all four of the Wow parameters, including the fact that the Wow observation has not recurred.

At the 2023 annual Breakthrough Discuss meeting, Mike Garrett of Jodrell Bank inquired “I was thinking about the Wow signal and your suggestion that it might be power beam leakage. But it’s not obvious to me why any technical civilization would limit their power beam to a narrow band of <= 10 kHz. Is there some kind of technical advantage to doing that or some kind of technical limitation that would produce such a narrow-band response?”

After thinking about it, I have concluded that there is ‘some kind of technical advantage’ to narrow bandwidth. In fact, it is required for high-power beaming systems.

Image: The Wow Signal was detected by Jerry Ehman at the Ohio State University Radio Observatory (known as the Big Ear). The signal, strong enough to elicit Ehman’s inscribed comment on the printout, was never repeated.

A Beamer Made of Amplifiers?

High power systems involving multiple sources are usually built using amplifiers not oscillators, for several technical reasons. For example, the Breakthrough Starshot system concept has multiple laser amplifiers driven by a master oscillator, a so-called master oscillator-power amplifier (MOPA) configuration. Amplifiers are themselves characterized by the product of amplifier gain (power out divided by power in) and bandwidth, which is fixed for a given type of device, their ‘gain-bandwidth product’. This product is due to phase and frequency desynchronization between the beam and electromagnetic field outside the frequency bandwidth [6].

Therefore, wide bandwidth means lower power per amplifier, and that means many more amplifiers. Conversely, to go to high power, each amplifier will have a small bandwidth. (The number of amplifiers is then determined by the total power required.) For power beaming applications, getting high power on target is essential, so high gain is required and small bandwidth follows.
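As a rough illustration of that trade-off, here is a minimal Python sketch. It assumes the simplest possible model, in which gain times fractional bandwidth equals a constant for a given type of device. The constant `GAIN_BANDWIDTH_PRODUCT` is a made-up value chosen only for illustration, not a figure for any real amplifier, and the function name is likewise hypothetical.

```python
# Illustrative only: assumes gain * fractional_bandwidth = constant.
# The constant below is hypothetical, not a measured device parameter.
GAIN_BANDWIDTH_PRODUCT = 10.0


def fractional_bandwidth(gain: float) -> float:
    """Fractional bandwidth left over at a given power gain (power out / power in)."""
    if gain <= 0:
        raise ValueError("gain must be positive")
    return GAIN_BANDWIDTH_PRODUCT / gain


if __name__ == "__main__":
    # Raising the gain by a factor of ten cuts the bandwidth by the same factor.
    for gain in (1e2, 1e3, 1e4, 1e5):
        print(f"gain {gain:>9,.0f} -> fractional bandwidth {fractional_bandwidth(gain):.0e}")
```

Whatever the real value of the product for a given device, the shape of the result is the same: every factor of ten in gain costs a factor of ten in bandwidth.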

So why do you get narrow bandwidth? You use very high gain amplifiers to essentially “eat up” the gain-bandwidth product. For example, in a klystron, you have multiple high-Q cavities that result in high gain. The high-gain SLAC-type klystrons had gains of about 100,000. Bandwidths for high power amplifiers on Earth are about one percent of one percent, 0.0001, or 10⁻⁴. The Wow! bandwidth is 10 kHz/1.42 GHz, about 10⁻⁵.
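The ratio quoted for the Wow! can be checked with a few lines of Python. This is only the arithmetic from the paragraph above, taking the bandwidth as the 10 kHz upper limit and the frequency as the hydrogen line at roughly 1.42 GHz. The variable names are my own.

```python
import math

WOW_BANDWIDTH_HZ = 10e3            # upper limit on the signal bandwidth, 10 kHz
WOW_FREQUENCY_HZ = 1.42e9          # hydrogen line, roughly 1.42 GHz
EARTH_AMPLIFIER_FRACTION = 1e-4    # "one percent of one percent"

# Fractional bandwidth is simply bandwidth divided by center frequency.
wow_fraction = WOW_BANDWIDTH_HZ / WOW_FREQUENCY_HZ
wow_order = round(math.log10(wow_fraction))

print(f"Wow! fractional bandwidth: {wow_fraction:.1e}")
print(f"nearest power of ten: 10^{wow_order}")
print(f"relative to terrestrial amplifiers: {wow_fraction / EARTH_AMPLIFIER_FRACTION:.2f}")
```

The point of the comparison is that the Wow! fractional bandwidth is about an order of magnitude narrower than what high power amplifiers on Earth deliver, which is the direction one would expect from still higher gain.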

So yes, the physics of amplifiers limits bandwidth in beacons and power beams because both would be built to provide very high power. So, with very high gain in the amplifiers, small bandwidth is the result.

This fact about amplifiers is another reason I think power beaming leakage is the explanation for the Wow. Earth could have accidentally received the beam leakage. Since stars constantly move relative to each other, later launches using the Wow! beam will not be seen from Earth.

I therefore predicted that the Wow! would not repeat, and each additional failed search for it is more evidence for this explanation.

The Wow search goes on

These two very different explanations for the origin of the Wow! have differing future prospects. I predicted that it wouldn’t be seen again, and each additional failed search for a repeat (and there have been many) is more evidence for that explanation. Mendez and coworkers are looking to see whether their process has occurred before; they can support their explanation by finding such occurrences in existing data. Only the Mendez concept can be tested soon.

References

1. Abel Mendez, Kevin Ortiz Ceballos, and Jorge I. Zuluaga, “Arecibo Wow! I: An Astrophysical Explanation for the Wow! Signal,” arXiv:2408.08513v2 [astro-ph.HE], 2024.

2. Abel Mendez, private communication.

3. Cole, D. M., “A Search for Extraterrestrial Radio Beacons at the Hydrogen Line,” Doctoral dissertation, Ohio State University, 1976.

4. Taylor, T., “Third generation nuclear weapons,” Sci. Am., 256, 4, 1987.

5. James Benford, “Was the Wow Signal Due to Power Beaming Leakage?”, JBIS 74, 196-200, 2021.

6. James Benford, Edl Schamiloglu, John A. Swegle, Jacob Stephens and Peng Zhang, Ch. 12 in High Power Microwaves, Fourth Edition, Taylor and Francis, Boca Raton, FL, 2024.

Into Titan’s Ocean

When it comes to oceans beneath the surface of icy moons, Europa is the usual suspect. Indeed, Europa Clipper should have much to say about the moon’s inner ocean when it arrives in 2030. But Titan, often examined for the possibility of unusual astrobiology, has an internal ocean too, beneath tens of kilometers of ice crust. The ice protects the mix of water and ammonia thought to be below, but may prove to be an impenetrable barrier for organic materials from the surface that might enrich it.

I’ve recently written about abused terms in astrobiological jargon, and in regard to Titan, the term is ‘Earth-like,’ which trips me up every time I run into it. True, this is a place where there is a substance (methane) that can appear in solid, liquid or gaseous form, and so we have rivers and lakes, and even clouds, that are reminiscent of our planet. But on Earth the cycle is hydrological, while Titan’s methane mixing with ethane blows up the ‘Earth-like’ description. For methane to play this role, we need surface temperatures averaging −179 °C. Titan is a truly alien place.

In Titan we have both an internal ocean world and a cloudy moon with an atmosphere thick enough to keep day/night variations to less than 2 °C (although the 16-Earth-day-long ‘day’ also stabilizes things). It’s a fascinating mix. We’ve only just begun to probe the prospects of life inside this world, a prospect driven home by new work just published in the Planetary Science Journal. Its analysis of Titan’s ocean as a possible home to biology doesn’t come up completely short, but it offers little hope that anything more than scattered microbes might exist within it.

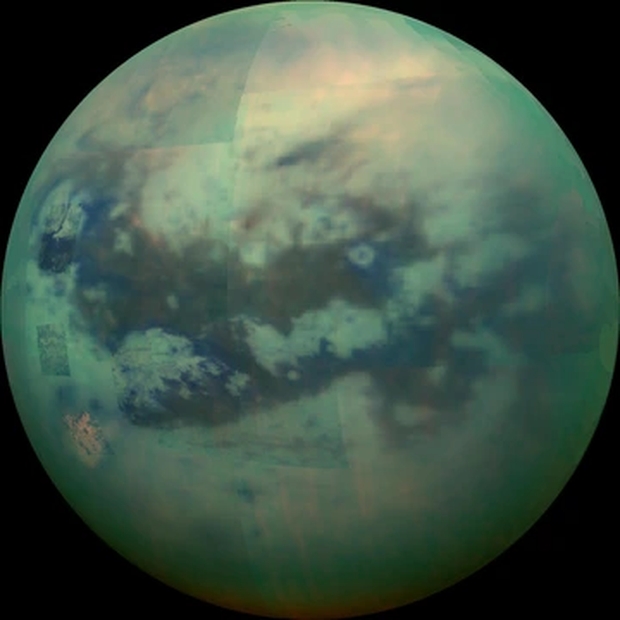

Image: This composite image shows an infrared view of Titan from NASA’s Cassini spacecraft, acquired during a high-altitude fly-by, 10,000 kilometers above the moon, on Nov. 13, 2015. The view features the parallel, dark, dune-filled regions named Fensal (to the north) and Aztlan (to the south). Credit: NASA/JPL/University of Arizona/University of Idaho.

The international team led by Antonin Affholder (University of Arizona) and Peter Higgins (Harvard University) looks at what makes Titan unique among icy moons: its high concentration of organic material. The idea here is to use bioenergetic modeling to look at Titan’s ocean. Perhaps as deep as 480 kilometers, the ocean can be modeled in terms of available chemicals and ambient conditions, factoring in energy sources (likely from chemical reactions) that could allow life to emerge. Ultimately, the models rely on what we know of the metabolism of Earth organisms, which is where we have to start.

It turns out that abundant organics – complex carbon-based molecules – may not be enough. Here’s Affholder on the matter:

“There has been this sense that because Titan has such abundant organics, there is no shortage of food sources that could sustain life. We point out that not all of these organic molecules may constitute food sources, the ocean is really big, and there’s limited exchange between the ocean and the surface, where all those organics are, so we argue for a more nuanced approach.”

Nuance is good, and one way to explore its particulars is through fermentation. The process, which demands organic molecules but does not require an oxidant, is at the core of the paper. Fermentation converts organic compounds into the energy needed to sustain life, and would seem to be suited for an anaerobic environment like Titan’s. With abiotic organic molecules abundant in Titan’s atmosphere and accumulating at the surface, a biosphere that can feed off this material in the ocean seems feasible. Glycine, the simplest of all known amino acids and an abundant constituent of matter in the early Solar System, serves as a useful proxy, as it is widely found in asteroids and comets and could sink through the icy shell, perhaps as a result of meteorite impacts.

The problem is that this supply source is likely meager. From the paper:

Sustained habitability… requires an ongoing delivery mechanism of organics to Titan’s ocean, through impacts transferring organic material from Titan’s surface or ongoing water–rock interactions from Titan’s core. The surface-to-ocean delivery rate of organics is likely too small to support a globally dense glycine-fermenting biosphere (<1 cell per kg water over the entire ocean). Thus, the prospects of detecting a biosphere at Titan would be limited if this hypothetical biosphere was based on glycine fermentation alone.

Image: This artist’s concept of a lake at the north pole of Saturn’s moon Titan illustrates raised rims and rampart-like features as seen by NASA’s Cassini spacecraft. Credit: NASA/JPL-Caltech.

More biomass may be available in local concentrations, perhaps at the interface of ocean and ice, and the authors acknowledge that other forms of metabolism may add to what glycine fermentation can produce. Thus what we have is a study using glycine as a ‘first-order approach’ to studying the habitability of Titan’s ocean, one which should be followed up by considering other fermentable molecules likely to be in the ocean and working out how they might be delivered and in what quantity. We still wind up with only approximate biomass estimates, but the early numbers are low.

The paper is Affholder et al., “The Viability of Glycine Fermentation in Titan’s Subsurface Ocean,” Planetary Science Journal Vol. 6, No. 4 (7 April 2025), 86.

On ‘Sun-like’ Stars

The thought of a planet orbiting a Sun-like star began to obsess me as a boy, when I realized how different all the planets in our Solar System were from each other. Clearly there were no civilizations on any planet but our own, at least around the Sun. But if Alpha Centauri had planets, then maybe one of them was more or less where Earth was in relation to its star. Meaning a benign climate, liquid water, and who knew, a flourishing culture of intelligent beings. So ran my thinking as a teenager, but then other questions began to arise.

Was Alpha Centauri Sun-like? Therein hangs a tale. As I began to read astronomy texts and realized how complicated the system was, the picture changed. Two stars and perhaps three, depending on how you viewed Proxima, were on offer here. ‘Sun-like’ seemed to imply a single star with stable orbits around it, but surely two stars as close as Centauri A and B would disrupt any worlds trying to form there. Later we would learn that stable orbits are indeed possible around both of these stars, and in the habitable zone, too (I still get emails from people saying no such orbits are possible, but they’re wrong).

So just how broad is the term ‘Sun-like’? One textbook told me that Centauri A was a Sun-like star, while Centauri B was sometimes described that way, and sometimes not. I thought the confusion would dissipate once I knew enough about astronomical terms, but if you look at scientific papers today, you’ll see that the term is still flexible. The problem is that by the time we move from a paper into the popular press, the term Sun-like begins to lose definition.

Image: A direct image of a planetary system around a young K-class star, located about 300 light-years away and known as TYC 8998-760-1. The news release describes this as a Sun-like star, raising questions as to how we define the term. Image credit: ESO.

G-class stars are the category into which the Sun fits, with its lifetime on the order of 10 billion years, and if we expand its parameters a bit, we can take in stars around the Sun’s mass (perhaps 0.8 to 1.1 solar masses) and in the same temperature range (5300 to 6000 K). Strictly speaking, then, Centauri B, which is a K-class star, doesn’t fit the definition of Sun-like, although K stars seem to be good options for habitable worlds. They’re less massive (0.45 to 0.8 solar masses), cooler (3900 to 5300 K) and more orange than yellow. They’re also longer lived than the Sun, a propitious thought in terms of astrobiology.
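For readers who like to see definitions pinned down, here is a small Python sketch of the strict reading of ‘Sun-like’ using only the G and K ranges quoted above. The boundaries are approximate, the function names are my own, and the Sun’s temperature is the commonly quoted effective temperature of about 5772 K. Treat it as one way of encoding the definition, not a standard classification scheme.

```python
# Mass and temperature ranges as quoted in the text; boundaries are approximate.
# The names below are illustrative, not taken from any catalog or library.
STAR_CLASSES = {
    "G": {"mass_solar": (0.8, 1.1), "temperature_k": (5300, 6000)},
    "K": {"mass_solar": (0.45, 0.8), "temperature_k": (3900, 5300)},
}


def classify_by_temperature(temperature_k: float) -> str:
    """Return 'G' or 'K' using the ranges above, or 'other' if neither fits."""
    for name, ranges in STAR_CLASSES.items():
        low, high = ranges["temperature_k"]
        # Half-open interval so a star at exactly 5300 K lands in one class only.
        if low <= temperature_k < high:
            return name
    return "other"


def is_sun_like(temperature_k: float, include_k: bool = False) -> bool:
    """Strict definition is G only; include_k=True gives a looser G plus K reading."""
    allowed = {"G", "K"} if include_k else {"G"}
    return classify_by_temperature(temperature_k) in allowed


if __name__ == "__main__":
    for temperature in (5772, 4500):  # the Sun, and a hypothetical cooler star
        print(temperature, classify_by_temperature(temperature), is_sun_like(temperature))
```

The `include_k` switch is the whole argument in miniature: the same star is or is not ‘Sun-like’ depending on a choice the writer may never state.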

So we don’t want to be too doctrinaire in discussing what kind of star a habitable planet might orbit, but we do need to mind our definitions, because I see the term ‘Sun-like’ in so many different contexts. When I see a statement like “one Earth-class planet around every Sun-like star,” I have to ask whether we’re talking about G-class stars or something else. Because some scientists expand ‘Sun-like’ to include not only G- and K- but F-class stars as well. Why do this? Such stars are, like the Sun, long-lived. F stars are hotter and more massive than the Sun, but like G- and K-class stars, they’re stable. Some studies, then, consider ‘Sun-like’ to mean all FGK-type stars.

Some examples. ‘COROT finds exoplanet orbiting Sun-like star’ is the title of a news release that describes a star a bit more massive than the Sun. So the comparison is to G-class stars. ‘Astronomy researchers discover new planet around a ‘Sun-like’ star’ describes a planet around an F-class star, so we are in the FGK realm. ‘First Ever Image of a Multi-Planet System around a Sun-like Star Captured by ESO Telescope’ describes a planet around TYC 8998-760-1, a K-class dwarf.

So there’s method here, but it’s not always clarified as information moves from the academy (and the observatory) into the media. Confounding the picture still further are those papers that use ‘Sun-like’ to mean all stars on the Main Sequence. This takes in the entire range of stars OBAFGKM. This is a rare usage, but there is a certain logic here as well. If you’re looking for habitable planets, it’s clear that stars in the most stable phase of their lives are the ones to examine, burning hydrogen to produce energy. No brown dwarfs here, but the category does take in M-class stars, and the jury is out on whether such stars can support life. And they take in the huge majority of stars in the galaxy.

So if we’re talking about hydrogen burning, the Main Sequence offers up everything from hot blue stars all the way down to cool red dwarfs. End the hydrogen burning and a different phase of stellar evolution begins, producing for example the kind of white dwarf that the Sun will one day become. A paper’s context usually makes it perfectly clear which of the three takes on ‘Sun-like’ it is using, but the need to clarify the term in news releases, particularly when dealing with a wide range through F-, G- and K-class stars, is evident.

All of this matters to the popular perception of what exoplanet researchers do because it wildly affects the numbers. G-class stars are thought to comprise about 7 percent of the stars in the galaxy, while K-class stars take in about 12 percent, and M-class dwarfs as high as 80 percent of the stellar population. Saying there is an Earth-class planet around every Sun-like star thus could mean ‘around 7 percent of the stars in the Milky Way.’ Or it could mean ‘around 22 percent of the stars in the Milky Way,’ if we mean FGK host stars.

If we included red dwarf stars, it could mean ‘around about 95 percent of the stars in the galaxy,’ excluding evolved, non-Main Sequence objects like white dwarfs, neutron stars and red giants. Everything depends upon how the terms are defined. I keep getting emails about this. My colleague Andrew Le Page is a stickler for terminology in the same way I am, with his most trenchant comments being reserved for too facile use of the term ‘habitable.’
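To see how far the choice of definition moves the numbers, here is a short Python sketch that compares the three readings of ‘Sun-like’ using the approximate percentages quoted above (7, 22 and 95 percent). The labels and the function name are my own, and the shares are rough figures, so the ratios are indicative at best.

```python
# Approximate shares of the galaxy's stars under each reading of 'Sun-like',
# as quoted in the text. These are rough figures, not precise census values.
SUN_LIKE_SHARE_PERCENT = {
    "G-class only": 7,
    "F-, G- and K-class": 22,
    "whole Main Sequence": 95,
}


def relative_to_strict(definition: str) -> float:
    """How many times larger a definition's share is than the G-only share."""
    return SUN_LIKE_SHARE_PERCENT[definition] / SUN_LIKE_SHARE_PERCENT["G-class only"]


if __name__ == "__main__":
    for definition, share in SUN_LIKE_SHARE_PERCENT.items():
        factor = relative_to_strict(definition)
        print(f"{definition:<20} {share:>3}% of stars, {factor:.1f}x the strict count")
```

On these figures the same headline sentence can refer to roughly three times, or more than thirteen times, as many stars as the strict G-class reading.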

So we’ve got to be careful in this burgeoning field. Exoplanet researchers are aware of the need to establish the meaning of ‘Sun-like’ carefully. The fact that the public’s interest in exoplanets is growing means, however, that in public utterances like news releases, scientists need to clarify what they’re talking about. It’s the same thing that makes the term ‘Earth-like’ so ambiguous. A planet as massive as the Earth? A planet that is rocky as opposed to gaseous? A planet in the habitable zone of its star? Is a planet on a wildly elliptical orbit crossing in and out of the habitable zone of an F-class host Earth-like?

Let’s watch our terms so we don’t confuse everybody who is not in the business of studying exoplanets full-time. The interface between professional journals and public venues like websites and newspapers is important because it can have effects on funding, which in today’s climate is a highly charged issue. A confused public is less likely to support studies in areas it does not understand.