Centauri Dreams

Imagining and Planning Interstellar Exploration

Comet Interceptor Could Snag an Interstellar Object

It pleases me to learn that Dutch astronomer Jan Oort was among the select group of people who have seen Halley’s Comet twice. At the age of 10, he saw it with his father on the shore at Noordwijk, Netherlands. In 1986, he saw it again from an aircraft. What a fine experience that would have been for a man who brought so much to the study of comets, including the idea that the Solar System is surrounded by a massive cloud of such objects in orbits far beyond those of the outer planets.

Image: Dutch astronomer Jan Oort, a pioneer in the study of radio astronomy and a major figure in mid-20th Century science. Credit: Wikimedia Commons CC BY-SA 3.0.

Halley’s Comet is a short-period object, roughly defined as a comet with an orbit of 200 years or less, and thus not a member of the Oort Cloud. But let’s linger on it for just a moment. The most famous person associated with two appearances of Halley’s Comet is Mark Twain, who was born in 1835 with the comet in the sky, and who sensed that its approach in 1910 would also mark his demise. As Twain put it:

I came in with Halley’s Comet… It is coming again … and I expect to go out with it… The Almighty has said, no doubt: ‘Now here are these two unaccountable freaks; they came in together, they must go out together.’

And so they did.

Edging in from the Oort

The Oort Cloud is an intriguing concept because by some accounts, it may extend halfway to the nearest star, meaning that it’s conceivable that the cometary cloud around the Sun nudges into a similar cloud around Centauri A/B, assuming there is one there. We use the Oort to explain the appearance of long-period comets, assuming that among these trillions of objects, a few are occasionally nudged out of their orbits and fall toward the Sun. The concept makes sense but observational data is sparse, as these dark objects are not directly observable until one of them moves inward.

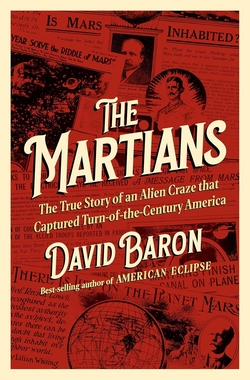

Image: The presumed distance of the Oort cloud compared to the rest of the Solar System. Credit: NASA / JPL-Caltech / R. Hurt.

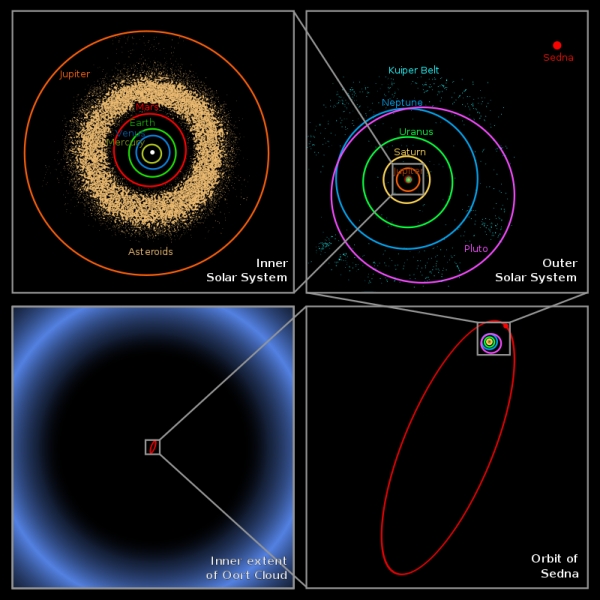

We’ve recently learned about a long-period comet with interesting properties indeed. C/2014 UN271 (Bernardinelli-Bernstein) is the object in question, named after the two astronomers who discovered it in Dark Energy Survey (DES) data at a heliocentric distance of 29 au. Recent work with the Hubble Space Telescope has determined that the object may be as much as 130 kilometers across, making it the largest nucleus ever seen in a comet. Moreover, we can assume that it’s not an aberration.

David Jewitt (UCLA) is a co-author of the paper on this work:

“This comet is the tip of the iceberg for many thousands of comets that are too faint to see in the more distant parts of the solar system. We’ve always suspected this comet had to be big because it is so bright at such a large distance. Now we confirm it is.”

Getting an accurate read on an object like this was no easy matter. At this distance from the Sun, the nucleus is too faint to be resolved even by the Hubble instrument, so Jewitt and team had to rely on data showing the spike of light where the nucleus was thought to be. Lead author Man-To Hui (Macau University of Science and Technology) led the development of a computer model of the surrounding coma, adjusting it to the Hubble data and then subtracting its glow, leaving behind the nucleus. Observations from the Atacama Large Millimeter/submillimeter Array (ALMA) confirmed its size and also made it clear that the nucleus is, as Jewitt puts it, “blacker than coal.”

Image: Sequence showing how the nucleus of Comet C/2014 UN271 (Bernardinelli-Bernstein) was isolated from a vast shell of dust and gas surrounding the solid icy nucleus. Credit: NASA, ESA, Man-To Hui (Macau University of Science and Technology), David Jewitt (UCLA). Image processing: Alyssa Pagan (STScI).

Intercepting a Comet

If long-period comets are difficult objects to study from Earth orbit, we may need to get up close with a spacecraft. It’s good to hear that the European Space Agency has approved the mission known as Comet Interceptor for construction, slotting it to fly in 2029 in the same launch that will carry the Ariel exoplanet finder into space. We’ve studied comets before, of course, including Halley’s, with notable success. But it’s obvious that short-period comets like Halley’s and Rosetta target 67P/Churyumov-Gerasimenko would have been changed by their long proximity to the inner Solar System. What will we find when we study a newly arriving Oort object?

Michael Küppers is an ESA scientist working on the Comet Interceptor mission:

“A comet on its first orbit around the Sun would contain unprocessed material from the dawn of the Solar System. Studying such an object and sampling this material will help us understand not only more about comets, but also how the Solar System formed and evolved over time.”

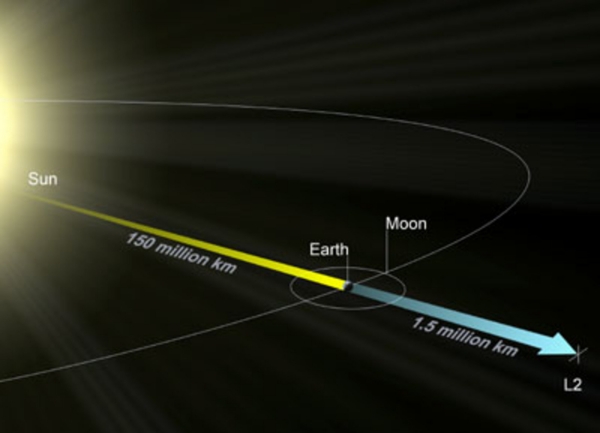

Both Ariel and Comet Interceptor will proceed to the L2 Lagrangian point 1.5 million kilometers from the Earth, where the latter will wait for a target, presumably an Oort object jostled inward by gravitational interactions. Here we rely on the fact that comets are often detected more than a year before they reach perihelion, a time too short to allow for the construction of a dedicated space mission. The plan is to make Comet Interceptor ready to move when the time comes, performing a flyby of the incoming object and releasing twin probes to build up a 3D profile of the comet.

Image: An illustration of the L2 point showing the distance between the L2 and the Sun, compared to the distance between Earth and the Sun. Credit: ESA.
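The 1.5 million kilometer figure for L2 can be checked with a back-of-the-envelope calculation. What follows is a minimal sketch, assuming the standard first-order (Hill radius) approximation for the distance of L1 and L2 from the smaller body; the function name and the rounded constants are my own choices for illustration, not anything taken from ESA's mission documents.

```python
# Rough estimate of the Sun-Earth L2 distance using the first-order
# (Hill radius) approximation: r ~ a * (m / (3 * M)) ** (1/3).
# Constants are rounded textbook values.
M_SUN = 1.989e30         # kg
M_EARTH = 5.972e24       # kg
AU_KM = 1.495978707e8    # km, mean Earth-Sun distance

def l2_distance_km(a_km=AU_KM, m_planet=M_EARTH, m_star=M_SUN):
    """Approximate distance from the planet to its L1/L2 points."""
    return a_km * (m_planet / (3.0 * m_star)) ** (1.0 / 3.0)

d = l2_distance_km()
print(f"L2 lies roughly {d / 1e6:.2f} million km from Earth")
```

The approximation ignores the small asymmetry between L1 and L2, but it lands close to the quoted 1.5 million kilometers.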

ESA will build the spacecraft and one of the two probes, the other being developed by the Japanese space agency JAXA. Given that over 100 comets are known to come close to Earth in their orbit around the Sun, along with the 29,000 asteroids cataloged so far, it will likewise be useful to have a better understanding of the composition of a pristine comet in case it ever becomes necessary to take action to avert an impact on Earth.

And if the target turns out to be an interstellar new arrival like ‘Oumuamua? So much the better. We should be finding more such newcomers shortly, given the success of the Pan-STARRS observatory and the development of the Large Synoptic Survey Telescope, now known as the Vera C. Rubin Observatory, under construction in Chile. Waiting in space for an Oort object or an interstellar comet means we won’t need to know the target in advance, but can adjust the mission as data become available. In any case, ESA is optimistic, saying Comet Interceptor “is expected to complete its mission within six years of launch.”

An ESA factsheet on Comet Interceptor can be found here. The paper on C/2014 UN271 (Bernardinelli-Bernstein) is Man-To Hui et al, “Hubble Space Telescope Detection of the Nucleus of Comet C/2014 UN271 (Bernardinelli-Bernstein),” Astrophysical Journal Letters Vol. 929, No. 1 (12 April 2022) L12 (abstract).

Europa: Catching Up with the Clipper

I get an eerie feeling when I look at spacecraft before they launch (not that I get many opportunities to do that, at least in person). But seeing the Spirit and Opportunity rovers on the ground at JPL just before their shipment to Florida was an experience that has stayed with me, as I pondered how something built by human hands would soon be exploring another world. I suppose the people who do these things at the Johns Hopkins Applied Physics Laboratory and the Jet Propulsion Laboratory itself get used to the feeling. For me, though, the old-fashioned ‘sense of wonder’ kicks in long and hard, as it did when Europa Clipper arrived recently at JPL.

Not that the spacecraft is by any means complete, but its main body has been delivered to the Pasadena site, where it will see final assembly and testing over a two-year period. Here I fall back on the specs to note that this is the largest NASA spacecraft ever designed for exploration of another planet. It’s about the size of an SUV when stowed for launch, but we know from the James Webb Space Telescope how large these things can become when fully deployed. In Europa Clipper’s case, the recently delivered main body is 3 meters tall and 1.5 meters wide. Extending the solar arrays and other deployable equipment takes it up to basketball court size.

Image: The main body of NASA’s Europa Clipper spacecraft has been delivered to the agency’s Jet Propulsion Laboratory in Southern California, where, over the next two years, engineers and technicians will finish assembling the craft by hand before testing it to make sure it can withstand the journey to Jupiter’s icy moon Europa. Here it is being unwrapped in a main clean room at JPL, as engineers and technicians inspect it just after delivery in early June 2022. Credit: NASA.

Eight antennas are involved, powered by a radio frequency subsystem that will service a high-gain antenna measuring three meters wide, and as JPL notes in a recent update, the electrical wires and connectors collectively called the ‘harness’ themselves weigh 68 kilograms. Stretch all that wiring out and you get 640 meters, taking us twice around a football field. The main body will include a fuel tank and an oxidizer tank connecting to an array of 24 engines. Tim Larson is JPL deputy project manager for Europa Clipper:

“Our engines are dual purpose. We use them for big maneuvers, including when we approach Jupiter and need a large burn to be captured in Jupiter’s orbit. But they’re also designed for smaller maneuvers to manage the attitude of the spacecraft and to fine tune the precision flybys of Europa and other solar system bodies along the way.”

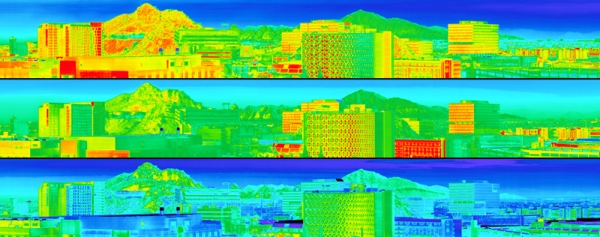

So what is arriving, or has arrived at JPL, is a spacecraft in pieces, its main body now joining key instruments like E-THEMIS, a thermal emission imaging system developed at Arizona State, and Europa-UVS, the mission’s ultraviolet spectrograph. E-THEMIS is an infrared camera that should give us insights into temperatures on the Jovian moon, and hence offer information about its geological activity. Given that we’re interested in finding places where liquid water is close to the surface, the data from this instrument should be extremely valuable during the spacecraft’s nearly fifty close passes.

The theory here is that as Europa’s surface cools after local sunset, the areas of the most solid ice will retain heat longer than areas with a looser, more granular texture. E-THEMIS will be able to map cooling rates across the surface. The infrared camera works in three heat-sensitive bands, and the warmer regions it should see may be the result of liquid water close to the surface, or possible impacts or convection activity. Not surprisingly, E-THEMIS lead project engineer Greg Mehall points to the radiation environment in Jupiter space as one of the team’s biggest issues:

“The extreme radiation environment at Europa gave far more design challenges for the ASU engineering team than on any previous instrument we’ve developed. We had to use dense shielding materials, such as copper-tungsten alloys, to provide the necessary protection from the expected radiation. And to ensure that E-THEMIS will survive during the mission, we also carried out radiation tests on the instrument’s electronic components and materials.”

Image: The thermal imager will use infrared light to distinguish warmer regions on Europa’s surface, where liquid water may be near the surface or might have erupted onto the surface. The thermal imager will also characterize surface texture to help scientists understand the small-scale properties of Europa’s surface. In the image above, we’re seeing a diurnal temperature color image from the first light test of Europa Clipper’s thermal imager (called E-THEMIS), taken from the rooftop of the Interdisciplinary Science and Technology Building 4 on the Tempe Campus of Arizona State University (ASU). The top image was acquired at 12:40 PM, the middle at 4:40 PM, and the bottom image at 6:20 PM (after sunset). Temperatures are approximations during this testing phase. Credit: ASU.

As to the Europa-UVS instrument, this ultraviolet spectrograph will search for water vapor plumes and study the composition of both the surface and the tenuous atmosphere as it uses an optical grating to spread and analyze light, identifying basic molecules like hydrogen, oxygen, hydroxide and carbon dioxide.

The spacecraft’s visible light imaging system (EIS) is going to upgrade those well-studied images from the Galileo mission enormously. The plan is to map 90 percent of the moon’s surface at 100 meters per pixel, which is six times more of Europa’s surface than Galileo, and at five times better resolution. And when Europa Clipper swings close to Europa during a flyby, it will produce images with a resolution fully 100 times better than Galileo. The Europa Imaging System includes both wide- and narrow-angle cameras, each with an eight-megapixel sensor. Both of these cameras will produce stereoscopic images and include the needed filters to acquire color images.

All told, the spacecraft’s nine science instruments should be able to extract information about the depth and salinity of the ocean under the ice and, crucially, the thickness of the ice crust (I can imagine wagers on that issue going around in certain quarters). Gathering information about the moon’s surface and interior should further illuminate the issue of plumes from the ocean below that may break through the ice.

Assembly, test and launch is a two-year phase that, by the end of this year, should see assembly of most of the flight hardware and the remaining science instruments. Kudos to JHU/APL, which has just delivered a flight system that is the largest ever built by engineers and technicians there. Now we look toward bolting on the radio frequency module, radiation monitors, power converters, the propulsion electronics and those hundreds of meters of wiring. Not to mention the electronics vault that must stand up to hard radiation.

The full instrument package will include an imaging spectrometer, ice-penetrating radar, a magnetometer, a plasma instrument, a mass spectrometer and a dust analyzer. Only two years and four months before launch onto a six-year journey of 2.9 billion kilometers. Europa Clipper isn’t a life-finder, but it does have the capability of detecting whether the moon’s ocean really does allow for the possibility of life to develop. It’s our first reconnaissance of Europa since the 1990s. What surprises will it reveal?

Bear in mind, too, that we still have ESA’s JUICE (JUpiter ICy moons Explorer) in the offing, with launch planned for 2023. I note with interest that on June 19, Europa will occult a distant star, which should be useful in tweaking our knowledge of the moon’s orbit before the arrival of both missions. Destined to end its life as a Ganymede orbiter, JUICE will make only two close passes of Europa, but its period of operations will coincide with part of Europa Clipper’s numerous flybys of the moon.

Solar Sailing: The Beauties of Diffraction

Knowing of Grover Swartzlander’s pioneering work on diffractive solar sails, I was not surprised to learn that Amber Dubill, who now takes the idea into a Phase III study for NIAC, worked under Swartzlander at the Rochester Institute of Technology. The Diffractive Solar Sailing project involves an infusion of $2 million over the next two years, with Dubill (JHU/APL) heading up a team that includes experts in traditional solar sailing as well as optics and metamaterials. A potential mission to place sails into a polar orbit around the Sun is one possible outcome.

[Addendum: The original article stated that the Phase III award was for $3 million. The correct amount is $2 million, as changed above].

But let’s fall back to that phrase ‘traditional solar sailing,’ which made me wince even as I wrote it. Solar sailing relies on the fact that while solar photons have no mass, they do impart momentum, enough to nudge a sail with a force that over time results in useful acceleration. Among those of us who follow interstellar concepts, such sails are well established in the catalog of propulsion possibilities, but to the general public, the idea retains its novelty. Sails fire the imagination: I’ve found that audiences love the idea of space missions with analogies to the magnificent clipper ships of old.
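To put a number on how gentle that nudge is, here is a minimal sketch of the radiation pressure on an ideal flat sail facing the Sun. It assumes the textbook relation that absorbed light delivers a pressure of I/c and perfectly reflected light twice that; the sail area and spacecraft mass are hypothetical values chosen only for illustration.

```python
# Radiation pressure on a flat sail facing the Sun.
# Absorbed photons transfer momentum I/c per unit area per second;
# perfectly reflected photons transfer twice that.
SOLAR_CONSTANT = 1361.0   # W/m^2 at 1 au
C = 299_792_458.0         # speed of light, m/s

def sail_force_newtons(area_m2, distance_au=1.0, reflectivity=1.0):
    """Force on a sun-facing sail; reflectivity=1.0 is an ideal mirror."""
    intensity = SOLAR_CONSTANT / distance_au ** 2   # inverse-square falloff
    pressure = (1.0 + reflectivity) * intensity / C
    return pressure * area_m2

area = 32.0   # m^2, hypothetical small sail
mass = 5.0    # kg, hypothetical spacecraft mass
force = sail_force_newtons(area)
accel = force / mass
dv_per_day = accel * 86_400.0   # seconds in a day

print(f"force = {force:.2e} N, delta-v per day = {dv_per_day:.1f} m/s")
```

A force of a few ten-thousandths of a newton sounds negligible, but because it never switches off, it accumulates to several meters per second of velocity change each day.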

We know the method works, as missions like Japan’s IKAROS and NASA’s NanoSail-D2 as well as the Planetary Society’s LightSail 2 have all demonstrated. Various sail missions – NEA Scout and Solar Cruiser stand out here – are in planning to push the technology forward. These designs are all reflective and depend upon the direction of sunlight, with sail designs that are large and as thin as possible. What the new NIAC work will examine is not reflection but diffraction, which involves how light bends or spreads as it encounters obstacles. Thus a sail can be built with small gratings embedded within the thin film of its structure, and the case Swartzlander has been making for some time now is that such sails would be more efficient.

A diffractive sail can work with incoming light at a variety of angles using new metamaterials, in this case ‘metafilms,’ that are man-made structures with properties unlike those of naturally occurring materials. Sails made of them can be essentially transparent, meaning they will not absorb large amounts of heat from the Sun, which could compromise sail substrates.

Moreover, these optical films allow for lower-mass sails that are steered by electro-optic methods as opposed to bulky mechanical systems. They can maintain more efficient positions while facing the Sun, which also makes them ideal for the use of embedded photovoltaic cells and the collection of solar power. Reflective sails need to be tilted to achieve best performance, but the inability to fly face-on in relation to the Sun reduces the solar flux upon the sail.
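The tilt penalty for a reflective sail can be made concrete with a simple model. This sketch assumes an ideal flat mirror, for which thrust acts along the sail normal and scales with the square of the cosine of the tilt angle (one factor for the reduced intercepted sunlight, one for the momentum change along the normal). It is an idealization for illustration, not a description of any particular sail design.

```python
import math

def reflective_sail_components(theta_deg):
    """Ideal flat mirror sail tilted theta degrees away from sun-facing.

    Returns (radial, transverse) thrust in units of the face-on thrust.
    """
    t = math.radians(theta_deg)
    magnitude = math.cos(t) ** 2   # total thrust relative to face-on
    return magnitude * math.cos(t), magnitude * math.sin(t)

# Search whole-degree tilts for the largest sideways (steering) thrust.
best_angle = max(range(0, 91), key=lambda a: reflective_sail_components(a)[1])
radial, transverse = reflective_sail_components(best_angle)
thrust_fraction = math.cos(math.radians(best_angle)) ** 2

print(best_angle, round(transverse, 3), round(thrust_fraction, 3))
```

In this model the sideways thrust that changes an orbit peaks at a tilt of about 35 degrees, at which point the sail delivers only about two thirds of its face-on thrust. That is the trade-off a sun-facing diffractive sail is meant to avoid.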

The Phase III work for NIAC will take Dubill and team all the way from further analyzing the properties of diffractive sails into development of an actual mission concept involving multiple spacecraft that can collectively monitor solar activity, while also demonstrating and fine-tuning the sail strategy. The description of this work on the NIAC site explains the idea:

The innovative use of diffracted rather than reflected sunlight affords a higher efficiency sun-facing sail with multiplier effects: smaller sail, less complex guidance, navigation, and attitude control schemes, reduced power, and non-spinning bus. Further, propulsion enhancements are possible by the reduction of sailcraft mass via the combined use of passive and active (e.g., switchable) diffractive elements. We propose circumnavigating the sun with a constellation of diffractive solar sails to provide full 4π (e.g., high inclination) measurements of the solar corona and surface magnetic fields. Mission data will significantly advance heliophysics science, and moreover, lengthen space weather forecast times, safeguarding world and space economies from solar anomalies.

Delightfully, a sail like this would not present the shiny silver surface of the popular imagination but would instead create a holographic effect that Dubill’s team likens to the rainbow appearance of a CD held up to the Sun. And they need not be limited to solar power. Metamaterials are under active study by Breakthrough Starshot because they can be adapted for laser-based propulsion, which Starshot wants to use to reach nearby stars through a fleet of small sails and tiny payloads. The choice of sail materials that can survive the intense beam of a ground-based laser installation and the huge acceleration involved is crucial.

The diffractive sail concept has already been through several iterations at NIAC, with the testing of different types of sail materials. Grover Swartzlander received a Phase I grant in 2018, followed by a Phase II in 2019 to pursue the work, a needed infusion of funding given that before 2017, few papers on diffractive space sails existed in the literature. In a 2021 paper, Dubill and Swartzlander went into detail on the idea of a constellation of sails monitoring solar activity. From the paper:

We have proposed launching a constellation of satellites throughout the year to build up a full-coverage solar observatory system. For example a constellation of 12 satellites could be positioned at 0.32 AU and at various inclinations about the sun within 6 years: Eight at various orbits inclined by 60° and four distributed about the solar ecliptic. We know of no conventionally powered spacecraft that can readily achieve this type of orbit in such a short time frame. Based on our analysis, we find that diffractive solar sails provide a rapid and cost-effective multi-view option for investigating heliophysics.
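To get a feel for the 0.32 AU orbit mentioned in that passage, here is a minimal sketch using Kepler's third law and the inverse-square law for sunlight. It assumes a circular heliocentric orbit and a spacecraft of negligible mass; the function names are my own and the numbers are illustrative, not drawn from the paper.

```python
def orbital_period_days(a_au):
    """Kepler's third law for a small body orbiting the Sun:
    period in years equals the semi-major axis in AU to the power 1.5."""
    return 365.25 * a_au ** 1.5

def sunlight_relative_to_earth(a_au):
    """Solar flux at distance a_au, relative to the flux at 1 AU."""
    return 1.0 / a_au ** 2

period = orbital_period_days(0.32)
flux = sunlight_relative_to_earth(0.32)

print(f"period = {period:.0f} days, sunlight = {flux:.1f} times Earth's")
```

A sail at that distance would circle the Sun in a little over two months while receiving nearly ten times the sunlight available at Earth, which works in favor of any light-driven propulsion scheme.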

Image: The new Diffractive Solar Sailing concept uses light diffraction to more efficiently take advantage of sunlight for propulsion without sacrificing maneuverability. Incidentally, this approach also produces an iridescent visual effect. Credit: RIT/MacKenzi Martin.

Dubill thinks an early mission involving diffractive sails can quickly prove their value:

“While this technology can improve a multitude of mission architectures, it is poised to significantly impact the heliophysics community’s need for unique solar observation capabilities. Through expanding the diffractive sail design and developing the overall sailcraft concept, the goal is to lay the groundwork for a future demonstration mission using diffractive lightsail technology.”

A useful backgrounder on diffractive sails and their potential use in missions to the Sun is Amber Dubill’s thesis at RIT, “Attitude Control for Circumnavigating the Sun with Diffractive Solar Sails” (2020), available through RIT Scholar Works. See also Dubill & Swartzlander, “Circumnavigating the sun with diffractive solar sails,” Acta Astronautica Volume 187 (October 2021), pp. 190-195 (full text). Grover Swartzlander’s presentation “Diffractive Light Sails and Beam Riders,” is available on YouTube.

Venus Life Finder: Scooping Big Science

I’ve maintained for years that the first discovery of life beyond Earth, assuming we make one, will be in an extrasolar planetary system, through close and eventually unambiguous analysis of an exoplanet’s atmosphere. But Alex Tolley has other thoughts. In the essay below, he looks at a privately funded plan to send multiple probes into the clouds of Venus in search of organisms that can survive the dire conditions there. And while missions this close to home don’t usually occupy us because of Centauri Dreams’ deep space focus, Venus is emerging as a prominent exception, given recent findings about anomalous chemistry in its atmosphere. Are the clouds of Venus concealing an ecosystem this close to home?

by Alex Tolley

Introduction

The discovery of phosphine (PH3), an almost unambiguous biosignature on Earth, in the clouds of Venus in 2021 increased interest in reinvestigating the planet’s clouds for life, a scientific goal that had been on hiatus since the last atmospheric entry and lander vehicle mission, Vega-2 in 1984. While the recent primary target for life discovery has been Mars, whether extinct, or extant in the subsurface, it has taken nearly half a century since the Viking landers to once again look directly for Martian life with the Perseverance rover.

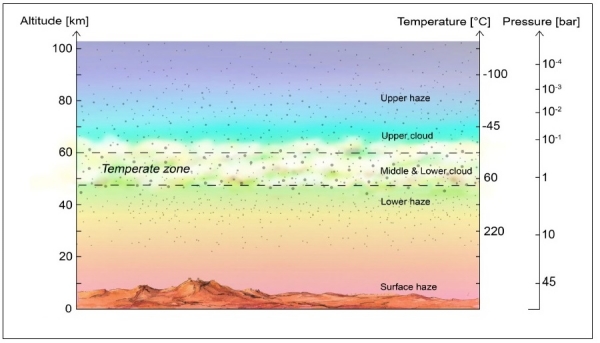

However, if the PH3 discovery is real (and it is supported by a reanalysis of the Pioneer Venus probe data), then maybe we have been looking at the wrong planet. The temperate zone in the Venusian clouds is the nearest habitable zone to Earth. If life does exist there [see Figure 1] despite the presence of concentrated sulfuric acid (H2SO4), then it is likely to be in this temperate zone layer, having evolved to live in such conditions.

Figure 1. Schematic of Venus’ atmosphere. The cloud cover on Venus is permanent and continuous, with the middle and lower cloud layers at temperatures that are suitable for life. The clouds extend from altitudes of approximately 48 km to 70 km. Credit: J Petkowska.

But why launch a private mission, rather than leave it to a well-funded, national one?

National space agencies haven’t been totally idle. There are four planned missions to investigate Venus: two by NASA (DAVINCI+, VERITAS) and one by ESA (EnVision), all due to be launched around 2030, as well as a Russian one (Venera-D) to be launched at the same time:

VERITAS and DAVINCI+ are both Discovery-class missions. They are budgeted up to $500 million each. EnVision is ESA’s mission launching in the same timeframe. All three missions have target launch dates ranging from 2028 (DAVINCI+, VERITAS) to 2031 (EnVision). As with any large budget mission, these missions have taken a long time to develop. DAVINCI was proposed in 2015, the revised DAVINCI+ proposed again in 2019, and selected in 2021 for a 2028 launch. VERITAS was proposed in 2015, and selected only in 2021. Then there are the seven years of development, testing, and finally launch in 2028. EnVision was selected in 2021, and faces a decade before launch [5,6,7].

DAVINCI+’s goals include:

1. Understanding the evolution of the atmosphere

2. Investigating the possibility of an early ocean

3. Returning high resolution images of the geology to determine if plate tectonics ever existed.

[PG note: NASA GSFC just posted a helpful overview of this mission.]

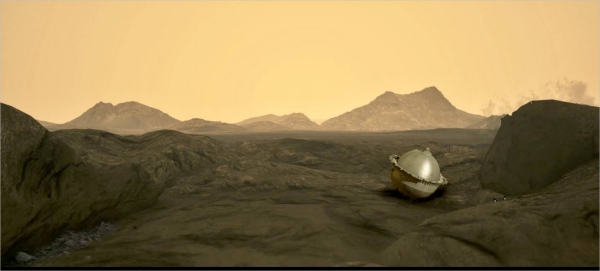

Image: The Deep Atmosphere Venus Investigation of Noble gases, Chemistry, and Imaging (DAVINCI) mission, which will descend through the layered Venus atmosphere to the surface of the planet in mid-2031. DAVINCI is the first mission to study Venus using both spacecraft flybys and a descent probe. Credit: NASA.

VERITAS’s rather similar goals involve answering these questions:

1. How has the geology of Venus evolved over time?

2. What geologic processes are currently operating on it?

3. Has water been present on or near its surface?

EnVision’s goals include:

1. Determining the level and nature of current activity

2. Determining the sequence of geological events that generated its range of surface features

3. Assessing whether Venus once had oceans or was hospitable for life

4. Understanding the organizing geodynamic framework that controls the release of internal heat over the history of the planet

In addition, Russia has the Venera-D mission planned for a 2029 launch that has a lander. One of its goals is to analyze the chemical composition of the cloud aerosols. [8]

There is considerable overlap in the science goals of the four missions, and notably none have the search for life as a science goal, although the 3rd EnVision science goal could be the preparatory “follow the water” approach before a follow-up mission to search for life if there is evidence that Venus did once have oceans.

As with the Mars missions post Viking up to Perseverance, none of these missions is intended to look directly for life itself. Given the 2021 selection date for all three missions and the end of decade launch dates, it will be somewhat frustrating for scientists interested in searching for life on Venus.

Cutting through the slow progress of the national missions, the privately funded Venus Life Finder mission aims to start the search directly. The mission to look for life is focused on small instruments and a low-cost launcher. Not just one but a series of missions is planned, each increasing in capability. The first is intended to launch in 2023, and if the three anticipated missions are successful, Venus Life Finder would scoop the big science missions in being the first to detect life on Venus, should it exist.

Some history of our views about Venus

Before the space age, both Venus and Mars were thought to have life. Mars stood out because of the seasonal dark areas and Schiaparelli’s observation of channels, followed by Lowell’s interpretation of these channels as canals, which carried the implication of intelligence. Von Braun’s “Mars Projekt” (1952) inferred that the atmosphere was thin, but the astronauts would just need O2 masks, and his technical tale had the astronauts discover an advanced Martian civilization. The popular science book “The Exploration of Mars” (1956) written by Willy Ley and Wernher Von Braun and illustrated by Chesley Bonestell, supported the idea of Martian vegetation, speculating that it was likely to be something along the lines of hardy terrestrial lichens.

Unlike Mars, the surface of Venus was not observable, just the dense permanent cloud cover. It was believed that Venus was younger than Earth and that the clouds covered a primeval swamp full of animals like those in our planet’s past. With the many probes starting in 1962 with the successful flyby of Mariner 2, it was determined that the surface of Venus was a hellish 438-482 C (820-900 degrees F), by far the hottest place in the Solar System. Worse, the clouds were not water as on Earth, but H2SO4, in a concentration that would rapidly destroy terrestrial life. Seemingly Venus was lifeless.

Some scientists thought Venus was much more Earth-like in the past, and that a runaway greenhouse state accounted for its current condition. If Venus was more Earth-like, there could have been oceans, and with them, life. On Earth, bacteria are carried up from the surface by air currents and have been found living in clouds and are part of the cloud formation process. Bacteria have been found in Earth’s stratosphere too. Bacteria living in the Venusian oceans would likely have been carried up into the atmosphere and occupy a similar habitat. If so, it has been hypothesized that bacterial life may have evolved to live in the increasingly acidic Venusian clouds just as terrestrial extreme acidophiles have evolved, and that this life is the source of the detected PH3.

The First Science Instrument

Is there any other evidence for life on Venus? Using two instruments, a particle size spectrometer and a nephelometer, the Pioneer Venus probe (1978) suggested that some tiny droplets in the clouds were not spherical, as physics would predict, and therefore might be living [unicellular] organisms.

But these probes could not resolve some anomalies of the Venusian atmosphere that might, as a whole, indicate life:

1. Anomalous UV Absorber – spatial and temporal variability reminiscent of algal blooms.

2. Non-spherical large droplets – possible cells

3. Non-volatile elements such as phosphorus, a required element of terrestrial life, that could reduce the H2SO4 concentration

4. Gases in disequilibrium, including PH3, NH3

Enter the Venus Life Finder (VLF) team, led by Principal Investigator Sara Seager, whose team includes the noted Venus expert David Grinspoon. The project is funded by Breakthrough Initiatives. The initial idea was to do some laboratory experiments to determine if the assumptions about possible life in the clouds were valid.

As the VLF document states up front:

The concept of life in the Venus clouds is not new, having been around for over half a century. What is new is the opportunity to search for life or signs of life directly in the Venus atmosphere with scientific instrumentation that is both significantly more technologically advanced and greatly miniaturized since the last direct in situ probes to Venus’ atmosphere in the 1980s.

The big objection to life in the Venusian clouds is their composition: extremely concentrated sulfuric acid. Any terrestrial organism subjected to the acid is dissolved. [There is a reason serial killers use this method to remove evidence of their victims!]

To check on the constraints of cloud conditions on potential life and the ability to detect organic molecules, the VLF team conducted some experiments that showed that:

1. Organic molecules will autofluoresce in up to 70% H2SO4. Therefore organic molecules are detectable in the Venusian cloud droplets.

2. Lipids will form micelles in up to 70% H2SO4 and are detectable. Cell membranes are therefore possible containers for biological processes.

3. Terrestrial macromolecules – proteins, sugars, and nucleic acids – all rapidly become denatured in H2SO4, ruling out false positives from terrestrial contamination

4. The Miller-Urey experiment will form organic molecules in H2SO4. Therefore abiogenesis of precursor molecules is also possible on Venus.

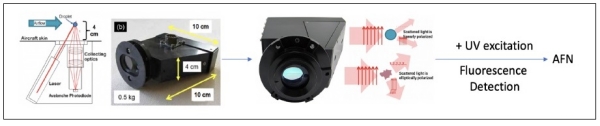

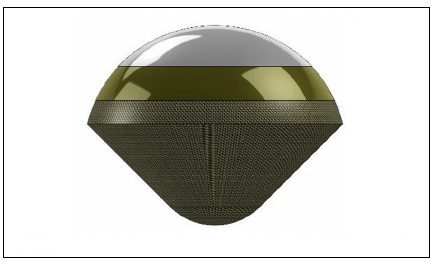

With these results, the team focused on building a single instrument to investigate both the shapes of particles and the presence of organic compounds. Non-spherical droplet shapes containing organic compounds would be a possible indication of life. This instrument, an Autofluorescing Nephelometer, is being developed from an existing instrument, as shown in figure 2.

Figure 2. Evolution of the Autofluorescing Nephelometer (AFN) from the Backscatter Cloud Probe (BCP) (left of arrow) to the Backscatter Cloud Probe with Polarization Detection (BCPD) (right of arrow). The BCPD is further evolved to the AFN by replacement of the BCPD laser with a UV source and addition of fluorescence-detection compatible optics.

All this in a package of just 1 kg to be carried in the atmosphere entry vehicle.

Reaching Venus

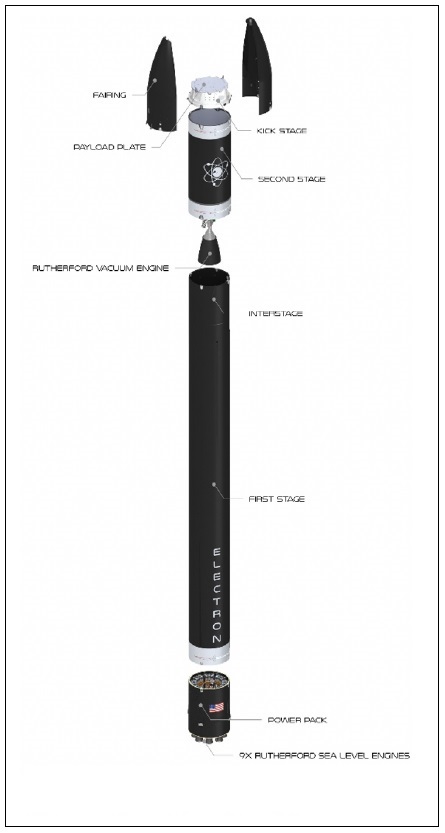

The VLF team has partnered with the New Space company Rocket Lab, which is developing its own Venus mission. The company has small launchers that are marketed to orbit tiny satellites for organizations that don’t want to use piggy-backed rides with other satellites as part of a large payload. Its Electron rocket launcher has so far racked up successes. The Electron can place up to 300 kg in LEO.

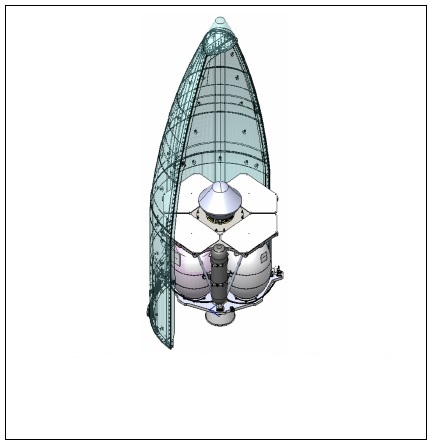

For the Venus mission, the payload includes the Photon rocket to make the interplanetary flight and deliver a 20 kg Venus atmosphere entry probe that includes the 1 kg AFN science package. To reach Venus, the Photon rocket using bi-propellant generates the needed 4 km/s delta V. It employs multiple Oberth maneuvers in LEO to most efficiently raise the orbit’s apogee until it is on an escape trajectory to Venus. Travel time is several months.
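To get a feel for what a 4 km/s delta V demands of a small stage, here is a minimal sketch using the ideal (Tsiolkovsky) rocket equation. The specific impulse values are assumptions chosen as plausible for a storable bi-propellant engine; they are not published Photon figures, and the function names are hypothetical.

```python
import math

G0 = 9.80665  # standard gravity in m/s^2, used to convert Isp to exhaust velocity


def mass_ratio(delta_v_ms, isp_s):
    """Ideal rocket equation: initial mass / final mass for a given delta-v."""
    return math.exp(delta_v_ms / (isp_s * G0))


def propellant_fraction(delta_v_ms, isp_s):
    """Fraction of the initial (wet) mass that must be propellant."""
    return 1.0 - 1.0 / mass_ratio(delta_v_ms, isp_s)


if __name__ == "__main__":
    delta_v = 4000.0  # m/s, the figure quoted in the text
    # ASSUMPTION: illustrative Isp values, not Rocket Lab specifications.
    for isp in (290.0, 310.0, 330.0):
        frac = propellant_fraction(delta_v, isp)
        print(f"Isp {isp:.0f} s -> propellant is {frac:.0%} of wet mass")
```

Under these assumptions roughly seven tenths of the departing mass is propellant, which is why the entry probe and its instrument have to be so small. The calculation ignores gravity losses and the staging of the burns across several perigee passes.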

The Electron rocket, the Photon rocket, and the entry probe are shown in the next three figures.

The Photon rocket powers the cruise phase from LEO to Venus intercept. This rocket uses an unspecified hypergolic fuel and will carry the entry probe across the 60 million km trajectory of its 3-month Venus mission.

Figure 3a. Electron small launch vehicle. The Electron ELV has successfully launched 146 satellite missions to date for a low per launch cost. A recent test of a helicopter retrieval of the 1st stage indicates that reusability is possible using this in-flight capture approach, therefore potentially saving costs. The kick stage in the image is replaced by the Photon rocket for interplanetary flight.

Figure 3b. High-energy Photon rocket and Venus entry probe.

Figure 3c. The small Venus probe is a 45-degree half-angle cone approximately 0.2m in diameter. Credit: NASA ARC.

Fast and Cheap

The cost of the mission to Venus is not publicized, but we know the cost of a launch of the Electron rocket to LEO is $7.5m [12]. Add the Photon cruise stage, the entry probe, the science instruments, the operations and science teams. All in, a fraction of the Discovery mission costs, but with a faster payback and more focussed science. Rocket Lab has not launched an interplanetary mission before, so there is risk of failure. The company does have other interplanetary plans, including a Mars orbiter mission in 2024 using two Photon cruise stages.

Is the Past the Future?

The small probe and dedicated instrument package, while contrasting with the big science missions of the national programs, harkens back to the early scientific exploration of space at the beginning of the space age. The smaller experimental rockets had limited launch capacity and the scientific payloads had to be small. Some examples include the Pioneer 4 lunar probe [11] and the Explorer series [10].

These relatively simple early experiments resulted in some very important discoveries. The lunar flyby Pioneer 4, launched in 1959, massed just 6.67 kg, with a diameter of just 0.23 m, a size comparable to the VLF’s first mission [11]. These early missions could be launched with some frequency, each probe or satellite containing specific instruments for the scientific goal. Today with miniaturization, instruments can be made smaller and controlled with computers, allowing more sophisticated measurements and onboard data analysis. Miniaturization continues, especially in electronics.

Breakthrough StarShot’s interstellar concept aims to have a 1-gram sail with onboard computer, sensors, and communications, increasing capabilities, reducing costs, and multiplying the numbers of such probes. With private funding now equaling that of the early space age experiments, and the lower costs of access to space, there has been a flowering of the technology and range of such private space experiments. The VLF mission is an exemplar of the possibilities of dedicated scientific interplanetary missions bypassing the need to be part of “big science” missions.

Just possibly, this VLF series of missions will return results from Venus’ atmosphere that show the first evidence of extraterrestrial life in our system. Such a success would be a scoop with significant ramifications.

References

1. Seager S, et al. “Venus Life Finder Study” (2021), web accessed 02/18/2022, https://venuscloudlife.com/venus-life-finder-mission-study/

2. Clarke A The Exploration of Space (1951), Temple Press Ltd

3. Ley, W, Von Braun W, Bonestell C The Exploration of Mars (1956), Sidgwick & Jackson

4. Rocket Lab “Electron Rocket”, web accessed 02/18/2022, https://www.rocketlabusa.com/launch/electron/

5. Wikipedia “List of missions to Venus” en.wikipedia.org/wiki/List_of_missions_to_Venus

6. Wikipedia “DAVINCI” en.wikipedia.org/wiki/List_of_missions_to_Venus

7. Wikipedia “VERITAS” en.wikipedia.org/wiki/VERITAS_(spacecraft)

8. Wikipedia “EnVision” en.wikipedia.org/wiki/EnVision

9. Wikipedia “Venera-D” en.wikipedia.org/wiki/Venera-D

10. LePage, A, “Vintage Micro: The Second-Generation Explorer Satellites” (2015) www.drewexmachina.com/2015/09/03/vintage-micro-the-second-generation-explorer-satellites/

11. LePage, A, “Vintage Micro: The Pioneer 4 Lunar Probe” (2014) www.drewexmachina.com/2014/08/02/vintage-micro-the-pioneer-4-lunar-probe/

12. Wikipedia “Rocket Lab Electron”, en.wikipedia.org/wiki/Rocket_Lab_Electron

A Radium Age Take on the ‘Wait Equation’

If you’ll check Project Gutenberg, you’ll find Bernhard Kellermann’s novel The Tunnel. Published in 1913 by the German house S. Fischer Verlag and available on Gutenberg only in its native tongue (finding it in English is a bit more problematic, although I’ve seen it on offer from online booksellers occasionally), the novel comes from an era when the ‘scientific romance’ was yielding to an engineering-fueled uneasiness with what technology was doing to social norms.

Kellermann was a poet and novelist whose improbable literary hit in 1913, one of several in his career, was a science fiction tale about a tunnel so long and deep that it linked the United States with Europe. It was written at a time when his name was well established among readers throughout central Europe. His 1908 novel Ingeborg saw 131 printings in its first thirty years, so this was a man often discussed in the coffee houses of Berlin and Vienna.

Image: Bernhard Kellermann, author of The Tunnel and other popular novels as well as poetry and journalistic essays. Credit: Deutsche Fotothek of the Saxon State Library / State and University Library Dresden (SLUB).

The Tunnel sold 10,000 copies in its first four weeks, and by six months later had hit 100,000, becoming the biggest bestseller in Germany in 1913. It would eventually appear in 25 languages and sell over a million copies. By way of comparison – and a note about the vagaries of fame and fortune – Thomas Mann’s Death in Venice, also published that year, sold 18,000 copies for the whole year, and needed until the 1930s to reach the 100,000 mark. Short-term advantage: Kellermann.

I mention this now obscure novel for a couple of reasons. For one thing, it’s science fiction in an era before popular magazines filled with the stuff had begun to emerge to fuel the public imagination. This is the so-called ‘radium age,’ recently designated as such by Joshua Glenn, whose series for MIT Press reprints works from the period.

We might define an earlier era of science fiction, one beginning with the work of, say, Mary Wollstonecraft Shelley and on through H. G. Wells, and a later one maybe dating from Hugo Gernsback’s creation of Amazing Stories in 1926 (Glenn prefers to start the later period at 1934, which is a few years before the beginning of the Campbell era at Astounding, where Heinlein, Asimov and others would find a home), but in between is the radium age. Here’s Glenn, from a 2012 article in Nature:

[Radium age novels] depict a human condition subverted or perverted by science and technology, not improved or redeemed. Aldous Huxley’s 1932 Brave New World, with its devastating satire on corporate tyranny, behavioral conditioning and the advancement of biotechnology, is far from unique. Radium-age sci-fi tends towards the prophetic and uncanny, reflecting an era that saw the rise of nuclear physics and the revelation that the familiar — matter itself — is strange, even alien. The 1896 discovery of radioactivity, which led to the early twentieth-century insight that the atom is, at least in part, a state of energy, constantly in movement, is the perfect metaphor for an era in which life itself seemed out of control.

All of which is interesting to those of us of a historical bent, but The Tunnel struck me forcibly because of the year in which it was published. Radiotelegraphy, as it was then called, had just been deployed across the Atlantic on the run from New York to Germany, a distance (reported in Telefunken Zeitschrift in April of that year) of about 6,500 kilometers. Communicating across oceans was beginning to happen, and it is in this milieu that The Tunnel emerged to give us a century-old take on what we in the interstellar flight field often call the ‘wait equation.’

How long do we wait to launch a mission given that new technology may become available in the future? Kellermann’s plot involved the construction of the tunnel, a tale peppered with social criticism and what German author Florian Illies calls ‘wearily apocalyptic fantasy.’ Illies is, in fact, where I encountered Kellermann, for his 2013 title 1913: The Year Before the Storm, now available in a deft new translation, delves among many other things into the literary and artistic scene of that fraught year before the guns of August. This is a time of Marcel Duchamp, of Picasso, of Robert Musil. The Illies book is a spritely read that I can’t recommend too highly if you like this sort of thing (I do).

In The Tunnel, it takes Kellermann’s crews 24 years of agonizing labor, but eventually the twin teams boring through the seafloor manage to link up under the Atlantic, and two years later the first train makes the journey. It’s a 24-hour trip instead of the week-long crossing of the average ocean liner, a miracle of technology. But it soon becomes apparent that nobody wants to take it. For even as work on the tunnel has proceeded, aircraft have accelerated their development and people now fly between New York and Berlin in less than a day.
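The trade-off Kellermann dramatizes can be put in a few lines of arithmetic. What follows is a minimal sketch of one common way to frame the wait equation, assuming that achievable cruise speed grows by a fixed percentage every year. The starting speed, the growth rate and the function names are all hypothetical, chosen only to show the shape of the problem.

```python
def arrival_year(wait_years, distance_ly, v0_c, growth):
    """Years from today until arrival if we launch after waiting `wait_years`.

    v0_c is today's achievable speed as a fraction of c; `growth` is the
    assumed fractional improvement per year. Speed is capped at c.
    """
    speed = min(v0_c * (1.0 + growth) ** wait_years, 1.0)
    return wait_years + distance_ly / speed


def best_wait(distance_ly, v0_c, growth, horizon=2000):
    """Whole number of years to wait that gives the earliest arrival."""
    return min(range(horizon + 1),
               key=lambda w: arrival_year(w, distance_ly, v0_c, growth))


if __name__ == "__main__":
    # ASSUMPTION: illustrative numbers only (4.24 ly target, 0.1% of c
    # achievable today, speed improving 2% per year).
    d, v0, g = 4.24, 0.001, 0.02
    w = best_wait(d, v0, g)
    print(f"launch now: arrive in {arrival_year(0, d, v0, g):.0f} years")
    print(f"wait {w} years: arrive in {arrival_year(w, d, v0, g):.0f} years")
```

With these made-up inputs the ship that waits a couple of centuries still arrives thousands of years before the one launched today, which is the tunnel and the airplane all over again. The answer is only as good as the growth assumption, and nothing guarantees that propulsion improves on a smooth curve.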

The ‘wait equation’ is hardly new, and Kellermann uses it to bring all his skepticism about technological change to the fore. Here’s how Florian Illies describes the novel:

…Kellermann succeeds in creating a great novel – he understands the passion for progress that characterizes the era he lives in, the faith in the technically feasible, and at the same time, with delicate irony and a sense for what is really possible, he has it all come to nothing. An immense utopian project that is actually realized – but then becomes nothing but a source of ridicule for the public, who end up ordering their tomato juice from the stewardess many thousand meters not under but over the Atlantic. According to Kellermann’s wise message, we would be wise not to put our utopian dreams to the test.

Here I’ll take issue with Illies, and I suppose Kellermann himself. Is the real message that utopian dreams come to nothing? If so, then a great many worthwhile projects from our past and our future are abandoned in service of a judgment call based on human attitudes toward time and generational change. I wonder how we go about making that ‘wait equation’ decision. Not long ago, Jeff Greason told Bruce Dorminey that it would be easier to produce a mission to the nearest star that took 20 years than to figure out how to fund, much less to build, a mission that would take 200 years.

He’s got a point. Those of us who advocate long-term approaches to deep space also have an obligation to reckon with the hard practicalities of mission support over time, which is not only a technical but a sociological issue that makes us ask who will see the mission home. But I think we can also see philosophical purpose in a different class of missions that our species may one day choose to deploy. Missions like these:

1) Advanced AI will at some point negate the question of how long to wait if we assume spacecraft that can seamlessly acquire knowledge and return it to a network of growing information, a nascent Encyclopedia Galactica of our own devising that is not reliant on Earth. Ever moving outward, it would produce a wavefront of knowledge that theoretically would be useful not just to ourselves but whatever species come after us.

2) Human missions intended as generational, with no prospect of return to the home world, also operate without lingering connections to controllers left behind. Their purpose may be colonization of exoplanets, or perhaps simple exploration, with no intention of returning to planetary surfaces at all. Indeed, some may choose to exploit resources, as in the Oort Cloud, far from inner systems, separating from Earth in the service of particular research themes or ideologies.

3) Missions designed to spread life have no necessary connection with Earth once launched. If life is rare in the galaxy, it may be within our power to spread simple organisms or even revive/assemble complex beings, a melding of human and robotics. An AI-crewed ship that raises human embryos on a distant world would be an example, or a far simpler fleet of craft carrying a cargo of microorganisms. Such journeys might take millennia to reach their varied targets and still achieve their purpose. I make no statement here about the wisdom of doing this, only noting it as a possibility.

In such cases, creating a ‘wait equation’ to figure out when to launch loses force, for the times involved do not matter. We are not waiting for data in our lifetimes but are acting through an imperative that operates on geological timeframes. That is to say, we are creating conditions that will outlast us and perhaps our civilizations, that will operate over stellar eras to realize an ambition that transcends humankind. I’m just brainstorming here, and readers may want to wrangle over other mission types that fit this description.

But we can’t yet launch missions like these, and until we can, I would want any mission to have the strongest possible support, financial and political, here on the home world if we are talking about many decades for data return. It’s hard to forget the scene in Robert Forward’s Rocheworld where at least one political faction actively debates turning off the laser array that the crew of a starship approaching Barnard’s Star will use to brake into the planetary system there. Political or social change on the home world has to be reckoned into the equation when we are discussing projects that demand human participation from future generations.

These things can’t be guaranteed, but they can be projected to the best of our ability, and concepts chosen that will maintain scientific and public interest for the duration needed. You can see why mission design is also partly a selling job to the relevant entities as well as to the public, something the team working on a probe beyond the heliopause at the Johns Hopkins University Applied Physics Lab knows all too well.

Back to Bernhard Kellermann, who would soon begin to run afoul of the Nazis (his novel The Ninth November was publicly burned in Germany). He would later be locked out of the West German book trade because of his close ties with the East German government and his pro-Soviet views. He died in Potsdam in 1951.

Image: A movie poster showing Richard Dix and C. Aubrey Smith discussing plans for the gigantic project in Transatlantic Tunnel (1935). Credit: IMDB.

The Tunnel became a curiosity, and spawned an even more curious British movie by the same name (although sometimes found with the title Transatlantic Tunnel) starring Richard Dix and Leslie Banks. In the 1935 film, which is readily available on YouTube or various streaming platforms, the emphasis is on a turgid romance, pulp-style dangers overcome and international cooperation, with little reflection, if any, on the value of technology and how it can be superseded.

The interstellar ‘wait equation’ could use a movie of its own. I for one would like to see a director do something with van Vogt’s “Far Centaurus,” the epitome of the idea.

The Glenn paper is “Science Fiction: The Radium Age,” Nature 489 (2012), 204-205 (full text).

Microlensing: Expect Thousands of Exoplanet Detections

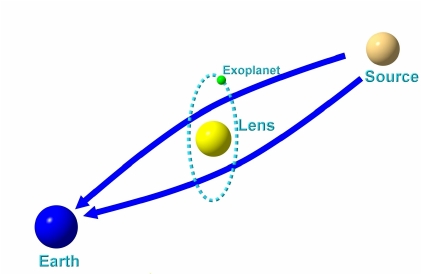

We just looked at how gravitational microlensing can be used to analyze the mass of a star, giving us a method beyond the mass-luminosity relationship to make the call. And we’re going to be hearing a lot more about microlensing, especially in exoplanet research, as we move into the era of the Nancy Grace Roman Space Telescope (formerly WFIRST), which is scheduled to launch in 2027. A major goal for the instrument is the expected discovery of exoplanets by the thousands using microlensing. That’s quite a jump – I believe the current number is less than 100.

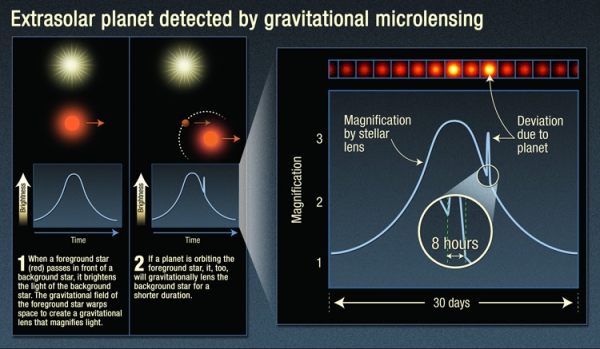

For while radial velocity and transit methods have served us well in establishing a catalog of exoplanets that now tops 5000, gravitational microlensing has advantages over both. When a stellar system occludes a background star, the lensing of the latter’s light can tell us much about the planets that orbit the foreground object. Whereas radial velocity and transits work best when a planet is in an orbit close to its star, microlensing can detect planets in orbits equivalent to the gas giants in our system.

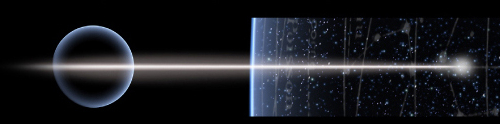

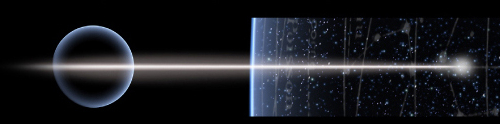

Image: This infographic explains the light curve astronomers detect when viewing a microlensing event, and the signature of an exoplanet: an additional uptick in brightness when the exoplanet lenses the background star. Credit: NASA / ESA / K. Sahu / STScI.

Moreover, we can use the technique to detect lower-mass planets in such orbits, planets far enough, for example, from a G-class star to be in its habitable zone, and small enough that the radial velocity signature would be tricky to tease out of the data. Not to mention the fact that microlensing opens up vast new areas for searching. Consider: TESS deliberately works with targets nearby, in the range of 100 light years. Kepler’s stars averaged about 1000 light years. Microlensing as planned for the Roman instrument will track stars, 200 million of them, at distances around 10,000 light years away as it looks toward the center of the Milky Way.

So this is a method that lets us see planets at a wide range of orbital distances and in a wide range of sizes. The trick is to work out the mass of both star and planet when doing a microlensing observation, which brings machine learning into the mix. Artificial intelligence algorithms can parse these data to speed the analysis, a major plus when we consider how many events the Roman instrument is likely to detect in its work.

The key is to find the right algorithms, for the analysis is by no means straightforward. Microlensing signals involve the brightening of the light from the background star over time. We can call this a ‘light curve,’ which it is, making sure to distinguish what’s going on here from the transit light curve dips that help identify exoplanets with that method. With microlensing, we are seeing light bent by the gravity of the foreground star, so that we observe brightening, but also splitting of the light, perhaps into various point sources, or even distorting its shape into what is called an Einstein ring, named of course after the work the great physicist did in 1936 in identifying the phenomenon. More broadly, microlensing is implied by all his work on the curvature of spacetime.
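For readers who want to see what such a brightening looks like in numbers, here is a minimal sketch of the standard single-lens light curve (the Paczyński curve), which treats the foreground star as a point mass with no planet and the background star as a point source. The event parameters below are hypothetical, and this is the textbook baseline rather than the algorithm discussed in the paper.

```python
import math


def magnification(u):
    """Point-source, point-lens magnification.

    u is the lens-source separation on the sky in units of the
    Einstein radius; the result is brightness relative to baseline.
    """
    return (u * u + 2.0) / (u * math.sqrt(u * u + 4.0))


def light_curve(times, t0, t_e, u0):
    """Magnification at each time for a source moving in a straight line.

    t0  : time of closest approach
    t_e : Einstein-radius crossing time (same units as `times`)
    u0  : closest approach in Einstein radii
    """
    return [magnification(math.hypot(u0, (t - t0) / t_e)) for t in times]


if __name__ == "__main__":
    # ASSUMPTION: a made-up event with a 20-day crossing time and u0 = 0.1.
    days = range(-40, 41, 10)
    for day, a in zip(days, light_curve(days, t0=0.0, t_e=20.0, u0=0.1)):
        print(f"day {day:+3d}: {a:6.2f}x baseline")
```

The curve is smooth and symmetric about the moment of closest approach, and the peak is close to 1/u0 when u0 is small. A planet shows up as a short-lived departure from this shape, and it is the modeling of those departures that runs into the ambiguities described next.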

However the light presents itself, untangling what is actually present at the foreground object is complicated because more than one planetary orbit can explain the result. Astronomers refer to such solutions as degeneracies, a term I most often see used in quantum mechanics, where it refers to the fact that multiple quantum states can emerge with the same energy, as happens, for example, when an electron orbits one or the other way around a nucleus. How to untangle what is happening?

A new paper in Nature Astronomy moves the ball forward. It describes an AI algorithm developed by graduate student Keming Zhang at UC-Berkeley, one that presents what the researchers involved consider a broader theory that incorporates how such degeneracies emerge. Here is the university’s Joshua Bloom, in a blog post from last December, when the paper was first posted to the arXiv site:

“A machine learning inference algorithm we previously developed led us to discover something new and fundamental about the equations that govern the general relativistic effect of light-bending by two massive bodies… Furthermore, we found that the new degeneracy better explains some of the subtle yet pervasive inconsistencies seen [in] real events from the past. This discovery was hiding in plain sight. We suggest that our method can be used to study other degeneracies in systems where inverse-problem inference is intractable computationally.”

What an intriguing result. The AI work grows out of a two-year effort to analyze microlensing data more swiftly, allowing the fast determination of the mass of planet and star in a microlensing event, as well as the separation between the two. We’re dealing with not one but two peaks in the brightness of the background star, and trying to deduce from this the orbital configuration that produced the signal. Thus far, the different solutions produced by such degeneracies have been ambiguous.

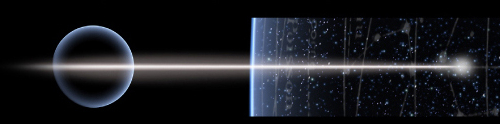

Image: Seen from Earth (left), a planetary system moving in front of a background star (source, right) distorts the light from that star, making it brighten as much as 10 or 100 times. Because both the star and exoplanet in the system bend the light from the background star, the masses and orbital parameters of the system can be ambiguous. An AI algorithm developed by UC Berkeley astronomers got around that problem, but it also pointed out errors in how astronomers have been interpreting the mathematics of gravitational microlensing. Credit: Research Gate.

Zhang and his fellow researchers applied microlensing data from a wide variety of orbital configurations to run the new AI algorithm through its paces. Let’s hear from Zhang himself:

“The two previous theories of degeneracy deal with cases where the background star appears to pass close to the foreground star or the foreground planet. The AI algorithm showed us hundreds of examples from not only these two cases, but also situations where the star doesn’t pass close to either the star or planet and cannot be explained by either previous theory. That was key to us proposing the new unifying theory.”

Thus to existing known degeneracies labeled ‘close-wide’ and ‘inner-outer,’ Zhang’s work adds the discovery of what the team calls an ‘offset’ degeneracy. An underlying order emerges, as the paper notes, from the offset degeneracy. In the passage from the paper below, a ‘caustic’ defines the boundary of the curved light signal. Italics mine:

…the offset degeneracy concerns a magnification-matching behaviour on the lens-axis and is formulated independent of caustics. This offset degeneracy unifies the close-wide and inner-outer degeneracies, generalises to resonant topologies, and upon re-analysis, not only appears ubiquitous in previously published planetary events with 2-fold degenerate solutions, but also resolves prior inconsistencies. Our analysis demonstrates that degenerate caustics do not strictly result in degenerate magnifications and that the commonly invoked close-wide degeneracy essentially never arises in actual events. Moreover, it is shown that parameters in offset degenerate configurations are related by a simple expression. This suggests the existence of a deeper symmetry in the equations governing 2-body lenses than previously recognised.

The math in this paper is well beyond my pay grade, but what’s important to note about the passage above is the emergence of a broad pattern that will be used to speed the analysis of microlensing light curves for future space- and ground-based observations. The algorithm provides a fit to the data from previous papers better than prior methods of untangling the degeneracies, but it took the power of machine learning to work this out.

It’s also noteworthy that this work delves deeply into the mathematics of general relativity to explore microlensing situations where stellar systems include more than one exoplanet, which could involve many of them. And it turns out that observations from both Earth and the Roman telescope – two vantage points – will make the determination of orbits and masses a good deal more accurate. We can expect great things from Roman.

The paper is Zhang et al., “A ubiquitous unifying degeneracy in two-body microlensing systems,” Nature Astronomy 23 May 2022 (abstract / preprint).