I’ve learned that you can’t assume anything when giving a public talk about the challenge of interstellar flight. For a lot of people, the kind of distances we’re talking about are unknown. I always start with the distances we’ve reached with spacecraft thus far, which are measured in the hundreds of AU. With Voyager 1 now almost 156 AU out, I can get a rise out of the audience by showing a slide of the Earth at 1 AU, and I can mention a speed: 17.1 kilometers per second. We can then come around to Proxima Centauri at 260,000 AU. A sense of scale begins to emerge.

But what about propulsion? I’ve been thinking about this in relation to a fundamental gap in our aspirations, moving from today’s rocketry to what may become tomorrow’s relativistic technologies. One thing to get across to an audience is just how little certain things have changed. It was exhilarating, for example, to watch the Ariane booster carry the James Webb Space Telescope aloft, but we’re still using chemical (and solid-fuel) engines that carry steep limitations. Rockets using fission and fusion engines could ramp up performance, with fusion in particular being attractive if we can master it. But finding ways to leave the fuel behind may be the most attractive option of all.

I was corresponding with Philip Lubin (UC-Santa Barbara) about this in relation to a new paper we’ll be looking at over the next few days. Dr. Lubin makes a strong point on where rocketry has taken us. Let me quote him from a recent email:

…when you look at space propulsion over the past 80 years, we are still using the same rocket design as the V2 only larger But NOT faster. Hence in 80 years we have made incredible strides in exploring our solar system and the universe but our propulsion system is like that of internal combustion engine cars. No real change. Just bigger cars. So for space exploration to date – “just bigger rockets” but “not faster rockets”. [SpaceX’s] Starship is incredible and I love what it will do for humanity but it is fundamentally a large V2 using LOX and CH4 instead of LOX and Alcohol.

The point is that we have to do a lot better if we’re going to talk about practical missions to the stars. Interstellar flight is feasible today if we accept mission durations measured in thousands of years (well over 70,000 years at Voyager 1 speeds to travel the distance to Proxima Centauri). But taking instrumented probes, much less ships with human crews, to the nearest star demands a completely different approach, one that Lubin and team have been exploring at UC-SB. Beamed or ‘directed energy’ systems may do the trick one day if we can master both the technology and the economics.
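The "well over 70,000 years" figure is easy to check. Here is a minimal sketch using only the numbers quoted in this post (260,000 AU to Proxima Centauri, 17.1 km/s for Voyager 1). The function name is my own, and the calculation assumes a straight line at constant speed, ignoring the star's own motion.

```python
AU_KM = 149_597_870.7               # kilometers per astronomical unit (IAU definition)
SECONDS_PER_YEAR = 365.25 * 86_400  # one Julian year in seconds


def travel_time_years(distance_au: float, speed_km_s: float) -> float:
    """Years needed to cover distance_au at a constant speed_km_s."""
    return distance_au * AU_KM / speed_km_s / SECONDS_PER_YEAR


# Figures quoted in the post: Proxima Centauri at 260,000 AU, Voyager 1 at 17.1 km/s.
years = travel_time_years(260_000, 17.1)
print(f"{years:,.0f} years")
```

The result lands at roughly 72,000 years, consistent with the figure above.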

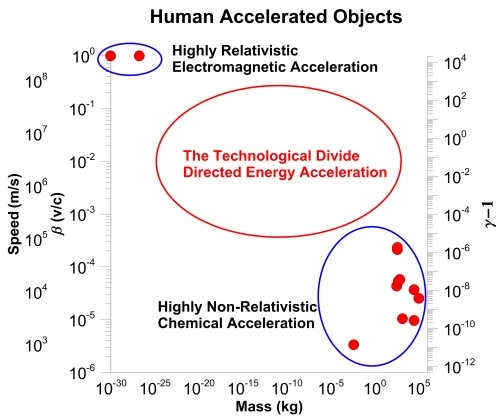

Let’s ponder what we’re trying to do. Lubin likes to show the diagram below, which brings out some fundamental issues about how we bring things up to speed. On the one hand we have chemical propulsion, which as the figure hardly needs to note, is not remotely relativistic. At the high end, we have the aspirational goal of highly relativistic acceleration enabled by directed energy – a powerful beam pushing a sail.

Image: This is Figure 1 from “The Economics of Interstellar Flight,” by Philip Lubin and colleague Alexander Cohen (citation below). Caption: Speed and fractional speed of light achieved by human accelerated objects vs. mass of object from sub-atomic to large macroscopic objects. Right side y-axis shows γ − 1, where γ is the relativistic “gamma factor.” γ − 1 times the rest mass energy is the kinetic energy of the object.

Thinking again of how I might get this across to an audience, I fall back on the energies involved, for as Lubin and Cohen’s paper explains, the energy available in chemical bonds is simply not sufficient for our purposes. It is mind-boggling to follow this through, as the authors do. Take the entire mass of the universe and turn it into chemical propellant. Your goal is to accelerate a single proton with this unimaginable rocket. The final speed you achieve is in the range of 300 to 600 kilometers per second.

That’s fast by Voyager standards, of course, but it’s also just a fraction of light speed (let’s give this a little play and say you might get as high as 0.3 percent), and the payload is no more than a single proton! We need energy levels a billion times that of chemical reactions. We do know how to accelerate elementary particles to relativistic velocities, but as the universe-sized ‘rocket’ analogy makes clear, we can’t dream of doing this through chemical energy. Particle accelerators reach these velocities with electromagnetic means, but we can’t yet do it beyond the particle level.
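To put these speeds on the same footing as the figure, here is a small sketch that converts a speed into a fraction of light speed and into γ − 1, the quantity on the figure's right-hand axis. The helper names are mine, not the paper's, and the three speeds are simply the ones mentioned in this post.

```python
import math

C_KM_S = 299_792.458  # speed of light in km/s (exact by definition)


def beta(speed_km_s: float) -> float:
    """Speed as a fraction of the speed of light."""
    return speed_km_s / C_KM_S


def gamma_minus_one(speed_km_s: float) -> float:
    """Relativistic gamma - 1, i.e. kinetic energy divided by rest mass energy.

    Uses b^2 / (s * (1 + s)) with s = sqrt(1 - b^2), which is algebraically
    equal to 1/s - 1 but avoids round-off at very small speeds.
    """
    b = beta(speed_km_s)
    s = math.sqrt(1.0 - b * b)
    return b * b / (s * (1.0 + s))


# Voyager 1, then the low and high ends of the universe-sized chemical rocket.
for v in (17.1, 300.0, 600.0):
    print(f"{v:6.1f} km/s  beta = {beta(v):.2e}  gamma - 1 = {gamma_minus_one(v):.2e}")
```

Even at 600 km/s the fraction of light speed is only about 0.2 percent, which is why the chemical end of the chart sits so far from anything relativistic.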

Directed energy offers us a way forward but only if we can master the trends in photonics and electronics that can empower this new kind of propulsion in realistic missions. In their new paper, to be published in a special issue of Acta Astronautica, Lubin and Cohen are exploring how we might leverage the power of growing economies and potentially exponential growth in enough key areas to make directed energy work as an economically viable, incrementally growing capability.

Beaming energy to sails should be familiar territory for Centauri Dreams readers. For the past eighteen years, we’ve been looking at solar sails and sails pushed by microwave or laser, concepts that take us back to the mid-20th Century. The contribution of Robert Forward to the idea of sail propulsion was enormous, particularly in spreading the notion within the space community, but sails have been championed by numerous scientists and science fiction authors for decades. Jim Benford, who along with brother Greg performed the first laboratory work on beamed sails, offers a helpful Photon Beam Propulsion Timeline, available in these pages.

In the Lubin and Cohen paper, the authors make the case that two fundamental types of mission spaces exist for beamed energy. What they call Direct Drive Mode (DDM) uses a highly reflective sail that receives energy via momentum transfer. This is the fundamental mechanism for achieving relativistic flight. Some of Bob Forward’s mission concepts could make an interstellar crossing within the lifetime of human crews. In fact, he even developed braking methods using segmented sails that could decelerate at destination for exploration at the target star and eventual return.

Lubin and Cohen also see an Indirect Drive Mode (IDM), which relies on beamed energy to power up an onboard ion engine that then provides the thrust. My friend Al Jackson, working with Daniel Whitmire, did an early analysis of such a system (see Rocketry on a Beam of Light). The difference is sharp: A system like this carries fuel onboard, unlike its Direct Drive Mode cousin, and thus has limits that make it best suited to work within the Solar System. While ruling out high mass missions to the stars, this mode offers huge advantages for reaching deep into the system, carrying high mass payloads to the outer planets and beyond. From the paper:

…for the same mission thrust desired, an IDM approach uses much lower power BUT achieves much lower final speed. For solar system missions with high mass, the final speeds are typically of order 100 km/s and hence an IDM approach is generally economically preferred. Another way to think of this is that a system designed for a low mass relativistic mission can also be used in an IDM approach for a high mass, low speed mission.
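The power gap the authors describe follows from textbook relations, and a rough sketch makes it concrete. A photon beam reflecting off a sail gives thrust F = (1 + R)P/c, while an electric thruster gives F = 2ηP/v_e. The exhaust velocity (50 km/s) and overall efficiency (0.5) used below are illustrative assumptions of mine, not values taken from the paper.

```python
C_M_S = 299_792_458.0  # speed of light in m/s


def ddm_power_watts(thrust_n: float, reflectivity: float = 1.0) -> float:
    """Beam power on a sail for a given photon thrust, from F = (1 + R) * P / c."""
    return thrust_n * C_M_S / (1.0 + reflectivity)


def idm_power_watts(thrust_n: float, exhaust_velocity_m_s: float, efficiency: float) -> float:
    """Beam power for a beam-fed electric thruster, from F = 2 * eta * P / v_e."""
    return thrust_n * exhaust_velocity_m_s / (2.0 * efficiency)


# One newton of thrust in each mode. The IDM numbers are assumed for illustration only.
p_ddm = ddm_power_watts(1.0)                # perfectly reflective sail
p_idm = idm_power_watts(1.0, 50_000.0, 0.5)  # assumed 50 km/s exhaust, 50% efficiency
print(f"DDM: {p_ddm / 1e6:.0f} MW per newton")
print(f"IDM: {p_idm / 1e3:.0f} kW per newton")
```

Under these assumptions the direct drive needs about 150 MW for each newton while the indirect drive needs tens of kilowatts, but the indirect drive pays for that in propellant and a far lower final speed.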

We shouldn’t play down IDM because it isn’t suited for interstellar missions. Fast missions to Mars are a powerful early incentive, while projecting power to spacecraft and eventual human outposts deeper in the Solar System is a major step forward. Beamed propulsion is not a case of a specific technology for a single deep space mission, but rather a series of developing systems that advance our reach. The fact that such systems can play a role in planetary defense is a not inconsiderable benefit.

Image: Beamed propulsion leaves propellant behind, a key advantage. It could provide a path for missions to the nearest stars. Credit: Adrian Mann.

If we’re going to analyze how we go from here, where we’re at the level of lab experiments, to there, with functioning directed energy missions, we have to examine these trends in terms of their likely staging points. What I mean is that we’re looking not at a single breakthrough that we immediately turn into a mission, but a series of incremental steps that ride the economic wave that can drive down costs. Each incremental step offers scientific payoff as our technological prowess develops.

Getting to interstellar flight demands patience. In economic terms, we’re dealing with moving targets, making the assessment at each stage complicated. Think of photovoltaic arrays of the kind we use to feed power to our spacecraft. As Lubin and Cohen point out, until recently the cost of solar panels was the dominant economic fact about implementing this technology. Today, this is no longer true. Now it’s background factors – installation, wiring, etc. – that dominate the cost. We’ll get into this more in the next post, but the point is that when looking at a long-term outcome, we have a number of changing factors that must be considered.

Some parts of a directed energy system show exponential growth, such as photonics and electronics. And some do not. The cost of metals, concrete and glass moves at anything but exponential rates. What “The Economics of Interstellar Flight” considers is developing a cost model that minimizes the cost for a specific outcome.

To do this, the authors have to consider the system parameters, such things as the power array that will feed the spacecraft, its diameter, the wavelength in use. And you can see the complication: When some key technologies are growing at exponential rates, time becomes a major issue. A longer wait means lower costs, while the cost of labor, land and launch may well increase with time. We can also see a ‘knowledge cost’: Wait time delays knowledge acquisition. As the authors note in relation to lasers:

The other complication is that many system parameters are interconnected and there is the severe issue that we do not currently have the capacity to produce the required laser power levels we will need and hence industrial capacity will have to catch up, but we do not want to be the sole customer. Hence, finding technologies that are driven by other sectors or adopting technologies produced in mass quantity for other sectors may be required to get to the desired economic price point.

System costs, in other words, are dynamic, given that some technologies are seeing exponential growth and others are not, making a calculation of what the authors call ‘time of entry’ for any given space milestone a challenging goal. I want to carry this discussion of how the burgeoning electronics and photonics industries – driven by power trends in consumer spending – factor into our space ambitions into the next post. We’ll look at how dreams of Centauri may eventually be achieved through a series of steps that demand a long-term, deliberate approach relying on economic growth.
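A toy model shows why a ‘time of entry’ exists at all. This is emphatically not the authors' cost model: it is a two-term sketch in which one cost component halves every few years and another grows slowly, and every number in it (arbitrary cost units, a 2-year halving time, 3 percent annual growth) is invented purely for illustration.

```python
def total_cost(year, photonics_cost0, halving_years, other_cost0, annual_growth):
    """Toy project cost if started `year` years from now (arbitrary units)."""
    photonics = photonics_cost0 * 0.5 ** (year / halving_years)  # exponentially falling
    other = other_cost0 * (1.0 + annual_growth) ** year          # labor, land, launch
    return photonics + other


def cheapest_start_year(horizon_years, **params):
    """Start year within the horizon that minimizes the toy total cost."""
    return min(range(horizon_years + 1), key=lambda y: total_cost(y, **params))


# Hypothetical parameters, chosen only to make the trade-off visible.
params = dict(photonics_cost0=100.0, halving_years=2.0, other_cost0=1.0, annual_growth=0.03)
best_year = cheapest_start_year(50, **params)
print(best_year, round(total_cost(best_year, **params), 3))
```

Starting too early pays full price for the exponential technologies, and waiting too long lets the slowly rising costs dominate. A real model would also have to price the ‘knowledge cost’ of waiting, which this sketch ignores.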

The paper is Lubin & Cohen, “The Economics of Interstellar Flight,” to be published in Acta Astronautica (preprint).

Probes to the unexplored dwarf planets of the solar system would seem like perfect goals for pathfinding of these technologies.

The numerous gravity assists and orbit mechanics voodoo that go into the chemically-powered missions could be avoided, and time spent in these intense regions of the solar system minimized.

Also, in a few years when the Rubin observatory comes online, there could be an even more convenient target if Planet Nine is discovered. The hunger to know more about that would be novel and intense, and could stir up funding that the dwarf planet menagerie would not.

It’s also at a distance that is super-challenging but far closer to being practical than interstellar.

That critical chart in the article has the wrong variable for the y-axis. Few people care about velocity. What really matters is mission duration. What seems like an amazingly high velocity will look a little ludicrous when it must be admitted that the mission duration is measured in centuries. That mission is unlikely to ever happen, no matter the feasibility.

Until we can get to the upper right of the chart — high mass and high velocity (short mission time) — the payback of the investment is too low to motivate more than a small minority of enthusiasts. Getting a “spacecraft on a chip” to Alpha Centauri in 20 years has essentially zero payback because it can’t do anything: it’s too small for instrumentation and communication, or for ISM survival!

The upper left of the chart isn’t interesting to me. The upper right of the chart might as well be labelled “hope and pray”. Unfortunately.

IDK if “hope and pray” is appropriate, but the huge energy requirements will mean that a lot of constituencies will argue that this is a huge waste of energy that could be put to better uses.

I recently watched Neil deGrasse Tyson talk at a conference in Dubai in 2018. He made the interesting historical observation that huge investments are only made for 2 reasons: military defense and war preparedness, and economic returns. He effectively pooh-poohed the idea that anyone will fund settlements on Mars or even the Moon, let alone further out in the solar system, as these projects will satisfy neither requirement. The 1950’s argument that the Moon would be the new “high ground” for the military (and put into the movie “Destination Moon”) has long been debunked. The military uses of beamed energy as weapons may well get Lubin’s phased laser arrays built, but whether they can ever be turned into plowshares is another issue. [I recall a Monty Python sketch about the absurd amounts of money needed to send food supplies to a space colony at Alpha Centauri, with billions of dollars for each cookie.]

If NDG-T is right, then any interstellar flight cannot involve huge funding commitments for scientific research, at least not assuming the current dominant political-financial systems we live with. Any hope for this means that resources, both energy and capital, must be reduced relative to national or global GDP until they no longer constitute a large outlay. That is the economics that must be addressed if we hope to send anything to the stars beyond em messages.

“IDK if “hope and pray” is appropriate…”

Then in a later comment you wrote:

“We can fantasize about reactionless drives, warp drives, etc., but so far it is fantasy and nothing more.”

I realize we are not really disagreeing, however I still think that “hope and pray” is not an unreasonable description of the chart’s upper right area. Fundamental breakthroughs cannot be scheduled.

“The 1950’s argument that the Moon would be the new “high ground” for the military (and put into the movie “Destination Moon”) has long been debunked.”

What is the argument? Is it something like you couldn’t throw rocks big enough and with enough energy that they wouldn’t just burn up in the atmosphere?

The argument was to have a nuclear missile base on the Moon. [Not throwing rocks like the rebellion in The Moon is a Harsh Mistress.] It was debunked when it was made very clear that the travel time from Moon to Earth would allow a lot of time to intercept the missiles. Keeping the missiles on Earth’s surface and launching when needed was a far better solution. If they can be stealthed, then putting them in orbit would work too. However, the “Star Wars” program favored putting different weapons in orbit, like “Rods from the Gods”, X-ray lasers to kill other satellites, etc.

That’s why the key enabling technology for interstellar travel is self-reproducing machinery. It’s the only way we’ll grow our industrial capacity enough relative to population to be able to afford the energy expenditures.

We need to be able to deploy literally astronomical amounts of energy to do interstellar travel in a reasonable timeframe. We should be working on how to become a K-2 civilization, or a reasonable approximation. Once we have energy to squander, interstellar travel won’t look so hard.

“He made the interesting historical observation that huge investments are only done for 2 reasons – military defense and war preparedness and economic returns.”

Solar Power Satellites have potential for both.

True. IIRC, the US military were looking into small-scale SPSs to power their foreign operations to avoid the logistical costs of supplying fuel for generators. A niche that allows for relatively high cost compared to industrial use and could drive experience and reduced costs. They might well prove a viable option compared to small nuclear reactors for remote civilian outposts too. But for military use, they are vulnerable to attack from nations with launch capabilities.

I see SPSs becoming useful as renewables occupy ever-greater land area as we decarbonize energy. At some point, it is going to be better to transmit SPS power to the ground as energy demand increases and we become a KI civilization and move forward towards a KII over the coming millennia.

While I see the military wanting DE-STAR lasers as weapons, it would be a travesty if that happened, and civilian populations were subject to attacks from space. Those attacks needn’t even be high intensity, just enough beam strength to cause widespread crop failures and facilities disablement. They might even be used for some forms of weather control, both helpful and damaging.

Absolutely couldn’t agree with you more! This entire miniaturization of spacecraft to permit travel at high speed is going to be astronomically costly and probably for very little return, as you mentioned. I’d conservatively estimate that if they were aiming for the nearest star then they are going to have to build the powering complex (nuclear reactors?) somewhere at the 60° southern latitude to permit the laser array to blast through the least amount of atmosphere to accelerate this Star Wisp to 20% of the speed of light or more.

As a result it’s conceivable that this tremendous laser array/power station combination is going to be of limited utility, especially with regard to other targets of opportunity within our solar system or even other stellar systems. I would bet that the stated need for at least five nuclear reactors of large output will drive the cost into the billions of dollars to construct and bring up to speed for this project at this relatively isolated location.

Add to all this that if you go ahead and decide to put this laser array into an orbital arrangement around the sun then the cost is going to escalate even into the trillions of dollars to provide you with the requisite infrastructure that you can utilize to send a microscopic probe to some other distant locality. You can talk all you want about how you will scale the economies gradually to provide the monies for these kinds of projects but I do wonder how practical and cost-effective this is all going to be.

@Ron S.

I hope you noticed my reply to your commentary above before the others added their own commentary. I agree with you completely about whether or not these light driven sails have any practicality, given the ridiculously light payloads that can be delivered. Personally, I put my faith in these new developments by Erik Lentz, who has been working within theoretical constraints toward the creation of a positive energy warp drive. We’ll see how these pan out.

We’ve already discussed Lentz, and you hopefully recall my thoughts on the matter.

Please do not neglect the particle beam alternative to lasers. I believe it was Geoff Landis who published on this approach and showed it to be a superior alternative under reasonable system parameter assumptions.

Indeed, it may well be the case that a judiciously optimised ratio of laser and particle beams simultaneously applied produces a superior outcome than only using one or the other. Has anyone done the maths?

Anent this, has Jim Benford continued to maintain that microwaves are superior to lasers for this task from a systems cost perspective?

There is admitted amusement in the ironic idea that chucking rocks may be our best path to the stars. Indeed the term “ironic” may be on point if we wish to use electromagnetic accelerators to propel ferrous projectiles!

Particle beams gram for gram can deliver much more momentum but are influenced by magnetic fields which would affect the direction. More massive charged carbon bucky balls could do the trick better but only really in a very low atmospheric pressure environment. The low atmospheric pressure may actually help in that the particles burn up but expel matter from the top of the atmosphere to act as extra mass. Perhaps a laser power transfer with a particle accelerator in orbit would help, i.e. convert the laser light into particle momentum.

I take it as a given that neutral particle beams are the only realistic option for beamed propulsion; charged particle beams would diverge too rapidly from self-repulsion. Various forms of optical cooling can reduce the ‘temperature’ of the beam to the point where you’ll be able to use them out as far as maybe a light year.

The real game changer is the proposal to mix a particle beam with a laser, where each focuses the other, and the difference in speeds causes pointing averaging, reducing jitter. It’s also possible such a beam could be used to slow down, too, because you could have the craft transfer energy from the EM component to the mass component, which could at least in theory allow for reverse thrust. Though I tend to think nobody on an interstellar voyage would want to rely on home for slowing down, so you’d probably be looking at beamed propulsion for acceleration, and some shipboard system for stopping.

This sort of system would be huge, though, and very energy intensive. As I said above, I don’t see this sort of thing as feasible without self-reproducing technology to allow us to exponentially increase our manufacturing and power generation capacity without regard to population.

Jim Benford has done a lot of work on neutral particle beams. He may chime in here on this. If not, check this:

Ultra-High Acceleration Neutral Particle Beam-Driven Sails

https://centauri-dreams.org/2019/01/03/ultrahigh-acceleration-neutral-particle-beam-driven-sails/

A Response to Comments on ‘Sails Driven by Diverging Neutral Particle Beams’

https://centauri-dreams.org/2014/08/27/a-response-to-comments-on-sails-driven-by-diverging-neutral-particle-beams/

I suppose we could send out a stream of positive charge particles and then a negative stream beam so they recombine again as weakly held together material.

British readers of the venerable Eagle Comics in the 1950s/1960s will recall that artist Frank Hampson assumed beamed power for Dan Dare’s Space Fleet ships. While looking like a cross between V-2s and WWII aircraft (and very much like SpaceX’s early Starship designs), with vanes to deflect rocket exhausts, the spaceships were almost empty of propulsion components, being charged with omnidirectional [?] impulse beams that filled onboard “batteries” for propulsion. The drives were like a cross between the DDM and IDM drives. There is no propellant, but rather the impulse beams are stored and can be released on demand. If Lubin’s laser light could be directly captured in a bottle and released when needed, this would be a very similar concept. The fun fictional book of cutaway drawings shows the design and interiors of the various spacecraft:

Space Fleet Operations Manual

Sadly my parents wouldn’t spring for The Eagle, so I had to content myself with a lesser bird from the same publishing house – Swift. Fortunately a friend down the road let me read his back issues of The Eagle, and this was my first exposure, in the mid 50s in England, to science fiction in any form.

When thinking about IDM, i.e. beaming energy to the craft to be then used to accelerate propellant, it is important to look at the comparative power density of the power receiver vs. a fission reactor. If you can’t make the power beam receiver lighter than a reactor of comparable power, power beaming makes no sense. It’s a high hurdle, especially if shielding can be omitted from a high temperature reactor.

Reading the paper I see that Lubin uses wall-plug efficiency of 42% for his lasers. If I understand this correctly, he means that the electrical energy has a 42% conversion efficiency into laser light. This conversion value is very important when comparing laser vs microwave transmitters. I used to assume a 3% efficiency for lasers, but this has been upended suggesting lasers are now comparable to microwaves, with better dispersion properties.
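The practical weight of that efficiency number is easy to see with a one-line calculation. This sketch compares the electrical input needed for the same optical output at the 42% wall-plug efficiency quoted from the paper and at the older 3% assumption mentioned above. The 1 GW optical output is a made-up round figure for illustration, not a value from the paper.

```python
def electrical_power_needed(optical_power_w: float, wall_plug_efficiency: float) -> float:
    """Electrical input required to emit optical_power_w of laser light."""
    return optical_power_w / wall_plug_efficiency


OPTICAL_W = 1e9  # hypothetical 1 GW of emitted laser light

modern = electrical_power_needed(OPTICAL_W, 0.42)  # efficiency quoted from the paper
older = electrical_power_needed(OPTICAL_W, 0.03)   # the commenter's earlier assumption
print(f"42%: {modern / 1e9:.2f} GW electrical, {(modern - OPTICAL_W) / 1e9:.2f} GW waste heat")
print(f" 3%: {older / 1e9:.2f} GW electrical, {(older - OPTICAL_W) / 1e9:.2f} GW waste heat")
```

The older assumption demands 14 times the electrical input for the same beam, and nearly all of the difference has to be dumped as heat at the emitter.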

What I still need to discover is the conversion efficiency of laser light to electrical energy to power ion drives and other electric engines. I am guessing high tens of percent efficiency for monochromatic light.

As a fan of beamed power, especially when powered by solar energy I am very interested in the economics. The economics of locating solar power sats in space with the laser arrays is highly dependent on launch costs. Low costs and high launch cadence could change the equation from using ground-based lasers using local power generation (nuclear?) to space-based systems using solar power. While we usually assume orbiting SPS and laser arrays, I see no reason why they could not be located on the lunar farside, especially the laser arrays, and therefore having a very stable platform to fire from, as well as mitigating concerns that the lasers could be used as weapons for Earth targets.

What I like about the IDM model with potentially relatively short beam periods is that we escape from the concern of centuries-long firing times for large interstellar vehicles. Solar system travel would be fast, and onboard propellant means that deceleration at targets is made possible, so that fast vehicles do not have to be only flybys.

As Lubin states, there are multiple uses for phased laser arrays, and planetary defense from asteroids and comets could justify military level spending even if they were not useful as directed energy weapons against satellites and other military targets.

I look forward to the following posts on his paper.

NB. I am not sure about Lubin’s calculation for the velocity of a proton using all the mass of the universe as chemical energy. There are about 10^80 protons and neutrons in the universe, so the rocket equation with M0/M1 = 10^80/1 gives an exhaust velocity multiplier of ln(10^80) ≈ 184. For a LH2/LOX engine with a vacuum exhaust velocity of 4.5 km/s, and assuming the craft weighed nothing, this would give a final velocity of 829 km/s. A little better than his figure, but it does not detract in any way from the power of his example. Exhaust velocity and avoiding the tyranny of the rocket equation for low exhaust velocities is paramount to getting the needed velocities to reach the stars unless one can live with journey times of tens of millennia.
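The arithmetic in this comment can be checked directly with the Tsiolkovsky rocket equation, Δv = v_e ln(M0/M1). The sketch below uses only the comment's own inputs (a mass ratio of 10^80 and a 4.5 km/s exhaust velocity); the function name is mine.

```python
import math


def delta_v_km_s(exhaust_velocity_km_s: float, mass_ratio: float) -> float:
    """Tsiolkovsky rocket equation: delta-v = v_e * ln(M0 / M1)."""
    return exhaust_velocity_km_s * math.log(mass_ratio)


multiplier = math.log(1e80)        # how many exhaust velocities the rocket gains
final_v = delta_v_km_s(4.5, 1e80)  # LH2/LOX vacuum exhaust velocity from the comment
print(f"ln(M0/M1) = {multiplier:.1f}, delta-v = {final_v:.0f} km/s")
```

The logarithm is the whole story: eighty orders of magnitude of propellant buy only about 184 multiples of the exhaust velocity.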

This comment may be a “Pipe Dream”, but I wonder if AI could help with Rocket Propulsion? I’m not sure how you could feed all the variables into a computer programme, but recently the Deep Mind computer was tasked with the game of Go – It beat a human for the first time, by using techniques/moves never seen before, as I said, just an idea.

There is a fundamental energy limit with chemical fuels. All AI can do is help design smaller, lighter engines and superstructures to allow the rocket/spaceship to attain a higher proportion of its theoretical velocity. You may have read early examples of the structure deadweight that limited V-2 class rockets to velocity multiples of exhaust and the number of stages needed to reach orbit. Clarke does this well in his early non-fiction books on space travel. The other thing you can do is use more energetic molecules, most of which are extremely toxic. But again, chemical bonds have an energy limit that restricts the energy that can be applied to accelerate the reaction mass. At this point, it is just tinkering at the margins of a mature technology. SpaceX abandoned maximum efficiency in favor of low cost – hence reusability, use of cheaper liquid methane, steel hulls. What is needed is a rocket technology with more power (e.g. fission, fusion, anti matter), a different form of propulsion (solar sails, beamed sails, electric engines, magsails, etc.). For interstellar travel, we really need some “new physics” approach that bypasses the limitations current physics imposes. We can fantasize about reactionless drives, warp drives, etc., but so far it is fantasy and nothing more.

Beaming energy overcomes the limits of the rocket equation, and that is what makes it attractive. But as Eniac comments above, the collector mass and efficiency are very important, as beaming can be worse than taking along a power source. If we could keep beaming energy to a Voyager class probe rather than relying on an RTG, there would potentially be no end to its operation until it physically decayed, rather than the RTG limiting its lifetime.

Glass itself can handle enormous g-forces if thin enough. Perhaps a spaceborne laser recycler would do it, i.e. the laser light is bounced back and forth. I thought about a design in which a Fresnel lens was used: the light hits the craft and bounces back through the lens onto a heavy disposable, or slower observer, mirror craft and then back through the lens onto the craft again, and so on. The craft and heavy reflector take away the momentum, leaving the laser and lens system stable. If we can reach huge accelerations, and we can, we don’t need a large, expensive system; recycling the laser light can shrink the system by a factor of 10 to thousands.

IIUC, you are using the 2 mirrors to store the laser light rather than allowing them to separate in the photonic drive design.

2 questions:

1. Can the mirrors withstand the energy from the compounded laser light bouncing between them? The phased laser arrays for Breakthrough Starshot already have to be insanely reflective to prevent melting. Now intensify that beam energy 1000s of times in the 2 mirror bottle…

2. To contain the beams and prevent melting, the mirrors need to be perfectly reflective. What imperfection would allow, for example, 1000x the trapped beam energy to not melt the mirrors, and secondly what is the loss rate if the beam slowly leaked out even without the mirrors melting? 99.999…*?
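One way to put a number on the second question is an idealized geometric-series model. If a fraction R_sail × R_reflector of the light survives each round trip, the thrust on the sail is multiplied by 1/(1 − R_sail × R_reflector) relative to a single reflection. This is a sketch under strong assumptions of my own: it ignores the Doppler shift of the receding sail, diffraction and lens losses, and any heating, and it says nothing about whether real mirrors survive.

```python
def recycling_gain(r_sail: float, r_reflector: float) -> float:
    """Thrust multiplier from photon recycling: sum of (R_sail * R_reflector)^k over k."""
    round_trip = r_sail * r_reflector
    if not 0.0 <= round_trip < 1.0:
        raise ValueError("round-trip reflectivity must be in [0, 1)")
    return 1.0 / (1.0 - round_trip)


def round_trip_reflectivity_for_gain(gain: float) -> float:
    """Round-trip reflectivity needed to reach a target thrust multiplier."""
    if gain < 1.0:
        raise ValueError("gain must be at least 1")
    return 1.0 - 1.0 / gain


needed = round_trip_reflectivity_for_gain(1000.0)
print(f"round-trip reflectivity for 1000x: {needed:.4f}")
print(f"gain with two 99.95% mirrors: {recycling_gain(0.9995, 0.9995):.0f}")
```

In this simple picture a 1000x multiplier needs a round-trip loss of no more than one part in a thousand, shared between both mirrors and everything in the path between them.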

We really need some sort of non-material bottle, similar to that for storing antimatter. Light would be trapped by some force like gravity. Would a micro-black hole work (although the mass penalty would be high)? How would the BH be forced to release its energy on demand rather than via Hawking radiation? Would metamaterials or holographic traps be possible solutions?

1 The Starshot mirrors don’t have to be insanely reflective, just insanely low in absorbance to survive, which optical glass can be. The beam bounces back and forth between the mirrors as they move apart, the lighter sail much faster, and it eventually leaks through the sail and heavy reflector with very little absorbance and temperature rise. Silicon can be made insanely reflective at designed wavelengths with insanely low absorbance, and it can take a surprising laser beating!

https://www.osapublishing.org/ome/fulltext.cfm?uri=ome-10-10-2706&id=440175

The leakage does not matter, what is desirable is the reuse of the reflective beams to provide momentum and lower the capital costs. What matters is to provide maximum acceleration and nanotechnology can handle this with ease.

Here is some damage threshold info; up to around 400 MW per cm² is pretty good!

https://www.osapublishing.org/ome/fulltext.cfm?uri=ome-10-10-2706&id=440175

Dang, It did not copy as intended.

https://www.google.com/url?sa=t&rct=j&q=&esrc=s&source=web&cd=&ved=2ahUKEwj1rIaasq71AhULTcAKHcuwCLYQFnoECBAQAQ&url=https%3A%2F%2Fwww.laserdamage.co.uk%2Fincludes%2Fdownloads%2FBRL_CW_white_paper.pdf&usg=AOvVaw2qiHP0wt7tIB23okq9o9-r

In essence the idea is to have the probe sail, lens and heavy reflector in space and a ground-based phased-array laser pumping energy into it. The lens remains stationary as much as possible, and the laser light gets bounced between the sail and heavy reflector through the lens, which is there to guide and focus the laser light between them.

Perhaps a ground-based phased array near the equator, with the lens and sail system moved to a geostationary orbit before launch, would be an idea: it keeps everything a lot stiller and makes movement control of the ground-based laser system less expensive. They could also shift the laser wavelength to around 1.6 microns, which has a lot of benefits. A pre-lens system to collimate the incoming beam may be needed, and it could improve the quality of the beam.

The separation in foci between this Centauri Dreams essay (solar system travel) and the paper referred to (interstellar travel) suggests that bridging that distance may well invoke the greatest efforts of mankind.

For human economics to support such an effort, vast multitudes of educated, able and motivated humans will be needed, functioning in a social-cultural-political-economic system that has eliminated conflict and corruption. Developing the technology is a small part of it.

IOW, a society that has never existed in the past and is unlikely to exist in the future without modifying human behavior in a way that only eliminates the bad features without harming the good features. How to do that without ending up in a Brave New World dystopia?

I think it is best to accept human behavior with all its faults, design a society to minimize the ability of individuals and groups to parasitically game the system for themselves, while developing a much larger economy that doesn’t destroy the biosphere and in turn makes many of the costly elements of fast spaceflight far cheaper. Miniaturization, reducing propulsion costs, and developing smarter robots are 3 options to achieve such a goal. For human flight, the only possible approaches are sending just the brain and reconstituting a body on arrival, brain downloading and full-body reconstruction at arrival, sending frozen embryos or even just DNA, and culturing and educating those individuals at the destination. For my money, robots will always prove the cheapest option as they require no deadweight life support at the destination, nor the difficulties of terraforming should that be desired.

Breakthrough Starshot’s 1 sq. meter sail and computer payload massing 1 gm is possibly the first ancestor craft that will evolve and speciate into much larger, much smarter, and more capable craft in the future.

Tho different, I didn’t notice a reference to this paper by Paul Krugman

THE THEORY OF INTERSTELLAR TRADE

Here is the link:

http://www.princeton.edu/~pkrugman/interstellar.pdf

Noticed some time back that Krugman was quite a science fiction fan, though as far as I know he was never in SF fandom (but I know a lot of readers who are like that, well, a lot!). He even had a few of his columns in the NY Times commenting on Asimov’s Foundation, and he even mentioned The Expanse.

There is a Wiki entry on this subject:

https://en.wikipedia.org/wiki/The_Theory_of_Interstellar_Trade

Al

Al, I was surprised by Krugman’s interest when I first ran across it. I suppose you may have been the person who first pointed it out to me!

Krugman is a Charlie Stross fan. In an interview with both of them some years ago, Krugman was clearly in awe of Stross.

Stross’ “Neptune’s Brood” included a whole background about how interstellar trade could be financed, and IIRC was partially influenced by Krugman’s paper.

The 100km/s delivery speed for IDM within the solar system would still need to cut over 95% of that to go into orbit of the target world. I hope you address the mechanisms needed to achieve that in this week’s Lubin directed energy special.

The paper doesn’t discuss deceleration for orbital operations, though the high mass capability of an IDM system should allow propulsive options at the target. I think that high mass aspect is the key.

If the main sail can be used as a second reflector to slow down a smaller craft, as in Forward’s designs, that could do the trick.

Forgettomori had an article called “Laser Stars, Laser Planets” on dropping asteroids in the Sun to project such a laser. Now I want two sails to bounce light back and forth, with center sub-sail disks being staged somehow.

This reminds me of the movie 2001 and using AI and the significant factor of the monolith. We have had Monoliths since 12,900 years ago and the reason for them has always been for the heavens. Now that we are reaching for the real physical heavens we find indications of terrestrial contact. The elephant in the room has all the markings to indicate a superior technology and the military industrial complex has kept the lid on it for some 75 years. Hopefully in the next year to ten years we will be enlightened to a major revolution in mankind’s understanding. Then the lasers will only be used to vaporize the dusty path to the heavens.

Someone said: there are more things between the Sky and the earth than your philosophy could ever Imagine…

The simulation operators are laughing at humanity’s feeble attempts to bridge interstellar distances using various means of physical propulsion. We’ll truly achieve interstellar capability once we learn to instantaneously modify the spacetime coordinates (x,y,z,t) of every particle of the space vehicle within the simulation mainframe’s memory core, thus teleporting the vehicle instantaneously to its destination.

Very much in the vein of Abbott’s Flatland and its 3D->4D space version. Cixin Liu’s “Three-Body Problem” (v3 Death’s End) has humans encountering space where higher dimensions can be accessed and used to destroy the Trisolarians weapons. Being able to translate physical coordinates as simply as manipulating matrices would be a stunning advance in engineering, even if constrained to 3D space and c-speed limitations. Reactionless drives come to mind.

“Chiang spoke slowly and watched the younger gull ever so carefully. “To fly as fast as thought, to anywhere that is,” he said, “you must begin by knowing that you have already arrived . .

The trick, according to Chiang, was for Jonathan to stop seeing himself as trapped inside a limited body.”

Jonathan Livingston Seagull

When giving public talks, I found the following helps. I have a yellow marble, about 1 cm across: that’s the Sun. Earth’s orbital radius is one metre, which one can trace out in the air by hand, and one can do the same for trips to the Moon and Mars. On the same scale, nearby stars are located in cities some hundreds of km away, and I had a slide showing some examples. I think this works nicely because the audience are well familiar with the difference of scale between distances they can trace out in their sitting-rooms, and distances that require a few hours journey by car or train.

Good method! Thanks, Astronist. Very useful!

I was imagining something similar: a billion-to-one (1000 km = 1mm) solar system model could be scattered throughout a small town. The sun the size of a bean bag chair, Mercury a 1mm ball bearing in the far end of the neighbor’s yard, Jupiter a ping pong ball 6 blocks away, and Neptune a pea 4.5 km distant.

But at this scale, putting the Sun in my home in Minnesota would put Alpha Centauri in Alaska!

So much for working from memory, got the diameters and interstellar wrong:

Sun would be a desk (1.4m)

Mercury would be a pea (5mm) 46 m away

Jupiter would be a softball (140mm) 747m distant

Neptune would be a billiard ball (49mm) 4.5km distant.

So that all fits in a small town, but leaves Alpha Centauri outside a geosynchronous orbit at 40,000km lol
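For anyone who wants to rerun the billion-to-one numbers, here is a minimal sketch. The diameters and distances are rounded reference values that I am assuming (mean orbital distances, and 4.37 light-years for Alpha Centauri), so the output will differ a little from the rounded figures quoted above.

```python
# Billion-to-one scale model: 1,000 km in reality becomes 1 mm in the model.
SCALE = 1e9

KM_PER_AU = 149_597_870.7   # kilometres in one astronomical unit
KM_PER_LY = 9.4607e12       # kilometres in one light-year

# (name, diameter in km, mean distance from the Sun in km); assumed rounded values
BODIES = [
    ("Sun", 1_392_000, 0.0),
    ("Mercury", 4_879, 0.387 * KM_PER_AU),
    ("Jupiter", 139_820, 5.20 * KM_PER_AU),
    ("Neptune", 49_244, 30.07 * KM_PER_AU),
]
ALPHA_CEN_KM = 4.37 * KM_PER_LY  # assumed distance to Alpha Centauri


def model_metres(real_km, scale=SCALE):
    """Convert a real length in kilometres to a model length in metres."""
    return real_km * 1000.0 / scale


for name, diameter_km, distance_km in BODIES:
    size_mm = model_metres(diameter_km) * 1000.0
    dist_m = model_metres(distance_km)
    print(f"{name:8s} diameter {size_mm:7.1f} mm, distance {dist_m:8.1f} m")

print(f"Alpha Centauri at {model_metres(ALPHA_CEN_KM) / 1000.0:,.0f} km")
```

Changing SCALE to about 1.4e11 gives the marble-sized Sun version, with Earth roughly a metre away.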

Hi Stephen,

A curious near coincidence: the ratio of 1 light-year to 1 AU (63,241) is close to that of 1 mile to 1 inch (63,360).

Is anybody reading this able to refer me to a good explanation of Lubin’s directed energy system? I’ve been wrestling with two papers of his in JBIS (May/June 2015, and Feb./March 2016), and they make no sense at all. For example, Fig.2 in the first paper clearly shows the beam spot equal in size to the transmitter array as it leaves the transmitter and converging to a smaller size as it travels through space (as do the artist’s impressions based on it), yet the text talks about the spot size doing the opposite, increasing with increasing distance from the transmitter. All examples where sizes are mentioned have transmitter array size greater than sail size, yet the sail is fully filled despite there being no mention at all of any focusing device, or any mention of what its focal length might be. His baseline Yb laser amplifier (Fig.11 of the second paper) is tiny and could easily give a power density at the array of 200 kW/m^2, yet the actual power density of his DE-STAR designs is only 500 W/m^2. Nothing seems to make sense.

Check out this youtube video by Lubin.

He definitely talks about generating a small spot (500m) using phase-locking of a 1-10km laser array.

This point in the video shows the method to create a collimated beam.

So it seems to me that the phase-locking puts most of the combined laser power into the central lobe which has a smaller radius than the emitting array. The beam will spread out again. The arrays can also point to a common focal point at the target, using multiple sub arrays.

I am sure experts in this sort of technology, whether laser or microwaves can explain this better.

Alex, thanks. That video is mostly about asteroids, but rechecking it now I see there’s a helpful chart from 24:30 on. But the basic confusion is still there. Lubin’s figures work out, but only if one assumes that the beam firstly converges to a focus, and that he measures distance from the focal point, not from the laser array. Thus if the array size = the spot size = 550 metres, then the distance from the array to the focus = that from the focus to the spot (the beam must converge at the same angle at which it then diverges). So where he has the spot size at 1 AU distance, that is actually only 1 AU from the focus, not the laser array, and in the case that the spot size is 550 metres then the spot is actually 2 AU from the array. I can work with this geometrical picture, but it’s confusing that he makes no mention of either how the beam is focused to a point (actually a narrow “waist”, but the geometry works with a mathematical point), or what the focal distance is, and thus gives the wrong distance from the array to the spot.

Of course, if you measure distance in this way and want to find the distance over which the spot size is smaller than a given light sail, you get only half the distance, and so miss half the acceleration imparted to the sail!

Stephen.

Sorry, I should have mentioned the first half of the video is a justification of the need for a powerful laser array, his DE-STAR proposal. It is only the second half where he loosely describes the system.

If one ignores all the sub-arrays and just looks at one of them, the phased laser output creates an interference pattern where the central lobe has most of the energy according to the video. (The output seems rather like the interference of the 2-slit experiment for photons.) The central lobe is formed close to the array. Thereafter the beam will spread out. It seems to me one can ignore the array to spot (lobe distance) and just consider the distance to the focus at the target.

Maybe I am missing the meaning of your analysis, but I don’t see why the beam convergence-divergence angles (the beam must converge at the same angle at which it then diverges) should be the same.

Look at some of the animation examples on the Wikipedia page:

Phased array for the central lobe having most of the power and where the lobe forms.

I am wondering if the confusion lies with the assumption that the sub-arrays are as though they are sitting on a concave surface, and that their beams, collimated to a point (e.g. above the Earth’s atmosphere), are acting like incoherent light and diverging again, just as light does on a concave mirror.

In this case, however, the beams are phase-locked so that their single wavelength is locked to the same phase as all the beams in the sub array. When they reach the collimation point, they then interfere with the other beams coming from the other sub-arrays, creating an interference pattern with the majority of the energy in the central lobe with the highest combined in-phase light beams. This lobe will not diverge again with the same angle as the collimation angle, but with a much-reduced divergence.

For a beam collimated to just a few hundred km away, but targeted at an asteroid 1 AU away, one can effectively ignore the collimation distance.

Does this make sense, or am I talking BS because this is not my area of expertise?
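One way to put rough numbers on the spot-size question is the textbook diffraction limit for a filled circular aperture, where the far-field spot (Airy disk, measured to the first dark ring) has a diameter of about 2.44 × wavelength × distance / aperture. This is only a sketch and not Lubin's own calculation: the 1.06 micron wavelength is my assumption for a Yb laser, and a real phased array with gaps between emitters will not behave exactly like a filled aperture.

```python
AU_M = 1.495978707e11    # metres in one astronomical unit
WAVELENGTH_M = 1.06e-6   # assumed Yb laser wavelength, not taken from the papers


def spot_diameter_m(wavelength_m, array_diameter_m, distance_m):
    """Far-field Airy-disk diameter (first dark ring) for a filled circular aperture."""
    return 2.44 * wavelength_m * distance_m / array_diameter_m


def crossover_distance_m(wavelength_m, array_diameter_m):
    """Distance at which the far-field spot has grown to the size of the array itself."""
    return array_diameter_m ** 2 / (2.44 * wavelength_m)


for array_m in (1_000.0, 10_000.0):
    spot = spot_diameter_m(WAVELENGTH_M, array_m, AU_M)
    cross_au = crossover_distance_m(WAVELENGTH_M, array_m) / AU_M
    print(f"array {array_m / 1000:4.0f} km: spot at 1 AU = {spot:6.1f} m, "
          f"spot equals array size at {cross_au:7.1f} AU")
```

In this simple picture the spot can stay smaller than the array out to a large distance, after which it grows linearly with range, which may be part of why the figures and the text in the papers seem to point in opposite directions.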

Alex, thanks. I’ve copied down your responses, and am thinking about them.

Thank you for this article, Paul.

“well over 70,000 years at Voyager 1 speeds to travel the distance to Proxima Centauri”: I am glad that you use this exact wording, because Proxima is in fact a moving target. If I remember correctly, it is heading past the Solar System at around 32 km/s and will pass its closest point to us, at 3.2 LY, 28,000 years from now. At that point Voyager 1, or anything we launch now that ends up leaving the Solar System at around 17 km/s, will be only about 1.6 LY from us and will never catch up with Proxima, no matter in which direction you aim it.

In order to actually travel to the Alpha Centauri system, you will need to at least double the speed at which your probe escapes the Solar system, and then you will have to aim it at a point which the Alpha Centauri system will pass sometime far in the future so that that system’s gravity can capture your probe.

Now I am not an academic, I have to work to put food on the table, so my math skills are very, very rusty. But using the online rocket equation calculator, it seems to me that you will need at least 137 times as much fuel as the Titan 3E rocket carried when it launched Voyager 1 to add another 17 km/s… Am I at least in the right ballpark with that?

So, if you want to get a Voyager 1 mass probe to the Alpha Centauri system, you will have to launch at least 100 Titan 3E rockets with a 15t fuel tank to LEO, somehow strap all 100 tanks together, and use that fuel to get your Voyager 1 sized probe to escape the Solar System at a measly 34 km/s, and maybe hit the Alpha Centauri system in 100k or 200k years.

Which just goes to illustrate how preposterous an idea it is to attempt interstellar travel with chemical rockets.

Of course most of that fuel is used to get the extra fuel you have to carry out of Earth’s gravity well.

So if, maybe 20 years from now, someone has started mining operations, producing methalox fuel on a Near Earth asteroid, and you could maybe get one of Elon’s 6 engine Starships with a Voyager sized probe on board to that asteroid to refuel, I’m quite sure, maybe someone could check the math for me, that that Starship could propel your probe to more than 34km/s.

What I’m getting at is that we can shelve any interstellar attempts until we have people (broadly speaking) living and working and doing business and manufacturing things off Earth.

Maybe it will start with some billionaire space cowboy buying his own personal Starship from Elon so he can live and entertain on it in LEO and land and take off as he pleases.

Maybe the first beamed sails will be developed in order to enable a fast (hours instead of days) courier service between LEO and the Moon for small packages, with laser arrays on both sides to accelerate and decelerate the sail.

Maybe industries on the Moon will overcome the 2 week long lunar night by having solar arrays beam power down to them from orbit.

And so we can gain experience and knowledge and learn by doing, venturing first to the asteroids and Mars, and then further and further from Earth as economics makes it feasible to do so.

The current thinking on rocket propulsion to get to Alpha Centauri is deuterium fusion, with or without some admixture of helium-3, lithium-6 and/or boron-10 to mop up the excess neutrons. Check out the BIS Daedalus Report (published in 1978), and Robert Freeland’s JBIS papers on fission/fusion propulsion with lithium deuteride (2013), and Icarus Firefly (2015). When using your rocket equation calculator, the exhaust velocity to use should be in the ballpark of 10,000 km/s (or one million seconds, if you prefer specific impulse). Hope this helps.
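For anyone without the online calculator to hand, the rocket equation is short enough to run directly. The sketch below assumes an exhaust velocity of 3.45 km/s for a good chemical engine (my number, not from the thread) and uses the 10,000 km/s fusion figure mentioned above. Note that the result is the ratio of fuelled mass to empty mass for the vehicle, which is not quite the same thing as a multiple of the Titan's propellant load.

```python
import math

G0 = 9.80665  # standard gravity in m/s^2, used to convert to specific impulse


def mass_ratio(delta_v_km_s, exhaust_velocity_km_s):
    """Tsiolkovsky rocket equation: initial mass / final mass for a given delta-v."""
    return math.exp(delta_v_km_s / exhaust_velocity_km_s)


def specific_impulse_s(exhaust_velocity_km_s):
    """Specific impulse in seconds for a given exhaust velocity."""
    return exhaust_velocity_km_s * 1000.0 / G0


CHEMICAL_VE = 3.45    # km/s, assumed value for a chemical engine
FUSION_VE = 10_000.0  # km/s, the ballpark suggested for deuterium fusion

print(f"Chemical, +17 km/s: mass ratio {mass_ratio(17.0, CHEMICAL_VE):.0f}")
print(f"Fusion, +17 km/s: mass ratio {mass_ratio(17.0, FUSION_VE):.4f}")
print(f"Fusion, 10% of c: mass ratio {mass_ratio(29_979.0, FUSION_VE):.1f}")
print(f"Fusion specific impulse: {specific_impulse_s(FUSION_VE):,.0f} s")
```

With the chemical figure the mass ratio lands close to the 137 quoted earlier, so that estimate does look like the right ballpark under this assumption.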

A fission implosion drive would do well i.e. small spheres of say plutonium are laser compressed to fission. Dirty but effective and very compact.

As I see it, space based solar power enables beam production…so one day out of the year Earth’s solar grid gives a starwisp a free ride. Space must needs enter the energy sector and OWN it. Lightsails need a heavy sail with large payloads that don’t move fast to stay and bounce beams to thin sails that go fast as the heavy sail takes heavy payloads to Titan. Earth being without power for a day keeps Carrington away.

Many of these mission concepts are quite okay for exploring the outer solar system, but we need to be much more ambitious if we really want to reach the stars with human exploration. If we cannot get there and back within a reasonable amount of time (say, 10-20 years) we are just spinning our wheels. Solar sails and similar tech lack the potential of reaching the necessary speeds to bridge the interstellar gap. We really must develop three technologies to do it: near light speed propulsion that requires little on-board fuel; radiation and solid particle shielding to protect the spacecraft’s occupants and critical systems; and artificial gravity to help the human crew’s physiological health during the long trip. Finding a way to communicate quickly over long distances (i.e., faster than light speed transmission) would also aid the crew in staying in touch with Earth and communicating back scientific and health data that could greatly increase the mission’s productivity and knowledge base. Recent developments in the study of quantum mechanics suggest such long-distance communication is theoretically possible. Regardless, I think humans will reach the nearest stars long before either of the Voyager spacecraft.

Your thinking seems to be perhaps among the most sound that I have heard so far as to what is required for such a trip (minus the propulsion, which is still an unknown), and I do agree with your assessments of what is needed for any kind of practical approach. The light sails don’t seem to be anything but a virtual dead-end in terms of return for investment. Larger ships are simply going to be needed to do all that’s needed if you want to have a serious interstellar mission. The only thing I would quibble with is that the occupants have to be human; robots would seem to have, if sufficiently developed, the upper hand in terms of the ability to deliver.

Faster than light communications? Any links? I want to believe…

Technology will improve. The Wright Brothers’ first airplane was considered to be fairly useless initially, and real air travel for a few decades relied on airships. But today we fly comfortably around the planet in jet airliners. Similarly, Goddard’s first rocket was interesting, but it was the V-2 that really allowed rockets to be used, and we haven’t even started building true space liners. Solar and beamed sails are really just at the start of their development cycle, and we also have a “lot of room at the bottom” for miniaturization, as Feynman once said. (Just this week MIT announced a lens the size of a grain of salt made with printed waveguides[?] that is far smaller and less massive than current tiny lenses, but with equivalent resolution.)

Given the energy, we could beam huge sails to the stars as Robert Forward proposed.

As for time scales, this is purely a feature of human life spans. We can send robots and machines instead of humans to the stars, or we can put humans into some sort of cryosleep either on the ship, or if they are the science team, back on earth, to awaken when the ship reaches its target. Or perhaps humans will be able to upload and download minds to observe or wait out the journey times. There are lots of options depending on where technology takes us.

My personal bet is that robots will be the “crew” or the sailship itself, and that their industry will drive the development of such ships and the exploration of the galaxy. This seems the easiest option with the least surprises and almost no magic technology needed – just scaled up sails and beamers, and more intelligent artificial minds to cope with novel situations. The technology path is fairly clear from here. Humanity, almost entirely Earth-based, may have little, if any, involvement in the exploration.

Take the entire mass of the universe and turn it into chemical propellant. Your goal is to accelerate a single proton with this unimaginable rocket. The final speed you achieve is in the range of 300 to 600 kilometers per second.
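A quick sanity check of that claim, as a sketch: I am assuming roughly 1e53 kg for the ordinary matter in the observable universe (an order-of-magnitude figure only) and chemical exhaust velocities of 2 to 4.5 km/s. Because the rocket equation only sees the logarithm of the mass ratio, even being wrong about the universe by a factor of a thousand changes the answer by just a few percent.

```python
import math

M_UNIVERSE_KG = 1e53        # assumed order of magnitude, ordinary matter only
M_PROTON_KG = 1.67262e-27   # proton rest mass


def final_speed_km_s(exhaust_velocity_km_s,
                     initial_mass_kg=M_UNIVERSE_KG,
                     payload_mass_kg=M_PROTON_KG):
    """Single-stage delta-v from the rocket equation, ignoring tankage and relativity."""
    return exhaust_velocity_km_s * math.log(initial_mass_kg / payload_mass_kg)


print(f"ln(mass ratio) = {math.log(M_UNIVERSE_KG / M_PROTON_KG):.1f}")
for ve in (2.0, 3.0, 4.5):  # assumed chemical exhaust velocities in km/s
    print(f"exhaust velocity {ve} km/s -> final speed {final_speed_km_s(ve):.0f} km/s")
```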

The above is probably the case for a single-stage rocket. However, if we multistage, the prospects are much, much better. A rough estimate suggests clustering 1 trillion rocket modules (each 20 tons with a 5 mile per second ΔV and a one-ton payload) in the first stage, with 50 billion modules in the 2nd stage, 2,500,000,000 modules in the third stage and so on, until the final stage is a single module with a one-ton payload moving at 200 miles per second.

If the ΔV can be increased to 10 mps, the 1st stage array only needs about 1,000,000 modules.

OK, enough silliness. Wait, one more idea. Transfer momentum to the spacecraft with macroscopic particles, with the velocity of the launched particles adjusted to exceed the current velocity of the spacecraft by a relatively small amount. Momentum transfer could be accomplished by electromagnetic deceleration. A “particle” could be captured by the craft if it is something useful, such as food or water for a manned craft. But usually the mass would be discarded after absorbing the momentum. The required amount of energy should be orders of magnitude less than with lasers. Accelerating macroscopic particles would involve the mass drivers discussed way back in the ’70s. I would imagine that the highest practical velocities would be on the order of a few hundred miles per second, but the spacecraft mass could be in the hundreds of tons range. Lots of extreme engineering, but everything discussed so far is extreme to the extreme.

One could imagine a “pusher plate” as proposed for Project Orion. Perhaps a “shover plate” is a better term. The kinetic energy of the spacecraft would not be much higher than the sum of the total kinetic energy of the propulsive particles if the ΔV between the particle and spacecraft is kept small. Arbitrarily high propulsive efficiencies can be obtained at the cost of a huge number of particles. There is a sweet spot somewhere.

Hi Patient Observer

The maximum efficiency is attained when the pushing particle is reflected by the pusher plate and left dead in space. Total energy transfer has occurred.
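That condition can be checked with a few lines. This is a sketch under simplifying assumptions of my own: a perfectly elastic, head-on bounce, non-relativistic speeds, and a plate so much heavier than the particle that its speed barely changes during one bounce. In that limit a particle arriving at speed u bounces off a plate moving at V with lab-frame velocity 2V - u, so it is left dead in space when u = 2V.

```python
def energy_transfer_fraction(particle_speed, plate_speed):
    """Fraction of a particle's kinetic energy handed to a much heavier plate
    after a perfectly elastic, head-on bounce (non-relativistic)."""
    if particle_speed <= plate_speed:
        return 0.0  # the particle never catches up with the plate
    rebound = 2.0 * plate_speed - particle_speed  # lab-frame velocity after the bounce
    return 1.0 - (rebound / particle_speed) ** 2


# Plate speed normalised to 1; vary the particle speed u relative to it.
for ratio in (1.1, 1.5, 2.0, 3.0, 5.0):
    fraction = energy_transfer_fraction(ratio, 1.0)
    print(f"u/V = {ratio:3.1f}: energy transferred = {fraction:.2f}")
```

The fraction falls away on either side of u = 2V, and drops to zero as the particle speed approaches the plate speed.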

Wouldn’t the momentum transfer be very small and the time to reach the craft very long? At the limit, when object and craft are traveling at the same speed, there is no momentum transfer and the craft and object never meet. Isn’t it like throwing rocks at an accelerating vehicle, at some point the vehicle is traveling faster than you can throw the rocks, and therefore the rock will fail to reach the vehicle (ignoring gravity and air resistance)?

A similar problem is apparent with electric and magnetic sails running before the solar wind. The maximum velocity the sail can attain is that of the solar wind, about 400 km/s on average. These sails cannot run “downwind faster than the wind” as they can using wind on the Earth.

The aiming problems of even fast object acceleration and velocities to be destructive like small meteors are beautifully depicted in the space battles of the tv series, “The Expanse”. [This mimics the problem of conventional machine-gun fire at aircraft – where tracers show the path of the bullets from each burst.] Now consider that to magnetically decelerate an object to transfer momentum requires the object to be controlled by magnetic fields, which means that over large distances, the solar and planetary magnetic fields will alter the objects’ trajectories, sufficiently perhaps to miss the vehicle. The ship has to try to stay in the beam, because it would take too long for the rail gun to effectively respond to any drift as the objects would be slow to reach the ship.

Even em beams start to look slow when the size of the solar system is considered. It takes over 8 minutes for light to travel just 1 AU. It takes hours to reach Pluto, 3+ days to reach the solar gravitational focus at 550 AU, and obviously 1 year to reach 1 light year. Objects traveling at a mere 300 km/s (10x faster than most meteors) multiply these times by 1000x.
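Those delay figures are easy to reproduce. A minimal sketch, assuming a mean Pluto distance of 39.5 AU (my figure) and straight-line travel at constant speed:

```python
C_KM_S = 299_792.458     # speed of light in km/s
AU_KM = 149_597_870.7    # kilometres in one astronomical unit
LY_KM = 9.4607e12        # kilometres in one light-year

# Distances in km; the Pluto value is an assumed mean distance.
TARGETS = {
    "1 AU": 1.0 * AU_KM,
    "Pluto (39.5 AU)": 39.5 * AU_KM,
    "Solar gravitational focus (550 AU)": 550.0 * AU_KM,
    "1 light-year": LY_KM,
}


def travel_time_s(distance_km, speed_km_s=C_KM_S):
    """Time in seconds to cover a distance at constant speed."""
    return distance_km / speed_km_s


for name, distance_km in TARGETS.items():
    light_hours = travel_time_s(distance_km) / 3600.0
    slow_days = travel_time_s(distance_km, 300.0) / 86400.0
    print(f"{name:36s} light: {light_hours:10.2f} h   at 300 km/s: {slow_days:12.1f} days")

print(f"Slowdown factor at 300 km/s: {C_KM_S / 300.0:.0f}x")
```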

I would think we have to get used to extremely small payloads accelerated to a fraction of the speed of light using large (many gigawatts) laser arrays, and trip times to Proxima Centauri of several decades. This is the future for a century or more to come, barring some unforeseen and extreme breakthrough in propulsion. It might happen, but the engineers of today and the next few decades will have to work within the constraints we have been discussing on here for years. Is enough money available? Probably not to make a full scale effort (many hundreds of billions of dollars would be required, especially if governments are doing it). Some work will continue to be done, but none of us is holding our breath, I wouldn’t think.