At the top of my list of people I’d someday like to have a long conversation with is Nick Bostrom, a philosopher and director of Oxford’s Future of Humanity Institute. As Centauri Dreams readers will likely know, Bostrom has been thinking about the issue of human extinction for a long time, his ideas playing interestingly against questions not only about our own past but about our future possibilities if we can leave the Solar System. And as Ross Andersen demonstrates in Omens, a superb feature on Bostrom’s ideas in Aeon Magazine, this is one philosopher whose notions may make even the most optimistic futurist think twice.

I suppose there is such a thing as a ‘philosophical mind.’ How else to explain someone who, at the age of 16, runs across an anthology of 19th Century German philosophy and finds himself utterly at home in the world of Schopenhauer and Nietzsche? Not one but three undergraduate degrees at the University of Gothenburg in Sweden followed. Now Bostrom applies his philosophical background, along with training in mathematics, to questions that are literally larger than life. As Andersen reminds us, ninety-nine percent of all species that have lived on our planet are now extinct, including more than five tool-using hominids. Extinctions paved the way for the emergence of new species, but for the species du jour, survival is the imperative.

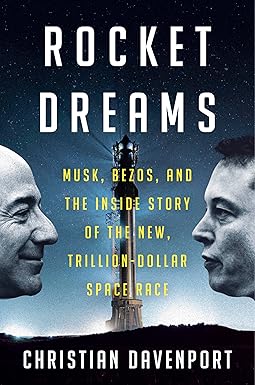

Image: Philosopher Nick Bostrom at a 2006 summit at Stanford University. Credit: Wikimedia Commons.

Colonizing Waves or Individual Explorers?

You’ll want to read Andersen’s essay in its entirety (helpfully, there’s a Kindle download link) to see how Bostrom sizes up existential risks like asteroid impacts and supervolcanoes. One of the latter, the Toba super-eruption about 70,000 years ago, seems to have pumped enough ash into the atmosphere to destroy the food chain of our distant ancestors, leaving a scant few thousand alive to move into and populate the rest of the planet. We do seem to be a resilient species. Bostrom likes the long view. He sees a 100,000-year hiatus, during which humans bounce back from a possible future catastrophe, as little more than a pause in cosmic time. That perspective has interesting consequences, as Andersen notes:

It might not take that long. The history of our species demonstrates that small groups of humans can multiply rapidly, spreading over enormous volumes of territory in quick, colonising spasms. There is research suggesting that both the Polynesian archipelago and the New World — each a forbidding frontier in its own way — were settled by less than 100 human beings.

This is a point worth remembering as we contemplate the possibility of interstellar flight. We sometimes think of enormous colonies of humans moving to nearby stars, but the first human settlements around other stars may be the work of tiny groups who, for reasons we can only guess at, decide to cross these fantastic distances. Maybe rather than a planned program of expansion, our species will see sudden departures of groups heading out for adventure or ideology, small bands who leave the problems of Earth behind and create entirely new societies outside of any central planning or control. Meanwhile, the great bulk of humans would choose to stay at home.

Andersen’s essay is so rich that I will, for today at least, pass over his discussions with Bostrom about artificial intelligence and the dangers it represents; you should read these in their entirety. Let’s focus instead on the Fermi paradox and why Bostrom hopes that the Curiosity rover finds no signs of life on Mars. The consensus among several of the thinkers at Bostrom’s Future of Humanity Institute is that the Milky Way could be colonized in a million years or less, which raises the question of why we see no sign that this has happened.

Filters Past and Future

Are we looking at an omen of the human future? Robin Hanson, another familiar name to Centauri Dreams readers, works with Bostrom at the Institute. He tells Andersen that there appears to be some kind of filter that keeps civilizations from developing to the point where they build starships and fill the galaxy. The filter would exist somewhere between inert matter and cosmic transcendence, and thus could be somewhere in our past or in our future.

In other words, what if we have somehow survived a filter that keeps life from developing on most planets? Or perhaps it’s a filter that acts to screen out intelligent life-forms, and we have somehow made our way through it. If the ‘great filter’ is in our past, then we can hope to expand into a cosmos that may be largely devoid of intelligent life. If it is in our future, then we can’t predict what it will be, but the ominous silence of the stars bodes ill for our survival.

But let Andersen tell it, in one of his conversations with Bostrom:

That’s why Bostrom hopes the Curiosity rover fails. ‘Any discovery of life that didn’t originate on Earth makes it less likely the great filter is in our past, and more likely it’s in our future,’ he told me. If life is a cosmic fluke, then we’ve already beaten the odds, and our future is undetermined — the galaxy is there for the taking. If we discover that life arises everywhere, we lose a prime suspect in our hunt for the great filter. The more advanced life we find, the worse the implications. If Curiosity spots a vertebrate fossil embedded in Martian rock, it would mean that a Cambrian explosion occurred twice in the same solar system. It would give us reason to suspect that nature is very good at knitting atoms into complex animal life, but very bad at nurturing star-hopping civilisations. It would make it less likely that humans have already slipped through the trap whose jaws keep our skies lifeless. It would be an omen.

This essay will take up half an hour of your day, but I suspect that, like me, you’ll go back and read it again, reflecting on its themes for days to come. Are there questions of philosophy that are more urgent than others? Ponder a moral issue that is much in play at the Future of Humanity Institute: the idea that existential threats to our species may outweigh our obligations to serve those who are suffering today. “The casualties of human extinction,” Andersen writes, “would include not only the corpses of the final generation, but also all of our potential descendants, a number that could reach into the trillions.”

In my view, that’s an argument for, among other things, a robust space program going forward, one that is capable of securing our planet from impact threats and establishing off-world colonies that would survive any other forms of planetary catastrophe, from runaway artificial intelligence to the weaponization of microbes. It’s also an argument for taking the kind of long-term perspective so lacking in modern culture, the case for which has never been made more clearly than in this elegant and illuminating essay.

I have long been itching to counter James Jason Wentworth’s post of March 6, 2013 at 0:05, especially his labelling of people working on artilects as (potential) terrorists.

“As well-intentioned as they may be, I fear that those who are working on artilects may be sowing the seeds of humanity’s destruction. I too have watched “The Forbin Project,” and while it is fiction, I don’t consider the attitude of Colossus toward humanity to be at all implausible for a real artilect. Those who assume that artilects and human-machine hybrids will share human values (or even human interests, such as wanting to explore the universe, for example) are frighteningly naïve. Because of these risks, I consider such researchers to be as dangerous as terrorists.”

I don’t want to argue over the statement James Jason Wentworth made, but we are drawing assumptions and conclusions from our current understanding of brain function, from AI’s supposedly identical traits, and from its capability of being sentient. As far as we know, the brain has many other functions, which we call non-computable. The biggest of these, besides sentience, is consciousness, and this is a crucial difference between an AI and a functioning brain. They are two different entities, mainly because of the natural brain’s consciousness, which AI will lack as long as we do not understand consciousness itself.

The best description of this approach, including the part on which Stuart Hameroff and Roger Penrose collaborate (which is not taken lightly by their peers), appears on this month’s Discover Magazine blog: http://blogs.discovermagazine.com/fire-in-the-mind/2013/03/07/the-case-of-the-quantum-brain

Instead of labelling the people involved as the biggest threat to our existence, I would rather let them substantiate their work and results and expand our current technological capabilities.

There is no way we can evolve further without AI. There are simply too many computational and assistive tasks that are best left to a superbrain to resolve, rather than involving myriad people for several decades. I would expect AI to answer questions such as:

* What is the closest wormhole to the Solar System?

* What’s below the Planck length?

* If dark energy is so abundant, why can’t we see it in the Standard Model?

* Why does the CMB have clumps, and do they point to a feature from past eons?

I just can’t see why, oh why, a bunch of superbrains would be interested in wiping out humanity if we can feed them useful information to ponder.