Centauri Dreams

Imagining and Planning Interstellar Exploration

Stability of Interstellar ‘Big Dumb Objects’

‘Big dumb objects’ (BDOs) appear to great effect in science fiction. They come in all manner of sizes and shapes and they fulfill a wide range of functions. An early favorite of mine was Cordwainer Smith’s “Golden the Ships Were Oh! Oh! Oh!,” which I snagged on a long-ago trip to a Chicago newsstand, where it appeared in an issue of Amazing Stories. It’s probably found most easily these days in The Rediscovery of Man: The Complete Short Science Fiction of Cordwainer Smith (NESFA Press, 1993), a collection that should be on every science fiction fan’s shelf.

Smith (a pseudonym for Paul Myron Anthony Linebarger, whose life was as remarkable as his fiction) goes to work on structures that are millions of miles long. I won’t say more for fear of spoiling the story for newcomers. More recent BDOs are better known. Dyson spheres and Dyson swarms are no strangers to these pages and have been the subject of intense scrutiny by Jason Wright and his colleagues at Pennsylvania State University. The G-HAT (Glimpsing Heat from Alien Technologies) project scanned data from the Wide-field Infrared Survey Explorer (WISE) satellite, looking at tens of thousands of galaxies for the waste-heat signature of possible Dyson spheres. The idea that megastructures might interest a hugely advanced civilization is reasonable, but we have yet to find evidence that Dyson spheres exist.

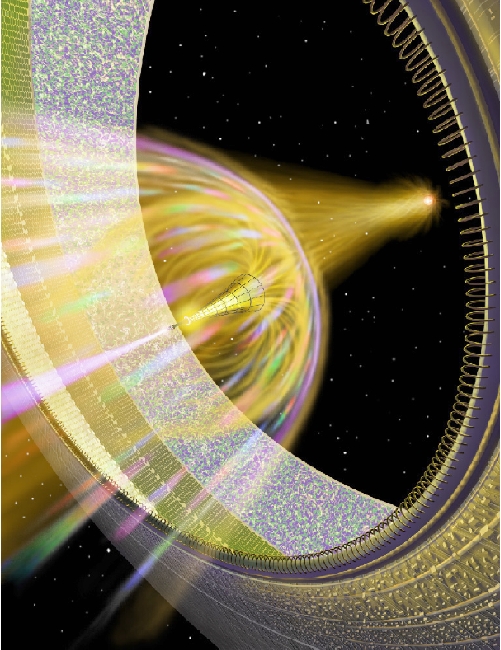

Larry Niven’s Ringworld posits a structure that circles an entire star but does not encompass it. A transit signature might give this one away if ever found; imagine the lightcurve. Niven and Gregory Benford later came up with the ‘shipstar’ concept that Greg described some years back on Centauri Dreams. This was an unusual re-thinking of the original ‘Shkadov Thruster,’ a device that could be used to move an entire star. See the Bowl of Heaven trilogy for more.

Developed by Russian physicist Leonid Shkadov in 1987, the thruster design used asymmetric light pressure from a huge mirror to move an entire planetary system to a new destination. The physics works, but the speeds are slow: on the order of 20 meters per second after a million years. On the other hand, a truly long-lived species might find it plausible to wait a billion years to reach 20 kilometers per second, by which time the system would have shifted position by a whopping 34,000 light years. Shipstar would be able to move considerably faster.
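The Shkadov figures hang together under the simplest possible assumption, a constant acceleration sustained for the whole billion years (an illustrative assumption; a real stellar engine’s thrust need not stay constant). This short Python check takes the 20 m/s after a million years as its only input; the function name is illustrative:

```python
# Constant-acceleration check of the Shkadov-thruster figures.
# Assumption: the acceleration never changes over the whole journey.
C = 299_792_458.0          # speed of light, m/s
YEAR = 365.25 * 86_400     # Julian year, s
LIGHT_YEAR = C * YEAR      # m


def shkadov_kinematics(v_ref=20.0, t_ref_yr=1e6, t_yr=1e9):
    """Return (speed in m/s, distance in light years) after t_yr years,
    given that the system reaches v_ref m/s after t_ref_yr years."""
    a = v_ref / (t_ref_yr * YEAR)               # acceleration, m/s^2
    t = t_yr * YEAR
    speed = a * t                               # v = a t
    distance_ly = 0.5 * a * t**2 / LIGHT_YEAR   # d = a t^2 / 2
    return speed, distance_ly


speed, dist = shkadov_kinematics()
print(f"acceleration ~ {20.0 / (1e6 * YEAR):.2e} m/s^2")
print(f"after 1 Gyr: {speed / 1e3:.0f} km/s, {dist:,.0f} light years")
```

The distance comes out a little over 33,000 light years, close to the rounded figure above; the acceleration involved is below a trillionth of a meter per second squared.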

Image: An artist’s conception of the Benford/Niven ‘shipstar’ concept. Think of the ‘bowl’ as half of a Dyson sphere curved around a star whose energies flow into a propulsive plasma jet that moves the entire structure on its journey. Here the notion of living space may remind you of Niven’s Ringworld, that vast structure completely encircling a star, though not enclosing it. The difference is that in the ShipStar scenario, most of the ‘bowl’ is made up of mirrors, with living space just on the rim. Credit: Don Davis.

In conversations with Benford about his shipstar concept a few years ago, I learned that a solid Dyson sphere is unstable, and would need constant adjustment to maintain its position. Concerns over stability plague BDOs. Colin McInnes (University of Glasgow) looks at the problem in a recent paper, noting this about the Shkadov design:

In its simplest form a stellar engine can be considered as a single ideal ultra-large rigid reflective disc in static equilibrium above a central star… As the disc accelerates due to radiation pressure from the star, the centre-of-mass of the gravitationally coupled star-reflector system accelerates, leading to a displacement of the star.

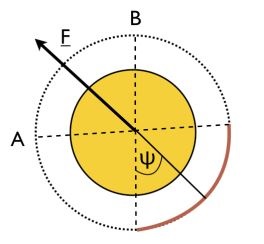

Image: This is Figure 1 from a paper by Duncan Forgan (citation below). Caption: Diagram of a Class A Stellar Engine, or Shkadov thruster. The star is viewed from the pole – the thruster is a spherical arc mirror (solid line), spanning a sector of total angular extent 2ψ. This produces an imbalance in the radiation pressure force produced by the star, resulting in a net thrust in the direction of the arrow. Credit: Duncan Forgan.

That seems straightforward, assuming a civilization so advanced that it could build mirror structures of the needed size. Here too, though, we have stability problems. The McInnes paper is highly interesting, examining megastructure concepts and the possible ways of stabilizing them. While a uniform, rigid reflective disk proves unstable as a star-moving engine, a disk with its mass concentrated at the edges can be stable. Instead of a flat disk, we are looking at something much closer to the shape of a ring. Here passive stability is what we want – i.e., the object does not need continual adjustment by other technologies to maintain its position and function.

In the case of the Shkadov engine, we have this consideration:

…for an ideal reflector subject to gravitational and radiation pressure forces the gradient of these forces across the reflector will induce stresses. While the direction of the radiation pressure force is always normal to the reflector, the direction of the gravitational force will vary across the reflector moving from the centre to the edge. Therefore, while the component of the gravitational force normal to the reflector can in principle be balanced by the radiation pressure force, there will be an in-plane component of the gravitational force which will generate a compressive stress. A thin reflector will clearly be unable to support such compression. However, in principle a zero-stress reflector can be configured for a non-homogeneous, partially reflecting rotating reflector…
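The geometry behind that compressive stress shows up in a toy model: a flat, uniform, perfectly reflecting disc held at height h above a point-like star, with no rotation and no self-gravity. This is a simplified illustration, not the model in McInnes’ appendices. On such a disc, light pressure along the normal scales as cos²θ/d² while the normal component of gravity scales as cosθ/d², so a disc balanced at its centre falls short by a factor cosθ farther out, and gravity’s in-plane pull grows as tanθ = r/h. The function name is illustrative:

```python
import math


def disc_force_ratios(r_over_h, sigma_over_sigma_c=1.0):
    """Toy model: flat, uniform, perfectly reflecting disc at height h above
    a point-like star (no rotation, no self-gravity).

    sigma_over_sigma_c is the disc's areal density relative to the critical
    density at which light pressure on a head-on reflector equals gravity.

    Returns (normal radiation force / normal gravity force,
             in-plane gravity / normal gravity) at radial distance r.
    """
    cos_t = 1.0 / math.sqrt(1.0 + r_over_h**2)  # ray angle from the disc normal
    # Light pressure on an ideal reflector ~ 2 F cos^2(theta) / c with F ~ 1/d^2,
    # while the normal component of gravity ~ sigma cos(theta) / d^2.
    normal_ratio = cos_t / sigma_over_sigma_c
    # Gravity's in-plane pull toward the axis, relative to its normal pull, is tan(theta).
    in_plane_ratio = r_over_h
    return normal_ratio, in_plane_ratio


for x in (0.0, 0.5, 1.0, 2.0):
    n, p = disc_force_ratios(x)
    print(f"r/h = {x:3.1f}: radiation/gravity (normal) = {n:.3f}, "
          f"in-plane/normal gravity = {p:.2f}")
```

Only at the centre do the forces cancel. Everywhere else gravity wins along the normal and also drags the sheet inward, which a thin reflector cannot resist; that is why a non-homogeneous, partially reflecting, rotating design is needed for zero stress.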

The math for a stellar reflector and a stellar ring is laid out in the paper’s appendices.

McInnes thinks that stability is a useful criterion as we investigate possible technosignatures in our SETI work, whether they be star-moving thrusters or energy-gathering Dyson objects. The assumption is that passive stability will be sought after because it is efficient and economical, not requiring control systems that must continually adjust position. Remember, too, that in searching for technosignatures, we have the possibility of finding megastructures like these that have survived the demise of their creators. Passive stability is essential for these objects to remain intact and detectable.

What McInnes calls a ‘Dyson bubble’ can likewise be stabilized. Here we’re talking not about a solid Dyson sphere but about a constellation of discs, a ‘power swarm’ that allows a civilization to exploit most of the output of its star. The terminology can be confusing, but bear with me. The author distinguishes between a cloud of small reflectors in orbit around the central star – huge in number, these form a so-called ‘Dyson swarm’ – and a ‘Dyson bubble,’ by which he means a smaller number of large reflectors in ‘statite’ configuration, so that instead of orbiting, they hover because radiation pressure exactly balances gravity. In other words, the ‘bubble’ components stay stationary relative to the star.
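The statite condition has a tidy consequence: light pressure and gravity both fall off as 1/r², so a reflector light enough to balance at one distance balances at every distance. Here is a sketch for a flat, perfectly reflecting sheet facing a Sun-like star; the solar values are illustrative assumptions, and real reflectors absorb and scatter some light. Function names are illustrative:

```python
import math

L_SUN = 3.828e26             # nominal solar luminosity, W
GM_SUN = 1.32712440018e20    # solar gravitational parameter, m^3 s^-2
C = 299_792_458.0            # speed of light, m/s
AU = 1.495978707e11          # astronomical unit, m


def critical_areal_density(L=L_SUN, GM=GM_SUN):
    """Areal density (kg/m^2) at which light pressure on an ideal,
    star-facing reflector exactly cancels gravity."""
    return L / (2.0 * math.pi * C * GM)


def net_outward_accel(sigma, r, L=L_SUN, GM=GM_SUN):
    """Net acceleration (m/s^2, positive = away from the star) on an ideal
    reflector of areal density sigma (kg/m^2) facing the star at distance r (m)."""
    light = 2.0 * L / (4.0 * math.pi * r**2 * C) / sigma  # pressure / areal density
    gravity = GM / r**2
    return light - gravity


sigma_c = critical_areal_density()
print(f"critical areal density ~ {sigma_c * 1e3:.2f} g/m^2")
for r_au in (0.1, 1.0, 10.0):
    r = r_au * AU
    print(f"{r_au:5.1f} AU: net accel at sigma_c = {net_outward_accel(sigma_c, r):+.1e} m/s^2")
```

The critical loading works out to roughly 1.5 grams per square metre, a hint of how gossamer the components of a Dyson bubble around a Sun-like star would have to be under these assumptions.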

Self-stabilizing designs are challenged not only by gravity and radiation pressure but also by collisions among the myriad orbiting discs, as well as by outside perturbing forces. Over long timeframes, passing stars can disrupt the gravitational dance, while interstellar comets, whose numbers are likely to be huge, present a similar risk of disruption. Even so, there are ways around this:

…the Dyson bubble can remain stable when its self-gravity and a simple model of a diffuse background of scattered radiation are included in the dynamics defined in Section 6.4. However, there are now regions of the parameter space where instability can occur, primarily at the edge of the Dyson bubble driven by the diffuse background radiation. In addition, it has been shown that the self-gravity of the Dyson bubble is in itself sufficient to ensure passive stability in the absence of the diffuse background radiation, and indeed it enhances the stability of the Dyson bubble when the diffuse background of scattered radiation is included.

A Dyson swarm, if properly implemented, can also ensure passive stability. Reflectors must always be configured ‘normal’ (perpendicular) to the direction of the central star, “…using slightly conical reflectors with the centre-of-pressure displaced behind the centre-of-mass.”

So there are ways of doing these things as long as we abandon the Shkadov concept of a uniform reflector disc in favor of a ring supporting the reflector, or in the case of the two Dyson options McInnes looks at, a dense cloud of reflectors stabilized through orbital mechanics, or a smaller assembly of reflectors in static equilibrium with radiation pressure from the star exactly balancing gravity. But here I’m more interested in the consequences in terms of hunting for technosignatures:

A Dyson swarm can be expected to generate a different technosignature to a passively stable Dyson bubble discussed above. For example, the motion of the discs in a swarm would imply a flickering of the observed luminosity of the central star, with a larger variation expected from a small number of ultra-large discs relative to a large number of small discs. Finally, while an orbiting swarm of reflectors will be susceptible to collisions (B. C. Laki 2025), collisions within a Dyson swarm could in principle be minimised using families of displaced non-Keplerian orbits, where the orbit planes of the reflectors can be stacked in parallel rather than being inclined relative to each other (C. R. McInnes & J. F. L. Simmons 1992).
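The flicker point comes down to counting statistics. Consider a deliberately crude toy model (an assumption for illustration, not McInnes’ analysis): N identical, non-overlapping discs that together could block a fixed fraction of the star’s light along our line of sight, each sitting in front of the star independently some fixed fraction of the time. The blocked light then follows a scaled binomial distribution whose spread shrinks as 1/sqrt(N). The parameter values and function names are hypothetical:

```python
import math
import random


def simulated_flicker(n_discs, total_cover=0.5, duty=0.1, samples=4_000, seed=1):
    """Monte Carlo standard deviation of the fraction of starlight blocked.

    Hypothetical toy model: n_discs identical, non-overlapping discs that
    together would block `total_cover` of the star's light, each one in
    front of the star independently with probability `duty`.
    """
    rng = random.Random(seed)
    per_disc = total_cover / n_discs
    blocked = []
    for _ in range(samples):
        in_front = sum(1 for _ in range(n_discs) if rng.random() < duty)
        blocked.append(per_disc * in_front)
    mean = sum(blocked) / samples
    return math.sqrt(sum((b - mean) ** 2 for b in blocked) / samples)


def analytic_flicker(n_discs, total_cover=0.5, duty=0.1):
    """Binomial result: std = total_cover * sqrt(duty * (1 - duty) / n_discs)."""
    return total_cover * math.sqrt(duty * (1.0 - duty) / n_discs)


for n in (10, 100, 1000):
    print(f"N = {n:4d}: simulated {simulated_flicker(n):.4f}, "
          f"analytic {analytic_flicker(n):.4f}")
```

With the total reflecting area held fixed, ten large discs flicker about ten times more strongly than a thousand small ones in this picture, matching the qualitative claim in the passage.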

And what of Shipstar? A recent conversation with Jim Benford reminded me that his brother Greg had worked out a way to stabilize the induced flare on the central star through intense magnetic fields, but as far as I know, this concept has never been rigorously investigated. From the technosignature standpoint, McInnes’ paper reminds us that stability problems can be overcome should an advanced civilization choose to build Dyson-class structures, or undertake star-moving of the Shkadov variety. How to engineer the stability of BDOs should continue to provide insight into possible technosignatures, even if the lack of any trace of Dyson structures despite intensive work at G-HAT remains puzzling. Next week I want to look at an even more recent stellar engine concept as presented by Illinois State University’s Michael Caplan.

The paper is McInnes, “Stellar engines and Dyson bubbles can be stable,” Monthly Notices of the Royal Astronomical Society 546 (2026), 1-18 (full text). The Shkadov paper is “Possibility of Controlling Solar System Motion in the Galaxy,” presented at the 38th Congress of the International Astronautical Federation (IAF) in Brighton, UK. An English translation of the original paper was published in the Journal of Solar System Research Volume 22, Issue 4, pp 210–214 under the title “Possibility of Control of Galactic Motion of the Solar System.” The Forgan paper mentioned above is “On the Possibility of Detecting Class A Stellar Engines Using Exoplanet Transit Curves,” Journal of the British Interplanetary Society, Vol. 66, no. 5/6, 2013 pp. 144–154. Preprint.

No Signs of Atmosphere on TRAPPIST-1 b or c

Waiting to learn what next generation telescopes will reveal is tantalizing in the extreme. In terms of space-based instruments, we’re getting close to launch of the Nancy Grace Roman Space Telescope, which has been the subject of many posts here under its former name WFIRST (Wide-Field Infrared Survey Telescope). Part of its remit will be to image nearby planetary systems, assuming it can survive NASA budget battles that have threatened to cancel it. Launch could occur late this year if these issues are resolved.

Needless to say, the European Space Agency’s PLATO mission (Planetary Transits and Oscillations of Stars), with a 2026 launch expected, has my full attention. Here we have a focus on terrestrial exoplanets in the habitable zones of their stars, to be followed up by ESA’s Ariel (Atmospheric Remote-sensing Infrared Exoplanet Large-survey), a mission dedicated to the study of planetary atmospheres and slated to launch within a few years. On the ground, the European Southern Observatory’s work on its 39-metre Extremely Large Telescope continues, with first light projected for 2029 and regular observations beginning the following year.

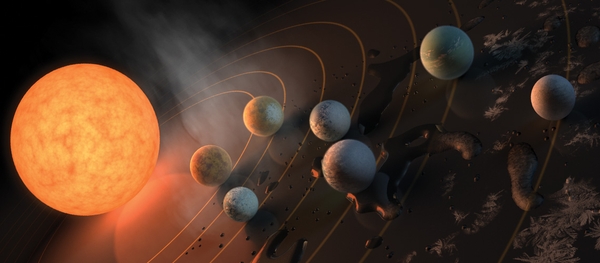

Meanwhile, the James Webb Space Telescope continues to deliver outstanding results. The latest to catch my eye involve the TRAPPIST-1 system, with its seven terrestrial-sized planets orbiting an M-dwarf in Aquarius. At about 40 light years out, this system is close enough to reward intense scrutiny, especially since all seven planets transit the star. In new work just published in Nature Astronomy, we get our first look at planetary atmospheres – or the lack of same – on the two inner worlds, TRAPPIST-1b and TRAPPIST-1c.

Image: This artist’s impression displays TRAPPIST-1 and its planets reflected in a surface. The potential for water on each of the worlds is also represented by the frost, water pools, and steam surrounding the scene. Credit: © NASA/R. Hurt/T. Pyle.

The question of atmospheres is a fraught one, given that the tight habitable zones around M-dwarfs leave planets there exposed to violent flare activity that can potentially strip an atmosphere entirely. The two inner worlds are not in the habitable zone (TRAPPIST-1 e, f and g are, but are not part of this study). We learn in the paper that no thick atmospheres can be detected here, but the question of the other planets remains open. This is the first time that astronomers have mapped climate features on Earth-sized planets.

We can continue to speculate on tidal lock, which will be a factor on planets in the habitable zone of any red dwarf star. The result is permanent day on one side and permanent night on the other, but there are mechanisms that could keep a planet like this able to sustain life. Brice-Olivier Demory (University of Bern), a co-author of the study, comments on the importance of the work:

“The presence of an atmosphere around these tidally locked planets could allow for energy transfer between the day and night sides, resulting in more moderate temperatures across the planet, which would have a significant impact on their potential habitability. Successfully detecting the atmosphere of one of these planets has therefore become a key objective for our community, highlighting the importance of the TRAPPIST-1 system with the JWST.”

Sixty hours of observation with JWST tracked the two inner planets in the infrared through a full orbit, allowing readings of surface temperature to a high degree of precision. What tells the story is the marked temperature contrast between night and day sides, with the inner TRAPPIST-1b at 200 degrees C on the dayside, while planet c comes in at 100 degrees C. The night side of each registers at below -200 degrees C, indicating that thermal energy is not being transferred, a likely consequence of early atmospheres being stripped away.
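Why does a hot dayside paired with a frigid nightside point to a missing atmosphere? A standard back-of-envelope scaling (not the analysis used by Gillon et al.) writes the dayside temperature as T_day = T_star · sqrt(R_star/a) · [f(1 - A)]^(1/4), where A is the Bond albedo and f runs from 1/4, when an atmosphere spreads heat over the whole globe, to 2/3 for bare rock that re-radiates absorbed light on the spot. The stellar inputs below are placeholders chosen only for illustration, not TRAPPIST-1 values from the paper:

```python
import math


def dayside_temperature(t_star, a_over_rstar, albedo=0.0, f=2.0 / 3.0):
    """Dayside temperature (K) from the usual equilibrium scaling.

    f = 1/4: heat shared evenly over the whole planet (efficient atmosphere)
    f = 2/3: bare rock, no heat transported to the night side
    """
    return t_star * math.sqrt(1.0 / a_over_rstar) * (f * (1.0 - albedo)) ** 0.25


# Placeholder inputs: a ~2,500 K M dwarf and an orbit 20 stellar radii out.
T_STAR, A_OVER_R = 2500.0, 20.0
bare = dayside_temperature(T_STAR, A_OVER_R, f=2.0 / 3.0)
shared = dayside_temperature(T_STAR, A_OVER_R, f=0.25)
print(f"bare rock: {bare:.0f} K ({bare - 273.15:.0f} C)")
print(f"full redistribution: {shared:.0f} K ({shared - 273.15:.0f} C)")
print(f"ratio: {bare / shared:.3f}")  # equals (8/3)**0.25 for any inputs
```

In this picture a dayside near the bare-rock limit, combined with a night side far colder, marks a planet that is not moving heat around, which is the pattern reported for both inner worlds.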

How far out from the star do we have to go to find a surviving atmosphere? Emeline Bolmont (University of Geneva) points to our own Solar System as reason for optimism. Whereas Mercury has been stripped of any atmosphere, both Venus and Earth clearly had no problem forming and keeping their own. That would leave the three TRAPPIST-1 worlds in the habitable zone as continuing candidates for follow-up, and eventually for spectroscopic study of atmospheric components. Will a future telescope register a biosignature on one of these?

We can expect the investigation of TRAPPIST-1 to accelerate. Out of curiosity I ran a quick check on the Astrophysics Data System (ADS), requesting papers with TRAPPIST-1 in their abstracts published since the beginning of this year. Thirty-six entries came up, some of them only referencing the system, but most homing in on various issues involving it. Today’s paper particularly caught my eye because its lead author is Michaël Gillon (University of Liège), who led the international team that discovered the system in 2016 and subsequently identified its full extent.
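Readers who want to repeat that kind of count programmatically can use the ADS search API. The sketch below assumes a personal API token from an ADS account, stored in an environment variable whose name, ADS_API_TOKEN, is a hypothetical choice; the query string mirrors ADS web-search syntax, and it is worth confirming field names against the ADS API documentation before relying on the result:

```python
import json
import os
import urllib.parse
import urllib.request

ADS_SEARCH = "https://api.adsabs.harvard.edu/v1/search/query"


def build_query_url(target="TRAPPIST-1", year=2026, rows=100):
    """URL for an ADS search: `target` in the abstract, published in `year`."""
    params = {
        "q": f'abs:"{target}" year:{year}',
        "fl": "bibcode,title",
        "rows": rows,
    }
    return f"{ADS_SEARCH}?{urllib.parse.urlencode(params)}"


def count_papers(token, **query):
    """Send the query and return the hit count (requires network access)."""
    request = urllib.request.Request(
        build_query_url(**query), headers={"Authorization": f"Bearer {token}"}
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)["response"]["numFound"]


print(build_query_url())
token = os.environ.get("ADS_API_TOKEN")  # hypothetical variable name
if token:
    print("matching papers:", count_papers(token))
```

Separating URL construction from the network call keeps the query easy to inspect without a token.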

The paper is Gillon et al., “No thick atmosphere around TRAPPIST-1 b and c from JWST thermal phase curves,” Nature Astronomy 3 April 2026 (abstract).

Interstellar Probes: Moving Beyond Bracewell

Lately we’ve been discussing interstellar probes, the kind that an extraterrestrial civilization might use to explore the galaxy. Ronald Bracewell’s analysis of such probes dates back to 1960 and was all but coterminous with the emergence of SETI. The problem with Bracewell probes is that we would expect to have one in our Solar System if they exist. Rather than using that notion to add stress to the Fermi question, I’m going to point out that there is a lot of real estate waiting to be searched.

Case in point: What might our ongoing study of the lunar surface through images from the Lunar Reconnaissance Orbiter pick up as we use AI models that have already identified human-made space debris from various missions? A closer look at this project reminds us that while the Moon is an obvious place to look for a ‘lurker’ probe, we can’t discount other locations even though earlier work on the various Lagrange points, a good place for long-term observation of our planet, came up empty (see below). Our capabilities are so much more advanced not only in terms of instrumentation but analytical tools that a continued hunt for artifacts is reasonable.

I’m getting picky here given the wide variety of possible probes, tapping the definition that Bracewell used in his original article. That’s a probe we probably would have noticed by now if it were active. In 1960, Bracewell was offering an alternative to the SETI goal of detecting an interstellar radio signal aimed at Earth. His physical probe would arrive in a planetary system to look for signs of life and technology, duplicating any radio signals it heard so as to re-transmit them to the originators, thus establishing contact. Sagan uses the notion in his novel Contact (1985), where Adolf Hitler’s opening speech from the 1936 Berlin Olympics is found embedded within the message, along with much else.

How would we respond to hearing a signal sent back to us from space? Bracewell thinks we would experiment with it to see what would happen next:

To notify the probe that we had heard it, we would repeat back to it once again. It would then know that it was in touch with us. After some routine tests to guard against accident, and to test our sensitivity and band-width, it would begin its message, with further occasional interrogation to ensure that it had not set below our horizon. Should we be surprised if the beginning of its message were a television image of a constellation?

Bracewell’s notions of dispatching a physical object as opposed to sending a radio signal take advantage of the ‘information density’ available to a physical probe. This is the familiar notion that a box of DVDs in a truck moves information at a far higher rate than fiber-optic cable. But of course you have to get the truck to its destination, and in the case of interstellar flight the latency is huge – perhaps thousands of years or more. A long-lived civilization, thought Bracewell, may nonetheless see purpose in seeding nearby stars if the travel time is a small fraction of its likely civilizational life.
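The truck-of-discs comparison separates two quantities that are easy to conflate: throughput (bits delivered per second of transit) and latency (how long the first bit takes to arrive). A quick sketch with purely hypothetical payload and trip figures; the helper name is illustrative:

```python
SECONDS_PER_YEAR = 365.25 * 86_400


def courier_link(payload_bytes, transit_seconds):
    """Throughput (bit/s) and latency (s) of physically carrying data."""
    return payload_bytes * 8 / transit_seconds, transit_seconds


# Hypothetical truck: 100,000 DVDs of 4.7 GB each, driven for 24 hours.
truck_bps, truck_latency = courier_link(100_000 * 4.7e9, 24 * 3600)
# Hypothetical probe: 1e18 bytes of stored data, 100-year crossing.
probe_bps, probe_latency = courier_link(1e18, 100 * SECONDS_PER_YEAR)

print(f"truck: {truck_bps / 1e9:.0f} Gbit/s after {truck_latency / 3600:.0f} h")
print(f"probe: {probe_bps / 1e9:.1f} Gbit/s after "
      f"{probe_latency / SECONDS_PER_YEAR:.0f} yr")
```

Averaged over the trip, even a century-long crossing can deliver a respectable throughput; what it cannot avoid is the latency, which only a long-lived civilization would find acceptable.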

Swarming and Reproducing

Bracewell’s ideas jibe nicely with the Breakthrough Starshot concept of swarms of sails investigating nearby stars. We might imagine the descendants of such tiny flyby probes scattered to all interesting stellar systems within, say, 100 light years. With concepts like Bracewell’s entering the literature, it was left to Robert Freitas to run the first scientific search I am aware of for such probes (citation below). Freitas made a series of visual observations of the Earth-Moon Lagrange points around 1980. But in the early days of SETI (and Bracewell was writing even before the Green Bank meeting in 1961 that produced the Drake Equation), other ideas about how interstellar probes might operate had begun to surface. Ancient probes sent by civilizations far more advanced than ours might still be live, waiting and reporting on our activities (Clarke’s sentinel ‘slabs’ from 2001: A Space Odyssey come to mind). Or they might be long-dead relics.

When Michael Hart went to work on this in 1975, he amplified the probe concept and changed the game. He produced, in fact, what Jason Wright (Pennsylvania State) has dubbed “The most influential formulation of the Fermi Paradox…,” one that compresses the conundrum by homing in on the fact that we observe no extraterrestrial beings on Earth, something Hart called Fact A. The fact that they are not observed tells us that despite the amount of time available for long-lived cultures to have colonized the galaxy, none evidently have. This is no small problem, for as Wright calculates in his new textbook on SETI, even a ‘wavefront’ of probes moving outwards from star to star at Voyager-like speeds would have been able to reach every star within 2 billion years.

Move the dial up in terms of speed to, say, 0.5 c and the timescales shrink considerably. Imagine relativistic ships that close on lightspeed and we find exponential growth saturating the galaxy in 150,000 years, all contrasting with an Earth that is 4.5 billion years old. Hart saw nothing in the laws of physics that prohibited starflight, and he found the idea that ETI was uninterested in Earth to be unconvincing. What David Brin dubbed the ‘Principle of non-Exclusiveness’ boils down to the idea that alien species will not all behave the same way. All that is needed is for one civilization to decide to send out probes, and by now such probes should have reached every star.
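One simple way to see where such timescales come from is a ‘wavefront’ model: probes hop from star to star a fixed distance at a time, optionally pausing at each stop before launching again, so the front advances more slowly than the cruise speed. The galaxy span, hop length and pause times below are illustrative assumptions, not figures taken from Wright, Hart or Tipler; the function name is illustrative:

```python
VOYAGER_KM_S = 17.0        # roughly Voyager 1's speed away from the Sun
C_KM_S = 299_792.458


def crossing_time_years(distance_ly, speed_c, hop_ly=10.0, pause_years=0.0):
    """Years for an expansion front to cover distance_ly, with probes cruising
    at speed_c (fraction of light speed) and pausing pause_years at every hop."""
    hops = distance_ly / hop_ly
    return hops * (hop_ly / speed_c + pause_years)  # 1 ly at speed v takes 1/v years


GALAXY_LY = 100_000  # assumed span to cross
for label, v, pause in [
    ("Voyager-like, no pauses", VOYAGER_KM_S / C_KM_S, 0.0),
    ("0.5 c, no pauses", 0.5, 0.0),
    ("0.5 c, 500-yr pause per 10 ly hop", 0.5, 500.0),
]:
    t = crossing_time_years(GALAXY_LY, v, pause_years=pause)
    print(f"{label:36s}: {t:.2e} yr")
```

Even the slowest case fits comfortably inside the galaxy’s age, which is why Hart’s argument bites; once ships are fast, the pause at each stop rather than the cruise speed controls how quickly the front moves.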

Image: How quickly would a single civilization using self-replicating probes spread through a galaxy like this one (M 74)? Moreover, what sort of factors might govern this ‘percolation’ of intelligence through the spiral? The answers affect our view of the Fermi question, and thus our own place in the cosmos. Image credit: NASA, ESA, and the Hubble Heritage (STScI/AURA)-ESA/Hubble Collaboration.

Advances in computing led Frank Tipler to push Hart’s views even more strenuously, bringing John von Neumann’s work on self-replicating machines to bear. His insight was to ask what would happen if an extraterrestrial culture began seeding stars with self-reproducing probes, each capable of not only studying a new world but building another probe that could reach yet another star, and so on. Here the numbers become even more telling. Such probes could use local resources in each system to build their next generation, thus nullifying the resource problem. Here’s Tipler on the matter:

…if the motivation for communication is to exchange information with another intelligent species, then as Bracewell has pointed out, contact via space probe has several advantages over radio waves. One does not have to guess the frequency used by the other species, for instance. In fact, if the probe has a von Neumann machine payload, then the machine could construct an artifact in the solar system of the species to be contacted, an artifact so noticeable that it could not possibly be overlooked. If nothing else, the machine could construct a “Drink Coca-Cola” sign a thousand miles across and put it in orbit around the planet of the other species. Once the existence of the probe has been noted by the species to be contacted, information exchange can begin in a variety of ways.

As to the cost of such a vast exploration program, Tipler has this to say:

Using a von Neumann machine as a payload obviates the main objection to interstellar probes as a method of contact, namely the expense of putting a probe around each of an enormous number of stars. One need only construct a few probes, enough to make sure that at least one will succeed in making copies of itself in another solar system. Probes will then be sent to the other stars of the galaxy automatically, with no further expense to the original species.
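The arithmetic behind “one need only construct a few probes” is exponential: if each probe builds k working copies per generation, the number of generations needed to reach N stars grows only logarithmically. The star count, seed number and copy factor below are hypothetical round numbers, and the function name is illustrative:

```python
def generations_needed(n_stars, seed_probes=1, copies_per_probe=2):
    """Smallest number of replication generations g with
    seed_probes * copies_per_probe**g >= n_stars."""
    probes, generations = seed_probes, 0
    while probes < n_stars:
        probes *= copies_per_probe
        generations += 1
    return generations


N_STARS = 10**11  # order of magnitude for the Milky Way (assumption)
for seeds, k in [(1, 2), (4, 2), (4, 10)]:
    g = generations_needed(N_STARS, seeds, k)
    print(f"{seeds} seed probe(s), {k} copies each: {g} generations")
```

A few dozen generations at most suffice, so in this toy picture the total time is set by travel and construction per hop, that is, by how fast the expanding front moves, rather than by the number of copies required.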

A ‘Catastrophic’ Answer to Fermi?

Tipler suggested a timeframe of 300 million years to fill the galaxy with these devices, in an argument that drew fire from Carl Sagan and William Newman, who argued in 1983 that his approach was ‘solipsistic’ because the idea that we were alone in producing a technological civilization was anti-Copernican. And here we need to pause on a concept that has surfaced repeatedly in SETI studies not just in the western nations but also the Soviet Union. The idea of ‘mediocrity’ troubled attendees at the Soviet SETI meeting at the Byurakan Astrophysical Observatory in 1964, to be discussed again in a second meeting (with American scientists as participants) in 1971.

Do we just take the Copernican principle as a given? Sagan clearly thought so. His ‘co-author’ on Intelligent Life in the Universe, Iosif S. Shklovskii, was far less sanguine on the matter:

Since we do not adequately understand the factors leading to the evolution of intelligence and technical civilizations, we cannot reliably estimate the probability that intelligence and technical civilizations will emerge.

Here I’m drawing on Mark Sheridan in his 2023 book SETI’s Scope (How The Search For Extraterrestrial Intelligence Became Disconnected From New Ideas About Extraterrestrials). Sheridan homes in on the philosophical disagreement between emerging Soviet SETI and the ideas in the Drake Equation. At Byurakan, Soviet mathematician A. V. Gladkii challenged the idea, accepted by Sagan, that mathematics could be a recognizable common ground between all intelligences across the stars. And Sheridan quotes Theodosius Dobzhansky, a Ukrainian-born geneticist later working in the U.S., who in a 1972 paper cast doubt on Sagan’s insistence that because intelligence had arisen on our planet, it must arise everywhere life exists. In his view, the principle of mediocrity was being taken several steps too far. Quoting Dobzhansky:

“Natural scientists have been loathe, for at least a century, to assume that there is anything radically unique or special about the planet Earth or about the human species. This is an understandable reaction against the traditional view that Earth, and indeed the whole universe, was created specifically for man. The reaction may have gone too far. It is possible that there is, after all, something unique about man and the planet he inhabits.”

In a fascinating 2009 paper, Milan Ćirković examines the Fermi question in the context of our basic premises about science. As amplified in his later book The Great Silence: Science and Philosophy of Fermi’s Paradox (Oxford University Press, 2018), the Serbian astronomer points to the focus the ‘where are they’ question places upon both Copernicanism and gradualism. In the former, as stated by Sagan and by many other early SETI practitioners, the assumption is that we occupy no privileged place in the cosmos, and thus should expect other civilizations to exist, some of which would be far more advanced than ourselves. Yet we do not observe them.

Many answers can be offered to Fermi’s question, of course, but as we continue probing the cosmos, the silence takes on escalating significance. Must we envision a future in which we abandon Copernicanism and assume that we do not, in fact, occupy a relatively common niche in the cosmos, but rather a special one?

Or should we give up on gradualism, the idea that geophysical processes proceed in the future more or less as they did in the past? The concept is foundational to 18th-century geology and remains a commonplace in current thinking. But ‘catastrophism’ is an obvious factor in the development of life, as extreme ruptures like the K–T extinction event that ended the era of the dinosaurs make clear. Are there common factors that could affect planets throughout what is thought of as the Milky Way’s galactic habitable zone?

The question is the focus of recent work on gamma ray bursts and implies, as Ćirković notes, a ‘reset’ of the clock. That could explain our lack of detections, as it would imply that living worlds, no matter their geological age, have had only about the same amount of time we have had to develop intelligence. The Fermi question highlights both of these key assumptions, while our lack of a solution keeps the tension tight.

The Bracewell paper is “Communications from Superior Galactic Communities,” Nature Volume 186, Issue 4726 (1960), pp. 670-671 (abstract). On the Lagrange search, see Freitas, “A search for natural or artificial objects located at the Earth-Moon libration points,” Icarus, Volume 42, Issue 3 (June, 1980), pp. 442-447 (abstract). Michael Hart’s paper on galactic expansion is “Explanation for the Absence of Extraterrestrials on Earth,” Quarterly Journal of the Royal Astronomical Society, Vol. 16 (1975), p. 128 (full text). Frank Tipler’s paper on self-reproducing probes is “Extraterrestrial Intelligent Beings Do Not Exist,” Quarterly Journal of the Royal Astronomical Society, Vol. 21 (Sept. 1980), pp. 267-281 (full text). Milan Ćirković’s paper on Fermi and Copernicanism is “Fermi’s Paradox – The Last Challenge for Copernicanism?” Serbian Astronomical Journal 178 (2009), pp. 1–20 (preprint).

On Artemis and Starshot

Watching Artemis loft skyward, I relived the Apollo launches, experiencing feelings that no subsequent missions ever engendered. Artemis involves taking humans back into exploration mode with our spacecraft. Getting people out of low-Earth orbit again is a thrill despite the astonishing cost of the SLS launch vehicle. Obviously finding alternatives that would make more frequent flights possible has a major place on the agenda if we are to contemplate a continuous presence on the Moon, not to mention Mars. But for now, what a kick to see that big bird climb.

The distance between actual goals and dreams sometimes shrinks, and we saw recently that Breakthrough Starshot has made serious progress in developing the engineering concepts for an interstellar flyby. Both Artemis and the evolving Starshot design remind me that while most of the population in any era does not venture far from home, there are always a few who do, and those few change the shape of their civilization. Spaceflight obviously demands hardware and missions. Just as obviously, it demands scientists working on ways to push the envelope to attain still more distant goals. And it demands informing the public about where we are.

Be aware that Jim Benford’s recent interview on the matter is now available online. It’s part of a series of presentations offered by Paul Davies, Sara Walker and Maulik Parikh from the Beyond Center for Fundamental Concepts in Science at Arizona State University. This interview powerfully makes the case for funding Phase 2 of the Starshot program and developing early prototypes. The public needs to know about what has been accomplished and what steps lie ahead.

Thinking about Bracewell Probes

Sometimes I jog my perspective on thorny physics issues by going back to earlier work. At our all too infrequent dinners together, Claudio Maccone used to tease me about this, saying that older scientific papers had inevitably been superseded by recent work which would, in any case, incorporate the early documents. But I find that looking at an idea afresh sometimes means re-living its inception, which puts things in context. It was in that spirit that I recently revisited a key paper by Ronald Bracewell.

The name Bracewell holds a certain magic, invoking as it does the era when SETI was just beginning and speculations about extraterrestrial civilizations were getting wider circulation outside the science fiction magazines. Bracewell (1921-2007) was Australian by birth, acquiring degrees in mathematics and engineering and joining in work on World War II-era radar. Following completion of a PhD in physics at Cambridge, he continued his work in the 1950s with a position as senior research officer at the Radiophysics Laboratory of the Commonwealth Scientific and Industrial Research Organisation (CSIRO).

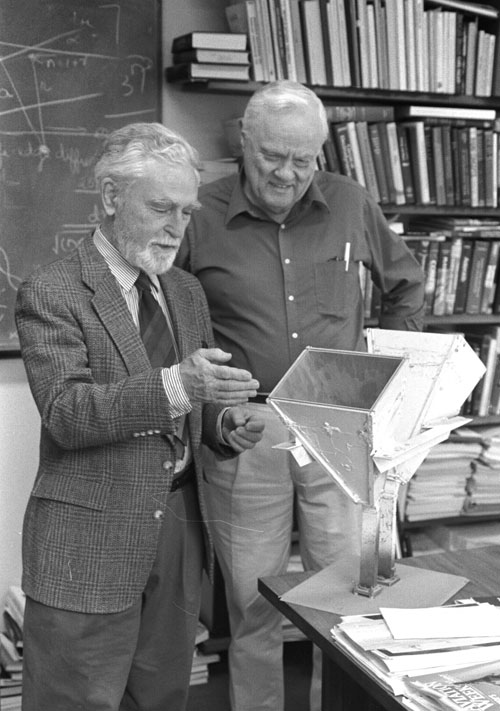

Image: Ronald N. Bracewell, Stanford, CA, March 1983. Credit: NRAO/AUI Archives, Sullivan Collection. Located through Wikimedia Commons.

Bracewell came to the U.S. in 1954 to lecture on radio astronomy at UC-Berkeley before joining the Electrical Engineering department at Stanford University. His contributions to interferometry and to the calibration of radio telescope instruments, which made breakthrough results possible, are substantial, as a quick look through NASA’s Astrophysics Data System under his name reveals. I’ve noticed in scanning through this body of work that his interest in interstellar probes was persistent as he continued to contribute to the science of exoplanet discovery.

Nestled within the ADS results from 1960 is an unusual paper titled “Communications from Superior Galactic Communities,” which ran in Nature that year. It followed closely on the famous paper from Giuseppe Cocconi and Philip Morrison (citation below) that is widely regarded as the beginning of modern attempts to find extraterrestrial civilizations. Given that this paper ran in Nature, which Bracewell obviously knew well because he was writing for it, we can assume that Cocconi and Morrison triggered his decision to write about the SETI question.

I call SETI a ‘question’ in this case because what struck Bracewell about it was its impracticability. Remember, at this same time, Frank Drake had begun planning (in 1959) for the project that would become Ozma, listening to Tau Ceti and Epsilon Eridani in 1960, and it’s evident that Cocconi and Morrison spurred the conference at Green Bank in 1961 that led to the creation of the famous Drake Equation. So we are witnessing western (as opposed to Soviet, with its somewhat different perspectives) SETI beginning to emerge, and it seemed to Bracewell that its approach was off-center.

This comes across in the “Communications from Superior Galactic Communities” paper loud and clear. After reviewing the suggestion from Cocconi and Morrison that 1420 MHz, the frequency of the neutral hydrogen line, was where radio-using civilizations in search of an audience would gather, Bracewell mentions Drake’s plans and points out how unlikely it is that ETI would find us. After all, we were at that time looking for a radio beacon singling us out:

Let us assume that there are one thousand likely stars within the same range as the nearest superior community. This makes it hard for us to select the right one. Furthermore, if this advanced society is looking for us, we can only expect to find them expending such effort as they could afford to expend on the thousand likely stars within the same range of them. It does not seem likely that they would maintain a thousand transmitters at powers well above the megawatt estimated by Drake as a minimum for spanning only 10 light years, and run them for many years, and we could scarcely count on them paying special attention to us. Remember that throughout most of the thousands of millions of years of the Earth’s existence such attention would have been fruitless.
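Bracewell’s objection rests on inverse-square arithmetic. Here is a minimal Python sketch, assuming Drake’s megawatt-for-10-light-years figure as a reference point and assuming that required power simply scales with distance squared (same antennas, same receivers on both ends). It shows how quickly a thousand dedicated beacons turn into a heavy power bill:

```python
# Rough sketch of the inverse-square scaling behind Bracewell's objection.
# Assumption: Drake's ~1 MW minimum for a 10-light-year link is the reference point,
# and with fixed antennas and receivers the power needed grows as distance squared.

REFERENCE_POWER_MW = 1.0      # Drake's minimum, as quoted by Bracewell
REFERENCE_DISTANCE_LY = 10.0  # range that minimum is said to span


def required_power_mw(distance_ly: float) -> float:
    """Transmitter power (MW) needed to deliver the reference flux at distance_ly."""
    return REFERENCE_POWER_MW * (distance_ly / REFERENCE_DISTANCE_LY) ** 2


def fleet_power_mw(n_transmitters: int, distance_ly: float) -> float:
    """Total power (MW) if one transmitter is kept running for each candidate star."""
    return n_transmitters * required_power_mw(distance_ly)


if __name__ == "__main__":
    for d in (10, 50, 100):
        print(f"{d:>4} ly: {required_power_mw(d):6.0f} MW per transmitter, "
              f"{fleet_power_mw(1000, d) / 1000:6.0f} GW for a thousand of them")
```

Under these assumptions, a thousand beacons aimed at stars 100 light-years out would need on the order of 100 GW, run continuously for many years, on the chance that someone happens to be listening.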

The alternative? Send probes to nearby stars designed to attract the attention of technological beings on any planets there. It is indicative of the optimism of the early space era that Bracewell should describe interstellar flight as “…what we ourselves are now discussing and are on the point of doing, probably during this century…” That timetable proved too optimistic; we now look to the possibility of an interstellar probe by the end of this century, but the physics still says the idea is doable.

Unlike SETI at interstellar range, where signal strength falls off with the square of the distance, we would be talking about a probe no more than a few light-minutes or light-hours from its target. Travel times are obviously lengthy, but with an eye toward science delivery for coming generations, Bracewell suggests ‘swarm’ strategies that would deliver probes to perhaps the thousand stars near enough to us to be of interest. Each probe could quickly learn key facts about life and technology on these worlds.
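To put a number on the advantage of a probe on site, here is a hypothetical comparison (the distances are illustrative assumptions, not figures from Bracewell’s paper): the same transmitter either serving as a beacon 10 light-years away or carried aboard a probe parked one light-hour from the target planet. The sketch computes the inverse-square gain in received flux and the delay between asking a question and hearing an answer:

```python
# Hypothetical comparison, not Bracewell's numbers: one transmitter used as a distant
# beacon versus the same transmitter aboard a probe parked near the target planet.
HOURS_PER_YEAR = 365.25 * 24  # one light-year expressed in light-hours


def flux_gain(far_distance: float, near_distance: float) -> float:
    """Inverse-square gain in received flux when a transmitter moves from far to near."""
    return (far_distance / near_distance) ** 2


beacon_distance_lh = 10 * HOURS_PER_YEAR  # assumed beacon 10 light-years away, in light-hours
probe_distance_lh = 1.0                   # assumed probe one light-hour from the planet

gain = flux_gain(beacon_distance_lh, probe_distance_lh)
beacon_round_trip_years = 2 * beacon_distance_lh / HOURS_PER_YEAR
probe_round_trip_hours = 2 * probe_distance_lh

print(f"received-flux gain: {gain:.1e}")
print(f"question-and-answer delay: {beacon_round_trip_years:.0f} years "
      f"vs {probe_round_trip_hours:.0f} hours")
```

The gain of several billion in received flux is the whole point: a modest transmitter nearby outperforms a giant one light-years away, and a two-hour exchange replaces a twenty-year one.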

Image: Ronald Bracewell (left), with Stanford’s Von Eshleman, a major figure in early research into gravitational lensing. Here the two are examining the horn antennae that Bracewell used in 1969 to determine that the Sun is moving relative to the cosmic background radiation. Credit: Linda Cicero/Stanford University.

In 1974, Bracewell would investigate the prospect of a galactic ‘network’ of civilizations, one that we could perhaps join, but even here in 1960 he homes in on the idea. He imagines our world joining a perhaps galaxy-spanning ‘chain of communication,’ and thus dealing with civilizations that have been through the contact scenario many times on many worlds. These would, obviously, be superior technologies from which we could learn new science.

Bracewell’s probes, then, are designed for contact, and meant to be identified by ETI. He would expand these ideas in his 1974 book The Galactic Club: Intelligent Life in Outer Space. The version of this title most likely to be available in used book stores is the 1976 printing from the San Francisco Book Company, and it’s a good thing for any interstellar enthusiast to track down.

Bracewell’s proposed method of making contact brings to mind that, not long after he was writing, Carl Sagan was negotiating with Russian astronomer Iosif S. Shklovskii to publish in English the book that would become, in its western edition, Intelligent Life in the Universe (Holden-Day, 1966). The story of that collaboration is itself interesting, as Shklovskii didn’t realize Sagan would not just publish his book Universe, Life, Mind in the west, but would also heavily annotate it with his own brand of science popularization. That disharmony apart, Sagan’s awareness of Bracewell seems apparent in the method ETI uses to announce its presence to Earth in Sagan’s novel Contact: the re-broadcast of a television transmission from our own past.

Bracewell had suggested something similar, though using radio:

Such a probe may be here now, in our solar system, trying to make its presence known to us. For this purpose a radio transmitter would seem essential. On what wave-length would it transmit, and how should we decode its signal ? To ensure use of a wave-length that could both penetrate our ionosphere and be in a band certain to be in use, the probe could first listen for our signals and then repeat them back. To us, its signals would have the appearance of echoes having delays of seconds or minutes such as were reported thirty years ago by Størmer and van der Pol and never explained.
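Bracewell’s echo idea carries a built-in distance scale. If we assume a repeater re-transmits instantly, so that the whole delay is light travel time out and back, a delay puts an upper bound on how far away it can be. The sample delays below are chosen only to span the “seconds or minutes” in the quote; any processing time aboard the probe would pull it closer:

```python
# Sketch: what an echo delay implies about where a repeater could be.
# Assumption: the repeater re-transmits instantly, so the whole delay is light travel
# time out and back. Processing time aboard a probe would shrink these distances,
# so the results are upper bounds.
C_KM_S = 299_792.458       # speed of light, km/s
AU_KM = 149_597_870.7      # astronomical unit, km
MOON_KM = 384_400          # mean Earth-Moon distance, km


def max_distance_km(delay_s: float) -> float:
    """Largest one-way distance consistent with a round-trip delay of delay_s seconds."""
    return C_KM_S * delay_s / 2


for delay in (3, 15, 60, 300):  # illustrative delays in seconds
    d = max_distance_km(delay)
    print(f"{delay:>4} s -> {d:>12,.0f} km "
          f"({d / MOON_KM:6.1f} lunar distances, {d / AU_KM:.4f} AU)")
```

Even a delay of a few seconds places a hypothetical repeater beyond the Moon, while delays of minutes still keep it well inside Earth’s orbit around the Sun.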

I don’t want to get caught up in the famous delayed-echo story of the 1920s, but the short version is that amateur radio operator Jørgen Hals observed echoes of a Dutch shortwave station in 1927 and took the matter to Norwegian physicist Carl Størmer and Dutch physicist Balthasar van der Pol. The echoes became the subject of work by Scottish writer Duncan Lunan, who explored them as possible signs of a Bracewell probe operating in the Solar System. The claim became controversial, to say the least, and has since been refuted, although Lunan continued to investigate it. And it is also true that long-delayed echoes have been attributed to various natural sources but remain enigmatic.

In any case, Bracewell advocated remaining alert to a possible interstellar origin for signals that are unusual, for the benefits of joining in an interstellar conversation would be immense. He calculated that even if there were few civilizations that outlived their adolescence (remember, this was in the Cold War era, with nuclear destruction always on our minds), there might still be a few that survived and went on to long lifetimes. The paper continues:

Presumably such an ancient association would be very able indeed technically, and might seek us out by special means that we cannot guess. Whether they would be interested in rudimentary societies which, in their experience, would usually have burnt themselves out before they could be located and reached, is hard to say. Such communities would be collapsing at the rate of two a year (10³ in 500 years), and they might already have satisfied their curiosity by archæological inspection made at leisure on sites nearer home. On the other hand, the prospect of catching a technology near its peak might be a strong incentive for them to reach us.

Bracewell’s place in the early SETI literature, a literature that would later take in the work of Michael Hart and Frank Tipler, can’t be examined without bringing in John von Neumann, whose self-reproducing machines would likewise have spurred Bracewell’s imagination, though his own concept did not include this capability. I want to try to fit some of these pieces together and likewise bring back Sagan and Shklovskii in the next essay. What we’re juggling here is the very concept of what Sagan called ‘mediocrity,’ which he described as ‘the idea that we are not unique.’ Do we sometimes stretch our Copernican understanding of the cosmos too far?

The paper is Bracewell, “Communications from Superior Galactic Communities,” Nature Volume 186, Issue 4726 (1960), pp. 670-671. The Cocconi & Morrison paper is “Searching for Interstellar Communications,” Nature 184 (4690) (1959), pp. 844–846.

Interstellar Choices: Where to Look for Habitability?

A recent conversation with a friend who works the futures markets has me thinking about the nature of daydreaming. This is a guy who tracks fast-breaking numbers all day long so as to avoid getting a freight-car’s worth of coffee beans or some other commodity delivered to his condo. His numbers, he says, are all business, and allow no time for daydreaming. Whereas the numbers I study have no deadline, and give me plenty of time for reflection, moments of gazing off into the distance and just letting thoughts run. Today, for example, I’m troubled about what we know about the age of the galaxy.

If daydreaming sounds abstract, consider that this is an issue that has a bearing on our own standing in the cosmos. We have a pretty good read on the age of the Earth, and can peg it at around 4.5 billion years. Various sources tell me the Big Bang occurred some 13.8 billion years ago, with the formation of the Milky Way beginning not terribly long thereafter. Let’s say for the sake of argument that our galaxy is 13.6 billion years old, a figure that NASA recently cited.

So when did worlds like the Earth – terrestrial planets – begin to appear? I think I’ve been writing about this question since Centauri Dreams first appeared, as it draws upon the work of Charles Lineweaver (Australian National University), who in 2001 landed on the figure of 9 billion years ago. The problem is immediately apparent: The galaxy seems to be stuffed with many a planet that is older than our own, and in many cases considerably so. Lineweaver’s work found that the median age of terrestrial planets is on the order of 6.4 billion years.

Here we tug again at the Fermi question – ‘Where are they?’ – since these numbers suggest that the opportunity for civilizations to emerge was robust long before our planet began to coalesce. Since that seminal 2001 paper, which I’m surprised is not cited more than it is, Lineweaver has continued to explore the numbers, and they are likewise massaged in other subsequent papers, but rather than going into the details, let’s just say that we’re still left with a galaxy far older than our planet. Give an extraterrestrial civilization a 2 billion year head start and you might think they would be visible to us in some way, or maybe not. Maybe civilizations don’t live all that long?
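The head start is simple subtraction using the figures already quoted in this post (Lineweaver’s onset and median ages, an Earth age of 4.5 billion years, and NASA’s 13.6-billion-year galactic age). A back-of-envelope Python sketch makes the gaps explicit:

```python
# Back-of-envelope ages in billions of years, using only figures quoted in this post.
GALAXY_AGE = 13.6          # figure NASA recently cited
EARTH_AGE = 4.5
FIRST_TERRESTRIAL = 9.0    # Lineweaver: terrestrial planets began forming ~9 Gyr ago
MEDIAN_TERRESTRIAL = 6.4   # Lineweaver: median age of terrestrial planets

head_start_typical = MEDIAN_TERRESTRIAL - EARTH_AGE  # typical planet vs Earth
head_start_oldest = FIRST_TERRESTRIAL - EARTH_AGE    # earliest planets vs Earth
earth_fraction_of_galaxy = EARTH_AGE / GALAXY_AGE    # share of galactic history

print(f"typical terrestrial planet: ~{head_start_typical:.1f} Gyr older than Earth")
print(f"earliest terrestrial planets: ~{head_start_oldest:.1f} Gyr older")
print(f"Earth has existed for ~{earth_fraction_of_galaxy:.0%} of the galaxy's history")
```

The roughly 2 billion year head start above is just the gap between Lineweaver’s median and Earth’s age; the earliest terrestrial worlds would have had more than twice that.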

See Stephen Webb’s wonderfully readable If the Universe is Teeming with Aliens, Where is Everybody? (Springer 2015), the latest edition of which offers 75 answers to Fermi that range from the preposterous to the ingenious. I also send you to Milan Ćirković’s absorbing The Great Silence: Science and Philosophy of Fermi’s Paradox (Oxford, 2018), which mines the depths of a question that many do not consider a paradox, and others find deeply troubling no matter what the name. Paul Davies’ work is a further reminder of how rich the literature on Fermi is; see his The Eerie Silence (Mariner, 2010) for still further insights.

Thinking about a culture that was around in the days when the first signs of life began to appear on Earth is indeed cause for daydreaming. I notice this morning that Avi Loeb, in his lively publishing venture on Medium, is looking at how long-lived civilizations might cope with the problems raised by their longevity. It’s one thing to consider our own fate when we have a billion years or so before the Sun grows too hot for Earth to remain habitable, a span that completely dwarfs our species’ scant time on the planet. But what would we do if we actually survived for that billion years? Would we go elsewhere, or find a way to move the Earth to an orbit that would provide habitable conditions for millions, even billions of years more?

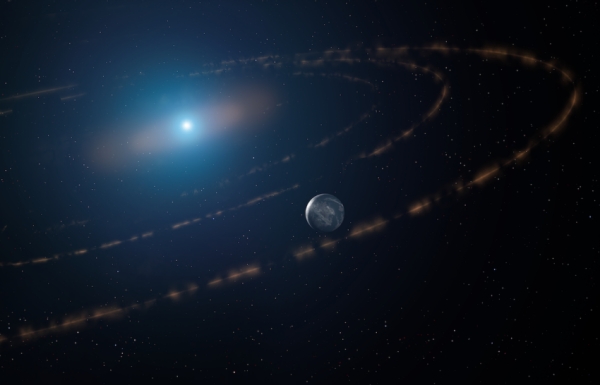

This is pretty lively stuff, for it opens up the possibility of terrestrial-class planets orbiting far outside what was once their habitable zone. It also brings white dwarfs into the picture, since they could still sustain life for a species that insisted on staying within its natal stellar system. An ETI that can move planets might move one again, this time back in toward the Earth-sized remnant of its former red giant star. I would assume interstellar relocation would make more sense, but no one can know what alien minds might think of this.

Loeb has worked on these issues before:

In 2013, I co-authored a paper with Dani Maoz… which showed that during a transit by an Earth-mass planet across a white dwarf, the transmission spectrum of the planet’s atmosphere would show prominent bio-markers such as molecular oxygen absorption at a wavelength of ∼ 0.76 micrometers. We calculated that a potentially life-sustaining Earth-like planet transiting a white dwarf would be detectable by the Webb telescope in about 5 hours of total exposure time, integrated over 160 two-minute transits.
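The numbers in Loeb’s description hang together. A rough Python sketch, using assumed values that do not come from the Loeb and Maoz paper (a 0.6 solar-mass white dwarf with a radius of about 1.4 Earth radii, an Earth-size planet on a circular orbit at 0.01 AU, and a central transit), shows why such transits last only a couple of minutes and how 160 of them add up:

```python
import math

# Sketch: why white dwarf transits last only minutes, and how 160 of them add up.
# Assumed, illustrative values (not from Loeb and Maoz): 0.6 solar-mass white dwarf,
# radius ~1.4 Earth radii, Earth-size planet on a circular 0.01 AU orbit, central transit.
G = 6.674e-11       # gravitational constant, m^3 kg^-1 s^-2
M_SUN = 1.989e30    # kg
R_EARTH = 6.371e6   # m
AU = 1.496e11       # m

m_wd = 0.6 * M_SUN
r_wd = 1.4 * R_EARTH
r_planet = R_EARTH
a = 0.01 * AU

v_orbit = math.sqrt(G * m_wd / a)            # circular orbital speed, m/s
transit_s = 2 * (r_wd + r_planet) / v_orbit  # first to last contact for a central transit
period_h = 2 * math.pi * a / v_orbit / 3600  # orbital period, hours
total_h = 160 * 2 / 60                       # 160 two-minute transits, in hours

print(f"orbital speed ~{v_orbit / 1000:.0f} km/s, period ~{period_h:.1f} h")
print(f"transit duration ~{transit_s:.0f} s; 160 x 2 min = {total_h:.1f} h")
```

With a planet racing along at roughly 230 km/s past a star barely larger than itself, each transit is over in about two minutes, and 160 of them come to a little over five hours of exposure, in line with Loeb’s “about 5 hours.”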

The method is familiar, one that we’ve discussed here often ever since the first transmission spectroscopy results began showing us what could be found in a hot Jupiter’s atmosphere. I love the idea of expanding the search for habitable worlds into environments as seemingly bizarre as these, although the limitations on telescope time (demand is high!) would make such searches lower priority than, say, a close look at a nearby red dwarf’s habitable zone planet. Here again we have more SF story material, though. All the possible planets around white and red dwarf stars make for fertile hunting for story crafters.

Image: Artist’s impression of a still unconfirmed planet around the white dwarf star WD1054-226 orbited by clouds of planetary debris. Credit Mark A. Garlick / markgarlick.com. License type Attribution (CC BY 4.0).

Loeb also mentions a paper I had missed in earlier discussions of stellar ages. In 2019, Nicholas Fantin (University of Victoria, BC) and colleagues extended the Lineweaver work I led this post with to include white dwarfs, considering them as age markers that help us trace the development of the galaxy. The bare bones of this method are described here:

We develop a new white dwarf population synthesis code that returns mock observations of the Galactic field white dwarf population for a given star formation history, while simultaneously taking into account the geometry of the Milky Way (MW), survey parameters, and selection effects. We use this model to derive the star formation histories of the thin disk, thick disk, and stellar halo.

Skipping the details, I just want to cite a few results that back up the interesting point about the relative youth of the Sun. According to this model, the Milky Way’s thick disk began forming stars 11.3 ± 0.5 billion years ago, and its rate of star formation peaked 9.8 ± 0.3 billion years ago. The model then traces a slow decline in star formation that eventually leveled off to a constant rate persisting to the present. The data set relies heavily on results from the Gaia mission and is dominated by disk stars in the solar neighborhood. Larger samples will come from surveys like Pan-STARRS DR2 and the LSST, as well as from WFIRST and Euclid.

Again we face what Tennyson called ‘the long result of time.’ So much time, in fact, that civilizations in their multitudes would have had the chance to form. Ćirković notes in The Great Silence just how much deeper the Fermi question becomes when we consider it in light of such findings. He points out that the original Fermi statement (WeakFP) could be taken to ask why we have seen no evidence of extraterrestrials on Earth or in the Solar System. Keep extending the search outward, though, and the issue gets more and more puzzling. Take the entirety of our past light cone as your canvas and the lack of signs of extraterrestrial activity despite the billions of years civilizations could have existed escalates in impact. This is why Webb’s book is as long as it is.

All this is occurring even as we continue to rack up exoplanets of all descriptions including those of terrestrial mass, and even as the prospect of interstellar travel is now under serious investigation, as we’ve just been reminded by Jim Benford’s work with Breakthrough Starshot. We have developed, says Ćirković:

Improved understanding of the feasibility of interstellar travel in the classical sense and in the more efficient form of sending inscribed matter packages over interstellar distances. The latter result is particularly important since it shows that contrary to the conventional skeptical wisdom shared by some of the SETI pioneers, it makes good sense to send (presumably extremely miniaturized) interstellar probes, even if only for the sake of communication.

Just where to send such probes? The nearest stars are obvious candidates, with Proxima Centauri b leading the list, but fleshing out a target roster – today an exercise in theory more than planning – may take in destinations we have only begun to consider. That’s assuming our early work on interstellar probe technologies continues to develop options for ever more distant targets. Imagine ‘swarm’ flybys of interesting systems, a capability we may well be able to deploy some time late in this century.

The nearest white dwarf to the Sun, by the way, is Sirius B, some 8.6 light-years out. The closest solitary white dwarf is van Maanen’s Star, about 14 light-years distant. The closest red giant is Pollux in Gemini, at about 34 light-years.
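For a feel of what these targets would mean for a flyby mission, here is a rough Python sketch. It assumes a constant cruise speed of 0.2 c (Breakthrough Starshot’s often-quoted goal), ignores acceleration phases, and ignores relativistic corrections, which at 0.2 c amount to a clock slowdown of only about 2%. It adds the light-travel time for the first data to reach Earth:

```python
# Sketch: cruise time and time until first data reach Earth for the targets above.
# Assumptions: constant cruise at 0.2 c (Starshot's often-quoted goal), no acceleration
# or deceleration phases, Earth-frame times with no relativistic corrections.
TARGETS_LY = {
    "Proxima Centauri": 4.24,
    "Sirius B": 8.6,
    "van Maanen's Star": 14.0,
    "Pollux": 34.0,
}


def mission_years(distance_ly: float, speed_c: float = 0.2) -> tuple[float, float]:
    """Return (cruise time, cruise time plus signal return time), both in years."""
    cruise = distance_ly / speed_c
    return cruise, cruise + distance_ly  # radio signal returns at 1 light-year per year


for name, d in TARGETS_LY.items():
    cruise, data_home = mission_years(d)
    print(f"{name:<18} {d:5.2f} ly  cruise {cruise:6.1f} yr  data home {data_home:6.1f} yr")
```

Under these assumptions Proxima Centauri b is a roughly 25-year proposition from launch to first data, while a flyby of Pollux would take well over two centuries, which helps explain why the nearest stars dominate any realistic target list.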

The paper is Fantin et al., “The Canada-France Imaging Survey: Reconstructing the Milky Way Star Formation History from Its White Dwarf Population,” The Astrophysical Journal Vol. 887, No. 2 (17 December 2019), 148. Charles Lineweaver’s 2001 paper is “An Estimate of the Age Distribution of Terrestrial Planets in the Universe: Quantifying Metallicity as a Selection Effect,” Icarus Vol. 151, No. 2 (2001), pp. 307-313. His follow-up with Yeshe Fenner and Brad K. Gibson is “The Galactic Habitable Zone and the Age Distribution of Complex Life in the Milky Way,” Science Vol. 303, No. 5654 (2 January 2004), pp. 59-62.