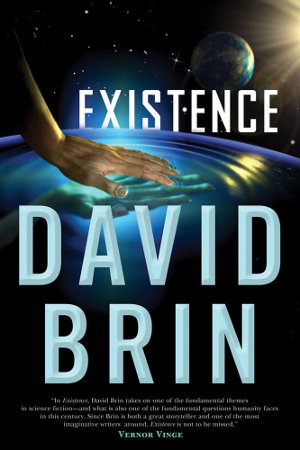

As we’ve had increasing reason to speculate, travel to the stars may not involve biological life forms but robotics and artificial intelligence. David Brin’s new novel Existence (Tor, 2012) cartwheels through many an interstellar travel scenario — including a biological option involving building the colonists upon arrival out of preserved genetic materials — but the real fascination is in a post-biological solution. I don’t want to give anything away in this superb novel because you’re going to want to read it yourself, but suffice it to say that uploading a consciousness to an extremely small spacecraft is one very viable possibility.

So imagine a crystalline ovoid just a few feet long in which an intelligence can survive, uploaded from the original and, as far as it perceives, a continuation of that original consciousness. One of the ingenious things about this kind of spacecraft in Brin’s novel is that its occupants can make themselves large enough to observe and interact with the outside universe through the walls of their vessel, or small enough for quantum effects to take place, lending the enterprise an air of magic as they ‘conjure’ up habitats of choice and create scientific instruments on the fly. You may recall that Robert Freitas envisioned uploaded consciousness as a solution to the propulsion problem, with a large crew embedded in something the size of a sewing needle.

Brin’s interstellar solutions, of which there are many in this book, all adhere strictly to the constraints of Einstein, and anyone who has plumbed the possibilities of lightsails, fusion engines and antimatter will find much to enjoy here, with nary a warp-drive in sight. The big Fermi question looms over everything as we’re forced to consider how it might be answered, with first one and then many solutions being discarded as extraterrestrials are finally contacted, their presence evidently constant through much of the history of the Solar System. Brin’s between-chapter discussions will remind Centauri Dreams readers of many of the conversations we’ve had here on SETI and the repercussions of contact.

Into the Crystal

At the same time that I was finishing up Existence, I happened to be paging through the proceedings of NASA’s 1997 Breakthrough Propulsion Physics Workshop, which was held in Cleveland. I had read Frank Tipler’s paper “Ultrarelativistic Rockets and the Ultimate Fate of the Universe” so long ago that I had forgotten a key premise that relates directly to Brin’s book. Tipler makes the case that interstellar flight will take place with payloads weighing less than a single kilogram because only ‘virtual’ humans will ever be sent on such a journey.

Thinking of Brin’s ideas, I read the paper again with interest:

Recall that nanotechnology allows us to code one bit per atom in the 100 gram payload, so the memory of the payload would [be] sufficient to hold the simulations of as many as 10^4 individual human equivalent personalities at 10^20 bits per personality. This is the population of a fair sized town, as large as the population of ‘space arks’ that have been proposed in the past for interstellar colonization. Sending simulations — virtual human equivalent personalities — rather than real world people has another advantage besides reducing the mass ratio of the spacecraft: one can obtain the effect of relativistic time dilation without the necessity of high γ by simply slowing down the rate at which the spacecraft computer runs the simulation of the 10^4 human equivalent personalities on board.
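Tipler’s storage figure is easy to sanity-check. The sketch below is only a rough estimate under an assumption of my own: the quoted passage does not say what the payload is made of, so I take it to be pure carbon (12 g/mol). The function names are hypothetical, chosen for illustration. Under that assumption, 100 grams at one bit per atom comes to about 5 × 10^24 bits, the same order of magnitude as the 10^4 personalities at 10^20 bits each that Tipler cites.

```python
# Rough check of the "one bit per atom" storage estimate.
# Assumption (not from Tipler's quoted text): the payload is pure carbon.

AVOGADRO = 6.02214076e23  # atoms per mole


def payload_bits(mass_grams, molar_mass_g_per_mol=12.0):
    """Bits available at one bit per atom for a payload of the given mass."""
    moles = mass_grams / molar_mass_g_per_mol
    return moles * AVOGADRO


def personalities(mass_grams, bits_per_personality=1e20,
                  molar_mass_g_per_mol=12.0):
    """How many emulated personalities fit, at the quoted bits per personality."""
    return payload_bits(mass_grams, molar_mass_g_per_mol) / bits_per_personality


if __name__ == "__main__":
    print(f"bits in 100 g: {payload_bits(100.0):.2e}")
    print(f"personalities: {personalities(100.0):.1e}")
```

A heavier element would lower the atom count and a lighter one would raise it, but the answer stays within an order of magnitude or so of Tipler’s town-sized crew.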

Carl Sagan explored the possibilities of time dilation through relativistic ramjets, noting that fast enough flight allowed a human crew to survive a journey all the way to the galactic core within a single lifetime (with tens of thousands of years passing on the Earth left behind). Tipler’s uploaded beings simply slow the clock speed at will to adjust their perceived time, making arbitrarily long journeys possible with the same ‘crew.’ The same applies to problems like acceleration, where experienced acceleration could be adjusted to simulate 1 gravity or less, even if the spacecraft were accelerating in a way that would pulverize a biological crew.
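To make the comparison concrete, here is a minimal sketch of the two ways of shrinking perceived travel time: the Lorentz factor γ = 1/√(1 − β²) for a fast ship, and a simple slowdown of the simulation clock for an uploaded crew. It assumes a constant cruise speed and ignores the acceleration and deceleration phases, and the function and parameter names (including `clock_slowdown`) are hypothetical, chosen for illustration rather than taken from Tipler’s paper.

```python
import math


def lorentz_gamma(beta):
    """Lorentz factor for a speed given as a fraction of light speed."""
    if not 0.0 <= beta < 1.0:
        raise ValueError("beta must satisfy 0 <= beta < 1")
    return 1.0 / math.sqrt(1.0 - beta * beta)


def perceived_years(distance_ly, beta, clock_slowdown=1.0):
    """Years experienced by the crew on a constant-speed trip.

    clock_slowdown is the factor by which the simulation runs slower than
    real time aboard the ship (1.0 means an ordinary, unslowed crew).
    """
    earth_years = distance_ly / beta                  # time in Earth's frame
    ship_years = earth_years / lorentz_gamma(beta)    # relativistic dilation
    return ship_years / clock_slowdown                # simulation slowdown


if __name__ == "__main__":
    # A slow ship with a heavily slowed simulation clock...
    print(f"{perceived_years(100.0, 0.1, clock_slowdown=1000.0):.2f} years")
    # ...versus a fast ship relying on time dilation alone.
    print(f"{perceived_years(100.0, 0.9999995):.2f} years")
```

The point of the comparison is that the slow ship buys the same subjective effect with a software setting that the fast ship must pay for in enormous kinetic energy.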

Tipler is insistent about the virtues of this kind of travel:

Since there is no difference between an emulation and the machine emulated, I predict that no real human will ever traverse interstellar space. Humans will eventually go to the stars, but they will go as emulations; they will go as virtual machines, not as real machines.

Multiplicity and Enigma

Brin’s universe is a good deal less doctrinaire. He’s reluctant to assume a single outcome to questions like these, and indeed the beauty of Existence is the fact that the novel explores many different solutions to interstellar flight, including the dreaded ‘berserker’ concept in which intelligent machines roam widely, seeking to destroy biological life-forms wherever found. We tend to think in terms of a single ‘contact’ with extraterrestrial civilization, probably through a SETI signal of some kind, but Brin dishes up a cluster of scenarios, each of which raises as many questions as the last and demands a wildly creative human response.

Thus Brin’s character Tor Povlov, a journalist terribly wounded by fire who creates a new life for herself online. Here she’s pondering what had once been known as the ‘Great Silence’:

Like some kind of billion-year plant, it seems that each living world develops a flower — a civilization that makes seeds to spew across the universe, before the flower dies. The seeds might be called ‘self-replicating space probes that use local resources to make more copies of themselves’ — though not as John Von Neumann pictured such things. Not even close.

In those crystal space-viruses, Von Neumann’s logic has been twisted by nature. We dwell in a universe that’s both filled with ‘messages’ and a deathly stillness.

Or so it seemed.

Only then, on a desperate mission to the asteroids, we found evidence that the truth is… complicated.

Complicated indeed. Read the book to find out more. Along the way you’ll encounter our old friend Claudio Maccone and his notions of exploiting the Sun’s gravitational lens, as well as Christopher Rose and Gregory Wright, who made the case for sending not messages but artifacts (‘messages in a bottle’) to make contact with other civilizations. It’s hard to think of a SETI or Fermi concept that’s not fodder for Brin’s novel, which in my view is a tour de force, the best of his many books. As for me, the notion of an infinitely adjustable existence aboard a tiny starship, one in which intelligence creates its own habitats on the fly and surmounts time and distance by adjusting the simulation, continues to haunt my thoughts.

Summer Evenings Aboard the Starship

For some reason Ray Bradbury keeps coming back to me. Imagine traveling to the stars while living in Green Town, Illinois, the site of Bradbury’s Dandelion Wine. If Clarke was right that a sufficiently advanced technology would be perceived as magic, then this is surely an example, as one of Brin’s characters muses while confronting a different kind of futuristic virtual reality:

Wizards in the past were charlatans. All of them. We spent centuries fighting superstition, applying science, democracy, and reason, coming to terms with objective reality… and subjectivity gets to win after all! Mystics and fantasy fans only had their arrow of time turned around. Now is the era when charms and mojo-incantations work, wielding servant devices hidden in the walls…

As if responding to Ika’s shouted spell, the hallway seemed to dim around Gerald. The gentle curve of the gravity wheel transformed into a hilly slope, as smooth metal assumed the textures of rough-hewn stone. Plastiform doorways seemed more like recessed hollows in the trunks of giant trees.

Splendid stuff, with a vivid cast of characters and an unexpected twist of humor. Are technological civilizations invariably doomed? If we make contact with extraterrestrial intelligence, how will we know if its emissaries are telling the truth? And why would they not? Is the human race evolutionarily changing into something that can survive? My copy of Existence (I read it on a Kindle) is full of underlinings and bookmarks, and my suspicion is that we’ll be returning to many of its ideas here in the near future.

Downloaded the book to my Kindle immediately after reading this!

@Eudoxia

Precisely!! You reduced the problem with whole brain emulation right down to one sentence. That is exactly why it is fundamentally impossible to transfer a human mind to a computer: if consciousness is emergent from a purely physical biological phenomenon, then you can’t remove that foundation and replace it with something else while retaining that original consciousness.

I don’t know how to scan a brain so perfectly that you can create a perfect simulation of it, but whack-and-slice, as you put it, is a terrible way to start. After electrochemical activity ceases in the brain, neuronal integrity deteriorates in a matter of seconds. The slightest delay in preserving the tissue seriously skews in vitro research results. This should tell you how well plasticizing a dead brain will work in preserving details of the original’s personality- in fact, if the brain is dead, there won’t be any mind left to scan!!

It is relevant in that it shoots down all versions of the transhumanist’s dream of “uploading” themselves to a computer to live indefinitely as “post-human” super-intelligences. It also makes this technique much, much less desirable for anyone who wants to become a star traveller: even if “brain simulation” is ever possible, you yourself cannot travel to the stars as an “upload” since your mind is inseparable from your brain. No “Green Town Among the Stars” for you, and what gets to go is at best a simulation that can pass a Turing test. What good is that to an astronaut?

@Eniac

It is not a method of life extension, in my opinion, because it destroys the original and creates a copy±error, unless you view the copies as being essentially the same as you and thus a continuation of you.

There are many other uses of WBE that don’t depend on long debates about the nature of personal identity, as I wrote here:

In other words, it’s the ultimate* form of human enhancement. Then there are uses like Robin Hanson’s idea of the “upload economy” (basically, humans become function calls) and those discussed in Brin’s book and Tipler’s paper, although I am not entirely in agreement: humans are really, really frail, but I don’t see a computer with elements measured in nanometers or even ångströms as being much more resilient to the conditions of interstellar flight. Specifically, biology is self-repairing and a synapse can very well withstand a cosmic ray; and biology’s ability to self-repair depends on fundamental properties of it that I don’t believe can be easily transferred (or transferred at all) to neuromorphic machines in the solid state. Electronics can be hardened, but they may end up requiring more shielding than humans, simply because the switches and transistors or transistor-equivalents will have to be smaller and faster than synapses (and thus frailer) in order to compete with humans in productivity. The one advantage I see to sending uploads to space is that they can be made redundant: multiple neuromorphic machines containing copies of the same person could be strung along in the same ship, millions of kilometers apart, ensuring that some will survive all but the most dangerous journeys.

* In its capabilities, not necessarily its desirability.

@Eniac

You still aren’t seeing my point. As Eudoxia so aptly put it, the central contradiction of the concept of whole brain emulation is that it begins by saying that consciousness is emergent from purely physical biological phenomena, and then proposes we remove that foundation and replace it with something else. It doesn’t matter what anyone else thinks, including the simulation. When your brain is scanned and resigned to the shredder, you die and experience death.

You are still adhering to a sort of mind/body duality. You seem to think that your continuity of existence and sense of identity are not tied in with the purely physical biological activity of your brain, but with some sort of “software” that is separate from the brain and can be transferred to a computer, and that as long as that “software” exists, you have continuity of existence. This is dead wrong.

Your mind and sense of self emerge from the biological activity of your brain, and you can’t remove that foundation and replace it with something else. Whatever is in the crystalline ovoid is not you, nor even a branching of your continuity of existence. It is simply a simulated copy of your brain capable of passing the Turing test. It is not the same “you” that walked into the scanning room. You are not, after all, a piece of paper but a living human with a functioning brain, and if that functioning brain is not transferred by the “mind uploading” process, you are not transferred. Got it?

I think you are right, that is pretty much what I think, and I do not see why it is dead wrong. The mind is to the body as a document is to paper, as software is to computer. If you want to call that adhering to a sort of mind/body duality, then I am guilty of it.

You keep repeating this as if it was somehow self-evident, but to me it does not make any sense. How does a mind “emerge” from a body, and how is that different from a document “emerging” from a piece of paper, or a Google search “emerging” from a computer screen?

Is it because the latter have been put there by human action, while the former has been built by a lifetime of learning? Is that the difference that makes it impossible to transfer minds to a different substrate, while it is easy for documents or software? Are you sure you mean impossible rather than difficult? Difficult I can live with, but impossible is the kind of absolute claim that needs to be better supported than by steady repetition of the “emergent” sentence that I do not find convincing.

If what is in the ovoid has my memories, my experiences, my thoughts and enough sensory ability to read and write, preferably also hear and see, then it is me. Not the same “me” that walked into the scanning room, but only in the sense as “me” today is always different from “me” yesterday. If the process is not perfect, it may feel less like the passing of an ordinary day and more like awakening after a terrible accident with limbs missing and mental or physical disability from brain damage. A different “me”, for sure, but still me, with the same justification as is used for real victims of accident.

Once the process is perfected to where it is more like routine surgery than a terrible accident, I think you will find plenty of people who want to undergo it, and plenty of ovoid inhabitants that are happy that they did. You may also find a lot of “originals” who still walk around in their bodies and feel like they drew the short straw. But, that’s life, you can always try again…

No, I did not get it. We call the mind by this name precisely to distinguish it from the body. The mind and the body are different things. You can have a body without a mind. You cannot have a mind without a body, but nowhere is it written that the body cannot change under the mind. In fact it does change, naturally with time, sometimes unnaturally in accidents. The continuity of self is generally considered unaffected by even fairly drastic changes.

You have previously allowed that a simulation can for purposes of discussion be assumed to be perfectly accurate. Are you saying that replacing the real body by a perfectly accurate simulation of it is somehow more disruptive of the continuity of self than, say, a night of heavy drinking involving the loss of many neurons?

@Eudoxia:

It is, then, in my view, life extension, because I do view the copies as being essentially the same as me, as long as the ±error part is not larger than such changes as may occur after excessive partying or the minor head trauma common in many sports…

I suppose the way to see it is that you create from one mortal individual two of the same identity, one mortal and one not. Before the procedure, I can look forward to both. After the procedure, one of me is still mortal, but can look forward to trying again. The other me has my life extended and will probably rush to sign the contract with “6-sigma Triple Off-Site Backup Vault Co.” ensuring its continued existence even under extreme circumstances. Eventually, the original will die, but the copies will, after some mourning, move on with their lives and strive to accomplish all of their shared dreams and aspirations, which are, of course, the same as those of the deceased original.

@Eniac

Yes, thinking of the mind as “software” that can be copied to a different substrate while preserving continuity of existence is a sort of mind-body dualism. This idea arises from the misconception that the brain is a sort of computer with biological “software” that can be decoupled from the physical structure of the brain and transferred to a new substrate. You nailed the difference between a computer and a brain in the second paragraph I quoted from you, however!!

The brain is never a tabula rasa (blank slate) that is then “programmed” with a mind like an empty computer box. Your brain was wired up and functioning as a mind from your birth, and furthermore the brain forms as the embryo develops. Your personality, memories, and sense of self all are built over a lifetime, and what you consider “you” is an artifact of the changing connections between the 100 billion neurons in your brain. Your mind is tied with your physical brain, not with information about your brain.

With some incredibly advanced scanning and computer technology, maybe our descendants could make simulations of a human brain that can pass the Turing test, but this process can’t transfer those 100 billion neurons that your sense of self is tied up with- and thus, your sense of self can’t be transferred to the “crystalline ovoid”. Creating these simulations does not transfer some sort of ephemeral “software” that is contained in a blank slate brain, but simply recreates a brain’s physical structure like a malfunctioning transporter making a duplicate of Will Riker.

So, do I mean impossible when I say impossible? If by “mind uploading” you mean transferring your mind to a different substrate by a physical process, then yes, that’s intrinsically impossible. However, if by “mind uploading” you mean the creation of a simulation of your brain that can pass a Turing test but which leaves your original brain and mind intact, I can only say that that is extremely difficult and quite likely infeasible, but not fundamentally impossible.

This is not true for the definition of “dualism” as used in philosophy, in which it is a technical term. The “mind as software” is instead firmly rooted in physicalism, which is non-overlapping with dualism. But this is just semantics, really.

I do not agree with your assertion that the mind is “tied” with the physical brain any tighter than a document is tied to paper. I do not know how you can be so sure of it, either, as you have not provided a proof or even a strong argument. Whether printed on a laser printer, laboriously chiseled out of stone by a sculptor, or developed in the womb and then imprinted with experience, all of these are merely complex ways of generating an “emergent” configuration of matter. A configuration that has meaning on a higher level than the matter from which it is made, a meaning that can be abstracted from that matter. As physicalists, though, we also have to be sure that “higher level” does not at all mean “beyond physical”.

The other thing that I do not agree with is that apparently you make a distinction between an original mind and an entity that is functionally identical. In philosophy, this is known as the zombie, and as far as I understand, physicalism does not allow a distinction between a real person and a zombie. I am not a philosopher, though, so I could be mixing this up.

My own opinion is that the mind is abstractable from the body, and that there is no distinction between a mind and a copy of a mind that thinks and acts exactly like that mind. I know I am not alone in this, and I believe it is you (and Athena, whom you seem to be channeling) that are in the minority here.

This being a philosophical question, there is probably no point in trying to get at the “truth” by further discussion, so we should probably leave it at that.

Allow me to comment briefly on the question: can a brain be emulated (and I presume what is meant is perfect emulation) if the physical substrate is replaced by something radically different? I can’t answer that question, and with all due respect, no one else can either, though it’s fun for a while to fantasize regarding it. There is no question that the more we learn about the brain the more we will be able to encode mind-type functionality into physical matter and make some really cool laptops at the very least. But that is rather removed from this perfect emulation business. Moreover, I think the farther we get from the perfect emulation business, the more realistic will be our expectations. According to Dr. Elkhonon Goldberg, while we’re getting ever deeper into the brain via sensors and observational capabilities and so forth, the breakthrough everybody has hoped for has not happened yet. For the record, I think it will at some point, but if Dr. Goldberg says we don’t know how or when that will happen, I accept that answer.

Now, I have read speculations that a post-Singularity (sorry) world would be far more inclined to turn inward than outward. We can say confidently that a good-enough immersive virtual world would open up amazing and garish possibilities for recreation and sensory stimulation. In a word, it would be tempting. One can imagine that there would be plenty of people who would be content enough to spend eternity, or a very long finite approximation of it, in such an environment. And that’s the problem. I just don’t want to settle for a simulation-whatever of “me” striding the frozen shores of an alien ocean. It’s just not good enough. The context is not right, it’s limiting and I could only pretend for so long that it is not limiting. One aspect of feeling the crunchy, ice-encrusted beach beneath my feet in the twilight of a fading star would be that it would be close to the experience of walking about the tundra or Antarctica. Close, but far, far from perfect. I’ve got to experience the whole thing. It’s not the material substrate that is so vital, but the whole rich context of the personal experience. And pin-brains or whatever, no matter how advanced and rich in detail, would never be enough. It would be like watching a super-detailed travelogue of a trip to the Bahamas. Sorry, I want to go there, not endure some bore-machine telling me how great it was. I want to have the good time with Tiffany. Yes, it’s true that such miniaturized brain-like entities will in some fashion to some degree be part of interstellar exploration, but they cannot and must not be the whole of it. Or else we might as well check our humanity at the door and say to hell with it.

The musings reported and commented upon are respectable, yet they are metaphysics more than anything else. They are not science. Metaphysics is closed. Science is about generating hypotheses and then getting into the rough and dirty business of testing them and integrating the results into the whole of knowledge. Science is open. Metaphysics is about speculation and while that is fine for stories, stimulating the imagination and all that which is cool, that’s it. Metaphysics also frequently (often enough in any event) involves what Popper* terms the “craving to be right.” And it is wise to avoid such, or at least to keep it to an absolute minimum.

*“The wrong view of science betrays itself in the craving to be right.”

@Eniac

Regardless of whether you think of the copy of a human brain as equivalent to the original brain, you can’t ignore that you, the original copy, will never wake up “as” that copy. Thus, you yourself can’t secure personal immortality for yourself that way. So, you copy the original, but the original is left by the process- and so will you if you are being copied- even if you resign yourself to the shredder afterwards.

Not only that, but the identical copy of you will begin diverging from the original you from the moment it is created, so he/she won’t think exactly like you do forever. If I were to split you into two people, and send each version to a different place to have different experiences, do you think each copy would remain identical? Not very likely- what if I sent one copy of you to a war zone to be made a prisoner of war while the other was let loose and became owner of a prosperous corporation? One copy of you would be a scarred battle veteran, the other a wealthy CEO- each with an entirely different outlook on life. The difference between a flesh-and-blood human and someone on a computer would likely be equally stark.

How are you so sure that your mind represents software, when an ordinary computer in no way resembles a brain? For a single model of computer, every individual computer is the same, and the same operating systems are installed on every computer. A human brain develops in the womb and the “biological software” or mind emerges from the connections that form in that brain. Your brain is somewhat different from every other human brain on the planet, and so is your mind. Computers are not self-aware, but the human mind/brain is. Computers are programmed by a human; they cannot observe and learn on their own. A human brain learns and develops on its own. You haven’t made any strong argument in favor of the mind being “software”, while I have demonstrated many times that the brain is nothing like a computer.

All you have done is state that you believe that the mind is software that can be separated from the brain and that other people believe the same thing, therefore you must be right. That is an argumentum ad populum, the fallacious argument that your proposition must be true because many people believe it. Everyone in the United States could believe that the Earth orbits the Moon, but that wouldn’t make it true.

It is not a “philosophical question” whether or not the person who was originally “uploaded” actually gets to experience being transferred onto a computer or not. As you will not be transferred, you can only use “brain simulation” as a means to leave a clone-like descendant of your mind, but not to secure personal immortality. I think that will matter a lot to anyone considering being “uploaded” in the far future.

@Christopher:

I must reject that last part as having been put in my mouth. I do not think that I must be right. I have spent most of this discussion questioning your absolute certainty that I am “dead wrong”. Myself, I am not certain at all. I do, however, find comfort in the fact that others think similarly. While I have not really based my arguments on that, you are free to call it argumentum ad populum, if you wish.

Sure, and this is a good thought experiment. But why does that make one of them the real you, and the other a fake? And which one is which? Both are equally different from the original, after all.

What if the machine was set up in such a way that one person walks in and two persons out, with no clue of which is which? External observers would not be able to tell, and the persons coming out would both be absolutely convinced of being the one that went in.

The machine might even work in such a way that there is no physical distinction between copy and original. For example, half of your atoms might be moved a foot to the right, the other half a foot to the left, and the missing atoms in each copy replaced by identical new ones.

Unless the copies were you, or of your conviction, they would most likely both be ready to accept the other person’s right to claim the same identity and get along with each other just fine. If one of them died a week after the event, he would do so with the comfort that only one week of his life would be lost. The rest would live on with his “twin”.

Let me recite this from one of my earlier posts, because it speaks to the crux of this matter, and you have not really addressed it:

You are correct in saying that you (#1) are different from you (#2), but you fail to acknowledge that #1 and #2 are both “you” (before being copied), with some extra time (equal amount for both) added on.

Later, when referring to the destructive process, you say “You will die”. This is true, and for you (#1) the attempt at immortality has indeed failed. However, it is also true that you (#2) survive, and the attempt has very much succeeded from your (#2) point of view. You (#1) no longer care, and all that has been lost in the process are the few minutes or seconds between the copy event and your (#1) death. Since the lost experience consists mostly of dying, good riddance to it. What remains is you (#2) being alive and immortal, which is the intended result. You will have all your experiences up to and through the copy event, with full continuity of consciousness.

Do you absolutely reject the notion that a person could be duplicated? That a mind could legitimately be branched into two? Is there some non-physical reason preventing such branching? A soul, perhaps, that cannot be split in principle? Or is it simply the technical difficulty that makes it impossible?

Or, maybe a simpler question: What about the copy? If she is not the same person that went in, then who is she? A newborn? A creation? A zombie, acting like the original, but lacking some critical, imperceptible but real ingredient?

I would really like to understand your thought process in this, because your conviction does not appear rational to me. If you had some simple answers to some of these questions, that might help.

@Christopher Phoenix:

I’m not sure how familiar you are with software/hardware, so perhaps I’ve misunderstood some subtleties of your position, but you seem to contradict yourself. You say that Eniac is wrong to think of the mind as software to the brain’s hardware, and that this amounts to dualism. Instead you say, the mind has no objective reality beyond the data stored in the neurons of the brain and the connections between them, from which the mind emerges.

Yet this is exactly analogous to software and hardware. Software has no objective reality beyond the bits stored on the hardware. It is the pattern of these bits, written in machine language, compiled from a higher programming language, from which the software “emerges” to perform useful tasks.

Software is documented, packaged, marketed, and in general thought of by humans as some kind of entity separate from the physical bits that encode it. This is merely an abstraction, a ‘useful fiction’ that facilitates our feeble thinking. We do the exact same thing with the mind, we abstract it, we even tend to think of it being an entity in its own right, separate from the body – the dualist’s error.

Software is never “separated” from the machine – it doesn’t need to be to copy it, and neither does the mind. Software isn’t hindered by having no reality beyond the encoded bits of hardware. This is just what makes it so easy to move from one machine to another. All one need do is copy the encoded algorithms of the software from one computer to another (in the right places). There’s no connected metaphysical entity one must worry about re-associating with the copied bits.

There are practical concerns, of course; the software may not be compatible with the new operating system, the hardware may even be sufficiently different such that it cannot run properly. If it is important enough though, these obstacles may be overcome, e.g. by running it on a virtual machine or emulator, or rewriting it so that it works on the new system but functions in precisely the same way on the front end. I see no reason why similar tactics could not, in theory, be employed when moving/copying a mind.

The most important distinction between a brain and a modern computer is that the brain is organically assembled by living, multiplying cells according to genetic instructions within those cells, writing its own algorithms as it goes. Another important difference is the brain’s plasticity. There is current, ongoing research into software that rewrites itself, computers that rearrange themselves, computers without a CPU, but with processing collocated with the data, and computers that build themselves. These are all implementation details, and are not theoretical hindrances to moving a mind. The principal barrier is the sheer complexity of the brain, and the difficulties associated with its compact architecture and the need to do the transfer in vivo. Perhaps these will eventually be found to make it implausible, but they are practical considerations only, and there is certainly no way to know now whether they will.

So the mind is just like software, merely an emergent phenomenon that results from a particular set of executed algorithms, operating on stored or inputted data, but that is generally abstracted by humans and thought of as a separate entity. And just like software, I see no theoretical reasons that it could not be moved or copied from one machine to another.

It sounded a lot like an argumentum ad populum, and if it wasn’t, why would you bring it up to support your proposition?

You have a really odd idea of continuity of identity. If you (copy #1) die, then (copy #2) cannot possibly be you, the original. Thus you have shown that you accept my proposition that no physical process can transfer copy #1 onto the new computer substrate in such a way that copy #1 actually gets to go- which leaves us with two copies. This proves, beyond a shadow of a doubt, that an individual does not get transferred by this process. You have simply redefined what you think of as “continuity of identity” as “having the same memories” when you realized that you, or as you refer to yourself, “copy #1”, can’t be transferred to the computer and become immortal. But what good is having copy #2 going to do you if you die and experience the death process? All it can do is provide psychological comfort that a simulated copy of you will be left behind after you die.

Still, philosophical arguments aside, you have accepted my only proposition- the original mind cannot be transferred to a computer, only duplicated. Thus, we end up with an original and a copy of the original whose experiences begin to diverge from the original’s the moment it is created. The original experiences being scanned, but is then left by the process unless it is for some reason destructive, in which case the original brain experiences death. Contrary to what you think, I am not prejudiced against the simulated duplicate brain. I am concerned about what happens to whoever undergoes the scanning process, and if it doesn’t offer any chance at personal immortality, you have to admit that a lot of the promise is taken out of the idea of “mind uploading”.

Simply put, nondestructively scanning and creating a simulation of my brain cannot transfer my own personal identity, since I (copy #1) am not transferred. Thus, this process isn’t actually very useful to me personally. You might argue in favor of considering all the “copies” as being the original, but the original would disagree emphatically as he/she is still there after the process. Why are we only going to listen to the simulated copy we just saw created? The original is still there, after all. If the scanning machine destroys the original brain, then that brain experiences death, and still doesn’t get to be transferred into the “crystalline ovoid”. In that case, I am actually killed to create this “brain simulation”, so I will never agree to the process. Who in their right mind would choose to have every neuron in their brain ripped apart while they are still conscious only to create a copy of themselves?

My thought process is simply self-preservation and self-advancement. This process can’t preserve me personally, and I am not such a huge egotist that I insist that some copy of my brain be created to bring light to the world after I die. I certainly wouldn’t die early for such a copy. If mind scanning only creates separate entities that have my neural pattern, why worry so much about creating one? If the scanning process was totally nondestructive and creating such a simulation was traditional, or something like that, maybe I would create one, but it would not ultimately help me achieve immortality or travel to the stars. So, maybe you’ll argue I’m a “selfist” for not caring about copies of me that don’t exist, but you certainly can’t argue I’m an egotist.

@homeidon

You may be right- perhaps, if we accept the proposition that the mind is an emergent property of the brain, we can speak of the mind/brain as being abstracted from the original brain and replicated. However, our own sense of identity has no meaning beyond the biological structure of our brain, so if our brain is replicated somehow, we ourselves will not experience being transferred. So even if there is no reason why a human brain can’t be copied, the original will be left behind by the process.

That said, I don’t think we can accurately describe the brain as any mechanical or electronic device we’ve made so far. Before there were computers, some people thought of the brain as a telephone switchboard, and before there were telephone switchboards someone described the brain as a telegraph line. None of these descriptions are accurate. The human brain develops in the womb, and the human mind develops from the changing connections between its neurons. At no point is the brain “programmed” with a mind that is inserted as an afterthought. That is the difference between a computer and a brain.

That is quite likely, especially since so many portions of the brain process and interpret nerve impulses from the rest of the body. Without those impulses coming in, the brain will likely be driven insane by a high-octane version of nerve pain. I like your idea of using an emulator, though- with a virtual body and brain, perhaps a simulated human mind could survive and remain sane.

@Homeidon: I completely agree. You have said pretty much exactly what I might have said in answer to Christopher’s assertion that the differences between brain and computer amount to something absolute, that they represent a separation by principle rather than by degree. Only, you have said it much better than I ever could have.

As I have said, I am not sure of this, but to me it is the only proposition that makes sense. Allow me to twist your above argument a bit with the goal to show that there are many similarities as well as differences between computers and brains:

There are many different models of computers, and there are many different operating systems and versions of such. In fact, once you consider software installed and files stored, your computer is somewhat different from every other computer on the planet. Software develops, not in the womb, but in the cubicles of software companies. In many respects, the process is analogous: as the software grows, it gets better and more sophisticated as experience is gained over the years.

It is not by accident that we call computers “electronic brains”: their essential function is the same, regardless of the (currently) large differences in capabilities. Both serve to sense, interpret, and control. Or more generally: Both serve to process information.

So, your assertion that “an ordinary computer in no way resembles a brain” does not ring right to me, at all.

@Eniac

Well, you are neglecting the biggest difference between a computer and a human brain. A computer has only one processor, and simply carries out programmed instructions without regard for efficiency, alternative solutions, possible shortcuts, or possible errors in the code. A human brain, on the other hand, is a vast, complex neural network that uses parallel processing, simultaneously processing incoming stimuli. Our brains can form new connections, so we can learn, solve problems, and adapt to new stimuli, unlike a computer. A brain isn’t anything like an ordinary computer. Perhaps someday artificial neural networks will be used to create AIs, but for now no computer built by humans can demonstrate self-awareness, a capability to learn, problem-solving skills, and thought.

I’ll list the reasons why I think that it is probably impossible to transfer a human mind to a computer, and certainly not in a way that preserves the original mind.

1. The quantity of information required to scan, map, and copy the human brain to a non-biological substrate is so large that it will probably take too long to do so. Our brains contain 15-33 billion neurons, each of which is connected by synapses to several thousand other neurons. Copying all of that without making errors seems to me to be as acutely difficult as a Star Trek transporter transfer. You would have to map out every neuron’s position in 3d space, map every synapse, and faithfully record all the biological processes that create the mind. Once you have the scan, running a simulation of a human brain will require incredible amounts of processing power.

2. The human brain and nervous system are fundamentally tied to the rest of the human body. Vast portions of the brain interpret nerve impulses from the rest of the body. Even if we could become disembodied, the portions of our brain that were rendered superfluous- such as those that coordinate automatic functions like the heartbeat, digestion, and movement, and regulate hormonal output- would flail around searching for the proper connections, just as people who have lost limbs experience phantom limb pain. We just aren’t compatible with a disembodied existence on a computer. We would need to create an emulator that simulates a human body for the mind, or sensory deprivation would unhinge the uploaded mind completely.

3. Even if we someday have the infinite technical resources required to scan the human brain and recreate it, the process still wouldn’t offer personal immortality to whoever “uploaded” their mind. Copying every connection in your brain will not mystically “shift” you to a new disembodied existence, for reasons I have discussed many times already- you will only create a clone-like descendant of your brain, not secure immortality for yourself. If the scanning process is non-destructive, you walk out of the scanner, and if it is destructive, you die, but you will not experience being transferred to a computer.
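To put a rough number on the storage side of point 1, here is a back-of-the-envelope sketch in Python. The neuron counts are the ones quoted in that point; the synapses-per-neuron value (standing in for "several thousand") and the bytes stored per synapse are assumptions made up purely for illustration, and the sketch covers only a static wiring diagram, not the biological processes or the processing power needed to run a simulation.

```python
# Neuron counts as quoted in point 1 of the comment.
NEURONS_LOW = 15e9
NEURONS_HIGH = 33e9

# Assumptions for illustration only (not taken from the discussion):
SYNAPSES_PER_NEURON = 5_000  # stand-in for "several thousand"
BYTES_PER_SYNAPSE = 8        # hypothetical: a target id plus a strength value


def connectome_bytes(neurons,
                     synapses_per_neuron=SYNAPSES_PER_NEURON,
                     bytes_per_synapse=BYTES_PER_SYNAPSE):
    """Raw bytes needed to list every synapse once."""
    return neurons * synapses_per_neuron * bytes_per_synapse


def to_terabytes(n_bytes):
    """Convert bytes to decimal terabytes (10**12 bytes)."""
    return n_bytes / 1e12


low_tb = to_terabytes(connectome_bytes(NEURONS_LOW))
high_tb = to_terabytes(connectome_bytes(NEURONS_HIGH))
print(f"{low_tb:.0f} to {high_tb:.0f} TB for the wiring diagram alone")
```

Under these assumed values the static map comes to several hundred terabytes or more. Changing either assumption scales the result linearly, so the figure is only an order-of-magnitude guide.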

You can read about all the difficulties with mind uploading here. This article mentions that immense computational power would be required, we have no method of scanning a human brain at such high resolutions yet, and that there are many questions about copying individuals and personal identity surrounding the idea of “mind uploading”. While some scientists and futurists think brain emulations are possible or even just around the corner, most scientific journals and mainstream research funders remain skeptical, as do I.

None of the issues I have mentioned extend beyond the human mind. The particular requirements of our physiology do not preclude the creation of a stable intelligence in a computer, which arose within the computer and recognizes that place as home just as we arose in our biological brains.

I do not see why it cannot, and I think you are pulling this “cannot” out of thin air. Perhaps it stems from your ill-guided conviction that if a mind is copied, the self, somehow, stays behind.

Please assume for a moment that we do have the hypothetical symmetrical duplicator I described earlier. It moves half of your atoms to the right, and the other half to the left, then fills in the gaps to create two perfectly identical wholes. Then answer me this:

Which is the original, and which the copy? Your above argument can no longer apply, because there is no distinction between individual and copy. How would you correct your above argument given this hypothetical situation?

In the last paragraph, that should be “distinction between original and copy”

I have not neglected any of the many differences between computer and brain. I have instead pointed out that there are substantial similarities, which you have categorically denied. If you do want to continue to argue this, please do so to the point and tell me why the similarities I pointed out aren’t really similarities.

@Eniac

You seem to be growing ever more desperate to prove, somehow, that I am wrong and the original will actually get to experience being transferred to a computer. When you scan and emulate a mind, the original person is not cut in half and the missing halves perfectly replicated (which is physically impossible outside of bad Star Trek scripts)- the original is left as it is (or killed if the process is destructive), and only the information required to emulate his or her brain is copied to the computer. Your thought experiment has absolutely no relation to creating a mind emulation.

At this point, we are getting nowhere. This seems to be a matter of faith to you rather than a rational discussion of a hypothetical technology. Do you think that if your brain was scanned and emulated, you would experience being “transferred” to a computer? Or do you just have so little regard for continuity of identity that you are willing to resign yourself to the shredder after creating a clone-like emulation of your brain, even though you won’t experience the transfer yourself? Please explain- your reasoning is not very clear.

My point is that since your mind has no existence beyond the physical neurons and synapses in your brain, the only way to “upload” your mind is to create a simulation of those neurons and synapses on a computer. What you get is an emulation of the original brain, not the brain itself. Since the original brain is not retained by the process, the original mind that arose from it is not retained either, and it experiences staying in its original body until death. Redefining “self” to include any copy of you that thinks it is you does not help the original mind/brain that gets left behind by the process.

@Eniac

Yes, I have denied that there are substantial similarities between a brain and a computer, because you are simply wrong. A brain is not analogous to or even similar to a computer in any way that is useful to either biology or computer science, starting with the fact that a computer is never a blank chassis that passively accepts software. Arguing with you is like arguing the specifics of a flight to Mars with someone who thinks the Sun orbits the Earth.

Software is not “developed”, it is written. Our minds are not “written” in the womb- who do you think wrote it? God? :-) Our brains change continuously with the strengthening of new synaptic connections, and that is what makes our personalities. Computers just run sets of instructions someone entered into them and store information. Computers can’t rewire themselves. There just aren’t any useful similarities between a computer and a brain. So yeah, the difference between a computer and a brain is a difference of principle rather than degree.

@Eniac

Perhaps I should clarify the difference between someone writing computer software and a brain’s neuronal processes changing. For software to be written and inserted into a computer, someone- a computer programmer- must write those programs. A computer can’t change its own programs. If you are going to argue that software being written by a programmer in a cubicle is directly analogous to our brains forming minds, you must explain who exactly is programming us. Computers aren’t reprogrammed without an intelligent being writing those new programs, so you now have arrived at a situation where an intelligence is required to create our minds!! Not that it matters, because your analogy is just plain wrong- but that should explain why I referenced “the big programmer in the sky”. :-))

Brains, unlike computers, change- neurons form new connections with each other, allowing us to develop our own unique personalities and learn. Computers, on the other hand, do not- and most computers have only one CPU, unlike the brain with its tens of billions of tiny “microprocessors”- its neurons. And, of course, the human brain starts out wired up and functioning as a mind, unlike a computer which starts out empty and passively accepts new programs. All in all, your comparison has completely failed.

This is correct. But if the mind is emulated with sufficient accuracy to include the “self” (as you say, this may be more a matter of definition than disputable fact), then “self” as activated in the computer does indeed experience having been “transferred” to the computer. It will not have experienced death. Since, by definition, “self” is the same as “you” or “I”, the end result is just what was intended.

In the case that the original remains, we have the curious situation of having two “selves”, aka you (#1) and you (#2), which you seem to have difficulty grappling with. This has been treated extensively in science fiction, though, and there is no problem with it, in principle.

So then, what you are saying is that the fact that the copied mind is emulated and the original is physical makes all the difference. If it were possible to create a physical copy, things would be different?

One of the similarities I have pointed out is that brains and computers are both sophisticated information processing systems. This makes them similar to each other and distinct from everything else. I suppose I am “simply wrong” on that, then?

@Eniac

True- the copy experiences being a continuation of the original brain, so in a sense mind uploading transfers “self”- although this is a matter of definition, not disputable fact. It is indisputable fact that the emulation created by mind uploading is an independent, autonomous being from the original meat brain, even though they arguably both have the same “self”. That is why I say this process does not offer continuity of existence to the original. His or her emulation continues indefinitely in a computer, but the original remains in his or her body and eventually dies.

It seems that we ended up arguing because we hadn’t agreed on the definitions of the terms we were using. When I said “copy 2 can’t be you”, you interpreted that as meaning that there is some fundamental “self” that can’t be transferred. I was simply pointing out that the original person who is scanned does not experience being recreated on a computer and continuing indefinitely as a mind emulation. Next time, we must be sure that we agree on the definitions of the terms we use.

I don’t have any difficulty with having multiple, autonomous emulations of an original brain. For each uploaded mind on the crystalline ovoid, there is an original back on Earth who doesn’t experience continuing on a new substrate. For them, there is no journey to the stars. So it goes…

However, I think this scenario is highly unlikely to come to pass. The amount of information required to simulate a human brain is enormous, similar to a Star Trek transporter transfer. We don’t know of any way to scan the human brain at a high enough resolution. MRI doesn’t have enough resolution, and plasticizing the brain, slicing it up, and scanning each slice won’t preserve the mind within. An enormously powerful computer is required to run a brain simulation. Finally, as LJK says, an AI would be more efficient as it will be tailor-made to a robotic body, instead of being hardwired for a human body like a human brain is. Why bother with the difficulties of an emulation if you can create an AI to gather information about distant star systems, especially since whoever was uploaded doesn’t even get to experience going in the first place? Maybe we can create VR simulations of the rest of the universe so everyone back on Earth can walk on virtual Epsilon Eridani b, courtesy of an AI starprobe that does not require virtual jellyware to remain sane.

@Eniac

Brains and computers do both process information and store memories, but other than that they are not similar in any useful way. You are “simply wrong” that an embryo’s developing brain is just like someone writing software in a cubicle at a software company, for reasons I already discussed. Even the Matrix series only portrayed Neo as our savior, not as an all-powerful God who writes our very personalities!! :-)

I am glad that we managed to (kind of) agree in the end. If you had said “you (#2) can’t be you (#1)”, it would have been clearer to me. I had reserved the “you” (without number) for the original before the operation, only. Under this definition, both “you (#1)” and “you (#2)” are “you” (or, more precisely, the continuation in time of “you”), but, of course, “you (#1)” is distinct from “you (#2)”, even if only by a few hours or days of experience.

Here, everything hinges on the level of abstraction at which you “emulate” the mind. Certainly, on the level of atoms it would be impossible. You are correct that it would also be practically impossible on the level of live neurons. But there are more levels (which I realize you may think are invalid), such as the level of circuits, where neurons are abstracted as something analogous to logic gates (but with a different, more analog behavior, of course, such as in neural networks). Even higher abstractions bring us into cognitive science, with moods, emotions, thoughts, and memories being more directly emulated.

So, you are right that an AI is a far better solution than a brain simulation, but I think you are wrong in categorically denying that an AI could be created as the copy of an existing mind with enough of the characteristics and memories of the original to create a continuity of “self”. Putting the bar low, let’s say the emulated self should be better able to fill the original’s (mental) shoes than, say, the original after a serious stroke. Putting the bar higher: a mild stroke. Really high: A concussion.

I expect all this to work itself out as we make progress in AI, and that there will be two kinds of AI: Those designed in the image of an existing person, and those that are truly synthetic. I don’t know which will turn out to be more difficult. If we look at the (much simpler) analogue of visual representation, cinematography has enabled us to make visual copies of actors for more than 100 years, while the creation of synthetic “actors” has had to wait until the advent of computer graphics fairly recently. Although, there were cartoons, much earlier. And, if you were to count puppets… tricky.

Imagine an AI made in the image of Paul, our moderator. This would be a momentous advance, as we could then expect to read his excellent writings on interstellar travel for centuries to come, and continue reading them on our way to the Centauri system!

I never meant to make this claim. I was simply reacting to your assertion that

So I felt I had to point out that actually, every computer is different, in that its hard drive contains different software and data. Also, that software is not static, but continually developed, and that because of all that, a computer is every bit as different from other computers as human brains are from each other. Computers, like brains, even “age”, especially those of the Windows variety as they acquire layers and layers of viruses and spamware.

I did not mean to say that one is “just like” the other. They obviously are not. But they have many similarities, and I stand by this.

Eniac writes:

Which leads to another question. If I were already an AI, having been modeled on the physical person’s mind, would I know it if well enough simulated? Are you sure I’m not already an AI? ;-)

Thank you for the kind words, Eniac.

I am pretty sure, since I gather from your writing that you enjoy a freshly brewed cup of coffee and a glass of great wine way more than an AI could ever hope to simulate…

I would also much love to further reassure myself by meeting you in person over a cup of coffee, should you happen to be located near (or some day pass by) Cambridge, MA.

Thank you, Eniac. I’ll take you up on that offer next time I’m out in your direction. But why stop at coffee — a restaurant with a good wine list is always welcome, and we can kick around interstellar ideas galore! That should resolve the AI issue.

Intelligence, Uplift, and Our Place in a Big Cosmos

David Brin

Contrary Brin

Posted: Sep 26, 2012

A balanced and well-researched Wired article by Jason Kehe reveals the latest “yoo-hoo transmission to aliens” stunt. Of course I consider these things to be at-best dopey, with a small but significant chance of being thoughtlessly dangerous for all of humanity.

Full article here:

http://ieet.org/index.php/IEET/more/brin20120926