Centauri Dreams

Imagining and Planning Interstellar Exploration

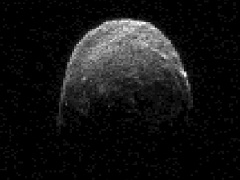

2005 YU55 Closest Approach Today

2005 YU55, an asteroid roughly the size of a city block, makes its closest pass today, approaching within 325,000 kilometers, closer than the distance between the Earth and the Moon. It will be another seventeen years before we get an asteroid as substantial as this in such proximity. That one is 2001 WN5, which will pass halfway between the Moon and the Earth in 2028. Today’s object of interest, 2005 YU55, isn’t in danger of hitting the Earth on this pass, but astronomers track these objects closely because over time their trajectories are known to change.

Image: This radar image of asteroid 2005 YU55 was obtained on Nov. 7, 2011, at 11:45 a.m. PST (2:45 p.m. EST/1945 UTC), when the space rock was at 3.6 lunar distances, which is about 860,000 miles, or 1.38 million kilometers, from Earth. Image credit: NASA/JPL-Caltech.

The asteroid’s discoverer, Robert McMillan (University of Arizona) calls it “…one of the potentially hazardous asteroids that make close approaches from time to time because their orbits either approach or intersect the orbit of the Earth,” all of which reminds us of the need to keep an eye on it and other Earth-crossing objects. McMillan is co-founder and principal investigator for SPACEWATCH, whose job it is to find and track objects that might pose a threat to the Earth. Both it and the Catalina Sky Survey are based at the University of Arizona’s Lunar and Planetary Laboratory, where the CSS has NASA support to discover potentially dangerous asteroids.

SPACEWATCH uses charge-coupled devices (CCDs) and specialized software to study the images it generates, passing suspect objects on to the Minor Planet Center at the Smithsonian Astrophysical Observatory. It was on one of these searches that 2005 YU55 turned up six years ago, an apparent Near Earth Object. Subsequent investigation refined its trajectory:

“The MPC posted it on their confirmation page, which is monitored by everybody who follows up newly discovered Near Earth Objects,” McMillan said. “So we followed it up on subsequent nights and over the following month. Over time, we refined its orbit to the point that NASA’s Jet Propulsion Laboratory listed a large number of potential close encounters with the Earth. Now, after 767 observations by ground-based observers, we have the orbit of that asteroid really nailed down, so we know it’s not going to hit the Earth on Nov. 8.”

What we know about this object is that it is roughly spherical and measures about 400 meters in diameter, with a complete rotation every 18 hours. The asteroid is a carbonaceous chondrite, but in the absence of more detailed information about its physical properties, its long-range trajectory is hard to predict, especially given variables like the Yarkovsky Effect, which results from uneven heat distribution on the surface as the object re-radiates absorbed sunlight back into space as heat. All the more reason, then, to look forward to the OSIRIS-REx mission, a sample return of the carbonaceous chondrite 1999 RQ36 that will bring material from the asteroid back to Earth in 2023.

The Near-Earth Object Observations Program at JPL known as Spaceguard is also part of the detection program for nearby asteroids, discovering and tracking them to analyze their trajectories for possible danger to Earth. What we know of 2005 YU55 is that its orbit regularly brings it close not only to the Earth but also to Venus and Mars, but the 2011 encounter with our planet is the closest it has come to us for the last 200 years. We should have new Arecibo radar images of the asteroid after closest approach at 1828 EST (2328 UTC). More in this NASA news release, and see this University of Arizona page for further background on the 2005 YU55 encounter. Finally, amateur astronomers equipped to do accurate photometry are being sought to help observe the object. If you’re interested, this Sky & Telescope article has the details.

The Light of Alien Cities

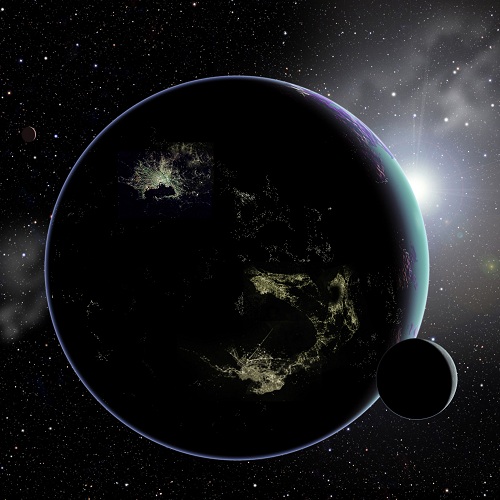

If you’re looking for a new tactic for SETI, the search for extraterrestrial intelligence, Avi Loeb (Harvard-Smithsonian Center for Astrophysics) and Princeton’s Edwin Turner may be able to supply it. The duo are studying how we might find other civilizations by spotting the lights of their cities. It’s an exotic concept and Loeb understates when he says looking for alien cities would be a long shot, but Centauri Dreams is all in favor of adding to our SETI toolkit, which thus far has been filled with the implements of radio, optical and, to a small extent, infrared methods.

Image: If an alien civilization builds brightly-lit cities like those shown in this artist’s conception, future generations of telescopes might allow us to detect them. This would offer a new method of searching for extraterrestrial intelligence elsewhere in our Galaxy. Credit: David A. Aguilar (CfA).

Spotting city lights would be the ultimate case of detecting a civilization not through an intentional beacon but by the leakage of radiation from its activities. In my more naïve days when I just assumed such civilizations filled the galaxy, I imagined someone making an accidental detection of an errant radio signal with a desktop radio receiver, the ultimate ham radio DX catch. As I learned more and came to realize how attenuated such signals would be at these distances, it seemed more likely that we’d need to listen for directed signals, or at best the kind of accidental transmission that might indicate something like one of our own planetary radars.

Then, too, we have to take into account how much our own use of radio has changed, so that because of fiber optic cables and other technologies, we’re lowering our visibility in many wavelengths. An advanced civilization would presumably do the same, but Loeb and Turner figure lighting is something intelligent creatures are going to have no matter what they’re listening to or how they’re listening to it. This is from the recent paper on their work:

Our civilization uses two basic classes of illumination: thermal (incandescent light bulbs) and quantum (light emitting diodes [LEDs] and fluorescent lamps). Such artificial light sources have different spectral properties than sunlight. The spectra of artificial lights on distant objects would likely distinguish them from natural illumination sources, since such emission would be exceptionally rare in the natural thermodynamic conditions present on the surface of relatively cold objects. Therefore, artificial illumination may serve as a lamppost which signals the existence of extraterrestrial technologies and thus civilizations.

The first order of business is to show that searching for artificial lighting is possible within the Solar System, which Loeb and Turner approach by looking at objects in the Kuiper Belt. The technique is “…to measure the variation of the observed flux F as a function of its changing distance D along its orbit.” Working the math, they conclude that “…existing telescopes and surveys could detect the artificial light from a reasonably brightly illuminated region, roughly the size of a terrestrial city, located on a KBO.” Indeed, existing telescopes could pick out the artificially illuminated side of the Earth to a distance of roughly 1000 AU. If something equivalent to a major terrestrial city existed in the Kuiper Belt, we would be able to see its lights.
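The flux-versus-distance test lends itself to a short numerical sketch. In the Loeb and Turner preprint the discriminator is the logarithmic slope α = d log F / d log D: a body shining by reflected sunlight dims as D^-4 (the sunlight thins out as D^-2 on the way out, and the reflected light thins out as D^-2 again on the way back), while a body that supplies its own light dims only as D^-2. The Python below is a minimal illustration of that idea, not the authors' pipeline; the helper name `log_slope`, the sample distances and the noise-free synthetic fluxes are all assumptions made up for the example.

```python
import math

def log_slope(distances_au, fluxes):
    """Least-squares slope of log F against log D (hypothetical helper)."""
    xs = [math.log(d) for d in distances_au]
    ys = [math.log(f) for f in fluxes]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    return cov / var

# Illustrative distances (AU) sampled along an eccentric orbit; made-up values.
distances = [35.0, 38.0, 42.0, 46.0, 50.0]

# Synthetic, noise-free fluxes in arbitrary units.
reflected = [d ** -4 for d in distances]  # body lit only by the Sun
self_lit = [d ** -2 for d in distances]   # body that emits its own light

slope_reflected = log_slope(distances, reflected)
slope_self_lit = log_slope(distances, self_lit)
print(f"reflected sunlight: alpha = {slope_reflected:.2f}")
print(f"artificial light:   alpha = {slope_self_lit:.2f}")
```

Real photometry would be noisy and would also vary with rotation and phase angle, so an actual survey would need many epochs and error bars before a slope near -2 could be taken seriously.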

Objects of interest could be followed up with long exposures on 8 to 10 meter telescopes to examine their spectra for signs of artificial lighting, while radio observatories like the Low Frequency Array (LOFAR) or the Precision Array for Probing the Epoch of Reionization (PAPER) could be used to check for artificial radio signals from the same sources. Interestingly, the Large Synoptic Survey Telescope (LSST) survey will be obtaining much data on KBO brightnesses of the sort that could be plugged into Loeb and Turner’s methodology. Thus running a KBO survey as a tune-up of their methods would involve no additional observational resources.

The researchers aren’t expecting to find cities on KBOs, but they do point out that the next generation of telescopes, both space- and ground-based, is going to be able to reach much further into the universe for signs of artificial lighting. An exoplanet can be examined for changes to the observed flux during the course of its orbit. When it’s in a dark phase, we should see more artificial light on the night side than what is reflected from the day side. A signature like this would have to be bright — the night side would need to have an artificial brightness comparable to natural illumination on the day side — but an advanced civilization might have such cities. For now, the Kuiper Belt provides a handy set of targets we can use to test the technique.

The paper is Loeb and Turner, “Detection Technique for Artificially-Illuminated Objects in the Outer Solar System and Beyond,” submitted to Astrobiology (preprint).

Millis: Of Time and the Starship

What next for the 100 Year Starship Study? NASA and the Defense Advanced Research Projects Agency will make the call, as Tau Zero founder Marc Millis told Alan Boyle in his recent interview. To talk to Boyle, Millis donned virtual garb and appeared in Second Life in robotic form, but the interview is now available as a podcast on BlogTalkRadio and iTunes. I’ll send you there for the discussion in full, but do note that Boyle talked to Millis about starflight before the show and has made an edited transcript of that conversation available on Cosmic Log. The quotes I use below are from the earlier talk, but do pick up the podcast and listen to the whole thing.

Where DARPA goes next is to make a decision about awarding the funds remaining from the $1 million originally put into the project. About $500,000 is available, and Centauri Dreams speculates that DARPA will be more than happy to allocate the funds and be done with them, thus removing ‘starship funding’ from a budget always sensitive to congressional oversight. The plan all along has been to put these funds forward as seed money to the organization that can carry forth the 100 Year Starship idea. In other words, DARPA is hoping to boost interstellar flight by supporting a long-term effort that will find financing and develop technology that one day leads to the stars.

Millis has previously calculated that an actual starship is as much as two centuries away considering historical patterns of energy production and use, but projections like these are always approximations. The important point is that work on a starship has to be approached rationally. Given that we have numerous propulsion options, all of them with huge engineering issues, we have to investigate which of them might evolve to the point where a deep space mission is possible. And we have to take into account huge peripheral matters like equipment reliability over long time-frames, communications at interstellar distances and the matter of human longevity.

Research is invariably incremental, and considering the early state of our knowledge, it will proceed by identifying the key issues and laying a foundation now to chip away at them:

The important issue to figure out today is to make sure we have a sane comparison of the real challenges and the real state of the art, so we’re proceeding wisely here. Then, from that, ask, “OK, if that’s where we are, what can we start tomorrow to chip away at those issues?” We can’t build the starship tomorrow, but we can identify the correct questions to ask, and begin seeking answers to those questions. When it looks more promising, and the advancements are there, fine.

Those advancements may be a long time coming, and there is an inherent danger in impatience. An organization built to last a century or more crosses generations and requires a sustained vision (I’ll be talking about exactly that in an upcoming dialogue with Michael Michaud, to be published here within the next two weeks or so). A public saturated with immediate gratification will not support such projects, but a public educated in the problems involved and the magnitude of their solutions may begin to see things in a long-term context. Amortize interstellar research over two centuries and the costs are more manageable and can grow with the economy. Along the way, as DARPA keeps emphasizing, we should be looking for tangible, near-term spinoffs.

Lately I’ve been reading the new edition of Robert Zubrin’s The Case for Mars, which energetically describes the thinking behind ‘Mars Direct,’ a way to reach Mars with existing technology and with far less expense than many government projections have indicated. But we all know that even Mars, that close and astrobiologically tantalizing world, is still out of reach for the present because of political and economic factors. An interstellar mission is a step so far beyond these tentative steps in our Solar System as to dwarf them entirely. Why, then, look ahead to traveling between the stars when we’re still so early in the game in our own system?

The ultimate, highest-priority benefit of star flight is the survival of the human species beyond the fate of our own solar system and our home planet. In the meantime, the progress we make to try to turn all this stuff into a reality will result in profound improvements in energy conversion, transportation, self-supporting life support — things that would be very useful for life on Earth. And then there’s the social aspect. This effort can give us hope for a better future, expand our opportunities — and hopefully give people a frontier to conquer, rather than being left with no option other than to conquer each other.

Can human cultures pull together to manage major questions of species growth and survival? It’s only one of the questions interstellar flight raises that Boyle and Millis tackle in the longer interview. What we can say is that building a space-based infrastructure is an obvious precursor to an interstellar probe, and getting economically to low-Earth orbit is an obvious precursor to moving outward into the Solar System. Each of these challenges has its advocates and interstellar flight will depend on a satisfactory resolution of all of them. But the challenges of starflight are so immense that chipping away at them now helps to lay a groundwork that will become the roadmap to follow when we do achieve the technologies needed to reach a star.

Dying Stars and their Planets

Although I can’t make the journey just then, I wish I could attend an upcoming conference at Arecibo (Puerto Rico) called Planets around Stellar Remnants. The meeting takes place twenty years after the discovery of the first exoplanets, the worlds orbiting the millisecond pulsar PSR B1257+12. I’ve been interested in the fate of planets around older stars ever since reading H.G. Wells’ The Time Machine as a boy and encountering his image of a swollen, dying Sun. We also have interesting questions to ask about the kind of planets that exist around white dwarfs, and whether new planets (and chances for life) may eventually occur in their systems.

It’s appropriate that the conference be organized by Penn State, for it was that university’s Alexander Wolszczan, working with Dale Frail of the National Radio Astronomy Observatory, who made the discovery of those two and possibly three planets that launched the modern exoplanet era. Nor has Wolszczan slowed in his efforts. The most recent work out of Penn State is the discovery of planets around three dying stars — HD 240237, BD +48 738, and HD 96127 — one of which has an interesting and still unidentified object, perhaps a brown dwarf, in orbit around it.

All three of the stars are much further along in their lifelines than our Sun, says Wolszczan:

“Each of the three stars is swelling and has already become a red giant — a dying star that soon will gobble up any planet that happens to be orbiting too close to it. While we certainly can expect a similar fate for our own Sun, which eventually will become a red giant and possibly will consume our Earth, we won’t have to worry about it happening for another 5 billion years.”

What we get by studying highly evolved stars like these is an updated window into planet formation. Thirty planets and brown dwarf-mass companions are now known to exist around giant stars (all three stars studied here are K-class giants). The object around BD +48 738 is tricky because a long-term radial velocity trend here indicates a distant companion but the data are not yet sufficient to decide between a planet and a low-mass star as the culprit. The star is also orbited by a planet of about 90 percent Jupiter’s mass at roughly 1 AU in a 400-day orbit.
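The quoted orbit can be sanity-checked with Kepler's third law, which in solar units reads M ≈ a³/P² (M in solar masses, a in AU, P in years) when the planet's mass is negligible next to the star's. The sketch below plugs in the rounded figures from the paragraph above; the function name is made up for the illustration, and because the inputs are rounded the result is only a rough consistency check, not the stellar mass derived in the paper.

```python
def implied_stellar_mass(a_au, period_days):
    """Kepler's third law in solar units: M [Msun] = a^3 / P^2.

    Hypothetical helper. Takes the semi-major axis in AU and the period
    in days, and assumes the planet's mass is negligible compared with
    the star's.
    """
    period_years = period_days / 365.25
    return a_au ** 3 / period_years ** 2

# Rounded values quoted in the text for the inner planet of BD +48 738.
mass = implied_stellar_mass(a_au=1.0, period_days=400.0)
print(f"implied stellar mass: {mass:.2f} solar masses")
```

With these rounded inputs the answer comes out a little under one solar mass, which is at least plausible for a star that began life not far from the Sun's mass before swelling into a K giant.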

I’ll pause on this because we’re finding a number of companions to giant stars that have minimum masses of about 10 Jupiter masses, making them either brown dwarf candidates or massive planets. It will take further work to identify the object in the outer system of BD +48 738, but if it does turn out to be a brown dwarf, then we have another case of a system with a Jupiter-class inner planet and a distant brown dwarf orbiting the same star. The paper on this work discusses the implications in terms of our primary theories of planet formation:

In principle, such a system could form from a sufficiently massive protoplanetary disk by means of the standard core accretion mechanism (Ida & Lin 2004), with the outer companion having more time than the inner one to accumulate a brown dwarf like mass. A more exotic scenario could be envisioned, in which the inner planet forms in the standard manner, while the outer companion arises from a gravitational instability in the circumstellar disk at the time of the star formation (e.g. Kraus et al. 2011). In any case, it is quite clear that this detection, together with the other ones mentioned above, further emphasizes the possibility that a clear distinction between giant planets and brown dwarfs may be difficult to make…

The three stars appear jittery under observation because they oscillate more than younger stars like the Sun. That made the planet hunt a challenge, but also allowed for the discovery of a negative correlation between the star’s metallicity and the degree to which it oscillates. Wolszczan says that the less metal content the team found in each star, the more noisy and jittery it turned out to be. The paper relates this to p-mode (pressure-driven) oscillations at the surface of the star:

The origin of this trend is most likely related to the fact that higher metallicity (opacity) of the star lowers its temperature, which decreases the amplitude of p-mode oscillations, while lower metallicity has the opposite effect.

This Penn State news release quotes Wolszczan on the future of our own Solar System as the Sun swells to become a red giant and swallows the inner planets. Somewhere in the remote future, says the astronomer, perhaps one to three billion years from now, we may consider moving to Europa, an icy wasteland that under the gaze of a swollen Sun will become a world of ‘vast, beautiful oceans.’ It’s an enchanting thought, and one we’ll doubtless think more about as we continue our investigations of giant stars and the fate of planetary systems around them.

The paper is Gettel et al., “Substellar-Mass Companions to the K-Giants HD 240237, BD +48 738 and HD 96127,” accepted by the Astrophysical Journal (preprint).

Notes & Queries 11/2/11

Tau Zero in Second Life

I have almost no experience with online virtual worlds like Second Life, but I do want to mention that Marc Millis will appear later today (Nov. 2) on the ‘Virtually Speaking’ talk show program, which can be accessed here as well as in Second Life. The focus of the interview is to be on prospects for interstellar travel, what a program like the 100-Year Starship can do, and what Tau Zero and other efforts (such as Project Icarus) are all about. The show begins at 9 PM Eastern time (0200 UTC) this evening, and may wind up being audio-only if the Second Life bit doesn’t work out.

I’m sure it will, but Marc is as new to Second Life as I am, and my last experience with the medium had me wandering around in an enormous virtual house trying to find someone with whom I was supposed to be doing an online interview, and I remember being alternately intrigued and baffled by the options available to me. Old time Second Lifers will find this bizarre, I’m sure, but some of us haven’t yet gotten up to speed with meetings held in virtual worlds, alas.

A Conference on Olaf Stapledon

Be aware of Starmaker: The Philosophy of Olaf Stapledon, a conference to be held at the headquarters of the British Interplanetary Society at 27/29 South Lambeth Road, London on November 23. Born in 1886, William Olaf Stapledon was a philosopher by training and a writer by choice, the author of two classics that have had a powerful impact on many scientists now working in aerospace and interstellar studies: Last and First Men (1930) and Star Maker (1937). You may also have heard of Odd John (1935), although it should be noted that Stapledon was prolific at both fiction and non-fiction.

Image: Stapledon lecturing at the British Interplanetary Society, to which he had been invited by Arthur C. Clarke in 1948.

The complete program is online, and among the presentations I note in particular Richard Osborne’s talk on Stapledon and Dyson spheres. Freeman Dyson is on record as saying that it was Stapledon’s futuristic vision in Star Maker that brought about the concept of Dyson spheres, hardly the first instance of this author’s influence. When Stapledon lectured at the BIS, his topic was ‘Interplanetary Man,’ but his vision reached far deeper into the cosmos than our own Solar System, dealing with themes of consciousness and the survival of intelligence in an evolving universe.

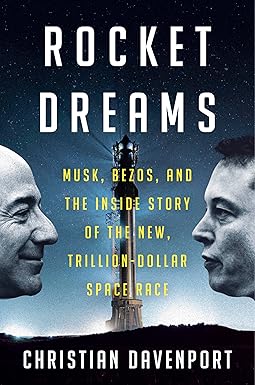

The Alpha Centauri Prize

Project Icarus founder Kelvin Long has gone online with a proposal for an international competition to promote research on the design of a star probe. Long is thinking in terms not dissimilar from the X Prize, though the Alpha Centauri Prize he advocates would be considered an extension of the existing Project Icarus and long-term in nature.

Over time, the concept would be worked upon by future generations and ultimately lead to a direct design blue print for an interstellar probe after several decades of running. Like Project Icarus, it is the hope that other teams around the world would be assembled to work on specific proposals investigated historically such as NERVA, Starwisp, Vista, Longshot, AIMStar, Orion or one of the many others. This way, the technological maturity of different propulsion schemes can be improved over time and the case could be better made for precursor missions to the outer solar system and one day to the nearest stars.

Long envisions teams competing for a cash prize every two to three years in an academic competition run by a non-profit organization. Over time, engineering design ideas for a probe to another star would evolve. The process is a gradual one that may not settle upon a single optimum propulsion method:

… we may find that what may emerge is not a single choice for going to the stars in the coming centuries, but instead a realization that it is a combination of approaches with highly optimized engineering designs that will be the way to go. This may suggest hybrid propulsion schemes and could for example be along the lines of a fusion-based drive with anti-proton catalyzed reactions but using a nuclear electric engine for supplementary power and perhaps a solar sail and MagSail for solar system escape or upon arrival. From the two decades of research will develop reliable engineering studies, practical progress of the technology and several clear front runner designs to focus initially divergent research options towards the proper investment into the clear front runner designs by a process of gradual down select.

Can an Alpha Centauri Prize be a serious incentive for research and an enabler for new technologies, as well as a driver for inspiring students and educating the public? More on the idea in The Alpha Centauri Prize: Taking Volunteer Research To A New Level.

Propulsion Conference Abstract Deadline

From 30 July to 1 August 2012, the 48th AIAA/ASME/SAE/ASEE Joint Propulsion Conference & Exhibit takes place in Atlanta. The focus is broad, as the AIAA comments on its site:

The objective for JPC 2012 is to identify and highlight how innovative aerospace propulsion technologies powering both new and evolving systems are being designed, tested, and flown. Flight applications include next generation commercial aircraft, regional, and business jets, military applications, supersonic/hypersonic high speed propulsion applications, commercial and government-sponsored launch systems, orbital insertion, satellite, and interstellar propulsion.

Next summer seems a long way off as the weather changes toward late fall in the northern hemisphere, but I mention this now because abstracts for the conference are due by November 21. You can view the call for papers here.

Upcoming Interstellar Workshop

Finally, be aware of the Tennessee Valley Interstellar Workshop, running November 28 & 29, 2011 in Oak Ridge, TN at the Doubletree Hilton Hotel. Here’s the agenda for Tuesday the 29th, following an opening reception the night before:

8:00 Welcome and Opening Remarks (Les Johnson)

8:15 Sun Focus Comes First; Interstellar Comes Second (Dr. Claudio Maccone)

9:00 Interstellar Travel: Realistic Ideas and Fanciful Dreams (Les Johnson)

9:30 Human and Institutional Barriers to Large Scale Geoengineering and Interstellar Spaceflight (Dr. Kent Williams)

10:00 Coffee Break and Group Picture

10:30 Power and Propulsion: An Informal Survey of Opportunities Within Particle Physics (Dr. Jim Woosely)

11:00 Project Icarus (Dr. Richard Obousy)

11:30 Antimatter Propulsion (Harold Gerrish)

12:00 Lunch

1:00 Interstellar Light Sails (Dr. Gregory Matloff)

1:30 TBD (Dr. Conley Powell)

2:00 Jovian Tesla Radio (Dr. David Fields)

2:30 Lasers Revisited: Their Superior Utility for Interstellar Beacons, Communications and Travel (Dr. John Rather)

3:00 Interstellar Exploration Through Art (C Bangs)

3:30 Humanity in the Outlands: Anthropological and Sociological Concerns in the Face of Touching the Universe? (Dr. Robert C. Lightfoot)

4:00 Shell Worlds (Robert Kennedy)

4:30 Sublight Colonization of the Galaxy (Ken Roy)

5:00 The Fermi Paradox: A Roundtable Discussion (Stephanie Osborn)

6:00 Dinner

8:00 Public Forum (Robert Kennedy)

The Fate of Planets Near Galactic Center

It was Gregory Benford who used the wonderful phrase ‘the first hard science fiction convention’ to describe what happened at the 100 Year Starship Symposium. It was an apt choice of words. ‘Hard’ science fiction refers to SF that goes out of its way to get the science right, and in which the scientific and technical details play a major role in the development of the plot. Science fiction critic P. Schuyler Miller evidently coined the term in one of his reviews for Astounding Science Fiction back in the 1950s. In many ways, the Symposium operated under a science fictional meme.

Science fiction at its best exists to paint possibilities for us. Some scientific speculations may be remarkable in their own right but only become vivid when portrayed by writers who can make the background science into a scenario that plays out in fictional terms. An obvious case is the classic Isaac Asimov tale “Nightfall,” published in Astounding’s September, 1941 issue. Asimov took us to a place that knew night only once every 2000 years because of the configuration of the six suns in its planetary system. His craft painted a world few would have imagined, and showed us the consequences of its existence upon a civilization there.

All these musings were triggered by the latest news from the University of Leicester, and I’d love to see the science fiction story that might emerge when ‘hard’ SF tackles its findings. Surely someone will tell the story of a civilization too close to galactic center (speaking of places that are well lit!), and the consequences as radiation levels begin to rise to untenable levels on a world too near the central black hole. If interstellar flight is possible, surely it would happen here as a means of species survival.

For black holes seem to be common at galactic center in many galaxies, and in particular the supermassive ones that lurk at the center of galaxies like our Milky Way. Huge cloaks of dust obscure many of these, and a team of scientists led by Sergei Nayakshin at Leicester thinks that collisions between planets and asteroids occurring at speeds up to 1000 kilometers per second could be the cause. Nayakshin’s team argues that the accretion disc around supermassive black holes will eventually form planets, and that planets and asteroids that formed in the outer regions of the disc would be stripped away by the close passage of stars in the disc, given the tight quarters at galactic center.

From the paper:

Released from their host stars, these solids and planets orbit the SMBH independently. Since the velocity kick required to unbind them from the host is in km/s range, whereas the star’s orbital velocity around the SMBH is ~ 1000 km/s, orbits of the solids are initially only slightly different from that of their hosts. AGN gas discs are expected to be very geometrically thin (e.g., Nayakshin & Cuadra 2005), and if they always lay in the same plane (e.g., the disc galaxy’s mid-plane) then the resulting distribution of solids would be quite thin and planar as well.

So far so good, but planets in this scenario seem bound to come to grief:

However, there is no particularly compelling reason for a single-plane mode of accretion in AGN as the inner parsec is such a tiny region compared with the rest of the bulge (Nayakshin & King 2007), and chaotically-oriented accretion may be much more likely…

Collisions are bad enough, but the planets would have already been sterilized as they orbited the supermassive black holes, says Nayakshin in a related news release:

“Too bad for life on these planets, but on the other hand the dust created in this way blocks much of the harmful radiation from reaching the rest of the host galaxy. This in turn may make it easier for life to prosper elsewhere in the rest of the central region of the galaxy.”

The team takes its lead from the zodiacal dust in our own Solar System, known to be the result of collisions between solid objects like asteroids and comets. And its work may help us understand how black holes grow and affect the galaxies within which they reside. The dust and gas in the inner regions of our own galaxy, much of which might have been expelled or destroyed by this process, would otherwise have contributed to the formation of more stars and planets. The black hole at galactic center would have thus played a significant role in the evolution of the Milky Way.

Image: ‘Light echo’ of dust illuminated by a nearby star V838 Monocerotis as it became 600,000 times more luminous than our Sun in January 2002. The flash is believed to have been caused by a giant collision of some kind, e.g., between two stars or a star and a planet. Collisions of smaller objects, such as asteroids or minor planets near a supermassive black hole could also be dramatic due to the huge collision velocities and would release a lot of dust. Credit: NASA/ESA.

The paper is Nayakshin, et al., “Are SMBHs shrouded by ‘Super-Oort’ clouds of comets and asteroids?” in press at Monthly Notices of the Royal Astronomical Society (preprint). The science fiction story based on it remains to be written.