Knowing the position of a firefly within one inch from a distance of 200 miles would not be easy, but it’s the kind of precision astronomers Adam Kraus and Trent Dupuy needed when trying to establish the distance of nearby brown dwarfs. The firefly simile belongs to Kraus (University of Texas at Austin), who with Dupuy (Harvard-Smithsonian Center for Astrophysics) embarked on a study of the initial sample of the coldest brown dwarfs discovered by the Wide-Field Infrared Survey Explorer satellite (WISE). Their paper appears today in Science Express online.

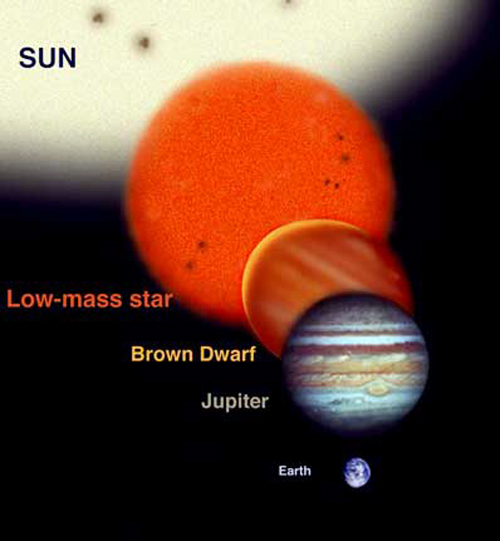

Image: Brown dwarfs in relation to more familiar celestial objects. Credit: Gemini Observatory/Jon Lomberg.

Just how cool can brown dwarfs get? When we’re focusing on small dwarfs somewhere between 5 and 20 times the mass of Jupiter that have been cooling for billions of years, we’re talking about objects whose only source of energy is gravitational contraction, and as Dupuy notes, the fine-grained distinctions between star and planet begin to get blurred here: “If one of these objects was found orbiting a star, there is a good chance that it would be called a planet.”

So what does make the difference? The key determinant is that brown dwarfs formed on their own rather than in a proto-planetary disk. Moreover, they’re exceedingly hard to characterize because most of their light is emitted at infrared wavelengths and their small size and low temperature make finding them tricky. The astronomers, in Dupuy’s words, “wanted to find out if they were colder, fainter and nearby or if they were warmer, brighter and more distant.”

Answering these questions meant measuring their distances accurately. For that, the duo turned to the Spitzer Space Telescope and put parallax methods to work on the nearby brown dwarfs previously identified by WISE. We’ve often discussed parallax in these pages as the method used to make measurements of stellar distance, a feat first accomplished by the German astronomer Friedrich Wilhelm Bessel in 1838 with his work on 61 Cygni. Bessel’s reading of 10.3 light years wasn’t all that far off the now accepted value of 11.4 light years.
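To make the parallax relation concrete, here is a minimal Python sketch. It assumes the standard definition (a star at one parsec shows an annual parallax of one arcsecond) and a rounded conversion of 3.2616 light years per parsec; the function names are my own, not anything from the paper. It works backward from the two 61 Cygni distances quoted above to the parallax angles they imply, which come out near 0.32 and 0.29 arcseconds.

```python
# Sketch of the parallax/distance relation: d [parsec] = 1 / p [arcsec].
# Function names and the rounded constant are illustrative assumptions.
LY_PER_PARSEC = 3.2616  # light years in one parsec (rounded)


def distance_ly_from_parallax(parallax_arcsec: float) -> float:
    """Distance in light years for an annual parallax in arcseconds."""
    if parallax_arcsec <= 0:
        raise ValueError("parallax must be positive")
    return LY_PER_PARSEC / parallax_arcsec


def parallax_from_distance_ly(distance_ly: float) -> float:
    """Annual parallax in arcseconds for a distance in light years."""
    if distance_ly <= 0:
        raise ValueError("distance must be positive")
    return LY_PER_PARSEC / distance_ly


# Parallaxes implied by the two 61 Cygni distances quoted in the text.
implied_by_bessel = parallax_from_distance_ly(10.3)
implied_by_modern = parallax_from_distance_ly(11.4)

print(f"10.3 ly implies a parallax of {implied_by_bessel:.3f} arcsec")
print(f"11.4 ly implies a parallax of {implied_by_modern:.3f} arcsec")
print(f"difference: {implied_by_bessel - implied_by_modern:.3f} arcsec")
```

The two readings differ by only a few hundredths of an arcsecond, which gives some feel for how small the angles involved are even for a star this close.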

The early days of parallax in astronomy (and there were 60 stellar parallaxes in the literature by 1900) involved observing a star from one side of the Earth’s orbit and then, half a year later, from the other, looking for the slight shift in apparent position that would make the calculation possible. In the case of Kraus and Dupuy’s work with the Spitzer instrument, the needed precision was mind-boggling but measurable, enough to determine that the objects in question range between 20 and 50 light years away.
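For a rough sense of scale, the sketch below takes the firefly simile at face value and turns one inch at 200 miles into an angle, then compares it with the parallax of an object 20 or 50 light years away. This is my own back-of-the-envelope arithmetic using the small-angle approximation, not the error budget from the paper, and the helper names are invented for illustration.

```python
import math

# Back-of-the-envelope comparison; helper names are illustrative assumptions.
INCHES_PER_MILE = 63360
ARCSEC_PER_RADIAN = 180 * 3600 / math.pi
LY_PER_PARSEC = 3.2616  # rounded conversion factor


def small_angle_mas(size: float, distance: float) -> float:
    """Angle in milliarcseconds subtended by `size` at `distance` (same units)."""
    return size / distance * ARCSEC_PER_RADIAN * 1000.0


def parallax_mas(distance_ly: float) -> float:
    """Annual parallax in milliarcseconds for a distance in light years."""
    return 1000.0 * LY_PER_PARSEC / distance_ly


firefly_mas = small_angle_mas(1.0, 200 * INCHES_PER_MILE)
print(f"one inch at 200 miles: {firefly_mas:.1f} mas")

for distance_ly in (20, 50):
    p = parallax_mas(distance_ly)
    print(f"{distance_ly} ly: parallax {p:.0f} mas, "
          f"firefly angle is {firefly_mas / p:.0%} of that")
```

The firefly angle works out to roughly 16 milliarcseconds, against parallaxes of about 163 and 65 milliarcseconds at 20 and 50 light years. Read literally, then, the simile describes a measurement fine enough to resolve a modest fraction of the parallax shift itself.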

The upshot: Brown dwarf temperatures in the range of 395 to 450 K (250 to 350 degrees Fahrenheit), allowing them to retain their status as the coldest known free-floating celestial bodies but making them a bit warmer than some earlier studies have suggested. These objects cool slowly over time, their heat produced by contraction rather than fusion. Recent work has suggested that brown dwarfs could sustain habitable conditions for tightly orbiting planets for several billion years. I mention this because yesterday we saw in the work of Mukremin Kilic that planets in certain configurations around white dwarfs could have eight billion years of habitability.
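The temperature figures are easy to check. The short Python sketch below uses the standard Kelvin to Fahrenheit conversion (the function name is mine) and shows that 395 to 450 K does correspond to roughly 250 to 350 degrees Fahrenheit.

```python
# Standard conversion: F = (K - 273.15) * 9/5 + 32. Function name is illustrative.
def kelvin_to_fahrenheit(kelvin: float) -> float:
    """Convert a temperature from kelvin to degrees Fahrenheit."""
    if kelvin < 0:
        raise ValueError("temperature in kelvin cannot be negative")
    return (kelvin - 273.15) * 9.0 / 5.0 + 32.0


for kelvin in (395, 450):
    print(f"{kelvin} K is about {kelvin_to_fahrenheit(kelvin):.0f} degrees F")
```

For comparison, water boils at 212 degrees Fahrenheit at sea level, so these objects are only somewhat hotter than a kitchen oven on a low setting.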

What lies ahead is the great hunt to discover whether worlds like these actually exist. What a time to enter exoplanet studies — a theme we keep encountering is that habitable conditions may exist around stars far different from our Sun. M-dwarfs first opened our eyes to this but brown and white dwarfs push us into whole new realms of investigation as we try to learn how frequently planets can form around them and whether any might have astrobiological possibilities. Not so long ago we assumed that other solar systems would look more or less like ours. Now we look into a cosmos filled with exotica and ask whether we’re not the outliers.

For more on brown dwarf planets and habitability, see Andreeshchev and Scalo, “Habitability of Brown Dwarf Planets,” Bioastronomy 2002: Life Among the Stars. IAU Symposium, Vol. 213, 2004 (abstract), as discussed in Brown Dwarf Planets and Habitability. See also this Astrobiology Magazine feature on why white and brown dwarf planets may not be capable of sustaining life (thanks to David Cummings for the reference to this one).

The important thing is to find and verify rocky planets in habitable zones. The more we find, and the more we verify as meeting the conditions of even the possibility of life, the more we slowly shift the public’s attention (and hopefully with it public spending) to the subject of star travel.

This business of assuming that it would take billions of years for large sentient beings to arise from an alien planet is based on the assumption that the earth was an ideal abode for life to begin and arise.

If this assumption is off base and our world was actually a somewhat tough place for life to arise, we are in for a surprise: a young star, say 2 billion years old, giving rise to species as advanced as mammals and birds.

I can imagine worlds that are more benign and give evolution a good pump now and then. These worlds would have fewer sterilizing impact events and their crust won’t create the equivalent of the Siberian Traps.

And instead of the moon and its 200 ft tides when it was young, what about a planetary arrangement that brings the 2 planets within 0.2 AU, which would create more benign tides from the beginning?

Given that we have only one example of intelligent, tool-using life that can communicate and potentially travel across interstellar distances, we should wring that example to drain every possible clue to what conditions might result in ETI. A combination of observations lends itself to a rare Earth hypothesis. One is that intelligent life began on land, and not in the ocean. Yet, if the current hypothesis that life began in the ocean is true, it seems that ocean life had something like a billion years head start on land-based life. Still, we see no technological civilizations in the ocean. This seems to indicate that technology is a trait limited to land-based creatures. What is the difference between a land environment and an ocean environment that makes industrial civilization more likely on land?

Drawing the analysis further along the same path, why did it take 500 million years for land-based life to develop intelligence advanced enough to develop an industrial civilization? Some of the earliest land organisms were multicellular, complex life forms with brains. They had the basic ingredients needed to evolve technological species, yet it took 500 million years. Does it really take 500 million years? Or did something change in the last few million years to allow technological civilization to evolve?

Any thoughts on significant differences in the Earth’s land environment over the last 1 – 10 million years that could account for the evolution of really smart monkeys?

Well, I think humans arising from rather small simians in 77+ million years is a bit long if you assume the Earth was fairly benign during that period.

Some primates have been around a lot longer than the 7 million years of hominid existence. It is not necessarily so that if you rewind the clock it would take 70 M.Y. for hominids to arise. IMO it could have happened in significantly shorter time. I suspect destabilizing weather patterns (for whatever reason) are the key there.

I suppose this line of thought ties into the Fermi Paradox: if life does arise on, say, 1,000 planets in the galaxy (which is a .0000001% prob), could one not assume that one world gets extremely lucky and undergoes a much faster evolution to intelligence? I don’t think 2 billion years is too little time in such a case.

One possible change is the quaternary ice ages that are at least coincident with the evolution of hominids. It is just possible that the different concurrent hominid species drove their evolution through direct or indirect competition.

But there is a lot of path dependency too. That humans learned to cook food is hypothesized to have reduced the energy needed to chew and digest food, allowing for greater brain development. Couple this with sexual selection based on more capable cognitive skills and you may have a hypothesis.

Note that much of our ability to use culture to bootstrap ourselves in each succeeding generation seems to have happened in the ballpark of 40-80 kya.

“Any thoughts on significant differences in the Earth’s land environment over the last 1 – 10 million years that could account for the evolution of really smart monkeys?”

Evolution requires adversity. A life form in a truly benign environment tends to remain stable long term, as evidenced by certain top predators (sharks, monitor lizards, and crocodilians) within the fossil record. They became supremely well adapted to their environment and gain little from further changes, so such changes carry no strong advantage and thus do not spread widely in the gene pool.

Brain power is an expensive adaptation for mammals; in humans, powering the brain takes around 20% of metabolic energy, and its large size has dictated a need for a long childhood to allow for postpartum growth.

In an XTI, intelligence must have arisen because it was more adaptive at the time of arising than other attainable mutations, just like it must have in hominins, and more generally, in primates. Early primates were rodent-like, and far from top-predators; increased intellect was adaptive by allowing for both collective behavior and non-instinct behavior transmission (a.k.a. culture). Culture, once adopted, mutates more readily; it has been shown in dolphins, monkeys and apes that their cultural practices passed on by observation change readily by comparison to genetics, thus not needing a change in genetics to adapt to many short term forms of species stress.

We can expect, therefore, XTIs to have had stresses changing near the limit for genetic adaptation; benign environments need to be not too benign, or the need to adapt can be met by genetics.

On earth, in the last 10 million years, we’ve had radical climate shifts, including the hominid ancestral area going from jungle to steppe to desert, and back and forth again. Multiple extinctions, but primates and felinoids adapted well, as well as canines, ungulates and rodents. 4 different genetic models.

Primates had two superadaptations – curiosity and sufficient intellect for culture; these make up for slow (2-10 year) generations, low birth rates, and slow propagation of genetic advantages due to low birth rates and non-harem breeding.

Ungulates developed speed and harem breeding – the most capable males breed with most of the females; a male that adapts to change spreads that far and wide, and his offspring with it dominant will interbreed and rapidly alter the species despite the 3-5 years per generation.

Rodents adapted by breeding rapidly – generation times of fractions of a year, and so any adaptations that worked spread. The side effect is many locally adapted species, and their range changing.

Felinoids have apparently instinctual cooperative hunting, and small harem breeding; predators need adapt less to the conditions, so long as they adapt to the prey. Felinoids are just smart enough to be able to adapt to new prey, just curious enough to try new prey, just enough harem model to spread a successful male’s biological adaptations (speed, stamina, tooth spacing) readily, but not so much so that it requires too much wasted effort, moderately long generations (3-5 years), allowing for significant winnowing of the gene pool, and multiple births per mating allowing redundancy in case of accident, so more copies are made of each match. Big cats haven’t needed to get smarter until recently… but they’ve adapted to the radical changes of African climate with a minimum of speciation. And they eat primates.

Canines are generally more promiscuous than felines by virtue of having males remain in the pack, but are otherwise similar; they show slower but wider variation than felines, and have incredible amounts of recessive genes.

Hominids outpaced them all by being smarter, not better physically; fire is a cultural adaptation that reduced their threat to hominids. Hominids hit a certain point, and physically, things seem to simply have held still. Culture became the dominant adaptation. But note the various phenotypical adaptations by environment – slow, non-speciation-level adaptations, many of which are liabilities when modern technology allows removal from the adapted environment. Cooperative, curious, and smart, but slow breeding. The product of the mixture of predatory stresses and climate changes leads to several different adaptive modes, and we just happen to be the one that worked best in the long term. But if not for savannah replacing forest, we’d still be chimp-like; they stayed, we went.

‘Any thoughts on significant differences in the Earth’s land environment over the last 1 – 10 million years that could account for the evolution of really smart monkeys?’

The change in climate in Africa caused forests to fade away and savannahs to form. In short, we had fewer trees to cling to and had to walk more upright to see over the grasslands for predators and for food. We, Homo sapiens, the smart monkeys, succeeded.

Thank you for the informative responses. You have provided a lot of food for thought.

A common theme in the responses seems to be that a key to the development of intelligence was adaptation to climate change, whether ice ages or changes from forest to savannah or other shifts. I can see how intelligence would provide a significant advantage in a changing climate (or, indeed, almost any significant environmental change). However, was the climate change over the time when intelligence blossomed somehow different from that of previous eras? My understanding of climate history is that the modern era is not really special in this way. Am I missing something about the nature of recent climate change? Was it somehow more rapid, more extreme, less extreme, more localized, less localized, or different in some other way?

Am I somehow off base in thinking that the raw ingredients for higher intelligence were in place for at least tens of millions of years, but potentially hundreds of millions of years? If this is true, and we couple that with the fact that technology-capable intelligence only arose recently, then it seems logical to conclude that something changed to allow intelligence to rapidly develop, something recent as measured in geologic time. If we can figure out what that was, that might give us a clue to what types of planets are most likely to harbor ETI. It might also provide a clue that ETI could develop more quickly than on earth.

However, it may just be that, in addition to the right conditions, a planet has to cook for a few hundred million years before higher intelligence can emerge from complex life forms with limited intelligence. However, I am not sure what evidence would support this option. Does it imply that higher intelligence should progress steadily from lower intelligence? Does it imply that higher intelligence is so exceedingly rare that it takes hundreds of millions of years before it emerges by chance?

That seems very reasonable to me.

This, too makes sense. How else would you evolve a big brain if not through a progression of smaller brains?

Who knows how much time it “should” take? We know how much time it actually took, and we have no evidence whatsoever leading us to conclude that this time was surprisingly long or short, somehow.

“The key determinant is that brown dwarfs formed on their own rather than in a proto-planetary disk.” News to me. My understanding is that determining how an object formed is not easy from observations, maybe not even possible, and that the key determinant is whether the object was massive enough to support deuterium fusion in the early part of its life.

Although this is to a small extent dependent upon composition, it does lead to a simple borderline at 13 Jupiter masses: below that it is a planet, with little or no fusion of any kind; above that but below 75 Jupiter masses it is a brown dwarf, with significant deuterium fusion after it forms but not massive enough for regular hydrogen fusion. This is a simple criterion, and one which can be easily applied to any body whose mass can be determined.

Stephen

Oxford, UK
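The mass-based criterion in the comment above is simple enough to write down directly. Here is a minimal Python sketch using the 13 and 75 Jupiter mass boundaries the commenter gives; the function name and the treatment of objects sitting exactly on a boundary are my own assumptions, and as the comment notes, the real limits shift somewhat with composition.

```python
# Sketch of the mass-only criterion from the comment above.
# Thresholds are the commenter's round numbers; boundary handling is an assumption.
DEUTERIUM_LIMIT_MJUP = 13.0  # below this: little or no fusion of any kind
HYDROGEN_LIMIT_MJUP = 75.0   # at or above this: regular hydrogen fusion


def classify_by_mass(mass_mjup: float) -> str:
    """Label an object as planet, brown dwarf, or star from its mass alone."""
    if mass_mjup <= 0:
        raise ValueError("mass must be positive")
    if mass_mjup < DEUTERIUM_LIMIT_MJUP:
        return "planet"
    if mass_mjup < HYDROGEN_LIMIT_MJUP:
        return "brown dwarf"
    return "star"


for mass in (5, 13, 20, 80):
    print(f"{mass} Jupiter masses: {classify_by_mass(mass)}")
```

Note that the 5 to 20 Jupiter mass range mentioned in the article straddles the 13 Jupiter mass line, so under this criterion the lightest of those objects would be labeled planets regardless of how they formed.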

Astronist: “My understanding is that determining how an object formed is not easy from observations, maybe not even possible,…”

No, not easy. Though possible, but not with high certainty. Astronomers use spectra of trace metals, for example, to determine if objects could have condensed from the same cloud. Angular momenta can also shed some insight, such as if a planet’s orbital plane is or isn’t closely aligned with the star’s equator. There may be others.