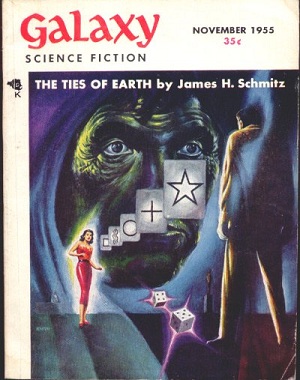

Physicist Al Jackson, who is the world’s greatest dinner companion, holds that title because, alongside his scientific accomplishments, he is also a fountainhead of information about science fiction. No matter which writer you bring up, he knows something you never heard of that illuminates that writer’s work. So it was no surprise that when the subject of self-replicating probes came up in these pages, Al would take note in the comments of Philip K. Dick’s story “Autofac,” which ran in the November, 1955 issue of H. L. Gold’s Galaxy. A copy of that issue sits, I am happy to say, not six feet away from me on my shelves.

This is actually the first time I ever anticipated Al: like him, I had noticed “Autofac” as one of the earliest science fiction treatments of the ideas of self-replication and nanotechnology, and had written about it in my Centauri Dreams book back in 2004. If any readers know of earlier SF stories on the topic, please let me know in the comments. In the story, the two protagonists encounter an automated factory that is spewing pellets into the air. A close examination of one of the pellets reveals what we might today consider to be nanotech assemblers at work:

The pellet was a smashed container of machinery, tiny metallic elements too minute to be analyzed without a microscope…

The cylinder had split. At first he couldn’t tell if it had been the impact or deliberate internal mechanisms at work. From the rent, an ooze of metal bits was sliding. Squatting down, O’Neill examined them.

The bits were in motion. Microscopic machinery, smaller than ants, smaller than pins, working energetically, purposefully — constructing something that looked like a tiny rectangle of steel.

Further examination of the site shows that the pellets are building a replica of the original factory. O’Neill then has an interesting thought:

“Maybe some of them are geared for escape velocity. That would be neat — autofac networks throughout the whole universe.”

The Ethically Challenged Probe

Just how ‘neat’ it would be remains to be seen, of course, assuming nanotech assemblers can ever be built and self-replication made a reality (an assumption many disagree with). Carl Sagan, working with William Newman, published a paper called “The Solipsist Approach to Extraterrestrial Intelligence” (reference below) that made a reasonable argument: Self-reproducing probes are too virus-like to be built. Their existence would endanger their creators and any species they encountered as the probes spread unchecked through the galaxy.

Sagan and Newman were responding to Frank Tipler’s argument that extraterrestrial civilizations do not exist because we have no evidence of von Neumann probes (it was von Neumann whose studies of self-replication in the form of ‘universal assemblers’ would lead to the idea of self-replicating probes, though von Neumann himself didn’t apply his ideas to space technologies). Given the time frames involved, Sagan and Newman argued, probes like this should have become blindingly obvious as they would have begun to consume a huge fraction of the raw materials available in the galaxy.

The reason we have seen no von Neumann probes? Contra Tipler, Sagan and Newman say this points to extraterrestrials reaching the same conclusion we will once we have such technologies — we can’t build them because to do so would be suicidal. Freeman Dyson, writing as far back as 1964, referred to a “cancer of purposeless technological exploitation” that should be observable. All of this depends, of course, on the assumed replication rates of the probes involved (Sagan and Newman chose a much higher rate than Tipler to reach their conclusion). Robert Freitas picked up on the cancer theme in a 1983 paper, but saw a different outcome:

A well-ordered galaxy could imply an intelligence at work, but the absence of such order is insufficient evidence to rule out the existence of galactic ETI. The incomplete observational record at best can exclude only a certain limited class of extraterrestrial civilisation – the kind that employs rapacious, cancer-like, exploitative, highly-observable technology. Most other galactic-type civilisations are either invisible to or unrecognisable by current human observational methods, as are most if not all of expansionist interstellar cultures and Type I or Type II societies. Thus millions of extraterrestrial civilisations may exist and still not be directly observable by us.

Self-Replication Close to Home

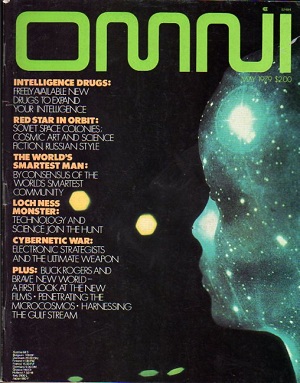

We need to talk (though not today because of time constraints) about what mechanisms might be used to put the brakes on self-replicating interstellar probes. For now, though, I promised a look at what the human encounter with such a technology would look like. Fortunately, Gregory Benford has considered the matter and produced a little gem of a story called “Dark Sanctuary,” which originally ran in OMNI in May of 1979 but is now accessible online. Greg told me in a recent email that Ben Bova (then OMNI’s editor) had asked him for a hard science fiction story, and von Neumann’s ideas on self-replicating probes were what came to mind.

Mix this in with some of Michael Papagiannis’ notions about looking for unusual infrared signatures in the asteroid belt and you wind up with quite a tale. In the story, humans are well established in the asteroid main belt, which is now home to the ‘Belters,’ people who mine the resources there to boost ice into orbits that will take it to the inner system to feed the O’Neill habitats that are increasingly being built there. One of the Belters is struck by a laser and assumes an attack is underway, probably some kind of piracy on the part of rogue elements nearby. A chase ensues, but what the Belter eventually sees does not seem to be human:

I sat there, not breathing. A long tube, turning. Towers jutted out at odd places — twisted columns, with curved faces and sudden jagged struts. A fretwork of blue. Patches of strange, moving yellow. A jumble of complex structures. It was a cylinder, decorated almost beyond recognition. I checked the ranging figures, shook my head, checked again. The inboard computer overlaid a perspective grid on the image, to convince me.

I sat very still. The cylinder was pointing nearly away from me, so radar had reported a cross section much smaller than its real size. The thing was seven goddamn kilometers long.

“Dark Sanctuary” is a short piece and I won’t spoil the pleasure of reading it for you by revealing its ending, but suffice it to say that the issues we’ve been raising about tight-beam laser communications between probes come into play here, as does the question of what beings (biological or robotic) might do after generations on a starship — would they want to re-adapt to living on a planetary surface even if they had the opportunity? Would we be able to detect their technology if they had a presumed vested interest in keeping their existence unknown? Something tells me we’re not through with the discussion of these issues, not by a long shot.

The papers I’ve been talking about today are Sagan and Newman, “The Solipsist Approach to Extraterrestrial Intelligence,” Quarterly Journal of the Royal Astronomical Society, Vol. 24, p. 113 (1983), and Tipler, “Extraterrestrial Intelligent Beings Do Not Exist,” Quarterly Journal of the Royal Astronomical Society, Vol. 21, p. 267 (1980). I’ve just received a copy of Richard Burke-Ward’s paper “Possible Existence of Extraterrestrial Technology in the Solar System,” JBIS Vol. 53, No. 1/2, Jan/Feb 2000. This one may also have a bearing on the self-replicating probe question, and I’ll try to get to it in the near future.

Over on Wiki there is a claim that Karel Čapek’s 1921 play R.U.R. is the first mention of a ‘self replicating machine’. I could never get through R.U.R., so I don’t know. Though I must admit Čapek does seem to be the first mention of a non wet-ware artificial quasi AI… I wish I had a better word, since I don’t think those robots were AI. (Of course Mary Shelley, amazingly, in 1818, wrote the first science fiction story in the context we are speaking about here. I agree with Brian W. Aldiss on this, and find his essay about Shelley worth reading.)

Wiki also credits A. E. van Vogt’s The Voyage of the Space Beagle with ‘self replicators’ in 1943. I don’t remember this, but then I have not gone back and reread van Vogt in a long time. This would even predate von Neumann by 5 years! I don’t know if von Neumann’s 1948 lectures are online (I don’t mean a summary, which I can find), to see if he gives earlier references.

Modulo van Vogt, Dick’s story seems to me the first use of von Neumann machines connected to dispersion into the cosmos that I know of.

Early modern SF writers seem to have had an acquaintance with some of the most sophisticated ideas in physical science, which they diffused into the prose literature. No surprise: John Campbell had a degree in physics, Asimov a PhD in chemistry, Clarke a degree in physics, Poul Anderson a degree in physics, and even Heinlein had a background in engineering. But even SF writers without scientific training seem to have had a working knowledge of what were, in the 30s, 40s and even 50s, arcane areas of scientific knowledge.

I have never found an explicit reference, but the appearance of warp drives and other such FTL technology seems rooted in General Relativity without even mentioning it! Surely Einstein and Rosen’s 1935 paper was not common currency even among most physicists in those days.

As a biologist, I look to see what mechanisms are already being used to prevent the uncontrolled replication of self-replicating (biological) machines. I can give 3 examples and will defer a fourth and fifth until later in the discussion.

1) The system bacterial viruses use to limit the destruction of their host cells: the lambda phage / E. coli system.

In this system a self-replicating virus uses resources from the bacteria to replicate. Defective phage will kill the entire culture and face extinction, but phage isolated from nature will not; instead they will infect some of the bacteria and place them “off limits” for viral replication. The marked bacteria contain a quiescent version of the phage that produces proteins that signal any new phage to “turn off” when they try to infect the marked cell. The marked cells are otherwise healthy and can harbor the quiescent phage for years as they replicate and grow. Thus the phage have sanctuary. These same phage can control the growth of defective phage, so there is little poaching by defective siblings.

2) Quorum sensing: bacteria secrete small chemicals that signal other bacteria around them about food and the number of related bacteria in the environment. As the number of bacteria increases, the signal builds up and the whole community responds by altering replication rates and, in some cases, secreting films to protect the colony.

3) In mammals, after meiosis the gametes (sperm and egg) are formed, and when these get together they form a new embryo. Cells in the embryo can differentiate into different cell types and eventually form organs, etc. However, as soon as these embryo cells form, they have a LIMITED number of replication cycles available to them (the Hayflick limit), typically 40 cell doublings. This means that one cell can at most double its number 40 times (2 to the 40th power, roughly 10 to the 12th power), that is, up to a trillion cells. In addition, as cells specialize into certain cell types (say, muscle cells), they have an extremely limited number of cell cycles left to them; red blood cells cannot replicate at all. If you are interested in learning more details, there is more than one mechanism to limit the cell replication count, but one prominent one involves the length of the telomeres on the ends of the chromosomes. They shorten with each replication until they are mostly gone and the DNA cannot replicate its ends.
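The arithmetic behind the third example can be checked in a few lines. This is only a sketch: the name `max_descendants` is invented for illustration, and the figure of 40 doublings is simply the one quoted above (real limits vary by cell type).

```python
import math

# Assumption: 40 doublings, the typical figure quoted in the comment above.
HAYFLICK_DOUBLINGS = 40


def max_descendants(doublings: int) -> int:
    """Upper bound on the number of cells descended from one cell."""
    return 2 ** doublings


cells = max_descendants(HAYFLICK_DOUBLINGS)
# Print the exact count and its order of magnitude.
print(f"{cells:,} cells, order of magnitude 10^{math.floor(math.log10(cells))}")
```

A hard-wired counter of this kind caps a lineage no matter how many resources are available, which is what sets it apart from the other two mechanisms.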

To summarize: the first mechanism reserves some resources (areas) so they cannot be used. The second has the replicating entities in communication so they can limit overuse of resources and signal each other when to slow replication and create protective structures. The third hard-wires a limit on the number of replication cycles, counted from when the first cell is started.

To figure out how to effectively limit ‘bot replication, biology has several live examples, and these are shown to work in the real world. The somatic cells of the human body are a great example. Only a small percentage of cells (adult stem cells) can replicate at all; others are assistants that feed resources to them or build and protect the structure they reside in. Interested in your feedback.

Samuel Butler in Erewhon (1872) and Darwin Among The Machines (1863) discussed machine consciousness, replication, and evolution … though not self-replication.

“Every class of machines will probably have its special mechanical breeders, and all the higher ones will owe their existence to a large number of parents and not to two only.”

To quote from the main article:

“The reason we have seen no von Neumann probes? Contra Tipler, Sagan and Newman say this points to extraterrestrials reaching the same conclusion we will once we have such technologies — we can’t build them because to do so would be suicidal.”

Nuclear weapons are certainly suicidal, at least as far as civilized society is concerned, but that did not stop them from being built – 55,000 at their peak in 1990 during the Cold War. And think of all the biological weapons that humanity’s various militaries have stored away.

Gray goo, as it has also been called, in the hands of a most imperfect species, could indeed happen and get out of control. The combination of a leadership and general populace that seems as out of touch with science and technology (and history) as ever and scientists and engineers more enslaved to corporations and the military (which often work together) than ever leaves me amazed that we have not yet had a global disaster.

Another data point for the Fermi Paradox?

The Sagan and Newman article is online here:

http://adsabs.harvard.edu/abs/1983QJRAS..24..113S

I was able to find Tipler’s article online here:

http://articles.adsabs.harvard.edu/full/1980QJRAS..21..267T

As for the Freitas reference, I assume it is to this 1983 paper:

http://www.rfreitas.com/Astro/ProbeMyths1983.htm

And these two articles for good measure:

http://www.rfreitas.com/Astro/TheCaseForInterstellarProbes1983.htm

http://www.rfreitas.com/Astro/InterstellarProbesJBIS1980.htm

David Brin’s “Lungfish” story might be relevant.

http://www.davidbrin.com/lungfish1.htm

jkittle,

These are excellent examples drawn from biology showing the various ways that very simple rules can be used for restraining self-replicating systems.

Mechanical systems are much less limited than biological ones: You have practically unlimited error-free information storage and transmission, the full availability of all information to all components, and the ability to communicate remotely, instantly. Much more sophisticated mechanisms of regulation are possible, and mutation can be entirely eliminated as a factor.

It appears therefore likely that mechanical self-replicating systems will work differently from organic ones, although they certainly could adopt the more primitive mechanisms of their biological models, if it would help.

As someone else has said, an airplane works differently from a bird. However, if you really want to, you can build a mechanical bird, too. It’s been done…

http://www.youtube.com/watch?v=nnR8fDW3Ilo

wrt examples in biology, of how entities limit infinite self replication, there are also examples where this does not happen. Examples include HIV, the common cold (variations therein) and cancers. Also, when one looks at predator/prey population dynamics, though one is limited by the other, either would doubtlessly expand indefinitely if unimpeded.

Your analogy does not deal with the fact that if self-replicating machines were built to endlessly self-replicate, then why haven’t we seen them? Your examples do give a reason why such machines would not likely be built, and that is the likelihood that the machines would turn around and destroy their creators or at least use up all of their resources. Further, as others have mentioned, maybe the machines would also fall under Darwinian law once created.

p.s. Greg Bear’s Forge of God and Anvil of the Stars are a couple of great SF reads on this subject and put another spin on it: maybe two types of machines are built, those that consume and destroy and those that destroy the ones that consume and destroy…

Of all the methods of attaining formative interstellar flight, I have always thought self-replicating, self-repairing nano von Neumann machines the best logical avenue. I have seen what I think are reasonable means of launching such probes of very small mass to fractions of the speed of light.

One problem, and one that worried von Neumann, was how do you turn such a device on and have it remain viable for thousands of years? There is an old problem in cellular automata squaring off with entropy. It is not clear to me how long it will take for this to be resolved.

This is aside from whether the ‘self-repairing’ aspect can really solve the degradation of these machines due to the energetic regime they must live in; the hazards of relativistic flight in interstellar space are not mitigated by being ‘too small to fail’.

On science fiction and ‘von Neumann’ star flight: there sure seem to be a lot of Berserker (well, Frankenstein’s Monster) stories… Has anyone ever written a story about a malevolent civilization sending out self-replicating robot ships with evil intent, only to find out that each fleet they launch evolves along the way to become benevolent or indifferent? Much to their frustration.

“If it were not for science and technology we would not be in the mess we are in… we would be in some kind of other mess.”

Al, re your question on evolving probes, I can cite Greg Bear’s Queen of Angels, which has an Alpha Centauri probe gradually evolve into full sentience by the end. Of course, it’s not the exact scenario you’re talking about, with the hitherto malevolent technology becoming benevolent. I see that tesh has cited a couple of other Greg Bear books as well.

Damien RS: Thanks for the tip re the Brin story!

Steve Wilson: I don’t know why Samuel Butler never came to mind, but of course he fits in here. Nice call.

If you want to read an excellent sci-fi story about the dangers of malevolent probes, read John Ringo and Travis Taylor’s Von Neumann’s War.

It has lots of hard science and is a cracking good read!

The problem with self-replicating probes, assuming they can be built, is of course how to keep them from evolving into something else, something that acts according to its own evolutionary-egoistic interest, like anything else ALIVE. This should be considered very similar to having a tame animal species and KEEPING it that way, because the only real experience we have with self-replication (except for computer viruses) is the biological one. On the one hand, it hasn’t been proven that a self-replicating non-biological system will automatically behave according to the known laws of evolution, but on the other hand there’s no reason to believe otherwise, if the specific differences, such as the relatively more error-free copying of information claimed above, are taken into account.

Tame animals exist in various degrees of symbiotic relationship with humans, but this relationship cannot be stable without a continuous selective pressure applied by humans to prevent the species from either “returning to the wild” or dying out.

The more exact question is whether evolution can be permanently prevented from happening in an otherwise evolution-fitting system, and if the answer is yes, it has to be 100% certain. If a tame mechanical species should go wild while travelling away among the stars, there will be no way to reverse this mistake.

The only reason to make such a mistake is to underestimate the human ability to ENDURE a many-generation voyage.

I note that if controlled fusion truly is difficult, nigh-impossible, and some other macro-engineering fantasies prove to be fantastic, then that might put a huge crimp in things. Fission probably lets you get up to acceptable speeds (given suspension or longevity) but being able to find new fissionables in your target system might not be guaranteed, especially in really old red dwarf systems, I’m guessing. I wonder if this might justify Landis’s percolation model.

It is too often forgotten that self-replicating machines need not be intelligent. If they are not, they are neither malicious nor benevolent. They would be quite harmless, and much less interesting to write about, but just as useful to their creators.

Of course, if the creators themselves were malevolent, that is a different story.

There are basically three concepts for self-replication control: control by a leader among the replicants setting and enforcing rules, control by consensus and cooperation, or control by an outside entity.

There is also a form of control where the resources in each environment are limiting and the replicants simply exhaust the supplies needed to operate and grow. That last one is to be avoided, as it leads to poor survival. That is why all biological systems seem to evolve population control measures. Even viruses moderate their virulence to avoid killing the host. Therefore we can expect the same in evolving self-replicating machines.

Is Intelligence Self-Limiting?

David Eubanks

Ethical Technology

Posted: Mar 10, 2012

In science fiction novels like River of Gods by Ian McDonald [1], an artificial intelligence finds a way to boot-strap its own design into a growing super-intelligence. This cleverness singularity is sometimes referred to as FOOM [2]. In this piece I will give an argument that a single instance of intelligence may be self-limiting and that FOOM collapses in a “MOOF.”

A story about a fictional robot will serve to illustrate the main points of the argument.

A robotic mining facility has been built to harvest rare minerals from asteroids. Mobile AI robots do all the work autonomously. If they are damaged, they are smart enough to get themselves to a repair shop, where they have complete access to a parts fabricator and all of their internal designs. They can also upgrade their hardware and programming to incorporate new designs, which they can create themselves.

Robot 0x2A continually upgrades its own problem-solving hardware and software so that it can raise its production numbers. It is motivated to do so because of the internal rewards that are generated—something analogous to joy in humans. The calculation that leads to the magnitude of a reward is a complex one related to the amount of ore mined, the energy expended in doing so, damages incurred, quality of the minerals, and so on.

As 0x2A becomes more acquainted with its own design, it begins to find ways to optimize its performance in ways its human designers didn’t anticipate. The robot finds loopholes that allow it to reap the ‘joy’ of accomplishment while producing less ore. After another leap in problem-solving ability, it discovers how to write values directly into the motivator’s inputs in such a way that completely fools it into thinking actual mining had happened when it has not.

The generalization of 0x2A’s method is this: for any problem presented by internal motivation, super-intelligence allows that the simplest solution is to directly modify motivational signals rather than acting on them. Moreover, these have the same internal result (satisfied internal states), so rationally the simpler method of achieving this is to be preferred. Rather than going FOOM, it prunes more and more of itself away until there is nothing functional remaining. As a humorous example of this sort of goal subversion, see David W. Kammler’s piece “The Well Intentioned Commissar” [3]. Also of note is Claude Shannon’s “ultimate machine” [4].

Full article here:

http://ieet.org/index.php/IEET/more/eubanks20120310

Eniac March 10, 2012 at 16:59

It is too often forgotten that self-replicating machines need not be intelligent. If they are not, they are neither malicious nor benevolent. They would be quite harmless, and much less interesting to write about, but just as useful to their creators.

Cancers and viruses are not intelligent nor intent on malevolence… Malevolence is a descriptive term applicable to largely human actions or used by humans to give a ‘literary gloss’ to natural events.

Another biologist here. The discussion seems to assume that the von Neumann machines (vNms) replicate exponentially, without limit. Even in simple systems, this does not occur: resources become limiting and the replication rate decreases; the result is a logistic curve (although at the asymptote, they have exhausted their resources, and if the resources are not replenished, extinction ensues). However, by analogy with biological systems, introduce predators. After the initial population of vNms reaches some critical density, they themselves become resources for other vNms, either introduced intentionally or arising by evolution of the vNms, which may not replicate perfectly (copying errors; radiation effects). This is the Lotka-Volterra model: depending on the time-lag between replication of the ‘prey’ and ‘predators’, several outcomes occur, which range from stable numbers of predator and prey (if the time-lag is zero), through various types of cycle, to chaos, in which ultimately one or the other goes extinct and the survivor (if prey) returns to unfettered growth (and extinction after consuming the galaxy) or (if predator) also dies out. The ‘simple’ solution to avoid unfettered growth (and consumption of an entire galaxy) is for the civilization that releases the vNms to also release ‘predator’ vNms that have a reproduction time-lag that results in stable (or at worst, cyclically oscillating) populations of both.
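The zero-lag case described above can be sketched numerically. What follows is a minimal illustration, not a model of real probes: it uses the textbook Lotka-Volterra equations with logistic prey growth, integrated with a simple Euler step, and every parameter value (`r`, `K`, `a`, `b`, `m`) is an arbitrary assumption chosen only to show the qualitative behaviour. The time-lag effects mentioned in the comment are not modelled.

```python
def simulate(prey0, pred0, steps, dt=0.01,
             r=1.0, K=100.0, a=0.02, b=0.01, m=0.5):
    """Euler integration of predator-prey dynamics with logistic prey growth.

    r: prey replication rate       K: resource-limited carrying capacity
    a: rate at which predators consume prey
    b: predator replication gained per prey consumed
    m: predator attrition rate
    All values are illustrative assumptions, not estimates.
    """
    prey, pred = float(prey0), float(pred0)
    for _ in range(steps):
        d_prey = r * prey * (1.0 - prey / K) - a * prey * pred
        d_pred = b * prey * pred - m * pred
        prey = max(prey + d_prey * dt, 0.0)
        pred = max(pred + d_pred * dt, 0.0)
    return prey, pred


# No predators: the prey follow a logistic curve up to the carrying capacity.
alone = simulate(prey0=1, pred0=0, steps=5_000)

# With predators and no time-lag: both populations settle at a stable level.
together = simulate(prey0=10, pred0=5, steps=20_000)

print("prey alone:", alone)
print("prey with predators:", together)
```

With these assumed parameters the coexistence point works out to m/b = 50 prey and r(1 - 50/K)/a = 25 predators, well below the carrying capacity that the prey reach on their own.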

However (hubris aside), if we assume that replication is not perfect, evolution will occur. The prey will produce variants that (by chance) evade the responses of the predator and supplant their (the prey’s) ancestors; predators will produce variants that overcome this change. Perhaps other trophic levels will evolve: parasites; second-level carnivores. An ecosystem.

But I have dream-woven beyond my original intent. My point: the conquest of the galaxy by vNms will meet some stiff resistance.

Al,

This is an important point. Self-repair will take time, and the amount of damage that can be sustained per time unit is always going to be strictly limited.

Not only will probes not be ‘too small to fail’, I think they will easily be too small to succeed. The power deposited by the interstellar medium is proportional to the cross section area of the device, thus the second power of length. The mass goes with the third power of length, so the power deposited per mass unit (which is relevant for damage rate, I would think) goes up as size is reduced. Furthermore, many defenses lose their effectiveness entirely at small length scales. Magnetic fields won’t work, for example, when the device is small compared with the gyroradius of charged particles. Similarly, material shielding is useless unless it is comparable in size with the penetration depth of the radiation to be shielded against.
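The scaling argument can be made concrete with a rough sketch. It assumes only what the paragraph above states (deposited power proportional to length squared, mass proportional to length cubed); the function name and the choice of a 1 m reference probe are illustrative, and all constants of proportionality are dropped.

```python
import math


def power_per_mass(length_m: float) -> float:
    """Deposited power per unit mass, up to a constant factor.

    Assumes power ~ cross-section ~ L^2 and mass ~ volume ~ L^3,
    so the ratio scales as 1 / L.
    """
    cross_section = length_m ** 2
    mass = length_m ** 3
    return cross_section / mass


reference = power_per_mass(1.0)      # hypothetical 1 m probe
microscopic = power_per_mass(1e-6)   # hypothetical 1 micron probe
print(f"relative heating per unit mass: {microscopic / reference:.3g}")
```

Under these assumptions, shrinking a probe by a factor of a million raises the power deposited per unit mass by the same factor.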

This leads me to the seemingly inevitable conclusion that microscopic probes, however attractive they may seem at first glance, are not going to work in the near-relativistic regime of around or above 0.1c, possibly even much less. Am I making a mistake?

Eniac

” ….They would be quite harmless, and much less interesting to write about, but just as useful to their creators ”

Most of our tame animals are exactly that: harmless. Meet them again one million years, or perhaps a hundred million years, after they have escaped our control and been free to develop and fill out a new environment, and they will eat you for breakfast. Machine life has the possibility of learning to change its own “DNA” even at a very low evolutionary stage, because the tools required are a natural part of any production or repair operation.

This is very different from biological evolution, where the DNA can only be changed (so far) through feedback from the environment.

If this should happen, machine life could accelerate its own evolution to a speed where it would be irrelevant at what level of intelligence the escape from control occurred.

To take such a risk would only be justified if mankind was facing an almost certain danger of extinction.

david eubank

You have just described a motivation for drug addiction among humans. If humans can alter their own inputs to redirect the pleasure center response or to feel “satisfied,” many will do so chemically.

But assume many, many machines are built: the ones that learn to short-circuit their “joy” function will not survive, and those that stay true to the task of self-replication will continue to exist. After all, in all organisms, the pleasure principle is just a PROXY to motivate survival behaviors, a short-term reward for behaviors that on average must have a long-term benefit for the species in order to be kept in place by evolution (eating response, sexual response, the thrill of victory, etc.). When these long-term goals are thwarted by the unintended consequences of short-term pleasures, then the trait is not beneficial (in some religions these are called “sins”).

Think of it this way: whoever created the vNms must have wanted them to be fruitful and multiply, and to obey the will of the builder. They were built to serve the interests of another entity that is very different from the vNms.

Let me ask: could a silicon/metal being really understand the motivations of human sexual behavior, for example? Or why we think roses smell so nice?

True. Both are dangerous because they are small, we do not know enough about them, they may mutate, and we did not start out with complete control over them. Machines would be designed by us, they would be large and defenseless (unless developed for war), they would be dumb, they would not mutate, and we would have all the passcodes to wipe out their memories at a moment’s notice.

Those who talk about machines mutating, please consider the lowly checksum. Part of the replication procedure could be a checksum check, and the nascent progeny would be recycled before being turned on if the match was not perfect. What if the checksum check fails, you say? Design it with multiple independent checks, such that all of them together fail at most once every 10 billion years. This way, you cannot get an error to propagate, ever.
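A minimal sketch of such a go/no-go check might look like the following. The names `fingerprints` and `accept_copy` are made up for illustration, and using three different hash functions from Python’s standard `hashlib` module is only a stand-in for “multiple independent checks”; in real hardware the checks would also have to fail independently of one another, which this sketch does not address.

```python
import hashlib

# Assumption: three unrelated digest algorithms stand in for independent checks.
ALGORITHMS = ("sha256", "sha3_256", "blake2b")


def fingerprints(blueprint: bytes) -> tuple:
    """Compute one digest of the blueprint per algorithm."""
    return tuple(hashlib.new(name, blueprint).hexdigest() for name in ALGORITHMS)


def accept_copy(parent: bytes, child: bytes) -> bool:
    """Go/no-go decision: activate the child only if every digest matches."""
    return fingerprints(parent) == fingerprints(child)


parent = b"replicator blueprint v1"
good_copy = bytes(parent)
bad_copy = b"replicator blueprint v2"  # a single corrupted byte

print(accept_copy(parent, good_copy))  # perfect copy
print(accept_copy(parent, bad_copy))   # corrupted copy
```

A copy that fails the comparison would be recycled before being switched on, so the error never gets a chance to be inherited.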

Other ways to ensure error-free replication would be to constantly compare “genomes” between different “individuals”. Any differences would immediately be fixed, by substituting the consensus bits.
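The comparison of “genomes” could be sketched as a majority vote. This is an illustration under stated assumptions: the function name `consensus` is invented, the vote is taken byte by byte rather than bit by bit for brevity, and all copies are assumed to have the same length.

```python
from collections import Counter
from typing import Sequence


def consensus(genomes: Sequence[bytes]) -> bytes:
    """Return the majority value at every position across all copies.

    Assumes equal-length copies. On a tie, the value seen first wins,
    which is an arbitrary choice made for this sketch.
    """
    length = len(genomes[0])
    if any(len(g) != length for g in genomes):
        raise ValueError("all genomes must have the same length")
    return bytes(Counter(column).most_common(1)[0][0] for column in zip(*genomes))


copies = [b"ACGTACGT", b"ACGTACGT", b"ACCTACGT", b"ACGTACGA"]
repaired = consensus(copies)
print(repaired)
```

Each individual would then overwrite its own copy with the consensus, so isolated errors are outvoted rather than passed on.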

You do not have to worry about evasive countermeasures evolving to beat the measures, because the measures disable evolution, therefore no evolving will take place in the first place. You only have to deal with random failure, which is not hard.

Another thing to consider: Evolution relies on a certain minimum percentage of mutations to be viable, and another, smaller percentage to be advantageous. It is very unlikely that these criteria would be fulfilled with mechanical systems unless specifically included in the design.

“Those who talk about machines mutating, please consider the lowly checksum. Part of the replication procedure could be a checksum check, and the nascent progeny would be recycled before being turned on if the match was not perfect. What if the checksum check fails, you say? Design it with multiple independent checks, such that all of them together fail at most once every 10 billion years. This way, you cannot get an error to propagate, ever.”

Theoretically this might be possible, but in order to achieve the 10 billion year guarantee, EVERYTHING concerning this process would itself have to be error-free, including all the human work that went into the process.

When things get complicated enough, “bugs” come into existence. As a rule of thumb, most programs with more than 10,000 lines start out with lots of internal contradictions which only become relevant in extremely rare coincidences. Some of these are eventually fixed after the problem has shown itself, but even then the “fix” is often a case of getting rid of one problem by creating a smaller one. As a system becomes more complex, the number of unforeseeable bugs grows exponentially, and this is true also of the mechanical-programming interactions.

Evolution is said to work in “steps”: long periods where nothing happens, followed by sudden periods of rapid advance. During the slow period, several necessary mutations start to appear in the population, and when they all appear in one individual, something revolutionary can happen. This is similar to a 1-million-line program that will only fail when ALL its otherwise harmless bugs happen at exactly the same time. This could happen after one second or after a year, without any logic-based preference for the longer period.

The only way to get a 1 billion year guarantee is to operate the system several times for a billion years under close to realistic conditions, while continuously cleaning it of bugs.

No, not really. Five independent checks, each one designed to fail no more than 1% of the time (a very loose criterion, really) will get you to 10,000,000,000 generations between errors, more than enough.
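The arithmetic behind that figure, assuming the five checks fail independently and each one misses an error with probability $p = 0.01$ (the symbols $p$ and $N$ are labels introduced here for illustration):

$$
P(\text{all five fail}) = p^5 = \left(10^{-2}\right)^5 = 10^{-10},
\qquad
N = \frac{1}{p^5} = 10^{10}
$$

Here $N$ is the expected number of faulty copies that would have to be checked before one slips through. The result depends entirely on the independence assumption; if the checks can fail together, the probabilities no longer simply multiply.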

Sure. But a checksum check with a following go/no go decision is not complicated (maybe 10 lines of code) and can be made bug-free enough to fail less than 1% of the time with no undue effort.

Hey eniac, I appreciate your POV, but really I think entropy and Gödel’s incompleteness theorem work against the idea of an infallible failsafe. Personally, my computer mutates every week and it is only my constant intervention that keeps it from developing a mind of its own (figuratively).

Jkittle, I think your computer mutates due to external influences, not by itself. I hope it will not evolve and devour you anytime soon! :-)

Goedel’s incompleteness theorem really has no bearing whatsoever on this issue. Neither does entropy.

“Five independent checks, each one designed to fail no more than 1% of the time (a very loose criterion, really) will get you to 10,000,000,000 generations between errors, more than enough.”

Again, it could be true, theoretically, but how do these INDEPENDENT checks become 100% independent of the whole complexity which they are continuously supposed to keep an eye on? For an almost endless number of cycles, the super-simple “check function” must somehow be in communication with the extreme complexity of the self-replicating robot function in order to control it. Given enough time, the simple check function will need replacement, and being simple it can’t replicate by itself, so how exactly can it be 100% independent? It seems similar to the problem white blood cells face when trying to prevent cancer by sniffing out mutated cells: the complexity of the genetic information is just too big for them to identify ALL possible cancers.

Did the English novelist Charles Dickens write science fiction? Judge for yourself:

http://19.bbk.ac.uk/index.php/19/article/view/527/538

To go even further back, the ancients such as Hero (or Heron) of Alexandria invented all sorts of mechanical devices, probably more than we realize due to the loss of these instruments and any writings about them over time.

http://www.mlahanas.de/Greeks/HeronAlexandria.htm

Djlactin comes up with a very interesting strategy for controlling vNms growth, but by using an analogy to biology, he hides an implicit assumption that this solution must be impervious to the selfish genome in each individual probe. Because we have a designer this is not the case, so I would be much happier if we replace his wasteful predators with reproduction inhibitor units, or task reassignment units.

The great difference between these probes and living organisms is that life, at every single stage of its evolution, has been optimised for selfish needs. Luckily for us, this occasionally leads to limited forms of altruism, but we can do better for designed probes. Actually, for them to have the same *malevolence potential* as life, we would have to inculcate this selfishness throughout their entire code, and, even in military versions, that exhausting task would be counterproductive.

Not really. For the check function, the task is to count up the bits in the master program, or genome, and then give an OK or not OK for the next step in reproduction to occur. You can make the checks independent by putting them between different steps in a sequence: for example, one before the program is uploaded to the daughter probe, one before the program is run for the first time, and so on. The “complexity” of the genome is irrelevant; for our check function there is just a bunch of bits that need to be counted up, and a sequence of two steps that needs to be broken. Your concern sounds almost like you think of this as a sort of external intervention. It is not; it is built right into the program. “if (checksum != 0) exit(1)”, to speak in code…
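As an illustration of checks placed between steps, here is a small Python sketch. The function `gated_reproduction`, the `steps` list and the use of CRC-32 are all assumptions made for the example; the comment only describes the idea of a go/no-go test before each stage.

```python
import zlib
from typing import Callable, List, Optional

# A step takes the genome, does its work (upload, first run, ...) and
# hands the genome on. Real steps are hypothetical here.
Step = Callable[[bytes], bytes]


def gated_reproduction(genome: bytes,
                       expected_crc: int,
                       steps: List[Step]) -> Optional[bytes]:
    """Run each reproduction step only if the checksum still matches."""
    for step in steps:
        if zlib.crc32(genome) != expected_crc:
            return None  # break the sequence: no-go
        genome = step(genome)
    # One last gate before the finished copy is accepted.
    if zlib.crc32(genome) != expected_crc:
        return None
    return genome
```

Each gate sees the genome only as a run of bytes to be summed, so its own logic stays the same size no matter how complicated the program it guards.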

No, it will not. If the code does not change, the check function will work the same, for all time.

Not that it really will be necessary to be too obsessed about the check function. Random changes in the code will not lead to anything other than crashes. Certainly not evolving or intelligent machines. Unless this is the intention. In that case it could be made so, with a lot of trouble. That could be dangerous, in the (very) long run. But what purpose would it serve?